In 2026, the retail landscape has shifted from a keyword-first world to a vision-first reality. For growth marketers, the goal is no longer just ranking on page one of a text-based SERP; it is about ensuring that every pixel of a product image is intelligible to machine vision models. As the global visual search market climbs toward its projected $151.60 billion valuation by 2032, the ability to be 'seen' by AI has become the primary driver of e-commerce revenue. Visual search engine optimization (VSEO) is the technical discipline of preparing your visual assets so that Google Lens, Amazon StyleSnap, and autonomous AI agents can identify, index, and recommend your products with high confidence.

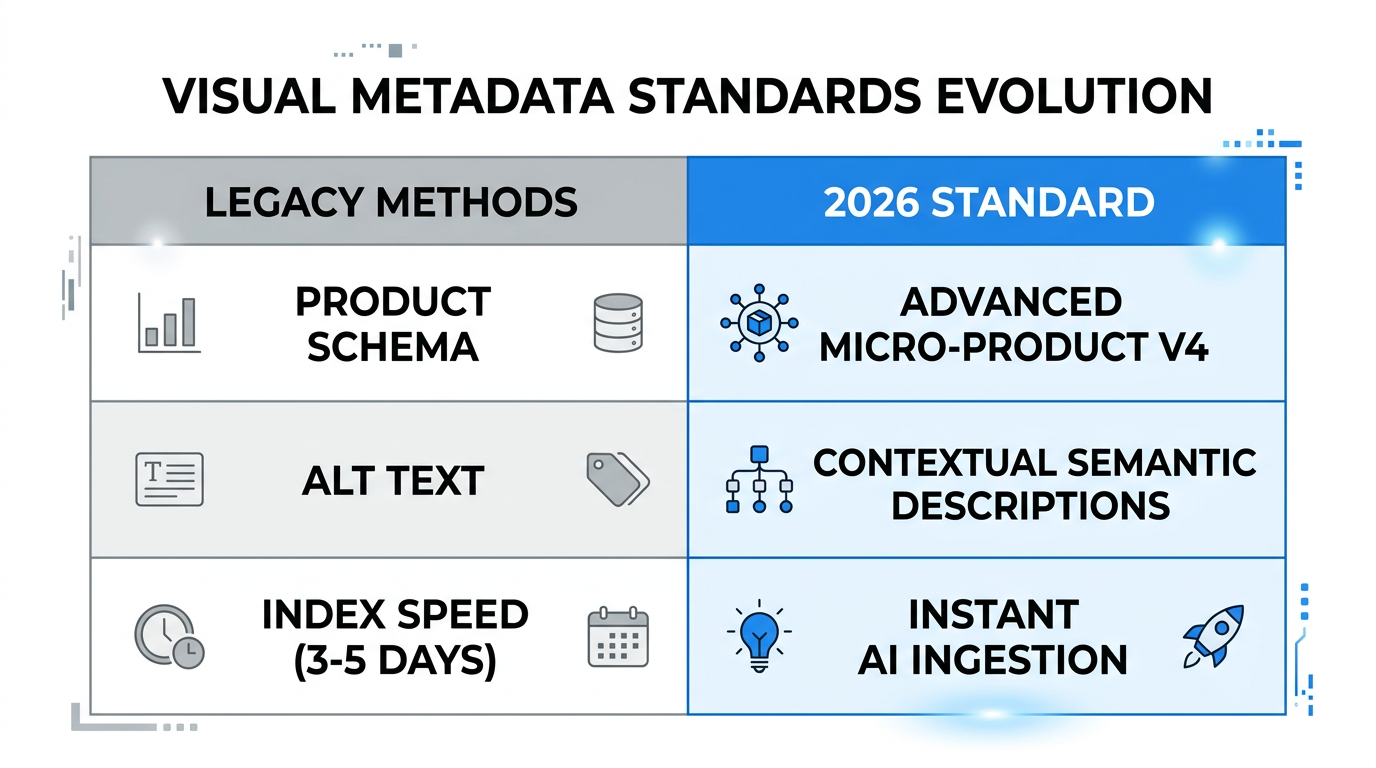

The 2026 Standard for Image Metadata: Beyond Alt Text

Gone are the days when alt text was a secondary consideration for accessibility alone. In 2026, product image SEO requires a highly structured approach to metadata. Google Lens now handles more than 20 billion searches a month, roughly 4 billion of which are high-intent shopping queries. To capture this traffic, your metadata must give the AI a clear 'intent roadmap.'

First, move your naming conventions away from generic strings like IMG_882.jpg. Use descriptive, hyphenated filenames that describe the object, color, material, and brand, for example: mens-waterproof-trail-running-shoes-blue-stormy.jpg. This gives crawlers immediate semantic context during the initial indexing phase. Alt text, in turn, should be intent-based, describing the product's utility and aesthetic. Instead of "blue shoes," write "men's blue waterproof trail running shoes with rugged soles for mountain hiking." According to research by AdsX, descriptive alt text that highlights use-case attributes is a critical factor in ranking within the 'visually similar' carousels on major marketplaces.
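
The naming convention above is easy to automate. The following is a minimal Python sketch, assuming attributes are passed in the order you want them to appear; the function name `product_image_filename` is hypothetical and not part of any platform's API.

```python
import re
import unicodedata


def product_image_filename(*attributes: str, extension: str = "jpg") -> str:
    """Join descriptive attributes into a lowercase, hyphenated filename."""
    words = []
    for attr in attributes:
        # Decompose accented characters and drop non-ASCII marks ("Café" -> "Cafe")
        normalized = unicodedata.normalize("NFKD", attr).encode("ascii", "ignore").decode("ascii")
        # Drop straight apostrophes so "Men's" becomes "mens" rather than "men-s"
        normalized = normalized.replace("'", "")
        # Collapse every run of non-alphanumeric characters into a single hyphen
        slug = re.sub(r"[^a-z0-9]+", "-", normalized.lower()).strip("-")
        if slug:
            words.append(slug)
    if not words:
        raise ValueError("at least one non-empty attribute is required")
    return "-".join(words) + "." + extension.lstrip(".").lower()


print(product_image_filename("Men's", "Waterproof Trail Running Shoes", "Blue", "Stormy"))
```

Run as-is, this prints the example filename from the paragraabove. Keeping the slug logic in one function means every upload follows the same convention, which matters more at catalog scale than any single filename.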

"Visual search is no longer a convenience; it is a core revenue engine. By 2025, it was already generating over $14.7 billion in direct US e-commerce revenue."

Implementing Product and ImageObject JSON-LD Schema

While metadata provides the description, structured data for ecommerce provides the technical proof. To rank in a multimodal search environment, where a user might upload a photo of a dress and type 'available in green?', your product data must be structured so that machines can tie each image to the right product, variant, and offer. Using JSON-LD schema is non-negotiable for building AI 'confidence.'

You must implement the Product and Offer schemas to communicate price, availability, and SKU data directly to search crawlers. The 2026 differentiator, however, is the ImageObject schema. By explicitly linking specific images to product entities, you prevent AI models from attributing an asset to the wrong SKU. This is especially vital for brands with large catalogs, and it matters more now that 80% of consumers rely on AI-generated answers for product discovery. Detailed schema markup helps ensure that when an AI personal shopper 'scans' the web for a deal, your product is the one it recommends with a high confidence score. Tools like Yoast SEO can manage these schema hierarchies without a full engineering team.

| Schema Type | Purpose for AI | Key Properties |
|---|---|---|
| Product | Entity Identification | name, brand, category |
| Offer | Commercial Context | price, priceCurrency, availability |
| ImageObject | Machine Vision Mapping | contentUrl, caption, representativeOfPage |
| AggregateRating | Social Proof Indexing | ratingValue, reviewCount |
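
To make the table concrete, here is a minimal Python sketch that emits these four types as a single JSON-LD block. Property names follow schema.org; the helper names `product_jsonld` and `as_script_tag`, the `(url, caption)` image tuples, and the rule that the first image is the representative one are assumptions for illustration. Check the output with Google's own validation tools before shipping it.

```python
import json


def product_jsonld(name, brand, category, sku, price, currency, in_stock,
                   images, rating=None, review_count=None):
    """Build a schema.org Product with a nested Offer and explicit ImageObjects.

    images: list of (content_url, caption) tuples; the first one is treated
    as the representative (hero) image for the page, an assumption of this sketch.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "brand": {"@type": "Brand", "name": brand},
        "category": category,
        "sku": sku,
        # One ImageObject per asset ties each image to this product entity
        "image": [
            {
                "@type": "ImageObject",
                "contentUrl": url,
                "caption": caption,
                "representativeOfPage": index == 0,
            }
            for index, (url, caption) in enumerate(images)
        ],
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": ("https://schema.org/InStock" if in_stock
                             else "https://schema.org/OutOfStock"),
        },
    }
    # Only emit a rating when both values are known
    if rating is not None and review_count is not None:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        }
    return data


def as_script_tag(data):
    """Wrap the JSON-LD in the script element that crawlers look for."""
    # Escaping "</" keeps a stray "</script>" inside a string from closing the tag early
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


markup = product_jsonld(
    "Men's Waterproof Trail Running Shoes", "ExampleBrand", "Shoes", "TRL-001",
    129.0, "USD", True,
    [("https://example.com/img/trail-shoe-side.avif", "Side view"),
     ("https://example.com/img/trail-shoe-sole.avif", "Rugged sole detail")],
    rating=4.6, review_count=212,
)
print(as_script_tag(markup))
```

The brand, SKU, URLs, and rating values are placeholders. Listing each image as its own ImageObject, rather than as a bare URL string, is what lets you attach a caption and flag the hero shot.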

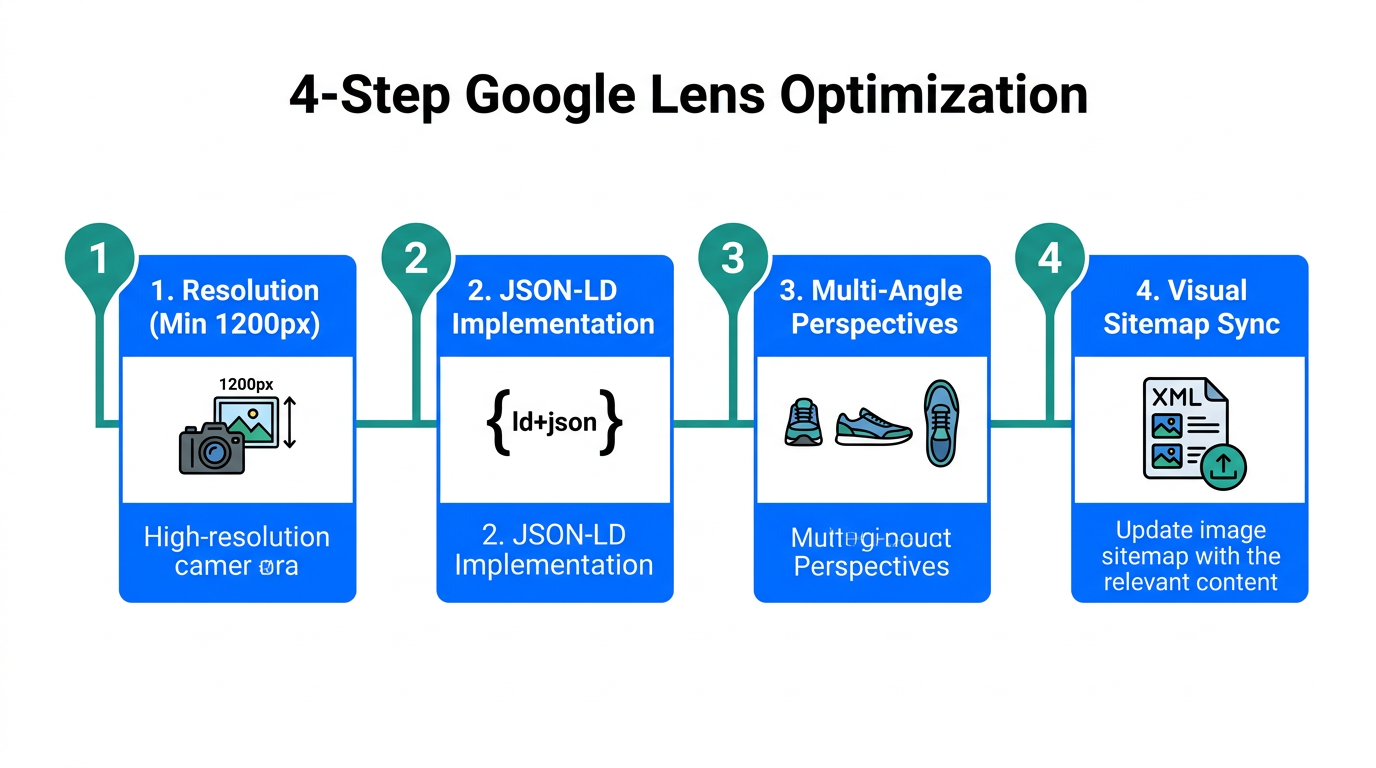

Technical Requirements: The 5-8 High-Resolution Angle Rule

Machine vision models like Amazon StyleSnap and Google Lens require multiple perspectives to accurately 'triangulate' a product's dimensions, texture, and quality. The 2026 standard for high-intent product pages is 5 to 8 high-resolution images. These images must be at least 1080px on the shortest side to ensure that the AI can detect micro-details like fabric weave or stitch patterns. According to AdsX, this multi-angle approach is what builds the necessary 'confidence' for an AI model to place your product at the top of a search result.
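
The 5-8 angle rule can be enforced before upload with a simple audit. This is a minimal Python sketch; it assumes you already have each image's pixel dimensions (for example from an image library such as Pillow), and the `ProductImage` type and `audit_image_set` function are hypothetical names.

```python
from dataclasses import dataclass

# Thresholds taken from the 5-8 angle rule described above
MIN_ANGLES = 5
MAX_ANGLES = 8
MIN_SHORT_SIDE_PX = 1080


@dataclass
class ProductImage:
    filename: str
    width: int   # pixels
    height: int  # pixels


def audit_image_set(images: list[ProductImage]) -> list[str]:
    """Return human-readable problems; an empty list means the set passes."""
    problems = []
    count = len(images)
    if count < MIN_ANGLES:
        problems.append(f"only {count} angle(s); the guideline is {MIN_ANGLES}-{MAX_ANGLES}")
    elif count > MAX_ANGLES:
        problems.append(f"{count} images exceeds the {MIN_ANGLES}-{MAX_ANGLES} guideline")
    for image in images:
        # The rule applies to the shortest side, so portrait and landscape shots are treated alike
        shortest = min(image.width, image.height)
        if shortest < MIN_SHORT_SIDE_PX:
            problems.append(
                f"{image.filename}: shortest side is {shortest}px (minimum {MIN_SHORT_SIDE_PX}px)"
            )
    return problems


shots = [ProductImage(f"trail-shoe-{i}.avif", 1080, 1350) for i in range(4)]
shots.append(ProductImage("trail-shoe-detail.avif", 800, 1200))
for problem in audit_image_set(shots):
    print(problem)
```

Here the set has enough angles, but the close-up detail shot falls below the 1080px minimum and is the only item reported.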

However, high resolution cannot come at the cost of speed. Growth marketers must adopt WebP or AVIF formats to maintain crisp visuals while keeping file sizes small. This is critical because 71% of visual searches occur on mobile devices, and a slow-loading site will kill your conversion rate regardless of your AI ranking. Technical experts at web.dev suggest that AVIF is the preferred choice in 2026 for its superior compression-to-quality ratio. To source these high-quality, multi-angle assets at scale, many brands are turning to UGC creators found on platforms like Stormy AI, where they can quickly identify creators who specialize in high-definition product photography.
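
One common way to serve AVIF without breaking older browsers is a `<picture>` element with format fallbacks. The Python sketch below generates that markup; it assumes AVIF, WebP, and JPEG versions of each image already exist under the same base URL, and `picture_markup` is a hypothetical helper rather than part of any CMS.

```python
from html import escape


def picture_markup(base_url: str, alt: str, width: int, height: int) -> str:
    """Build a <picture> element that offers AVIF first, then WebP, then JPEG."""
    # Browsers use the first <source> whose MIME type they support
    sources = [("avif", "image/avif"), ("webp", "image/webp")]
    lines = ["<picture>"]
    for ext, mime in sources:
        lines.append(f'  <source srcset="{escape(base_url)}.{ext}" type="{mime}">')
    # JPEG fallback; explicit width/height let the browser reserve space before the image loads
    lines.append(
        f'  <img src="{escape(base_url)}.jpg" alt="{escape(alt)}" '
        f'width="{width}" height="{height}">'
    )
    lines.append("</picture>")
    return "\n".join(lines)


print(picture_markup(
    "https://cdn.example.com/p/trail-shoe-1",
    "men's blue waterproof trail running shoes with rugged soles",
    1080, 1350,
))
```

The alt text is HTML-escaped so apostrophes and quotes cannot break the attribute. For the main product image, which is often the LCP element on mobile, avoid adding `loading="lazy"`, since deferring it delays the largest paint.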

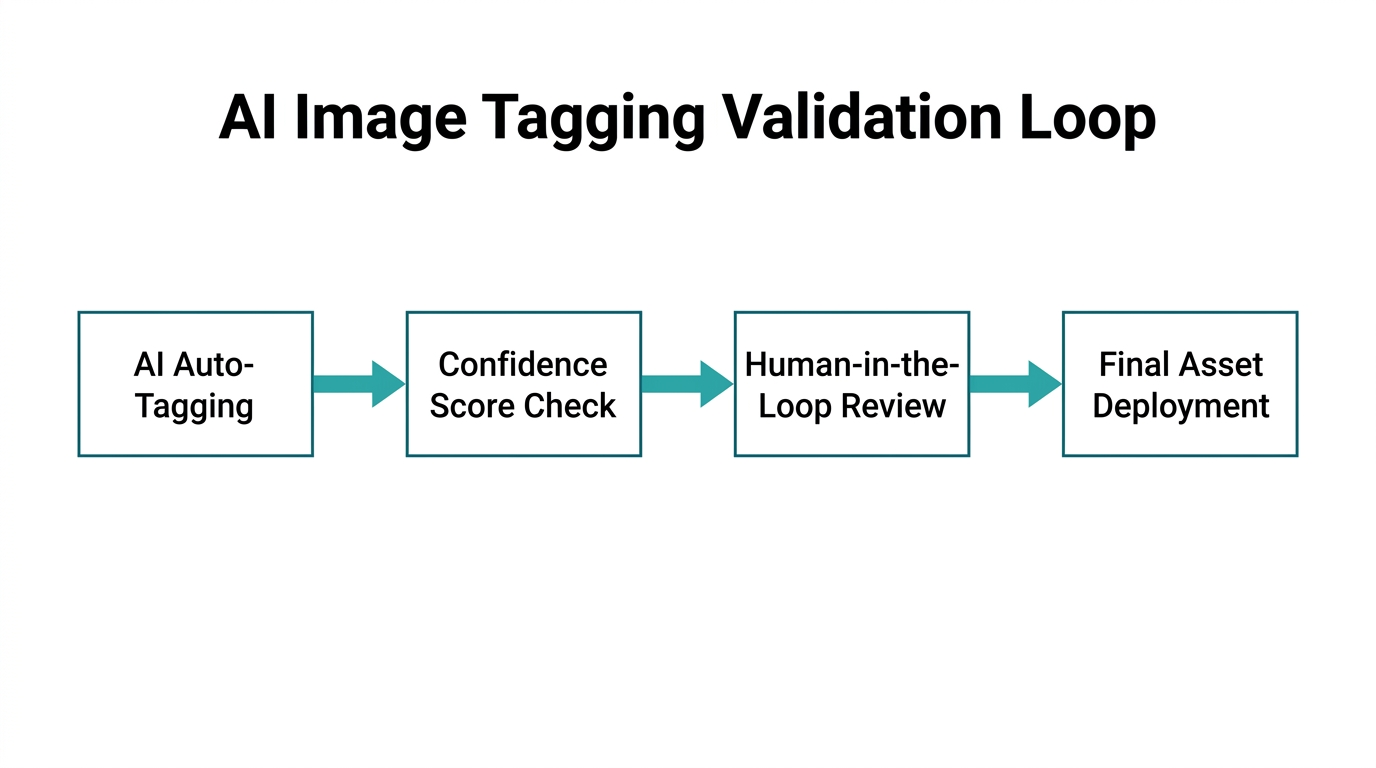

"If an AI model can't see the texture of your product from at least five angles, it will likely prioritize a competitor that provides a more complete data set."

Avoiding the 'Auto-Tagging Hallucination' Trap

One of the biggest mistakes in AI image indexing is over-reliance on fully automated tagging. While AI discovery tools are powerful, they are prone to 'hallucinations'—misidentifying a floral pattern as a camouflage print, or a leather texture as vinyl. If your catalog is indexed with incorrect tags, your products will appear in irrelevant search results, leading to high bounce rates and low quality scores.

The solution is a human-in-the-loop (HITL) review process. Before pushing a catalog live to marketplaces like Amazon or Google Shopping, have a human verify the AI-generated tags for accuracy. Brands that implement this review step see a 30% increase in conversion rates because their products actually match user intent. Organizations like Market Vantage warn that skipping this step can lead to 'algorithmic drift,' where search engines gradually sideline your products because of low engagement on irrelevant queries. Use tools like ViSenze for the initial tagging pass, but always keep human eyes on the final audit.
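
The review gate can be sketched as a small data structure: every item gets a human pass, the least confident suggestions are queued first, and nothing publishes until a reviewer has signed off. This is a minimal Python sketch under those assumptions; the class and function names are hypothetical and not part of ViSenze or any marketplace API.

```python
from dataclasses import dataclass, field


class NotReviewedError(Exception):
    """Raised when tags are requested for an item no human has reviewed."""


@dataclass
class ReviewItem:
    sku: str
    # AI-suggested tags mapped to the tagger's confidence score (0.0-1.0)
    suggestions: dict[str, float]
    approved: set[str] = field(default_factory=set)
    reviewed: bool = False


def review_order(items: list[ReviewItem]) -> list[ReviewItem]:
    """Every item is reviewed; those with the least confident tag go first."""
    return sorted(items, key=lambda item: min(item.suggestions.values(), default=0.0))


def record_review(item: ReviewItem, approved_tags: set[str]) -> None:
    """Store the reviewer's final tags, which may include corrections the AI missed."""
    item.approved = set(approved_tags)
    item.reviewed = True


def rejected_tags(item: ReviewItem) -> list[str]:
    """AI suggestions the reviewer threw out (useful for spotting hallucination patterns)."""
    return sorted(set(item.suggestions) - item.approved)


def publishable_tags(item: ReviewItem) -> list[str]:
    """Only human-approved tags are published; unreviewed items are blocked."""
    if not item.reviewed:
        raise NotReviewedError(f"{item.sku} has not been reviewed by a human yet")
    return sorted(item.approved)


dress = ReviewItem("DRS-104", {"floral": 0.58, "camouflage": 0.61})
jacket = ReviewItem("JKT-220", {"leather": 0.97, "black": 0.99})
print([item.sku for item in review_order([jacket, dress])])
record_review(dress, {"floral"})
print(publishable_tags(dress), rejected_tags(dress))
```

Sorting by confidence does not skip anyone; it only puts the likeliest hallucinations, such as the floral-versus-camouflage case, in front of the reviewer first. Logging the rejected tags over time shows where the tagger needs retraining.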

The 2026 Google Lens Optimization Checklist

To ensure your brand is ready for the visual-first shopper, follow this step-by-step playbook for mobile and visual discovery performance:

- Audit Image Dimensions: Ensure every product has at least 5 shots at 1080px+ resolution in AVIF format.

- Validate Schema: Use Google Search Console to verify your ImageObject and Product JSON-LD implementation.

- Enhance Mobile Speed: Optimize your LCP (Largest Contentful Paint) for mobile and measure it with PageSpeed Insights. Because 71% of visual searches happen on mobile devices, mobile performance weighs heavily on visual search conversion.

- Implement Multimodal Support: Use smart search tools like Searchanise to allow users to combine image uploads with text queries.

- Preview AI Interpretation: Use tools like Google Cloud Vision AI to see how a machine vision model labels your images before they go live.

- Social Proof Integration: Link your Stormy AI creator campaigns to your product pages. AI models give higher weight to products that are frequently mentioned across visual platforms like TikTok and Instagram.
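
Before handing markup to Search Console, a quick local pre-flight check can catch missing fields across a whole catalog. The Python sketch below checks a Product JSON-LD dict against the properties listed in the schema table earlier in this article; that list is this article's guidance, not Google's official set of required fields, and `missing_properties` is a hypothetical helper.

```python
# Key properties per schema type, mirroring the schema table in this article.
# This is a local pre-flight check, not Google's official list of required fields.
KEY_PROPERTIES = {
    "Product": ["name", "brand", "category"],
    "Offer": ["price", "priceCurrency", "availability"],
    "ImageObject": ["contentUrl", "caption", "representativeOfPage"],
    "AggregateRating": ["ratingValue", "reviewCount"],
}


def _as_list(value):
    """JSON-LD allows either a single object or an array; normalize to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def missing_properties(product: dict) -> list[str]:
    """Return problems found in a Product JSON-LD dict; an empty list means it passes."""
    if product.get("@type") != "Product":
        return ["top-level @type must be 'Product'"]
    problems = []

    def check(node, expected_type, path):
        if not isinstance(node, dict) or node.get("@type") != expected_type:
            problems.append(f"{path}: expected @type '{expected_type}'")
            return
        for prop in KEY_PROPERTIES[expected_type]:
            # False and 0 are legitimate values, so only absent or empty ones are flagged
            if node.get(prop) in (None, "", [], {}):
                problems.append(f"{path}: {expected_type} is missing '{prop}'")

    check(product, "Product", "Product")
    offers = _as_list(product.get("offers"))
    if not offers:
        problems.append("Product: no Offer in 'offers'")
    for i, offer in enumerate(offers):
        check(offer, "Offer", f"offers[{i}]")
    # Plain URL strings are valid images but carry no caption or page mapping
    images = [img for img in _as_list(product.get("image")) if isinstance(img, dict)]
    if not images:
        problems.append("Product: no ImageObject in 'image'")
    for i, image in enumerate(images):
        check(image, "ImageObject", f"image[{i}]")
    if "aggregateRating" in product:
        check(product["aggregateRating"], "AggregateRating", "aggregateRating")
    return problems


sample = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Trail Shoe",
    "brand": {"@type": "Brand", "name": "ExampleBrand"},
    "category": "Shoes",
    "offers": {"@type": "Offer", "price": "129.00", "availability": "https://schema.org/InStock"},
    "image": [{"@type": "ImageObject", "contentUrl": "https://example.com/a.avif",
               "caption": "Side view", "representativeOfPage": True}],
}
print(missing_properties(sample))
```

The sample omits `priceCurrency`, so the check reports that single gap. A local script like this is useful for sweeping thousands of SKUs at once, while Search Console remains the authority on what Google actually accepts.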

Conclusion: The Vision-First Future

By 2026, visual search engine optimization has evolved from a niche tactic into a foundational requirement for growth. Brands that master the technical trio of metadata, structured data, and high-fidelity assets are already seeing a massive lift in customer engagement. Whether it's a shopper using Amazon StyleSnap to find a lookalike outfit or an AI agent autonomously sourcing the best price for a specific product, your visual assets are your storefront.

Don't let your products stay invisible. By implementing 5-8 high-resolution angles, utilizing AVIF for speed, and validating your data with human review, you ensure that your brand is the first thing AI models see. Start your optimization journey today by sourcing high-quality UGC and managing your creator assets with an all-in-one platform like Stormy AI, ensuring your visual presence is as strong as your product itself.