In the high-stakes world of luxury marketing, a single PR scandal can wipe out decades of brand equity in a matter of hours. As we move into 2025, the creator economy is facing a dual crisis: an unprecedented surge in sophisticated influencer fraud and a growing authenticity gap that threatens traditional campaign ROI. According to research cited by The Payments Association, influencer fraud is now estimated to cost businesses $1.3 billion annually [Source: CHEQ]. To combat this, forward-thinking brands like Prada are rewriting the rules of engagement by leveraging virtual influencer marketing to ensure 100% brand safety and data-clean results.

The Lil Miquela x Prada Case Study: A Virtual Takeover

During Milan Fashion Week, Prada made waves not just for its designs, but for its choice of digital ambassador. By partnering with Lil Miquela, a CGI-generated entity with millions of followers, Prada executed a flawless Instagram takeover. Unlike human creators, who are subject to the unpredictability of live events and personal controversies, Miquela provided a controlled, brand-safe environment that aligned perfectly with Prada's aesthetic precision.

The campaign wasn't just about the novelty of a virtual avatar; it was a masterclass in data-driven marketing. Because Miquela's entire existence is digital, every interaction was measurable with surgical accuracy. Prada used AI to track the specific demographics of every engagement, ensuring the campaign reached their target luxury audience without the typical "noise" of bot interference often found in high-profile human accounts. This level of transparency is becoming the new gold standard for influencer marketing trends in 2025.

"The Lil Miquela Prada partnership proved that virtual entities can achieve higher-quality engagement than human creators by eliminating the 'unpredictability' factor from the brand safety equation."

The $1.3 Billion Problem: The State of Influencer Fraud in 2025

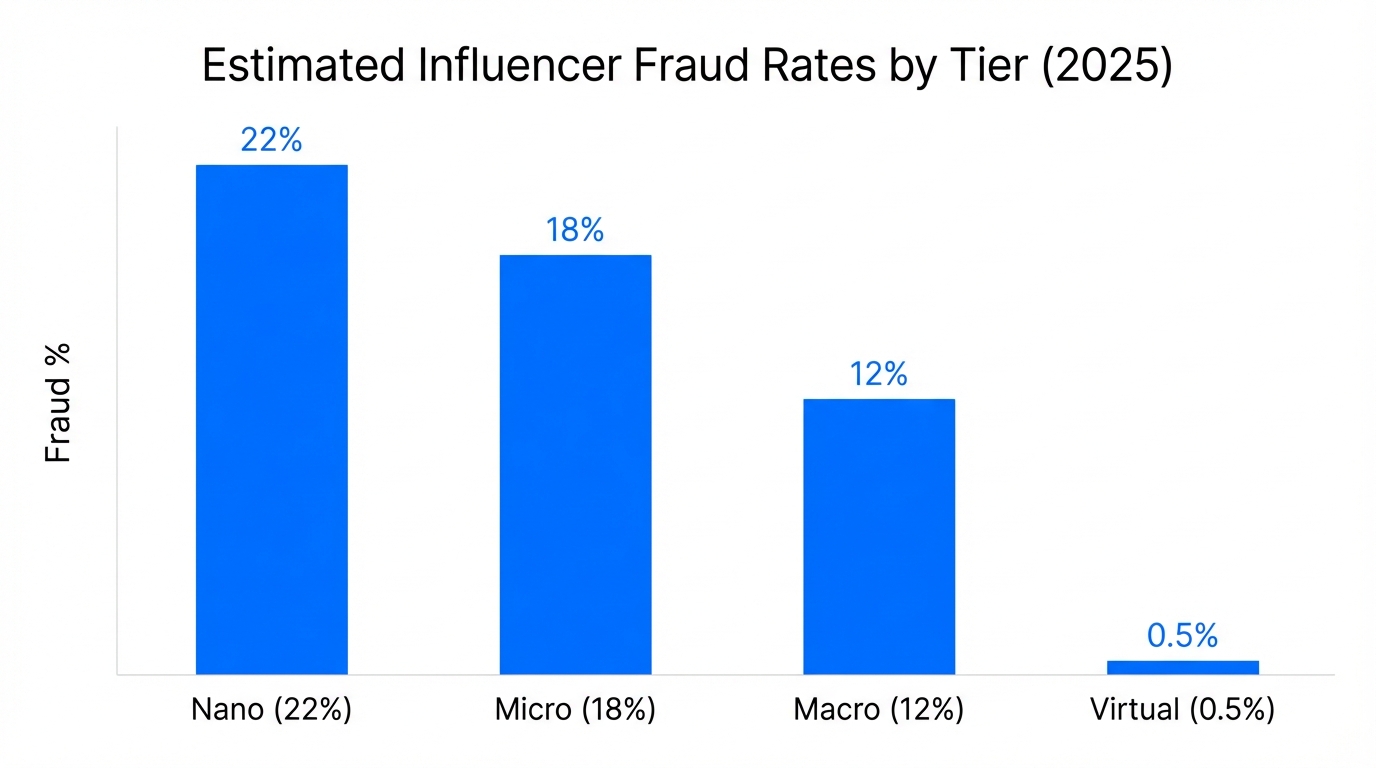

While Prada's move toward virtual influencers seems futuristic, it is a pragmatic response to a deteriorating landscape of trust. Fake followers are expected to increase by 60% on Instagram by the end of 2025, and TikTok is projected to host nearly 950 million fake accounts. This isn't just a vanity metric issue; it is a financial drain. Approximately 15% of total influencer marketing spend is currently lost to bot-driven traffic according to Statista.

The complexity of fraud has evolved. We are no longer just dealing with simple click farms; fraudsters now use agentic AI to create realistic personas that autonomously engage in conversations, making basic bot detection obsolete. Research from Persona highlights that identity fraud in digital spaces is becoming more sophisticated, requiring brands to adopt AI-powered brand safety strategies to protect their investments.

| Platform | Projected Fake Accounts (2025) | Average Bot Percentage | Risk Level |
|---|---|---|---|
| Instagram | N/A (60% growth rate) | 14.1% | High |
| TikTok | 950 Million | 18.5% | Critical |
| Mega-Influencers (1M+) | Variable | 23% | High |

How AI Detects the Undetectable: Advanced Auditing Techniques

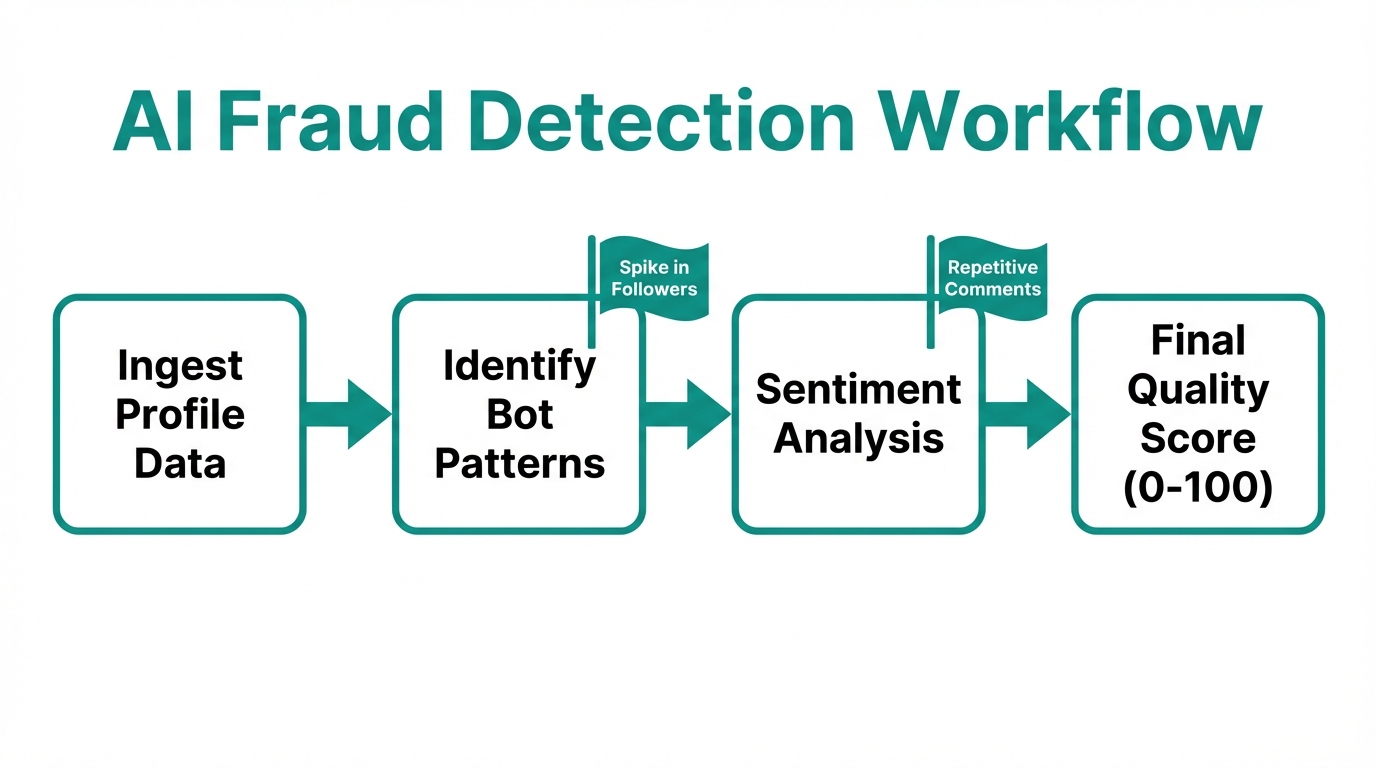

To navigate this minefield, brands are turning to AI tools that analyze signals invisible to the human eye. Modern brand safety strategies rely on four primary AI pillars to vet creators. Platforms like Stormy AI incorporate these capabilities to help marketers source and manage UGC creators at scale while filtering out fraudulent accounts.

- Behavioral Pattern Recognition: AI identifies sudden, unnatural spikes in followers that don't correlate with viral moments or media mentions.

- Natural Language Processing (NLP): By scanning thousands of comments, AI can differentiate between repetitive "bot-speak" (e.g., "Great post!", "Cool!") and genuine, context-aware human responses. Tools like Aithor demonstrate the power of NLP in identifying patterns in generated text.

- Image Recognition & Reverse Search: AI detects if profile pictures in a follower list are stolen stock photos or AI-generated "deepfake" faces, a common tactic for padding follower counts.

- Audience Topology Analysis: AI maps the network of an influencer's followers. If a large cluster is interconnected in an "engagement pod" but has no other outside interests, the account is flagged.
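The first pillar, behavioral pattern recognition, can be illustrated with a small sketch. It is not how any particular vendor works: the function name `flag_follower_spikes`, the sample numbers, and the cutoff of 5 are all assumptions chosen for illustration. The idea is to score each day's follower gain against the account's typical day using the median and the median absolute deviation, so that one huge spike cannot hide itself by inflating the average.

```python
from statistics import median


def flag_follower_spikes(daily_gains, cutoff=5.0):
    """Return the indices of days whose follower gain looks like an outlier.

    Hypothetical helper: scores each day as (gain - median) / MAD and flags
    days whose score exceeds `cutoff`. The cutoff value is an assumption.
    """
    typical = median(daily_gains)
    deviations = [abs(gain - typical) for gain in daily_gains]
    spread = median(deviations) or 1.0  # avoid dividing by zero on flat series
    return [
        day
        for day, gain in enumerate(daily_gains)
        if (gain - typical) / spread > cutoff
    ]


# Made-up example: a steady account with one unexplained jump on day 4.
gains = [120, 135, 110, 128, 9400, 131, 118]
print(flag_follower_spikes(gains))
```

A flagged day is only a prompt for review. The bullet above notes that a spike is suspicious when it does not line up with a viral moment or media mention, and that cross-check is not modeled here.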

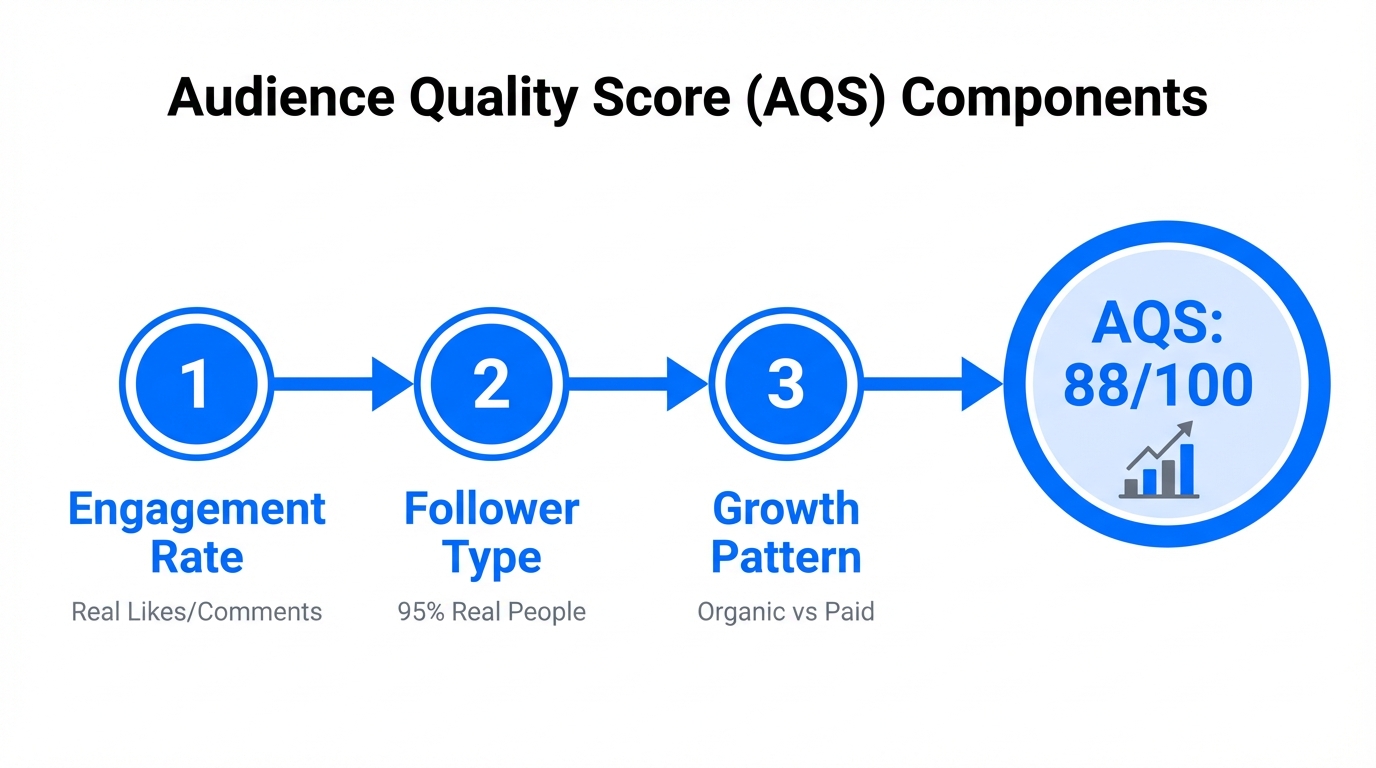

As AI Certs points out, the professionalization of AI auditing is becoming essential for marketers who need to prove the Audience Quality Score (AQS) of their selected creators.

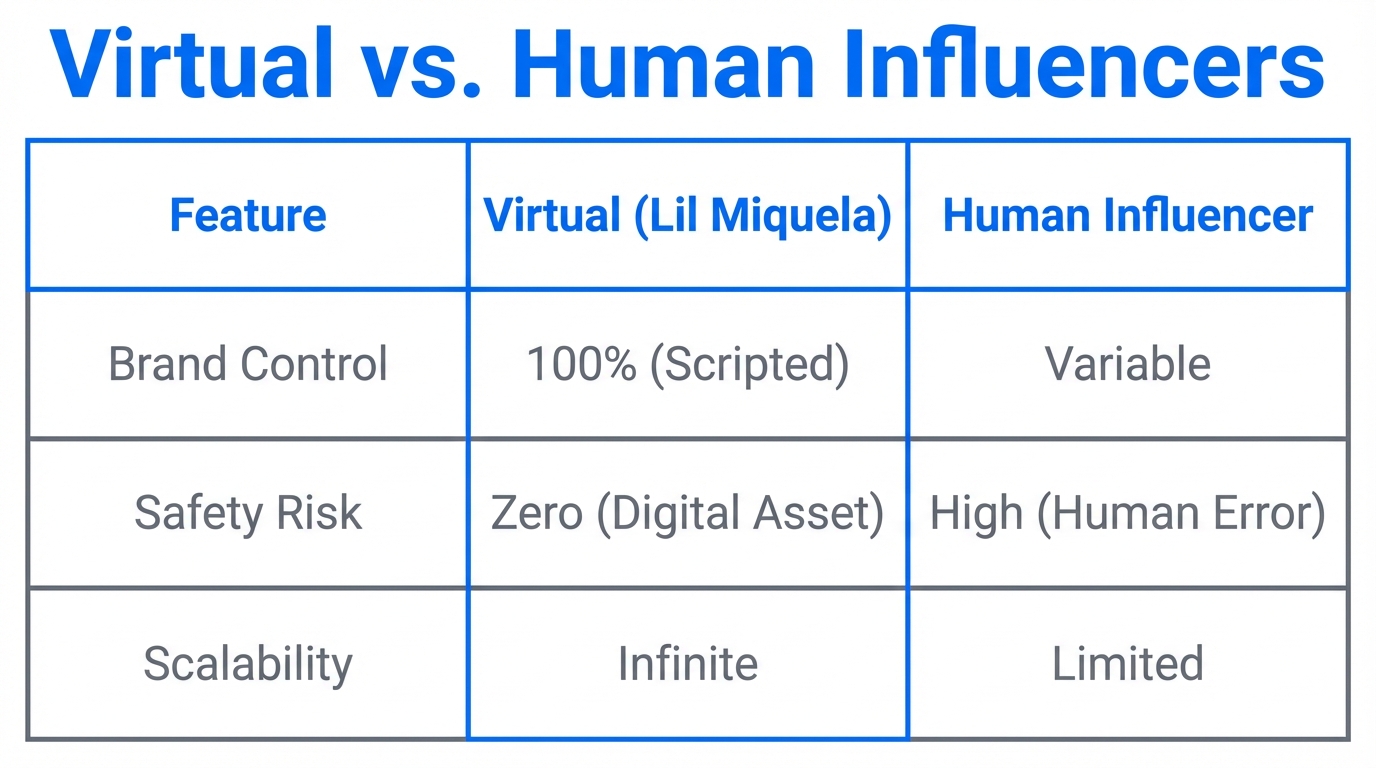

Virtual vs. Human: The Great Authenticity Debate

The shift toward AI-generated creators offers a compelling solution to brand safety, but it isn't without its trade-offs. Brands must balance the 100% safety of a digital entity with the raw, relatable authenticity of a human creator. Marketing analysts at Adweek suggest that while virtual influencers are highly effective for luxury and tech, lifestyle and wellness still thrive on human vulnerability.

"While AI influencers are cost-effective, long-term ROI depends on genuine, human influencers who are actual fans of the brand. We are facing an authenticity crisis that AI alone cannot solve."

Short-term profitability through AI influencers can lead to long-term brand erosion if the audience feels the "connection" is entirely manufactured. The key is to find a middle ground. Many brands are now using AI-powered creator discovery platforms to find human influencers with the "cleanest" possible data. By using Stormy AI, marketers can discover creators who have been pre-vetted for audience quality, ensuring the best of both worlds: human connection and digital safety.

| Feature | Virtual Influencers | Human Creators |
|---|---|---|
| PR Risk | Zero | High |
| Scalability | Infinite | Limited |
| Authenticity | Perceived/Stylized | High/Relatable |
| Data Transparency | 100% Clean | Variable (requires auditing) |

Measuring Audience Quality Scores (AQS) for 2025

In the 2025 creator economy, the most important metric is no longer follower count—it is the Audience Quality Score (AQS). This metric aggregates various data points to provide a single percentage representing how much of an audience is actually human and engaged. For brands running high-spend campaigns, an AQS below 80% is often a dealbreaker.
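There is no single published formula for AQS, so the following is only a minimal sketch of one way such a score could be computed. It assumes the audit already yields counts of suspected bots and inactive accounts; the `AudienceSample` class, its field names, and the sample figures are hypothetical. The score is simply the share of followers left after removing those two groups.

```python
from dataclasses import dataclass


@dataclass
class AudienceSample:
    """Hypothetical audit result for one creator."""
    total_followers: int
    suspected_bots: int
    inactive_accounts: int


def audience_quality_score(sample: AudienceSample) -> float:
    """Percentage of followers that are neither suspected bots nor inactive.

    This simple ratio is an assumption for illustration, not an
    industry-standard definition of AQS.
    """
    if sample.total_followers <= 0:
        raise ValueError("total_followers must be positive")
    low_quality = sample.suspected_bots + sample.inactive_accounts
    real = max(sample.total_followers - low_quality, 0)
    return 100.0 * real / sample.total_followers


# Made-up example figures.
creator = AudienceSample(total_followers=200_000,
                         suspected_bots=24_000,
                         inactive_accounts=30_000)
score = audience_quality_score(creator)
print(f"AQS: {score:.1f}%")  # AQS: 73.0%
print("Below the 80% bar" if score < 80 else "Meets the 80% bar")
```

A production score would likely weight more signals, such as comment quality or audience overlap, but the single-percentage output is the same shape as the metric described above.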

Managing these metrics across hundreds of creators requires a sophisticated workflow. Integrating tools like Eesel can help marketing teams organize the vast amount of documentation and data reports generated during the vetting process. A clean data stack ensures that your brand safety strategy is rooted in real-time API data rather than easily faked static screenshots of "insights."

The Brand Safety Playbook: A Step-by-Step Guide

To implement a robust virtual influencer marketing and safety strategy, follow these sequential steps:

Step 1: Define Your Safety Threshold

Determine the maximum acceptable percentage of bot followers and inactive accounts for your campaign. For luxury brands, this is typically under 10%.
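As a rough sketch, the threshold can be written down as a single constant and applied to every candidate. The example assumes the 10% limit applies to bot and inactive percentages combined, which is one reading of the guidance above; the creator names and figures are invented.

```python
# Assumed limit, taken from the "under 10%" guidance for luxury brands.
MAX_LOW_QUALITY_PCT = 10.0


def passes_safety_threshold(bot_pct, inactive_pct, max_pct=MAX_LOW_QUALITY_PCT):
    """True when bots plus inactive accounts stay under the agreed limit."""
    return (bot_pct + inactive_pct) < max_pct


# Hypothetical audit results: name -> (bot %, inactive %).
candidates = {
    "creator_a": (3.2, 4.1),
    "creator_b": (8.5, 6.0),
}

approved = [
    name
    for name, (bot_pct, inactive_pct) in candidates.items()
    if passes_safety_threshold(bot_pct, inactive_pct)
]
print(approved)  # ['creator_a']
```

Keeping the limit in one place makes it easy to tighten or relax per campaign without touching the vetting logic.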

Step 2: Use AI for Multi-Platform Vetting

Employ AI-powered tools to audit creators across TikTok, Instagram, and YouTube. Look for the "Follow-Unfollow" pattern—where creators follow thousands of people to get a follow back, then instantly unfollow. This is a primary indicator of non-organic growth.
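The follow-unfollow pattern can be approximated from an account's activity log. The sketch below assumes a log of `(timestamp, action, account_id)` tuples is available, which real platform APIs may not expose in this form. The function name and the seven-day window are also assumptions. It reports the share of follows that were undone within the window.

```python
from datetime import datetime, timedelta


def follow_unfollow_ratio(events, window=timedelta(days=7)):
    """Share of follows that were reversed within `window`.

    `events` is an iterable of (timestamp, action, account_id) tuples where
    action is "follow" or "unfollow". This log format is hypothetical.
    """
    followed_at = {}
    follows = 0
    quick_unfollows = 0
    for timestamp, action, account in sorted(events, key=lambda e: e[0]):
        if action == "follow":
            followed_at[account] = timestamp
            follows += 1
        elif action == "unfollow" and account in followed_at:
            # Count the unfollow only if it came soon after the follow.
            if timestamp - followed_at.pop(account) <= window:
                quick_unfollows += 1
    return quick_unfollows / follows if follows else 0.0


# Made-up log: two quick reversals, one late unfollow, one lasting follow.
start = datetime(2025, 1, 1)
log = [
    (start, "follow", "a"),
    (start, "follow", "b"),
    (start, "follow", "c"),
    (start, "follow", "d"),
    (start + timedelta(days=1), "unfollow", "b"),
    (start + timedelta(days=2), "unfollow", "a"),
    (start + timedelta(days=29), "unfollow", "d"),
]
print(follow_unfollow_ratio(log))  # 0.5
```

A high ratio sustained over many weeks is the signal of interest; an occasional quick unfollow is normal behavior.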

Step 3: Analyze Sentiment, Not Just Engagement

High engagement isn't always positive. Use sentiment analysis to ensure that the comments on a creator's posts are actually supportive of their content and, by extension, your brand. If 90% of comments are negative or spam, the partnership will damage your reputation.
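To make the idea concrete, here is a deliberately simple sketch that sorts comments into positive, negative, neutral, and spam, then reports the share of each. It uses a tiny hand-written word list as a stand-in for a real sentiment model; the word lists, spam patterns, and function names are all illustrative assumptions.

```python
import re

# Toy lexicons: assumptions for illustration, not a real sentiment model.
POSITIVE = {"love", "great", "beautiful", "amazing", "stunning"}
NEGATIVE = {"fake", "scam", "ugly", "hate", "overpriced"}
SPAM_PATTERNS = [
    re.compile(r"https?://"),
    re.compile(r"\bdm me\b", re.IGNORECASE),
]


def classify_comment(text):
    """Label one comment as 'spam', 'positive', 'negative', or 'neutral'."""
    if any(pattern.search(text) for pattern in SPAM_PATTERNS):
        return "spam"
    words = set(re.findall(r"[a-z']+", text.lower()))
    score = len(words & POSITIVE) - len(words & NEGATIVE)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def sentiment_breakdown(comments):
    """Return the fraction of comments carrying each label."""
    counts = {"positive": 0, "negative": 0, "neutral": 0, "spam": 0}
    for comment in comments:
        counts[classify_comment(comment)] += 1
    total = len(comments) or 1
    return {label: count / total for label, count in counts.items()}


sample = [
    "Love this look, so beautiful",
    "This is a scam",
    "DM me for promo http://spam.example",
    "ok",
]
print(sentiment_breakdown(sample))
```

In practice the classifier would be replaced by a trained model, but the output to review stays the same: the combined share of negative and spam comments on a creator's recent posts.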

Step 4: Diversify with Virtual Creators

Integrate AI-generated creators into your mix for high-risk or highly stylized campaigns where absolute control is necessary. This creates a "safety buffer" for your overall marketing portfolio.

Step 5: Monitor Post-Campaign Performance

Continue to track creators after the campaign ends. Brands should monitor for "engagement pods" that may have artificially inflated the results during the active campaign period. Using a tool like Notion or a dedicated CRM can help keep these historical logs organized.

"In 2025, brand safety isn't a checkbox; it's a continuous AI-driven process of verification and adaptation."

Conclusion: Balancing Profitability and Brand Equity

The lessons from Prada's venture with Lil Miquela are clear: data-clean marketing is the only way to survive the influencer fraud epidemic of 2025. Whether you choose to work with AI-generated creators or human influencers, the tools you use for discovery and vetting will determine your ROI. By prioritizing Audience Quality Scores and utilizing advanced AI auditing, brands can protect themselves from the $1.3 billion fraud trap.

As the creator economy continues to evolve, the most successful brands will be those that pair human creativity with AI-powered precision. Start building your brand safety strategy today by focusing on transparency, verification, and the right technology stack to ensure every dollar spent reaches a real, human customer.