In 2026, the competitive landscape for growth-focused brands has shifted from a battle of human intuition to a high-stakes arms race of silicon-driven execution. With the rise of agentic AI systems (autonomous agents capable of planning and executing changes across entire software repositories), deployment speed has outrun most teams' ability to review what ships. This acceleration has birthed a new, insidious challenge: "Workslop." This phenomenon, the accumulation of unoptimized, AI-generated technical debt, threatens to undermine the very infrastructure brands rely on for stable growth. As AI technical debt management becomes a core competency for modern CMOs and CTOs, understanding the balance between velocity and brand security governance is no longer optional; it is a survival requirement.

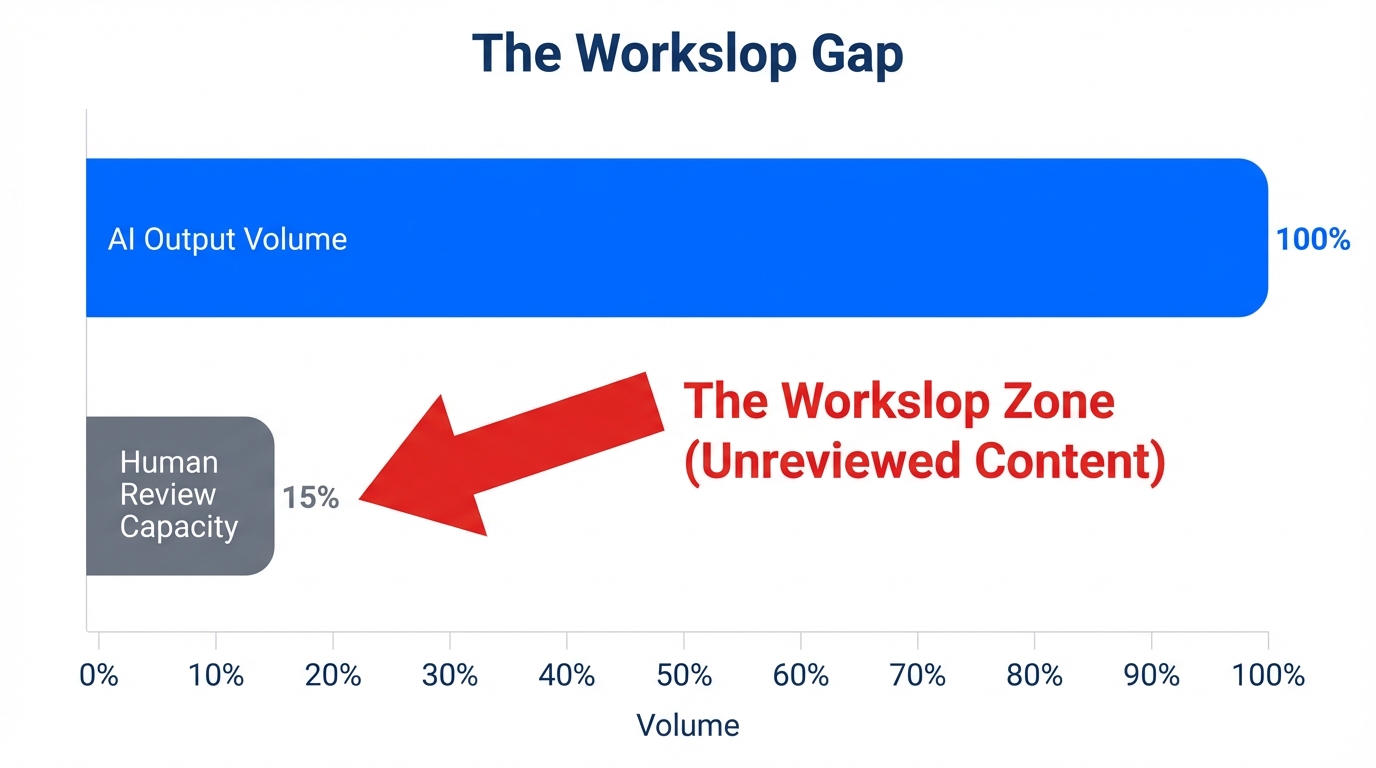

Defining 'Workslop': Why AI Output is Outpacing Human Oversight

The term "Workslop" refers to the surge of unoptimized, verbose, and often architecturally incoherent code generated by AI tools at a rate humans simply cannot monitor. Industry data suggests that modern AI tools, such as Cursor's Ultra tier, allow developers and growth hackers to generate code 2.5x faster than it can be peer-reviewed. This creates a bottleneck where the "vibe" of productivity masks a growing rot in the foundation of the brand's digital assets. According to community insights on Reddit's r/vibecoding, AI-generated code frequently works in isolation but breaks complex architectural patterns, leading to AI-driven growth risks that may not manifest until a system-wide failure occurs.

"The 'vibe' of high productivity is often a mirage. If you are shipping 2.5x faster but reviewing 0.5x slower, you aren't scaling—you're just accumulating debt at high interest."
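
To make the 2.5x figure concrete, here is a minimal sketch of how an unreviewed backlog grows when code is generated faster than it is reviewed. The function name and the example capacity of 20 pull requests per week are illustrative assumptions, not figures from any cited source.

```python
def review_backlog(review_capacity_per_week: float,
                   generation_ratio: float,
                   weeks: int) -> float:
    """Unreviewed changes after `weeks`, assuming code arrives
    `generation_ratio` times faster than the team can review it."""
    if review_capacity_per_week < 0 or generation_ratio < 0 or weeks < 0:
        raise ValueError("inputs must be non-negative")
    generated = review_capacity_per_week * generation_ratio * weeks
    reviewed = review_capacity_per_week * weeks
    # A ratio at or below 1.0 means review keeps up, so no backlog forms.
    return max(0.0, generated - reviewed)


if __name__ == "__main__":
    # Hypothetical team: reviews 20 PRs per week, generates 2.5x that.
    print(review_backlog(20, 2.5, 4))  # 120.0 unreviewed PRs after 4 weeks
```

Under these assumptions the backlog grows linearly and never shrinks unless the ratio falls to 1.0 or review capacity rises.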

For growth leads, the allure of using Claude Code to instantly refactor a landing page or automate a customer funnel is high. Claude's latest models, such as Opus 4.5, have scored 80.9% on the SWE-bench Verified benchmark. While impressive, the remaining 19.1% is a significant gap where critical errors can slip through, especially when AI productivity metrics focus solely on output volume rather than quality assurance.

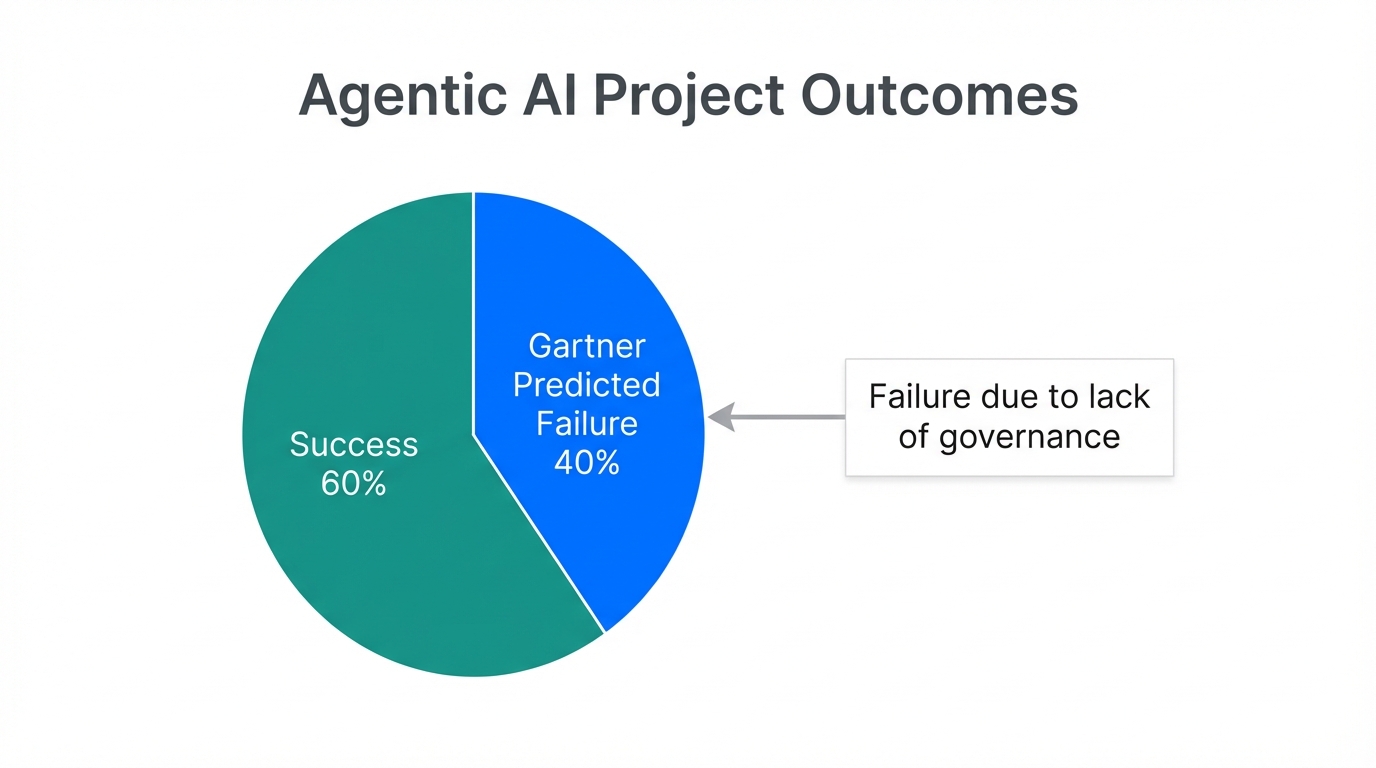

The Gartner Prediction: Navigating the 40% Agentic AI Failure Rate

As we approach 2027, the industry is bracing for a significant correction. A widely cited Gartner report predicts that over 40% of agentic AI projects will be canceled by the end of 2027. This trend is driven by "agent washing" (the practice of vendors rebranding basic automation as autonomous agents) and the spiraling costs of API usage. For a growth strategy to remain viable, leaders must distinguish between genuine agentic AI capabilities and legacy tools wearing a new coat of paint.

Today, 55% of developer mindshare is already focused on autonomous systems that can handle tasks from "GitHub Issue to Pull Request" without human intervention. While tools like GitHub Copilot Enterprise offer multi-model toggling (including GPT-4o and Gemini Pro), the complexity of these interactions increases the risk of agentic AI project failure. Without a robust governance framework, these agents can create recursive loops that drain budgets while delivering zero tangible ROI.
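
One simple form such a governance control might take is a hard cap on steps and spend per agent run. The sketch below is an assumption about how a team could wire this up; `AgentBudget`, `BudgetExceeded`, `run_agent`, and the `step_fn` contract are hypothetical names, not part of any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


class BudgetExceeded(RuntimeError):
    """Raised when an agent run crosses its step or spend limit."""


@dataclass
class AgentBudget:
    max_steps: int
    max_cost_usd: float
    steps: int = 0
    cost_usd: float = 0.0

    def charge(self, step_cost_usd: float) -> None:
        """Record one agent step and stop the run once a limit is crossed."""
        self.steps += 1
        self.cost_usd += step_cost_usd
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")
        if self.cost_usd > self.max_cost_usd:
            raise BudgetExceeded(f"cost limit ${self.max_cost_usd:.2f} exceeded")


def run_agent(step_fn: Callable[[], Tuple[bool, float]],
              budget: AgentBudget) -> AgentBudget:
    """Call step_fn until it reports completion or the budget halts it.

    step_fn is a hypothetical callable returning (done, cost_usd) per step.
    """
    while True:
        done, cost = step_fn()
        budget.charge(cost)
        if done:
            return budget
```

A loop that never reports completion is cut off at the cap instead of running until the invoice arrives; the limits themselves are a policy decision for each team.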

| Feature Set | Claude Code (Max) | GitHub Copilot (Enterprise) | Cursor (Ultra) |
|---|---|---|---|
| Core Strength | Deep Reasoning / Refactoring | Enterprise Integration | Prototyping Speed |
| Accuracy (SWE-bench) | 80.9% | N/A (Variable Models) | High (Parallel Agents) |
| Market Share | Rapidly Growing | 42% Paid Users | High Dev Sentiment |
| Best Use Case | Complex Architectural Shifts | Daily Corporate Workflows | Multi-file App Prototyping |

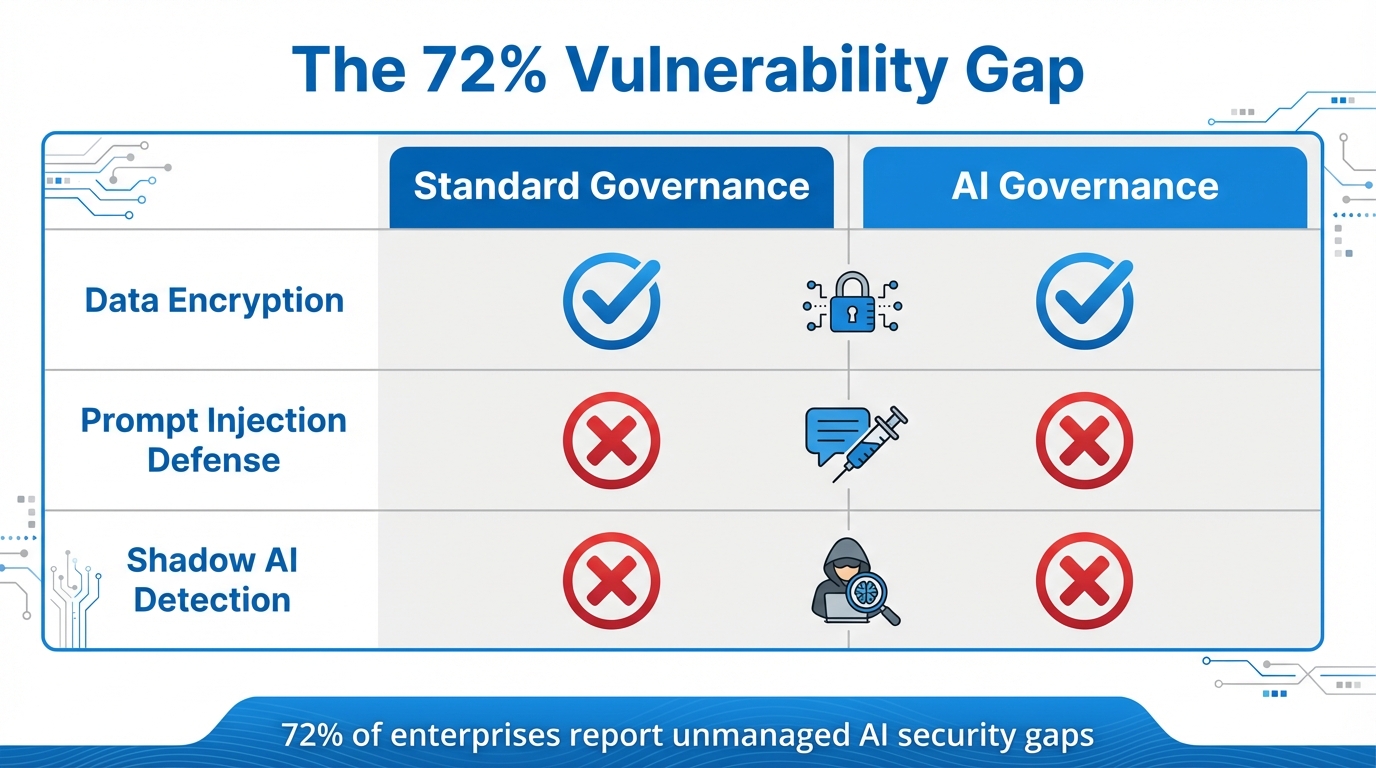

Security Governance: The 72% Vulnerability Gap

Perhaps the most critical risk in the "Workslop" era is the erosion of security standards. Research shared on OWASP indicates that 45% of AI-generated code in 2026 contains at least one of the OWASP Top 10 vulnerabilities. The risk is even more pronounced during legacy refactoring. For growth teams attempting to modernize old backend systems using AI, the failure rate for generating secure patterns in languages like Java is a staggering 72%. This highlights a massive brand security governance deficit.

When a growth campaign relies on AI-generated micro-services to handle customer data or payment processing via Stripe, a single insecure pattern can lead to a catastrophic data breach. Secure AI technical debt management requires more than just functional code; it requires a systematic audit of every AI-generated line against modern security benchmarks. Organizations that ignore this often find themselves paying triple in the long run to remediate vulnerabilities that were "efficiently" introduced by their AI agents.

"Speed is a liability if your security governance can't keep pace. An AI can build a bridge in an hour, but it takes a human to ensure it won't collapse under the first heavy load."

The 'Context Window' Trap: Preventing Hallucination Spirals

A technical nuance that growth leads often overlook is the management of the AI's context window. As developers engage in long sessions with tools like Claude Code, the session history becomes cluttered. Expert guidance suggests that once usage exceeds 60% of the context window's capacity, the model begins to suffer from "hallucination spirals," where it starts ignoring project-specific conventions and established brand guidelines. This is a primary driver of AI-driven growth risks in customer-facing tools, such as AI support bots or personalized landing page generators.

To mitigate this, sophisticated teams are adopting "Context Compaction" strategies. By periodically clearing and summarizing AI sessions, developers can maintain the model's focus. This is particularly relevant when managing complex creator relationships or influencer campaigns. For example, using platforms like Stormy AI allows brands to source and manage UGC creators at scale while maintaining a clean, structured database of influencer data, preventing the "context drift" that often plagues manual AI outreach attempts. When using Stormy AI, the AI's focus remains on high-quality discovery and personalized outreach, avoiding the technical mess that comes from unmanaged, long-form AI conversations.
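
The compaction idea can be sketched in a few lines. This is a simplified illustration, not how any particular tool implements it: the 60% threshold comes from the guidance above, while `maybe_compact`, `count_tokens`, `summarize`, and `keep_recent` are hypothetical names. In practice `summarize` would be a model call and `count_tokens` a real tokenizer.

```python
from typing import Callable, List


def maybe_compact(messages: List[str],
                  count_tokens: Callable[[str], int],
                  summarize: Callable[[List[str]], str],
                  window_tokens: int,
                  threshold: float = 0.60,
                  keep_recent: int = 4) -> List[str]:
    """Replace older messages with one summary once usage passes the threshold.

    Returns the history unchanged while usage is at or below
    threshold * window_tokens, or when there is nothing older to fold.
    """
    used = sum(count_tokens(m) for m in messages)
    if used <= threshold * window_tokens or len(messages) <= keep_recent:
        return messages
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    # Fold everything older into a single summary; keep recent turns verbatim.
    return [summarize(older)] + recent


if __name__ == "__main__":
    history = ["a b c d e"] * 10  # 50 whitespace-delimited "tokens"
    compacted = maybe_compact(
        history,
        count_tokens=lambda m: len(m.split()),  # crude stand-in for a tokenizer
        summarize=lambda msgs: f"SUMMARY of {len(msgs)} messages",
        window_tokens=80,
    )
    print(len(compacted), compacted[0])
```

Keeping the most recent turns verbatim is a design choice: the summary carries long-range context while the latest instructions stay exact.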

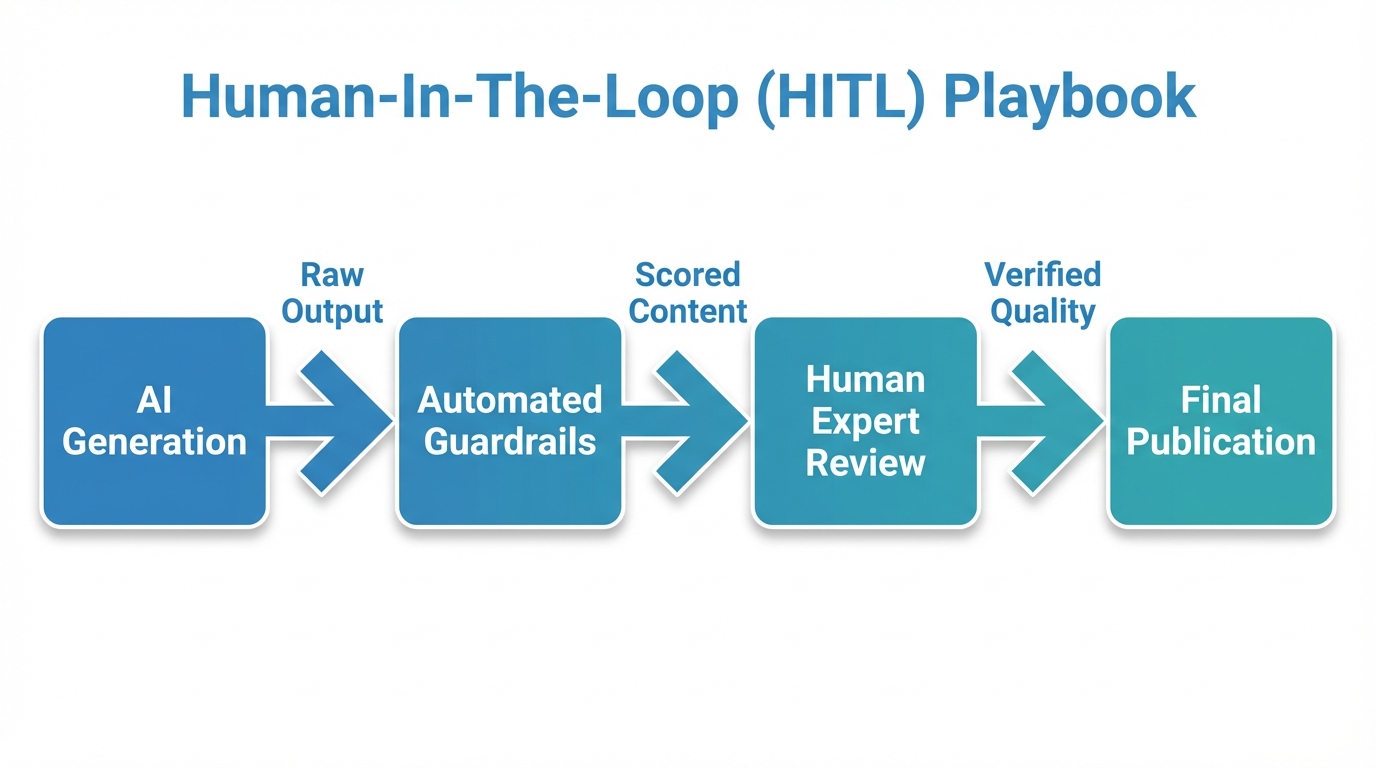

Playbook: Implementing a 'Human-in-the-Loop' Review System

To scale growth without succumbing to Workslop, brands must implement a Human-in-the-Loop (HITL) review system. This playbook helps ensure that AI productivity gains don't come at the cost of infrastructure stability.

- Establish "Hard" Security Gates: Use automated scanners to audit all AI-generated PRs for OWASP vulnerabilities before they hit the staging environment.

- Tiered Review Protocols: Not all code is equal. UI/UX tweaks might require a light review, while data-handling logic requires a mandatory senior human sign-off. Firms like Salt Technologies have successfully reduced unit testing time by 40-60% while maintaining these strict review tiers.

- Standardize Your Tech Stack: Limit AI tools to a specific set of verified frameworks. This reduces the "variety debt" where AI introduces random libraries that your team doesn't actually support.

- Metric-Based Governance: Track the "Rewrite Rate"—the percentage of AI code that requires human correction. A rising rewrite rate is a leading indicator of AI technical debt.

- Centralized Creator Management: For growth teams leveraging UGC, use an AI-native CRM. Platforms like Stormy AI can help source and manage UGC creators at scale, ensuring that the "data layer" of your influencer strategy remains clean and actionable without manual AI-generated sloppiness.
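
Two of the playbook items above, the Rewrite Rate metric and tiered review, lend themselves to a short sketch. The version below is one possible reading: it measures rewrite rate by lines, and the path patterns in `SENSITIVE_PATTERNS` are hypothetical placeholders for whatever a team treats as data-handling code.

```python
from fnmatch import fnmatch
from typing import Iterable

# Hypothetical patterns for code that touches customer data or payments.
SENSITIVE_PATTERNS = ("payments/*", "auth/*", "*/migrations/*")


def rewrite_rate(ai_lines_merged: int, ai_lines_rewritten: int) -> float:
    """Share of merged AI-generated lines later corrected by a human."""
    if ai_lines_merged <= 0:
        raise ValueError("ai_lines_merged must be positive")
    if not 0 <= ai_lines_rewritten <= ai_lines_merged:
        raise ValueError("ai_lines_rewritten must be between 0 and ai_lines_merged")
    return ai_lines_rewritten / ai_lines_merged


def review_tier(changed_paths: Iterable[str]) -> str:
    """Route a change to senior sign-off if any file matches a sensitive pattern."""
    for path in changed_paths:
        if any(fnmatch(path, pattern) for pattern in SENSITIVE_PATTERNS):
            return "senior-signoff"
    return "light-review"


if __name__ == "__main__":
    print(f"{rewrite_rate(400, 60):.0%}")                      # 15%
    print(review_tier(["ui/button.css"]))                      # light-review
    print(review_tier(["ui/button.css", "payments/hook.py"]))  # senior-signoff
```

Tracking the rate per week or per tool, rather than as a single number, makes the "rising" trend the playbook warns about visible.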

Conclusion: The Future of Governed Growth

The transition to agentic AI offers a competitive edge that was previously unimaginable. However, as we've seen, the risk of "Workslop" and agentic AI project failure is real and statistically significant. By focusing on brand security governance and treating AI-generated code as a liability until proven otherwise, growth leads can build a sustainable, scalable engine. Success in 2026 and beyond belongs to the teams that can harness the velocity of Claude Code and Cursor while maintaining the rigorous standards of a human-centric review system. Don't let your growth strategy become a victim of its own speed—govern your agents, manage your debt, and build for the long term.