By 2026, the promise of AI-driven marketing operations has shifted from mere experimentation to a battle over efficiency. While models like Claude 3.5 and the latest Opus iterations are more capable than ever, many marketing teams are hitting a performance ceiling. The culprit isn't the model's intelligence; it's the bloat in how we talk to them. As we manage increasingly complex workflows—from multi-channel attribution to high-scale creator outreach—the way we architect our AI agents determines whether we get high-quality output or expensive digital "slop."

The 2026 Context Window Crisis: Why Bloated Prompts Are Killing Performance

Why agent.md files create a massive context burden every time you chat.

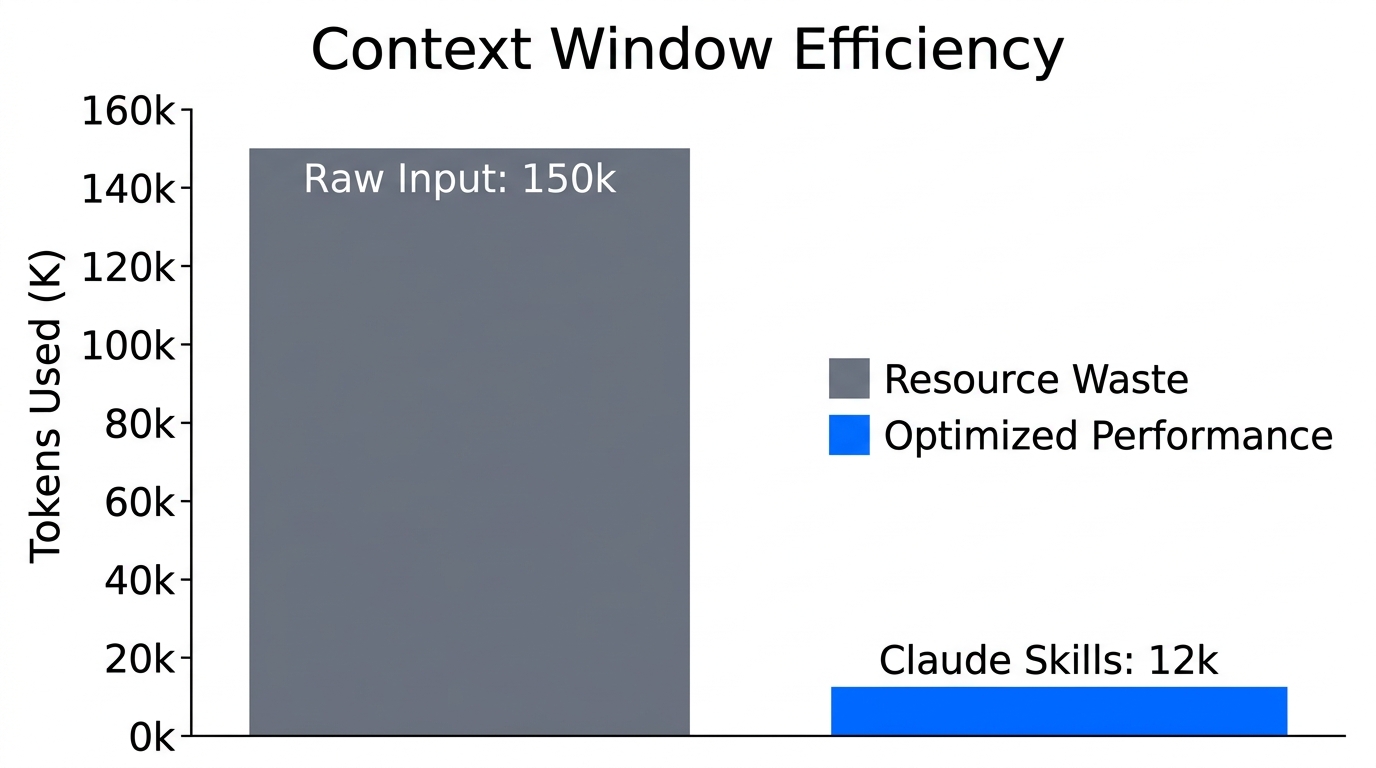

In the early days of AI agents, the standard advice was to build massive agent.md or claude.md files. These files acted as a "brain," containing every brand guideline, technical spec, and strategic preference the company owned. However, as we move through 2026, this approach has created a context window crisis. Every time you interact with an agent using a global instructions file, you are force-feeding it thousands of tokens before it even reads your current request.

Research suggests [source] that 95% of users don't actually need a permanent agent.md file. The latest models, such as those integrated into Google Ads and Meta Ads Manager, are already exceptionally good at general reasoning. Telling a model to "use a professional tone" or "format as a table" in every single turn is redundant—it already knows how to do those things. When you waste 7,000 to 10,000 tokens on a system prompt every time you hit "Enter," you aren't just wasting money; you're making the model statistically more likely to hallucinate.
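To make that overhead concrete, here is a minimal Python sketch of the arithmetic. Only the 7,000 to 10,000 token range comes from the paragraph above; the 40-turn conversation length and the price per million input tokens are hypothetical placeholders, not figures from any provider.

```python
def prompt_overhead_tokens(system_prompt_tokens: int, turns: int) -> int:
    """Tokens spent re-sending the same global instructions on every turn."""
    return system_prompt_tokens * turns


def overhead_cost_usd(tokens: int, usd_per_million_tokens: float) -> float:
    """Convert a token count into dollars at a given input-token price."""
    return tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical inputs: adjust to your own usage and your provider's pricing.
TURNS = 40
USD_PER_MILLION_TOKENS = 3.00

for size in (7_000, 10_000):
    total = prompt_overhead_tokens(size, TURNS)
    cost = overhead_cost_usd(total, USD_PER_MILLION_TOKENS)
    print(f"{size:,}-token prompt x {TURNS} turns = {total:,} tokens (~${cost:.2f})")
```

Under these assumptions, a 7,000-token instructions file adds 280,000 tokens of overhead to a single 40-turn conversation before any real work is counted.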

"The models are exceptionally good now, but context still matters. You have the power to steer them toward quality or toward slop based on how you manage that context."

Understanding 'Progressive Disclosure': The Skill.md Revolution

Using skill.md files to drastically reduce token usage through progressive context disclosure.

The solution for 2026 is a concept known as Progressive Disclosure. Instead of giving the agent everything at once, you use "Skills." In the context of Claude Code, a skill is a modular Markdown file that the agent only accesses when it specifically needs it.

When you define a skill, the agent only keeps the name and description of that skill in its active memory. This might take up 50 tokens instead of 1,000. If you ask the agent to "analyze our latest influencer campaign performance," it scans its list of available skills, sees one titled performance_report_analyzer.md, and only then pulls the full instructions into the context window.
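The idea can be sketched in a few lines of Python. This is an illustration of the pattern, not how Claude Code is implemented: the `Skill` class, `skill_index`, and `build_context` are hypothetical names, and the `needed` argument stands in for the model's own decision about which skill to load.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Skill:
    name: str
    description: str
    body: str  # full instructions, pulled into context only on demand


def skill_index(skills: list) -> str:
    """The short listing that is always present: names and descriptions only."""
    return "\n".join(f"- {s.name}: {s.description}" for s in skills)


def build_context(skills: list, needed: Optional[str] = None) -> str:
    """Return the index, plus the full body of one skill if it was requested."""
    parts = [skill_index(skills)]
    if needed is not None:
        by_name = {s.name: s for s in skills}
        parts.append(by_name[needed].body)
    return "\n\n".join(parts)


# Hypothetical skill contents for illustration.
SKILLS = [
    Skill(
        name="performance_report_analyzer",
        description="Use when analyzing influencer campaign performance.",
        body="Step 1: pull spend and conversions per creator.\n"
             "Step 2: rank creators by cost per acquisition.",
    ),
    Skill(
        name="ugc_vetting",
        description="Use when vetting creators for TikTok Ads.",
        body="Apply the UGC sourcing framework criteria to each creator.",
    ),
]

idle = build_context(SKILLS)
active = build_context(SKILLS, needed="performance_report_analyzer")
print(len(idle), "characters idle vs", len(active), "with the skill loaded")
```

The design choice that matters is that `body` never appears in the idle context, so adding more skills only grows the index by one short line each.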

| Feature | Global Agent.md | Custom Claude Skills |
|---|---|---|
| Token Usage | High (Constant overhead) | Low (On-demand only) |
| Model Accuracy | Lower (Context saturation) | Higher (Focused attention) |
| Scalability | Poor (One file gets too big) | Excellent (Infinite sub-skills) |
| Maintenance | Difficult (Version control hell) | Easy (Modular updates) |

Codifying Your Unique Brand Voice and Strategy

Generic AI output is the death of modern marketing operations. To stand out, your agents need to understand your proprietary methodology. However, this shouldn't be a 50-page PDF. It should be codified into specialized skill files. For example, if your brand uses a specific UGC sourcing framework to find creators for TikTok Ads, that logic belongs in a ugc_vetting.md skill.

By using platforms like Stormy AI, you can discover high-performing creators through natural language, and then use your custom Claude skills to instantly analyze their audience quality against your specific brand standards. This combination of AI discovery and codified vetting allows a single Marketing Ops manager to do the work of a 10-person agency.

Example Skill Structure

- Name: brand_voice_harmonizer
- Description: Use this when drafting social copy to ensure it aligns with our 2026 "Direct-to-Gen Alpha" tone guidelines.
- Content: Specific "Do's and Don'ts," vocabulary lists, and 3-5 high-performing examples from our Shopify store's best-performing product descriptions.
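As a rough sketch, the structure above could be turned into a file with a small Python helper. The header layout (a `name` and `description` block between `---` lines) is an assumption about the file format, so check your tool's documentation before relying on it. `render_skill_file` is a hypothetical helper, and the body is placeholder text to be replaced with your own guidelines.

```python
import json


def render_skill_file(name: str, description: str, body: str) -> str:
    """Build skill file text: a short metadata header, then the instructions."""
    header = "\n".join([
        "---",
        f"name: {name}",
        # json.dumps quotes the description so colons or quotation marks
        # inside it cannot break the header line.
        f"description: {json.dumps(description)}",
        "---",
    ])
    return f"{header}\n\n{body.strip()}\n"


skill_text = render_skill_file(
    name="brand_voice_harmonizer",
    description=(
        "Use this when drafting social copy to ensure it aligns with "
        'our 2026 "Direct-to-Gen Alpha" tone guidelines.'
    ),
    body="""
## Do
- (your approved phrasing goes here)

## Don't
- (phrasing to avoid goes here)

## Examples
- (3-5 high-performing product descriptions go here)
""",
)
print(skill_text)
```

Only the two header lines need to be short and precise, since they are what the agent reads when deciding whether to open the file.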

"Don't tell the model things it already knows. Tell it what makes your business unique, and store that in a skill it can call upon only when the task demands it."

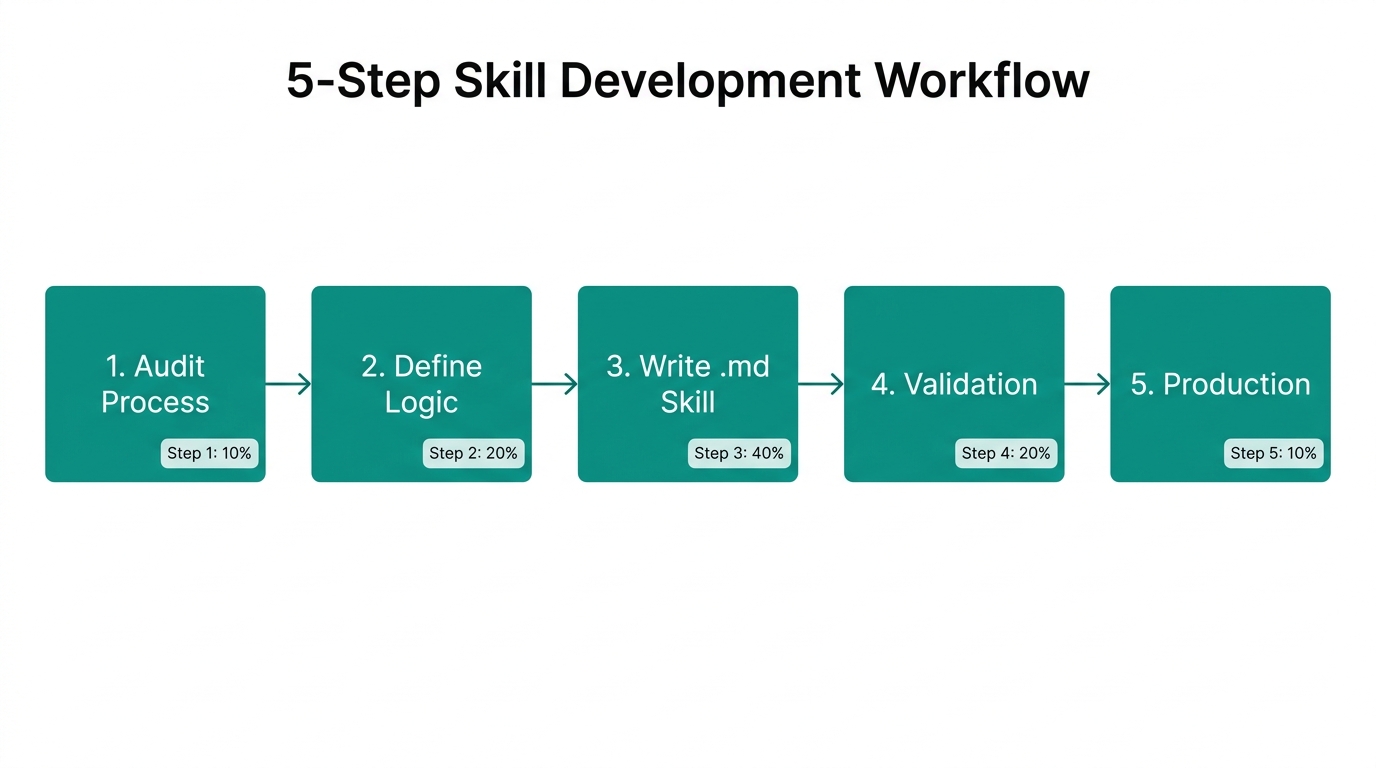

The 5-Step Process: From Manual Run to Permanent Skill

A proven framework for identifying workflows and mapping them to AI skills.

The biggest mistake Marketing Ops leaders make is trying to write a skill from scratch using their own imagination. AI agents don't think like humans; they are token predictors. To build a skill that actually works, you must first walk the agent through a successful execution.

Step 1: Identify the Workflow

Start with a repetitive task. For instance, reviewing LemonSqueezy affiliate applications or vetting influencers from Stormy AI's search engine results.

Step 2: The Manual "Hand-Holding" Phase

Run the task manually with the agent. Don't give it a script. Give it a goal and correct it in real time. If it misses a detail, such as checking a creator's Trustpilot rating or their funding history on Crunchbase, point it out immediately: "You missed the engagement-to-follower ratio check. Go back and do that before proceeding."

Step 3: Reach a Successful Run

Once the agent produces the perfect output, you have your "Golden Record." This conversation now contains the exact logic the agent needs to succeed every time.

Step 4: Recursive Codification

Tell the agent: "Review our conversation. Extract the logic, steps, and criteria you used to reach this successful result and format it into a skill.md file." The agent is much better at documenting its own successful logic than you are at guessing it.

Step 5: The Feedback Loop

Skills are living documents. When the agent eventually messes up—and it will—don't get frustrated. Ask it: "Why did you fail? What information was missing from your skill file?" Then, tell it to update the skill file to prevent that error from happening again.
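If you want the feedback loop to leave a visible trail, one option is to keep a running list of corrections at the bottom of each skill file. The sketch below is one possible convention, not part of any tool: the "Lessons learned" heading and the `add_lesson` helper are hypothetical, and the code assumes that section is always the last one in the file.

```python
LESSONS_HEADING = "## Lessons learned"


def add_lesson(skill_text: str, lesson: str) -> str:
    """Return the skill text with one more lesson appended at the end.

    Assumes the lessons section, if present, is the final section of the file.
    """
    text = skill_text.rstrip("\n")
    if LESSONS_HEADING not in text:
        # First failure recorded for this skill: create the section.
        text += f"\n\n{LESSONS_HEADING}"
    return f"{text}\n- {lesson.strip()}\n"


skill = "# Influencer vetting\nCheck audience quality against brand standards.\n"
skill = add_lesson(skill, "Check the engagement-to-follower ratio before proceeding.")
print(skill)
```

In practice the agent would write the lesson itself after you ask what was missing; the helper only shows where that lesson could live.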

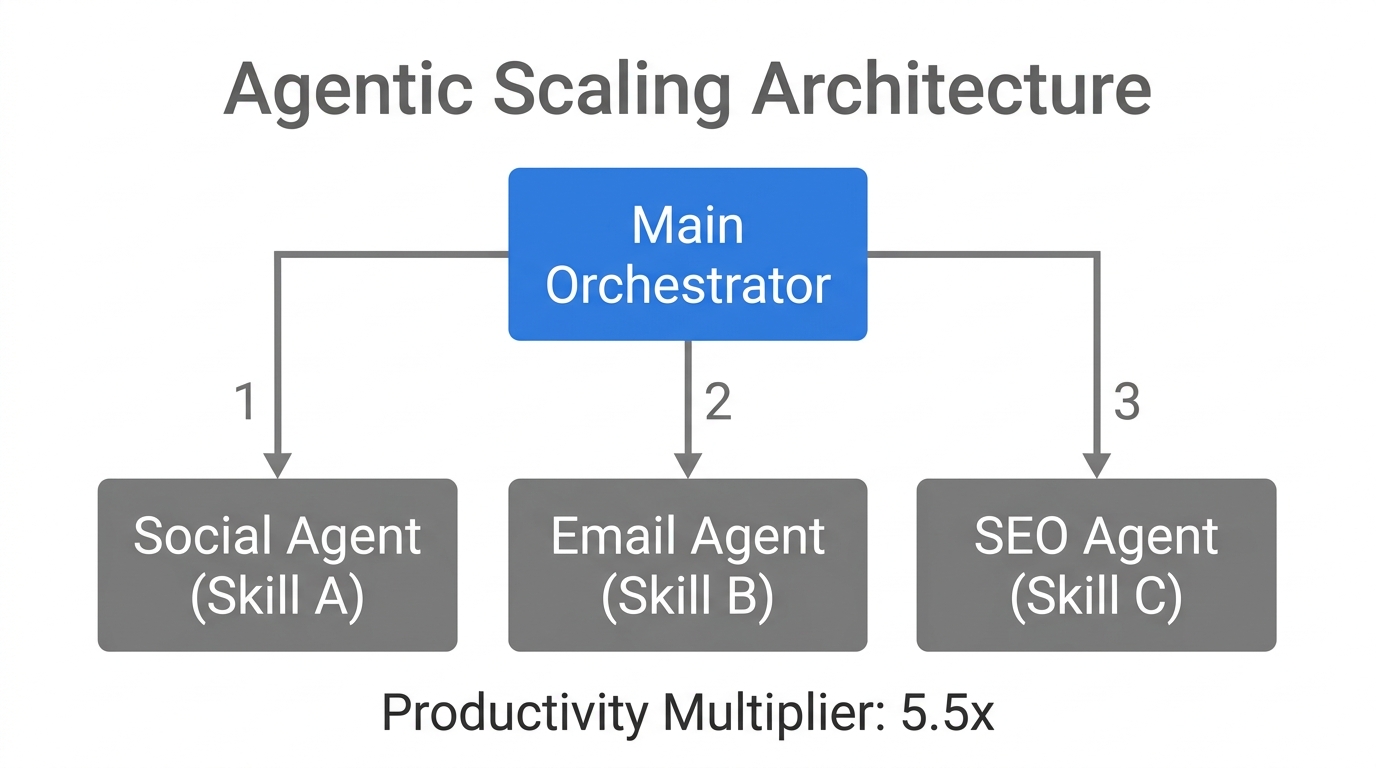

Scaling for Productivity: Managing a Fleet of Sub-Agents

When and how to deploy sub-agents to handle specific complex workflow steps.

In 2026, the goal isn't to have one giant "Marketing Manager" agent. It's to have a lead agent that manages specialized sub-agents. This is how you scale marketing productivity without overloading any single agent.

By compartmentalizing tasks into sub-agents—one for Google Analytics deep-dives, one for contract review, and one for influencer outreach via Stormy AI—you keep each agent's context window pristine. Each sub-agent only has the skills relevant to its specific domain.
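A minimal Python sketch of that compartmentalization follows. The keyword matching is a deliberately crude stand-in for a lead agent's judgment. The `SubAgent` class, the `route` function, the keyword lists, and the skill names `contract_checklist` and `outreach_templates` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SubAgent:
    name: str
    skills: tuple    # only the skills relevant to this agent's domain
    keywords: tuple  # crude routing hints; a real lead agent would reason instead


AGENTS = [
    SubAgent("analytics", ("performance_report_analyzer",), ("analytics", "attribution")),
    SubAgent("contract_review", ("contract_checklist",), ("contract",)),
    SubAgent("influencer_outreach", ("ugc_vetting", "outreach_templates"), ("outreach", "influencer")),
]


def route(task: str, agents: list) -> Optional[SubAgent]:
    """Pick the first sub-agent whose keywords appear in the task, else None."""
    lowered = task.lower()
    for agent in agents:
        if any(keyword in lowered for keyword in agent.keywords):
            return agent
    return None


chosen = route("Review the creator contract for usage rights", AGENTS)
if chosen is not None:
    print(chosen.name, "handles this with skills:", ", ".join(chosen.skills))
```

Because each `SubAgent` carries only its own short skill list, a contract question never pulls analytics or outreach instructions into context.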

"Stop scaling for what looks cool and start scaling for productivity. One agent with fifty skills is more dangerous than fifty agents with no direction."

For example, you might set up a Social Listening Agent that uses skills to filter mentions on X/Twitter and Reddit. When it identifies a trending topic, it triggers a Content Creation Agent that already has your brand voice skills loaded. This entire chain can be automated using Zapier or Make, allowing your human team to focus on high-level strategy rather than execution.

The Future of Marketing Operations is Modular

As we navigate the marketing landscape of 2026, the competitive advantage lies with those who can manage AI context with precision. Moving away from bloated, static instruction files toward dynamic Claude skills is the only way to maintain accuracy and control costs as you scale.

Start by identifying one workflow today. Walk through it manually with an agent, turn it into a skill, and watch your productivity skyrocket. And when you're ready to fill those workflows with the world's best creators, let Stormy AI handle the discovery so your custom skills can handle the results. The age of AI slop is over—the age of the specialized marketing engineer has arrived.