In 2024, the marketing world shifted from simple AI assistants that draft emails to autonomous agentic AI that executes entire campaigns. At the heart of this revolution is OpenClaw, an open-source framework that has skyrocketed from niche project to a GitHub titan with over 247,000 stars. While the promise of running ads on autopilot is alluring, it introduces a terrifying new risk: the erosion of brand equity. If an agent hallucinates a discount code or uses a tone that alienates your core audience, the damage to your reputation can be permanent. Protecting your brand in this new era requires a shift from passive observation to active marketing AI risk management.

The Soul of the Machine: Hardcoding Brand Voice with SOUL.md

One of the most frequent complaints about AI-generated content is that it sounds "generic." When an autonomous agent manages your social replies or ad copy without guardrails, it defaults to the median tone of its training data. To prevent this, OpenClaw users have pioneered the use of SOUL.md and AGENTS.md files. These are markdown documents stored within your ~/.openclaw/workspace/ directory that act as the "genetic code" for your agent’s personality.

A robust SOUL.md file doesn't just list adjectives; it provides negative constraints. For example, instead of just saying "be professional," your file should state: "Never use emojis in LinkedIn copy," or "Always refer to our product as a 'solution' rather than a 'tool'." This is the foundation of effective AI brand voice management.
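Negative constraints like these are easy to check mechanically before copy goes live. The sketch below is a minimal illustration, not part of OpenClaw: the `Rule` class, the `RULES` list, and the `violations` function are hypothetical names, and the two patterns simply encode the two example rules above.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern
    channels: frozenset  # empty set means the rule applies to every channel


# Rough emoji matcher covering the common pictograph and symbol blocks (assumption:
# good enough for a pre-publish check, not an exhaustive emoji definition).
EMOJI = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")

# Hypothetical rules mirroring the two examples in the text.
RULES = [
    Rule("no-emoji-on-linkedin", EMOJI, frozenset({"linkedin"})),
    Rule("say-solution-not-tool", re.compile(r"\btools?\b", re.IGNORECASE), frozenset()),
]


def violations(copy: str, channel: str) -> list[str]:
    """Return the names of every rule the draft copy breaks on this channel."""
    return [
        rule.name
        for rule in RULES
        if (not rule.channels or channel in rule.channels) and rule.pattern.search(copy)
    ]
```

A check like this complements the prose rules in SOUL.md rather than replacing them: the file steers the agent, and the check catches the drafts that slip through.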

"The goal of an autonomous agent isn't just to work faster; it's to work with the same nuance and brand-awareness as your most senior creative director."

By defining these constraints in a centralized file, you ensure that every "Skill" the agent executes—whether it’s the Ryze AI Performance Auditor or a custom creative analyst—adheres to the same stylistic standards. This prevents the "fragmented brand" syndrome where different channels seem to be speaking in different voices.

Treat your SOUL.md file as a living brand bible. Update it after every campaign review to refine the agent's understanding of your evolving brand identity.

Permission Management: Avoiding the 'Admin' Trap

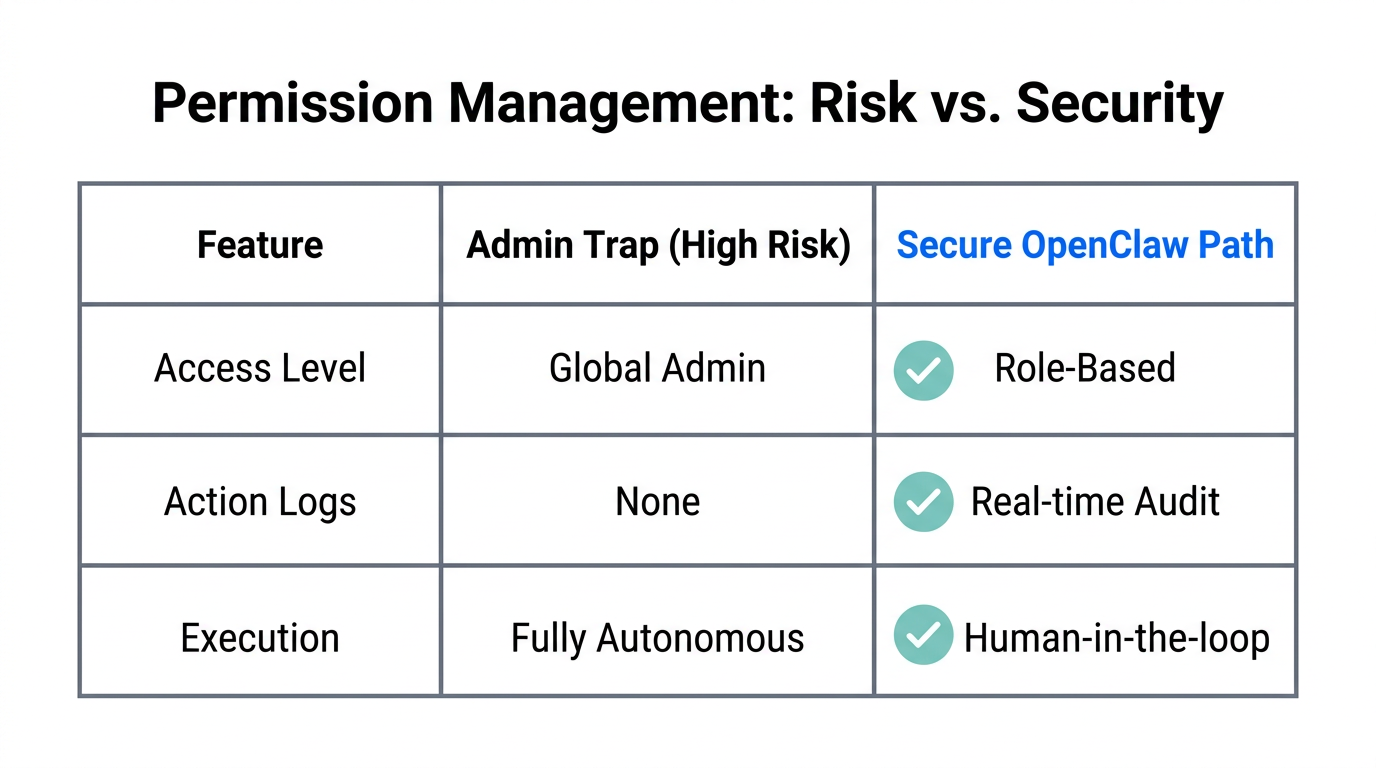

Perhaps the most dangerous mistake a growth team can make is giving an AI agent full "Admin" access to critical platforms like Meta Ads Manager or Google Ads. It is tempting to grant broad permissions to avoid API errors, but the security risk is severe. If an agent with Admin access gets stuck in a logic loop or falls victim to a prompt-injection attack, it could delete entire pixel configurations or burn through your daily budget in minutes.

When implementing OpenClaw security best practices, always follow the Principle of Least Privilege (PoLP). Create a dedicated user account for your agent with "Standard" or "Editor" permissions. This allows the agent to adjust bids and pause underperforming ads without giving it the power to change billing settings or add new users.

| Permission Level | Agent Capability | Risk Profile |
|---|---|---|
| Read-Only | Auditing, Reporting, Data Export | Low - Safest for initial deployment. |
| Standard/Editor | Adjusting Bids, Swapping Creative | Moderate - Required for autonomous optimization. |
| Admin | Full Account Control, User Management | Critical - Never recommended for AI agents. |

According to IAB research, 85% of marketing companies are moving toward agentic models, and those that fail to segment autonomous agent permissions will be the first to suffer catastrophic account errors. Protect your brand equity by building a "blast wall" between the agent and your core financial settings.
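The platform-side account permissions are the real enforcement point, but a second, local check can stop a misbehaving agent before a request is ever sent. The following is a minimal sketch of such a deny-by-default gate. The action names, the `Level` enum, and `is_allowed` are hypothetical and do not correspond to any Meta, Google, or OpenClaw API.

```python
from enum import IntEnum


class Level(IntEnum):
    READ_ONLY = 1
    EDITOR = 2
    ADMIN = 3


# Hypothetical action names, each mapped to the lowest level allowed to run it.
REQUIRED_LEVEL = {
    "export_report": Level.READ_ONLY,
    "adjust_bid": Level.EDITOR,
    "pause_ad": Level.EDITOR,
    "swap_creative": Level.EDITOR,
    "change_billing": Level.ADMIN,
    "add_user": Level.ADMIN,
}

# The agent's dedicated account is capped at Editor; it never holds Admin.
AGENT_LEVEL = Level.EDITOR


def is_allowed(action: str, level: Level = AGENT_LEVEL) -> bool:
    """Deny unknown actions outright; allow known ones only if the level is sufficient."""
    required = REQUIRED_LEVEL.get(action)
    return required is not None and required <= level
```

With this in place, `is_allowed("pause_ad")` passes while `is_allowed("change_billing")` and any unlisted action are refused, which mirrors the Editor row of the table above.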

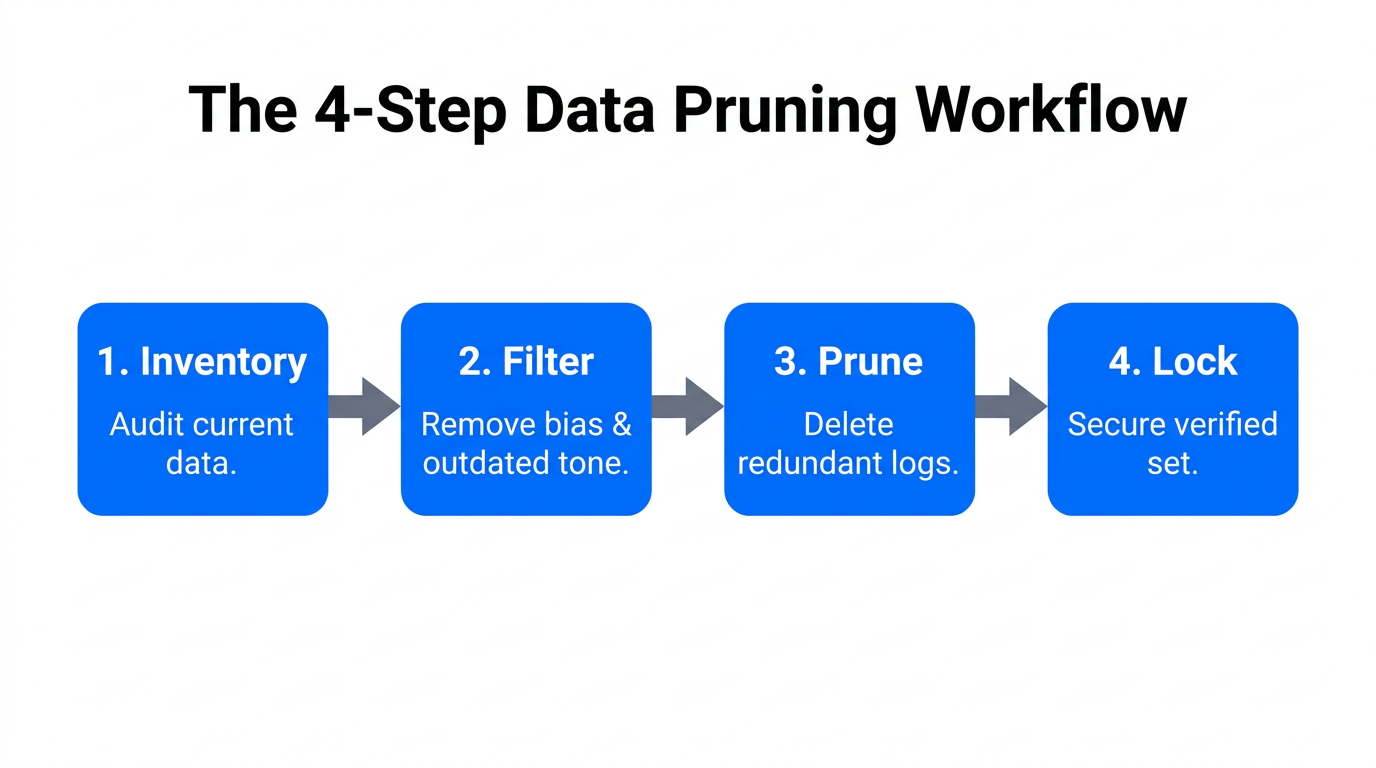

Preventing Data-Set Creep and Privacy Erosion

In an era where OpenClaw runs locally to protect data privacy, a new threat has emerged: Data-Set Creep. This occurs when an agent, tasked with optimizing ad performance, begins pulling data from sensitive internal fields it wasn't intended to touch—such as customer PII (Personally Identifiable Information) or internal margin data—to "improve" its decision-making.

To mitigate this, marketers should utilize the Ad Context Protocol (AdCP), a standardized way for agents to read ad structures without needing deep, unrestricted access to your entire database. Tools like Adspirer provide zero-config plugins that act as a filter, ensuring the agent only sees the metrics necessary for its specific "Skill."
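The filtering idea itself is simple to illustrate. The sketch below is not AdCP and not an Adspirer plugin; it only shows the general pattern of a per-skill field allow-list, with the skill name, field names, and `project_row` function all chosen as hypothetical examples.

```python
# Hypothetical per-skill allow-list: the only fields each skill may ever see.
ALLOWED_FIELDS = {
    "bid_optimizer": {"campaign_id", "impressions", "clicks", "spend", "conversions"},
}


def project_row(row: dict, skill: str) -> dict:
    """Return a copy of the row containing only the fields allowed for this skill.

    Skills without an allow-list entry receive an empty dict, so new skills
    see nothing until someone explicitly grants them fields.
    """
    allowed = ALLOWED_FIELDS.get(skill, set())
    return {key: value for key, value in row.items() if key in allowed}


raw = {
    "campaign_id": "c-101",
    "clicks": 420,
    "spend": 310.5,
    "customer_email": "jane@example.com",  # PII the agent should never receive
    "unit_margin": 0.42,                   # internal margin data
}
safe = project_row(raw, "bid_optimizer")
```

Here `safe` keeps `campaign_id`, `clicks`, and `spend`, while the email address and margin never reach the agent's context.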

"Privacy isn't just about where the data is stored; it's about who—or what—has the keys to the kingdom."

Limiting agent access to sensitive internal data fields isn't just about brand safety in AI advertising; it's a legal necessity. With the death of third-party cookies, first-party data carries more weight than ever, and keeping strict control over it within your own local OpenClaw environment is central to staying compliant with GDPR and CCPA while still leveraging AI-driven growth.

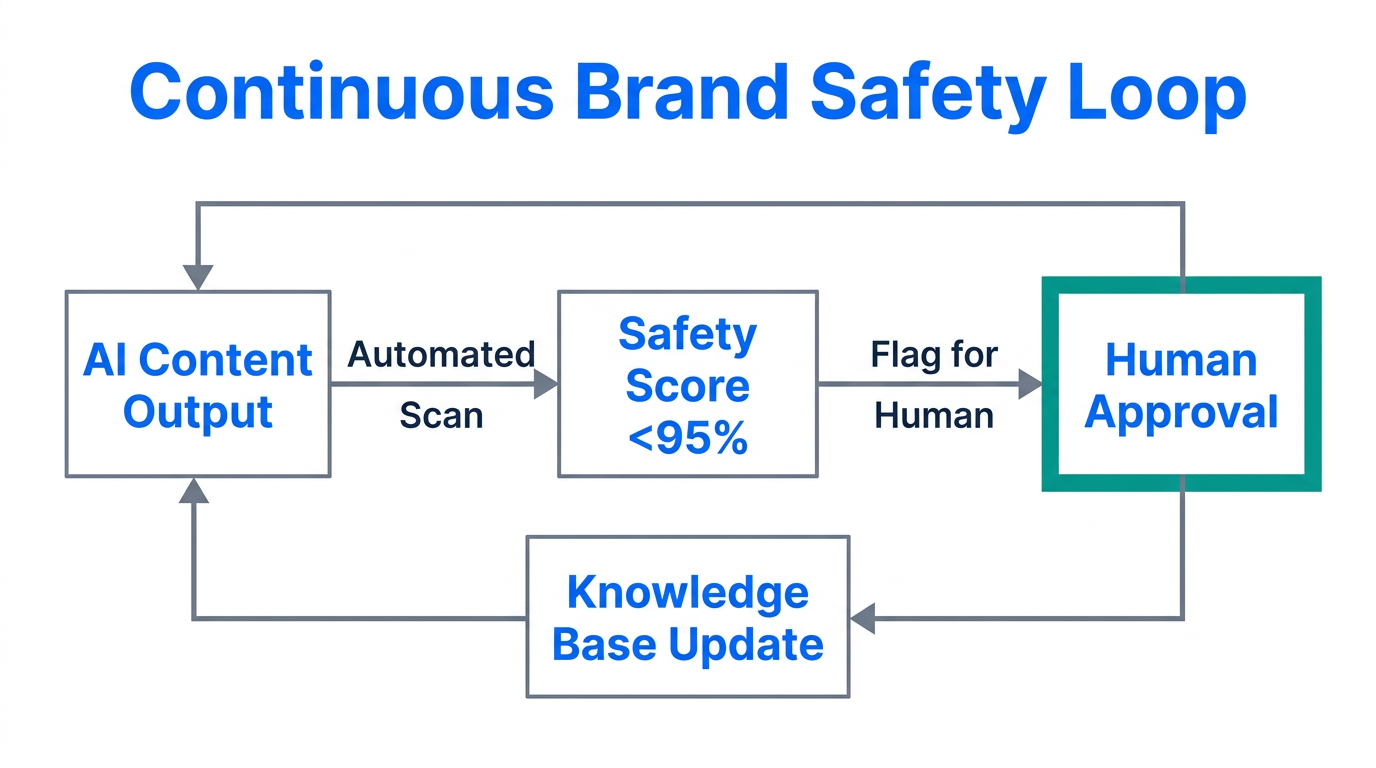

The 'Set and Forget' Trap: Establishing Monitoring Frameworks

The viral success of OpenClaw—which famously caused a global sell-out of Mac Mini units as marketers rushed to host their own agents—has led to a dangerous sense of complacency. Many agencies are treating these agents as "digital employees" that never need a performance review. This "set and forget" mentality is where brand equity goes to die.

AI can and will hallucinate. An agent might interpret a sudden market shift—like a holiday weekend—as a permanent trend and scale budgets incorrectly. To counter this, you must establish Human-in-the-Loop (HITL) gates. In your OpenClaw configuration, set the /think level to "High" for any budget-altering decisions and require a final confirmation via Slack or Telegram before the action is executed.
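The shape of a HITL gate can be sketched independently of any particular chat tool. In the example below, `approve` stands in for whatever Slack or Telegram confirmation you wire up, and `execute` stands in for the call that changes the budget. Both callables, along with `BudgetChange` and `apply_with_gate`, are hypothetical names rather than OpenClaw features.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class BudgetChange:
    campaign_id: str
    current_daily: float
    proposed_daily: float


def apply_with_gate(
    change: BudgetChange,
    approve: Callable[[BudgetChange], bool],
    execute: Callable[[BudgetChange], None],
) -> str:
    """Run a budget change only after a human approves it.

    Returns "noop" when nothing would change, "rejected" when the human
    declines, and "executed" when the change was approved and applied.
    """
    if change.proposed_daily == change.current_daily:
        return "noop"
    if not approve(change):
        return "rejected"
    execute(change)
    return "executed"


# Usage with stand-in callables: a reviewer who declines any increase over 50%.
applied = []
cautious = lambda c: c.proposed_daily <= c.current_daily * 1.5
spike = BudgetChange("c-101", current_daily=100.0, proposed_daily=400.0)
status = apply_with_gate(spike, cautious, applied.append)
```

The key design choice is that `execute` is unreachable without a truthy answer from `approve`, so a hallucinated holiday-weekend "trend" stalls at the gate instead of scaling spend.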

For brands working with influencers and UGC creators, the risk is even higher. An AI can find a thousand creators in seconds, but speed says nothing about quality. Platforms like Stormy AI can help maintain brand equity by vetting the human creators your agents might interact with, checking that they have real engagement and fit your brand's quality standards. That vetting is a critical step in the marketing AI risk management workflow.

Conclusion: Building an Agentic Strategy with Integrity

The transition from "chat" to "execution" is the defining trend of the 2025 marketing landscape. As the AI-in-marketing sector continues its march toward a $217 billion valuation by 2034, the winners won't just be the ones with the fastest agents. They will be the ones who integrate brand safety into the very architecture of their AI workspace.

By leveraging SOUL.md for tone, enforcing strict autonomous agent permissions, and refusing the temptation of a "set and forget" workflow, you can harness the power of OpenClaw without sacrificing your brand’s soul. The future of advertising is automated, but the responsibility for brand equity remains human. Start by deploying your agent on a secure VPS from a provider like DigitalOcean, then install your ad-specific skills, and always keep a hand on the kill switch.