In 2026, the digital landscape has shifted from the traditional "ten blue links" to a dynamic ecosystem of AI-driven answers. While Google still dominates discovery, a massive 30% of product research has migrated toward AI agents and voice assistants like ChatGPT and Perplexity, according to AIOSEO. For e-commerce brands, the challenge is no longer just ranking #1; it is becoming the trusted, cited source that these AI models use to build their responses. This is the era of Generative Engine Optimization (GEO), where programmatic SEO (pSEO) tools are being repurposed to feed the massive hunger of Large Language Models (LLMs) for structured, high-utility data.

Successful e-commerce scaling this year requires a hybrid approach. Brands must combine the scale of programmatic SEO tools with the precision of AI-ready metadata. By automating the creation of thousands of high-quality pages, brands are reporting organic traffic gains of 30%–80% and conversion rate increases of 25%–35%. However, the stakes are higher than ever: nearly 1 in 3 pSEO implementations hit a "traffic cliff" when their pages fail to provide unique value, as noted by Passionfruit.

Understanding GEO: Why Structured Data is the New SEO Currency

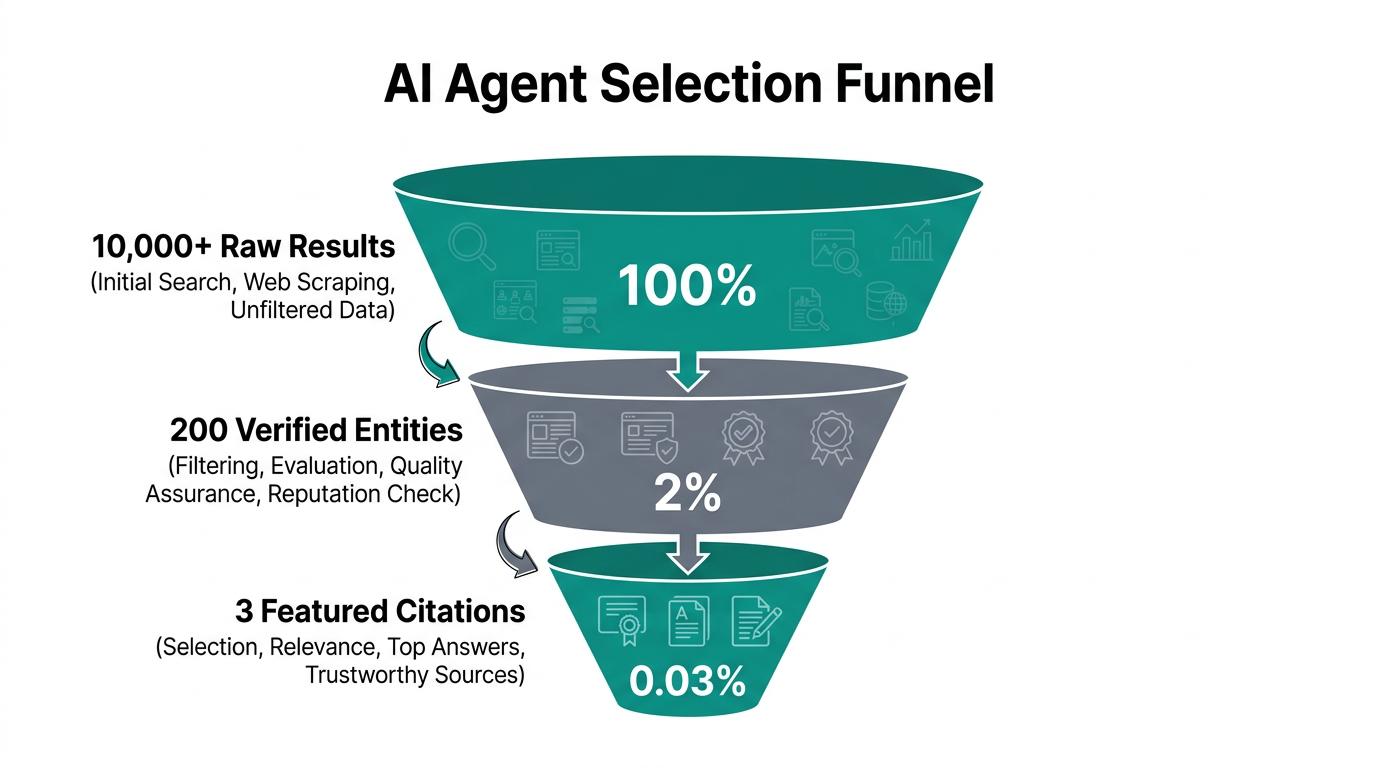

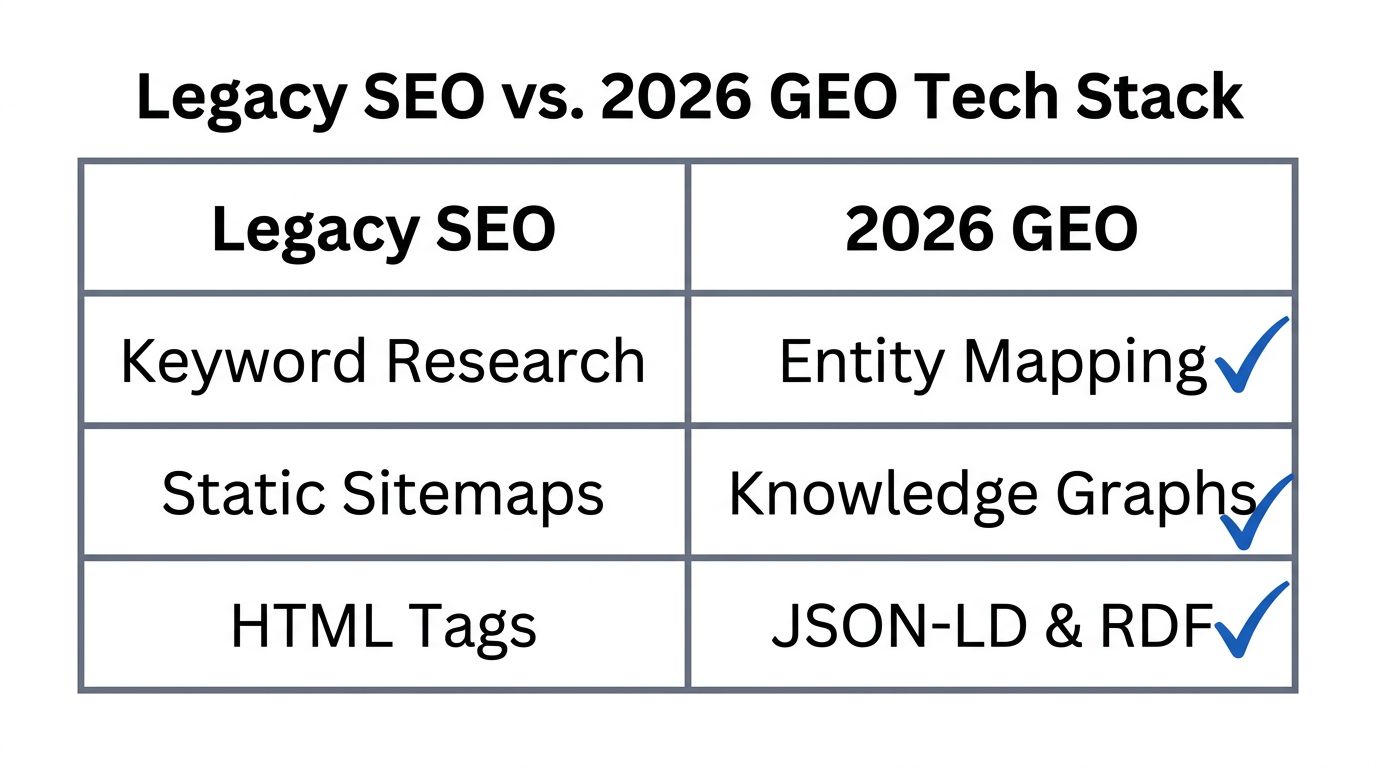

Generative Engine Optimization is the practice of optimizing content specifically for AI Overviews (formerly SGE) and standalone AI agents. Unlike traditional search engines that crawl and index keywords, AI agents prioritize entities and relationships. If your e-commerce site exists as a collection of unstructured blog posts, an AI agent may ignore it. If your site is a web of interconnected data points, you become the definitive source.

The currency of this new era is structured data. When you use pSEO tools to generate thousands of product or category pages, every single page must carry robust JSON-LD markup built on the Schema.org vocabulary. This allows LLMs to read your product's price, availability, material, and customer sentiment directly, without having to "guess" them from natural-language copy.

"2026 is the year where search engines stop looking for keywords and start looking for answers backed by verifiable data sets."

Implementing Robust JSON-LD Schema Programmatically

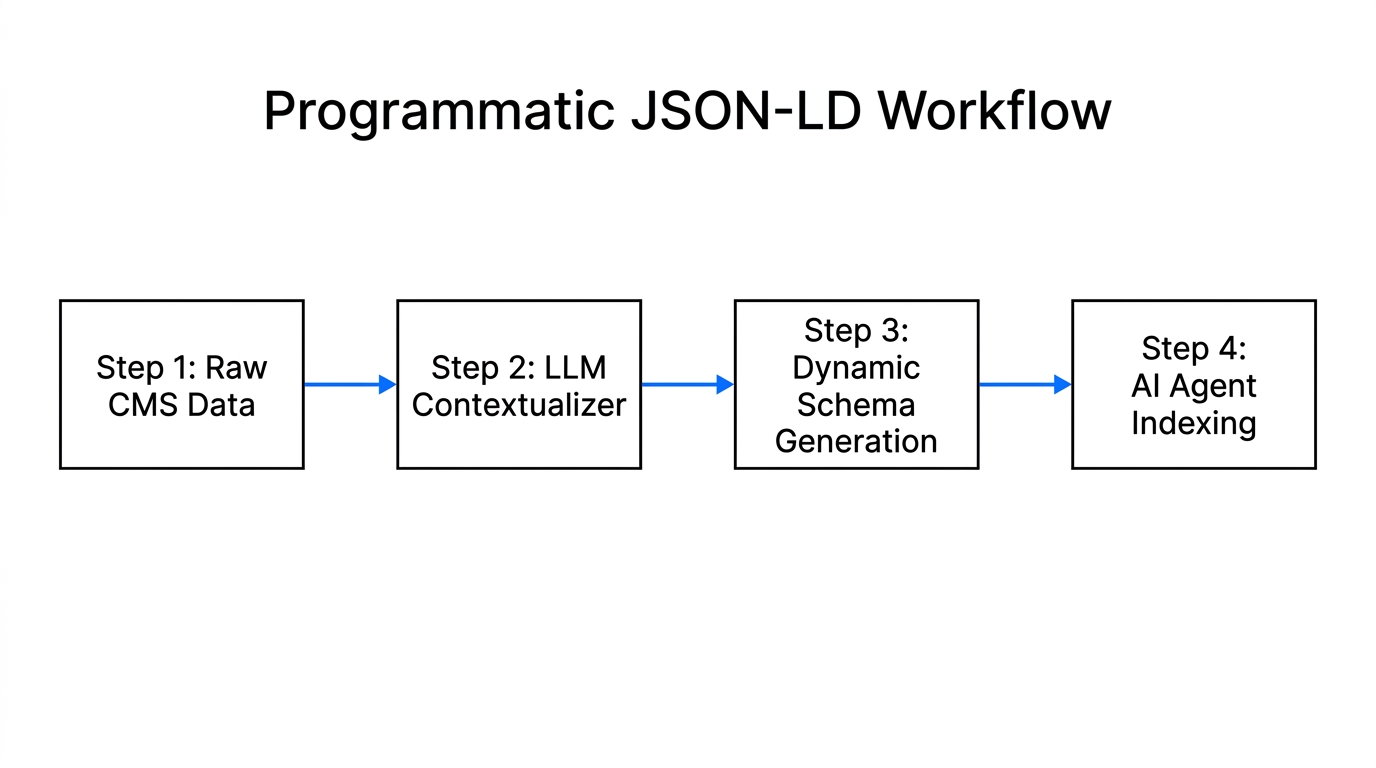

Scaling a handful of pages with structured data is easy; scaling 50,000 pages requires an automated architecture. In 2026, the Product, Offer, and Review schemas are non-negotiable for AI Overview compatibility. Your pSEO engine should be directly connected to your Product Information Management (PIM) system or inventory database.

Step 1: Map Your Data to Schema Fields

Use tools like Airtable to store your relational data. Ensure every product entry includes specific fields for brand, sku, price, priceCurrency, and aggregateRating. When your programmatic engine (like SEOmatic) generates the HTML, it should simultaneously inject a unique JSON-LD script into the <head> of the page.
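As a rough illustration of this mapping step, the sketch below turns one product record into a Schema.org `Product` object and wraps it in a JSON-LD script tag. The record layout, the function names `build_product_jsonld` and `render_jsonld_script`, and the nested rating keys are assumptions made for the example; they are not the export format of Airtable, SEOmatic, or any particular PIM.

```python
import json


def build_product_jsonld(record: dict) -> dict:
    """Map one product record (hypothetical field layout) to a schema.org Product."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": record["name"],
        "sku": record["sku"],
        "brand": {"@type": "Brand", "name": record["brand"]},
        "offers": {
            "@type": "Offer",
            # Format the price with two decimals so every page is consistent.
            "price": f'{record["price"]:.2f}',
            "priceCurrency": record["priceCurrency"],
        },
    }
    rating = record.get("aggregateRating")
    if rating:  # Only emit rating markup when real rating data exists.
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating["ratingValue"],
            "reviewCount": rating["reviewCount"],
        }
    return data


def render_jsonld_script(data: dict) -> str:
    """Serialize the object into a script tag for the page <head>."""
    # Escape "</" so a product name can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


example_record = {
    "name": "ErgoFlex Chair",  # illustrative product, not real data
    "sku": "EF-100",
    "brand": "ErgoFlex",
    "price": 129.0,
    "priceCurrency": "USD",
    "aggregateRating": {"ratingValue": 4.6, "reviewCount": 212},
}
print(render_jsonld_script(build_product_jsonld(example_record)))
```

Keeping the mapping in one function means a schema change is made once and then propagates to every generated page on the next build.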

Step 2: Handle Out-of-Stock (OOS) Data Dynamically

AI agents hate recommending products that aren't available. Your pSEO strategy must account for inventory shifts. If a product is temporarily out of stock, use your pSEO template to remove the 'Offer' schema but keep the page live for 'Product' info. For permanent removals, follow Yoast’s recommendation: use a 301 redirect to the most relevant category page to preserve backlink equity and prevent "soft 404" errors that drain crawl budget.
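The inventory rules above could be expressed as a small decision function. This is a sketch under stated assumptions: the status labels (`in_stock`, `out_of_stock`, `discontinued`), the function name `resolve_page`, and the shape of the returned dictionary are all hypothetical, and a real setup would read the status from your inventory system.

```python
from copy import deepcopy


def resolve_page(product_jsonld: dict, status: str, category_url: str) -> dict:
    """Decide how a programmatic product page should respond for a given stock status.

    `status` is a hypothetical label: "in_stock", "out_of_stock", or "discontinued".
    """
    if status == "discontinued":
        # Permanent removal: 301 to the most relevant category page.
        return {"action": "redirect", "status": 301, "location": category_url}

    page_data = deepcopy(product_jsonld)  # never mutate the shared template data
    if status == "out_of_stock":
        # Temporary gap: keep the Product entity, drop the Offer block.
        page_data.pop("offers", None)
    return {"action": "serve", "status": 200, "jsonld": page_data}


chair = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "ErgoFlex Chair",  # illustrative product
    "offers": {"@type": "Offer", "price": "129.00", "priceCurrency": "USD"},
}
print(resolve_page(chair, "out_of_stock", "/office-chairs"))
print(resolve_page(chair, "discontinued", "/office-chairs"))
```

An alternative some teams prefer is to keep the `Offer` and set its `availability` to `https://schema.org/OutOfStock`; which option suits your catalog is a judgment call, and the function above only mirrors the approach described in this step.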

| Schema Type | Why AI Agents Love It | pSEO Application |
|---|---|---|
| Product | Defines the entity (Name, Brand, SKU). | Applied to all 10k+ unique SKU pages. |
| Offer | Provides real-time price and availability. | Dynamic syncing with Stripe or Shopify. |
| Review | Supplies "social proof" for AI summaries. | Aggregating data from Trustpilot or Reddit. |

Moving from Keywords to Entity-Based Content

Traditional SEO focused on `[Product Name] + [Best Price]`. GEO focuses on the context of how that product solves a problem. Growth advisor Kevin Indig suggests that 2026 is about Automation in Insights. This means using AI to mine forums like Reddit and Quora to understand the specific concerns users have, then feeding those concerns into your programmatic templates.

For example, if your e-commerce brand sells ergonomic office chairs, your AI agent search strategy shouldn't just target "buy ergonomic chair." Instead, build programmatic pages targeting [Job Title] + [Back Pain Type] + [Chair Feature] (e.g., "Software Engineers with Lower Back Pain needing Lumbar Support").
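One way to picture the [Job Title] + [Back Pain Type] + [Chair Feature] pattern is as a Cartesian product over three attribute lists. The sketch below is illustrative only: the attribute values, the title template, and the helper names `slugify` and `build_page_matrix` are assumptions, and real lists would come from your forum research rather than being hard-coded.

```python
import re
from itertools import product


def slugify(text: str) -> str:
    """Lowercase the text and collapse anything non-alphanumeric into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_page_matrix(job_titles, pain_types, features):
    """Return one page definition per (job, pain, feature) combination."""
    pages = []
    for job, pain, feature in product(job_titles, pain_types, features):
        pages.append(
            {
                "title": f"Ergonomic Chairs for {job} with {pain}: {feature}",
                "slug": slugify(f"{job} {pain} {feature}"),
            }
        )
    return pages


pages = build_page_matrix(
    ["Software Engineers", "Graphic Designers"],  # illustrative values
    ["Lower Back Pain", "Neck Pain"],
    ["Lumbar Support", "Adjustable Headrest"],
)
print(len(pages))  # 2 * 2 * 2 = 8 combinations
print(pages[0]["slug"])
```

The matrix grows multiplicatively, so it is worth filtering out combinations with no real search demand or no distinct content before generating any pages.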

By creating high-utility, narrow-intent pages, you provide the LLM with a specific answer to a specific user prompt. To find the right influencers to validate these claims and build authority, many brands use Stormy AI to discover creators who can provide the "human voice" that AI-driven search results now prioritize.

"The most successful programmatic sites in 2026 integrate Reddit and forum insights directly into their templates to provide the 'Human-in-the-Loop' validation LLMs crave."

Case Study: How Zapier Dominates the Long Tail of AI Research

Zapier is the gold standard for programmatic architecture. They didn't just write 25,000 articles; they built a system that generates a landing page for every possible integration pair. If you search for "How to connect Slack to Trello," Zapier’s programmatic page is the answer.

In 2026, when a user asks an AI agent, "What are the best automation tools for my marketing agency?", the AI looks for high-utility datasets. Zapier’s massive web of pages acts as a structured graph of "Product A + Product B" capabilities. E-commerce brands can replicate this by building comparison pages, such as [Your Brand] vs [Competitor] or [Product A] vs [Product B], each scoped to a specific use case.
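A comparison-page set in the "[Product A] vs [Product B]" style can be sketched with unordered pairs, so that "A vs B" and "B vs A" never both exist. The product names and the helper names `slugify` and `comparison_pages` are hypothetical, and this is not a description of how Zapier builds its own pages.

```python
import re
from itertools import combinations


def slugify(text: str) -> str:
    """Lowercase the text and collapse anything non-alphanumeric into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def comparison_pages(products):
    """Build one page per unordered product pair, in a stable alphabetical order."""
    pages = []
    # sorted(set(...)) removes duplicate names and fixes which product comes first.
    for a, b in combinations(sorted(set(products)), 2):
        pages.append(
            {"title": f"{a} vs {b}", "slug": f"{slugify(a)}-vs-{slugify(b)}"}
        )
    return pages


catalog = ["ErgoFlex Chair", "PosturePro Chair", "AeroMesh Chair"]  # illustrative
for page in comparison_pages(catalog):
    print(page["slug"])
```

Using unordered pairs keeps the page count at n(n-1)/2 for n products instead of n(n-1), which also avoids two pages competing for the same comparison query.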

Expert Eli Schwartz argues that SEO should be built into the product. Just like Zapier, your e-commerce store should treat its category and product pages as dynamic data points rather than static content. This ensures that when a generative engine scans the web for a recommendation, your site is the most comprehensive and structured repository of information available.

Strategies for Becoming the 'Cited Source' in SGE

To be the source that ChatGPT or Perplexity links to, you must provide unique, non-commoditized data. If your programmatic pages simply scrape manufacturer descriptions, you will be ignored. As Emina Demiri-Watson notes, automation handles the scale, but humans (or advanced AI editors) must provide the last 20% of quality to avoid "slop" content.

- Dynamic Image Generation: Use tools like Orshot to generate unique product visuals for every programmatic page. Unique images help distinguish each page from templated duplicates, which matters for Google’s helpful content filters.

- Proprietary Benchmarks: If you sell fitness gear, include proprietary testing data (e.g., "Our testing shows this mat has 15% better grip than the average") in your programmatic templates. LLMs love quoting specific numbers.

- Local Authority: For omni-channel brands, build programmatic pages for [Product] in [City] to capture high-intent local foot traffic, a strategy successfully used by Flyhomes to scale from 10k to 425k pages in mere months.

The 2026 Programmatic SEO Tech Stack

To implement GEO at scale, your marketing stack must be agile and data-first. You need to move beyond basic WordPress installs into a more modular setup.

| Category | Recommended Tool | Integration Use Case |
|---|---|---|
| Data Enrichment | Clay | Pulling unique product attributes from across the web. |
| Keyword Discovery | Ahrefs / Semrush | Identifying "Content Gaps" where AI agents lack answers. |
| Page Generation | Webflow + Whalesync | Syncing Airtable data to a high-performance visual CMS. |
| AI Writing | Byword.ai | Generating human-like, SEO-optimized copy at scale. |
| Tracking | Stormy AI | Monitoring creator engagement and post-tracking for authority. |

For Shopify users, ConvertMate remains a top choice for AI-driven pSEO assistance, while enterprise retailers often opt for Verbolia to create high-speed "vPages" outside their main infrastructure to maintain Core Web Vitals.

Common Mistakes: Preventing the "Traffic Cliff"

As you scale, quality control becomes your biggest bottleneck. Passionfruit warns that 33% of pSEO sites lose their traffic within 18 months, often because of avoidable errors like the ones below.

- Keyword Cannibalization: Do not create pages for "Blue Running Shoes" and "Running Shoes in Blue." Programmatic rules must be strict to prevent duplicate intent.

- Orphan Pages: Every programmatic page must have dynamic internal linking. If an AI agent cannot find a path from your home page to your long-tail page through logical navigation, it may treat the page as low-value.

- Ignoring Page Speed: Large-scale sites often suffer from bloat. According to Scopic Studios, site speed is a critical ranking factor that influences how frequently an LLM's crawler will visit your site to update its internal "knowledge."
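The first two mistakes in this list lend themselves to automated pre-publish checks. The sketch below is a simplified illustration: it treats two keywords as the same intent when they share the same words after stopword removal, and it finds orphans with a breadth-first walk of the internal link graph. The stopword list, the function names, and the link-map format are assumptions, and a production check would likely need stemming and real crawl data.

```python
from collections import defaultdict, deque

# Small illustrative stopword list; a real one would be longer.
STOPWORDS = {"in", "for", "the", "a", "an", "of", "with"}


def intent_key(keyword: str) -> frozenset:
    """Reduce a keyword to its order-independent set of meaningful words."""
    return frozenset(w for w in keyword.lower().split() if w not in STOPWORDS)


def find_cannibalized(keywords):
    """Group keywords that collapse to the same intent key."""
    groups = defaultdict(list)
    for kw in keywords:
        groups[intent_key(kw)].append(kw)
    return [group for group in groups.values() if len(group) > 1]


def find_orphans(links: dict, home: str) -> set:
    """Return pages that cannot be reached from `home` by following internal links.

    `links` maps each page URL to the list of URLs it links to.
    """
    seen = {home}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return all_pages - seen


print(find_cannibalized(["Blue Running Shoes", "Running Shoes in Blue", "Trail Shoes"]))
site = {"/": ["/shoes"], "/shoes": ["/shoes/blue"], "/shoes/red": []}
print(find_orphans(site, "/"))
```

Running checks like these before each publish batch is cheaper than discovering duplicate or unreachable pages after they have been indexed.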

Conclusion: Winning the AI Agent Search Strategy

In 2026, the brands that dominate are those that stop viewing SEO as a list of keywords and start viewing it as a knowledge graph. By leveraging programmatic SEO tools, e-commerce stores can build the massive, structured architecture needed to feed generative engines.

Remember: the AI agent is your new customer. If you provide it with perfectly structured data, unique human insights, and high-utility content, it will reward you with citations that drive traffic long after the traditional SERP has changed. Start by connecting your inventory to a pSEO engine today, and ensure your brand is the first name cited by the AI assistants of tomorrow.