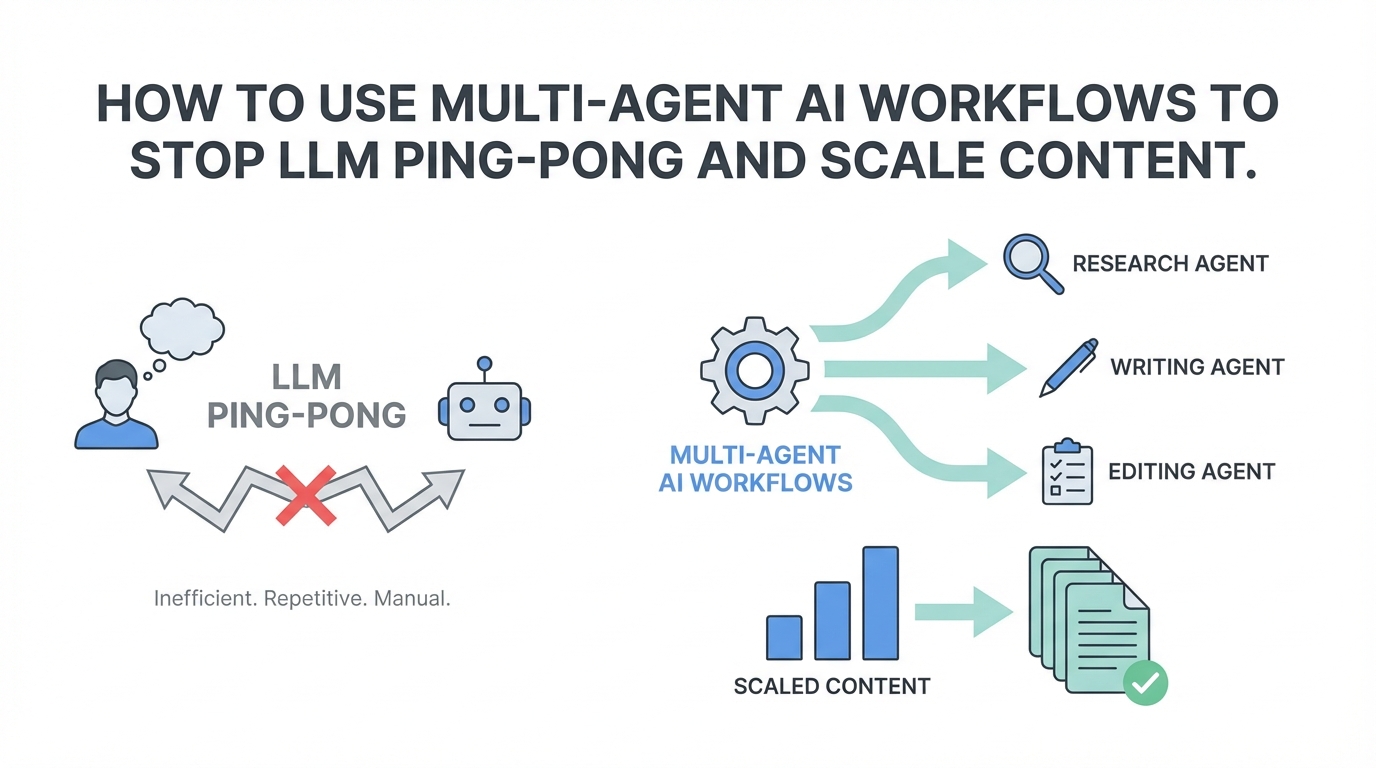

If you are a marketer or content creator today, you likely suffer from a productivity-killing habit I call LLM Ping-Pong. It starts with a prompt in ChatGPT. You aren’t quite happy with the tone, so you copy the prompt, open a new tab for Claude 3.5 Sonnet, and try again. Then, just to be sure, you head over to Perplexity or Gemini to see if they can offer a more factual perspective. By the time you’ve compared three different outputs, you’ve spent twenty minutes context-switching instead of actually creating. The solution to this friction isn’t working harder; it’s adopting a multi-agent AI workflow that sends your prompt to every top-tier model simultaneously.

The Death of LLM Ping-Pong: Why Context Switching Kills Productivity

In the early days of generative AI, we were content with any output that sounded human. Today, the bar for quality is significantly higher. High-performing content—whether it is a YouTube script, a LinkedIn thought-leadership post, or a fundraising deck—requires a blend of creativity, factual accuracy, and specific stylistic nuances. Single-model workflows often fall short in at least one of these areas.

When you engage in LLM Ping-Pong, you are essentially acting as a manual router between different intelligence engines. This process is not just slow; it’s cognitively taxing. Research into digital productivity suggests that even brief mental blocks created by shifting between tasks can cost as much as 40% of someone's productive time. By moving toward a multi-agent workflow, you eliminate the manual labor of comparison. Instead of you going to the models, the models come to you, allowing you to focus on the critical task of curation rather than the tedious task of prompting.

Understanding the Mixture of Agents (MoA) Architecture

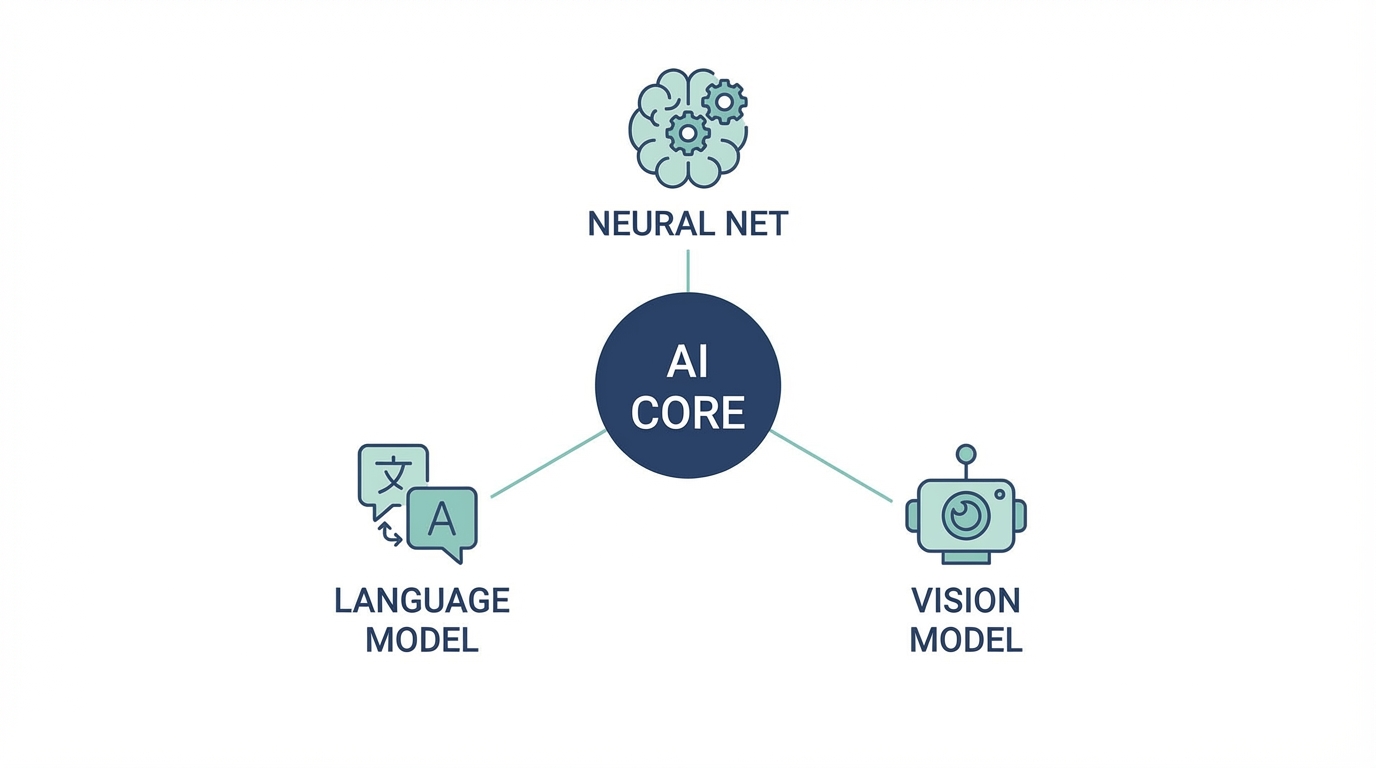

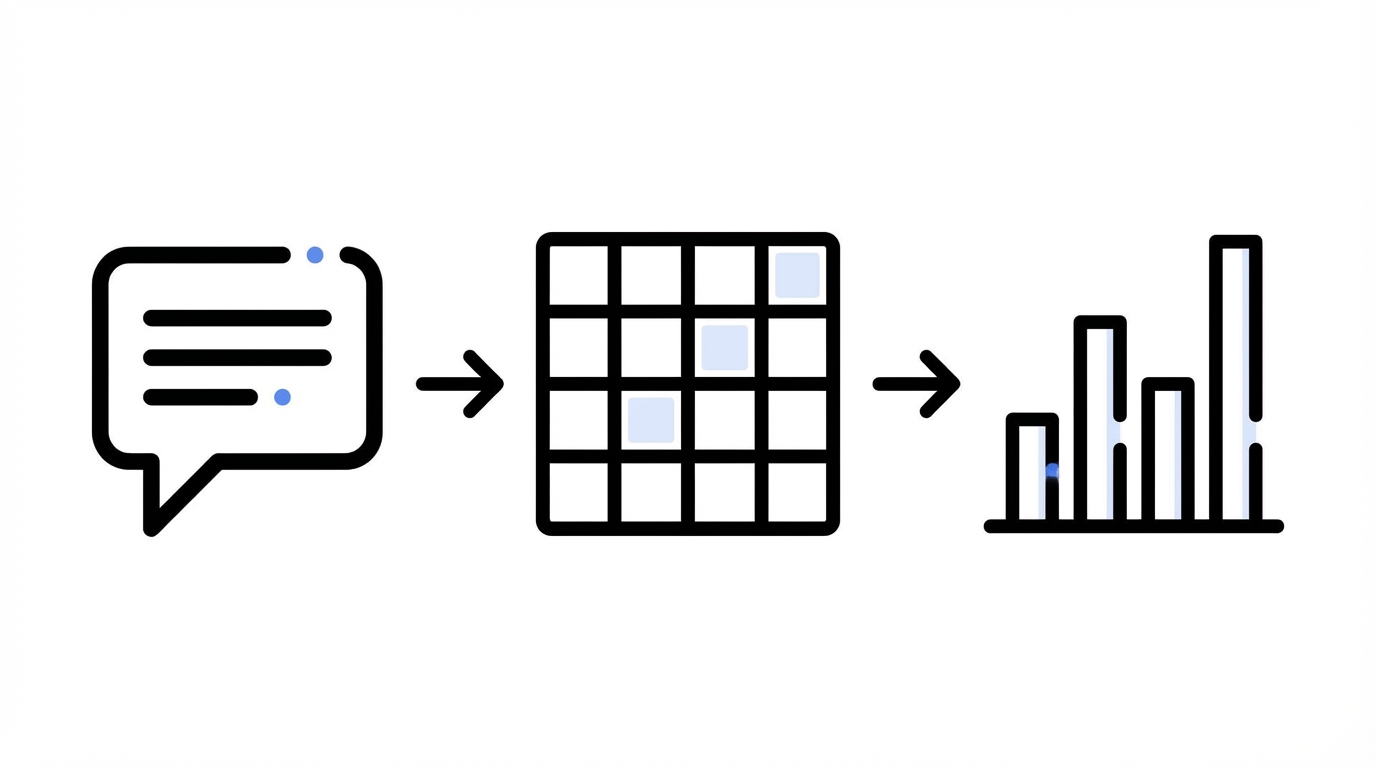

The technical term for this strategy is Mixture of Agents (MoA). Unlike a standard LLM, which relies on a single neural network's weights, a multi-agent system adds a reflection layer on top of several models. When you submit a prompt, the system sends it to multiple models (typically GPT-4, Claude 3.5 Sonnet, and Gemini 1.5 Pro) all at once.

The magic happens in the reflection phase. An orchestrator agent analyzes the outputs from all three models, identifies the strengths of each, and synthesizes a "best-of-all-worlds" response. For example, if GPT-4 provides a great structure but Claude offers a more conversational tone, the MoA system can remix them into a final version that is structurally sound and engaging. This content automation strategy ensures you aren't settling for the quirks of a single model.
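The fan-out and reflection steps can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product implements it: `call_model` is a hypothetical stand-in that returns canned text instead of calling a real API, and the model names and the wording of the reflection prompt are assumptions made for the example.

```python
import asyncio


async def call_model(name: str, prompt: str) -> str:
    """Hypothetical stand-in for a real model API call; returns canned text."""
    await asyncio.sleep(0)  # placeholder for network latency
    return f"[{name}] draft for: {prompt}"


async def fan_out(prompt: str, models: list[str]) -> dict[str, str]:
    """Send the same prompt to every model concurrently and collect the drafts."""
    drafts = await asyncio.gather(*(call_model(m, prompt) for m in models))
    return dict(zip(models, drafts))


def build_reflection_prompt(prompt: str, drafts: dict[str, str]) -> str:
    """Assemble the text an orchestrator model would receive (assumed wording)."""
    sections = "\n\n".join(f"### {name}\n{text}" for name, text in drafts.items())
    return (
        f"Original request: {prompt}\n\n"
        f"Candidate drafts:\n\n{sections}\n\n"
        "Identify the strengths of each draft, then write one combined answer."
    )


async def mixture_of_agents(
    prompt: str, models: list[str], aggregator: str = "orchestrator"
) -> tuple[dict[str, str], str]:
    """Run the fan-out phase, then a single reflection pass over all drafts."""
    drafts = await fan_out(prompt, models)
    final = await call_model(aggregator, build_reflection_prompt(prompt, drafts))
    return drafts, final


if __name__ == "__main__":
    drafts, final = asyncio.run(
        mixture_of_agents("Write a YouTube intro", ["gpt", "claude", "gemini"])
    )
    print(final)
```

In a real setup, `call_model` would wrap each provider's client library, and the aggregator would be one of the stronger models rather than a stub.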

Step-by-Step Playbook: Setting Up a Multi-Agent Prompt for Text

To get the most out of a multi-agent system like GenSpark or similar AI writing tools, you need to follow a structured approach. Here is how to set up a high-performance workflow for a YouTube intro or blog post:

Step 1: Define the Persona and Context

Don't just ask for a script. Provide your profile. Tools that allow you to link your X (Twitter) profile or website can scrape your existing voice, ensuring the AI knows exactly who is speaking. As Greg Isenberg often notes in his breakdowns of Late Checkout workflows, giving the AI a reference point for your specific personality is the difference between generic and viral content.

Step 2: Execute the Multi-Model Ping

Enter your prompt once. A high-quality multi-agent system will show you the progress as it pings GPT, Claude, and Gemini. Watching the reflection agent work is key; it should tell you why it chose certain elements from each model to build the final response.

Step 3: The Remix and Refine

Once the multi-agent system provides the synthesized result, don't stop there. Use the remix feature to tweak the output. If the intro is too long, ask the agent to "keep the conversational tone of the Claude version but use the punchy hook from GPT."
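The three steps above can be captured as reusable prompt templates. The sketch below is only illustrative: the `Persona` fields, the function names, and the prompt wording are assumptions for this example and do not correspond to any specific tool's interface.

```python
from dataclasses import dataclass


@dataclass
class Persona:
    """Hypothetical container for the voice details from Step 1."""
    name: str
    voice: str      # e.g. "conversational, direct"
    audience: str   # e.g. "early-stage founders"


def build_prompt(persona: Persona, task: str) -> str:
    """Steps 1 and 2: one prompt carrying persona, context, and the task."""
    return (
        f"You are writing as {persona.name}.\n"
        f"Voice: {persona.voice}\n"
        f"Audience: {persona.audience}\n"
        f"Task: {task}"
    )


def build_remix(keep: dict[str, str], constraint: str = "") -> str:
    """Step 3: `keep` maps a model name to the element to carry over from its draft."""
    parts = [f"keep the {element} from the {model} version"
             for model, element in keep.items()]
    instruction = "Remix the drafts: " + "; ".join(parts) + "."
    if constraint:
        instruction += " " + constraint
    return instruction


if __name__ == "__main__":
    creator = Persona("Alex", "conversational, direct", "early-stage founders")
    print(build_prompt(creator, "Write a 30-second YouTube intro."))
    print(build_remix(
        {"Claude": "conversational tone", "GPT": "punchy hook"},
        "Shorten the intro.",
    ))
```

Keeping the persona separate from the task means the same voice definition can be reused across scripts, posts, and decks.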

Scaling Visuals: Comparing Flux, Nano Banana, and Veo 3

The multi-agent revolution isn't limited to text. The same AI model comparison logic applies to image and video generation. In a standard workflow, you might spend hours in Midjourney trying to get a face right. In a multi-agent visual workflow, you can compare different engines in one interface.

For instance, when generating a specific brand asset, you can run the prompt through Flux, Nano Banana, and ByteDance Seedream simultaneously. Flux is often superior for hyper-realistic textures, while Nano Banana might excel at stylized, high-contrast marketing images. Seeing them side by side allows you to pick the best base for your video content.

When moving from image to video, Veo 3 (Google's video model, available through Gemini) has recently emerged as a leader for generating cinematic motion with integrated audio. By starting with a multi-agent image generation, you can select the most accurate frame and then use a video agent to animate it, potentially saving thousands of dollars in traditional production costs.

Scaling Outreach: Connecting Workflows to Influencer Management

Once you have used your multi-agent system to generate the perfect scripts and visual assets, the next bottleneck is distribution and creator management. If you are running a UGC (User-Generated Content) campaign for a mobile app, you need a way to take those AI-generated briefs and get them into the hands of the right influencers.

This is where tools like Stormy AI become essential. While your multi-agent setup handles the creative heavy lifting, Stormy AI allows you to discover creators across TikTok and YouTube who match your niche. You can take the conversational scripts generated by your mixture of agents and use Stormy's AI-personalized outreach to contact hundreds of creators at once. Managing these relationships inside a creator CRM ensures that your scaled-up content production actually reaches the right audience without you drowning in manual emails.

Beyond Chat: Automating Sheets, Slides, and Lead Magnets

A true content automation strategy extends into the boring-but-necessary parts of business: data and presentations. Multi-agent systems are now being integrated into spreadsheet and slide environments, allowing for "agentic" workflows that do more than just summarize text.

- AI Sheets: You can prompt an agent to "find 50 YouTubers in the AI space with over 100k subscribers and find their contact emails." This replaces manual research tools that often cost hundreds of dollars, like TryShortcut, with a more lightweight, LLM-driven experience.

- AI Slides: Instead of staring at a blank PowerPoint, you can feed a startup idea from IdeaBrowser into a multi-agent slide generator. It won't just design the slides; it will perform competitive analysis and financial growth projections by querying different models for data and design.

- Lead Magnets: These decks can be instantly exported and used as lead magnets to convert social media followers into email subscribers using platforms like Kit.

Cost-Benefit Analysis: Unified vs. Individual Subscriptions

From a budget perspective, the multi-agent approach is a logical winner for small teams and solo founders. Maintaining individual paid subscriptions for ChatGPT ($20), Claude ($20), and Gemini ($20) adds up to about $60/month. A unified multi-agent subscription typically costs around $20/month and provides access to all three through a single interface, a saving of roughly $40/month.
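To rerun that comparison with your own numbers, a small helper is enough. The prices below are the figures quoted above; the function name and structure are just an illustrative sketch, and actual plan prices vary by region and tier.

```python
def compare_costs(individual: dict[str, float], unified: float) -> dict[str, float]:
    """Compare separate monthly subscriptions against one unified plan."""
    separate_total = sum(individual.values())
    monthly_saving = separate_total - unified
    return {
        "separate_total": separate_total,
        "unified": unified,
        "monthly_saving": monthly_saving,
        "annual_saving": monthly_saving * 12,
    }


if __name__ == "__main__":
    # Monthly prices in USD, taken from the figures quoted in this article.
    plans = {"ChatGPT": 20, "Claude": 20, "Gemini": 20}
    for label, amount in compare_costs(plans, unified=20).items():
        print(f"{label}: ${amount}")
```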

Furthermore, these systems often use token optimization to reduce costs. Instead of paying full price for three separate long-form generations, the reflection agent only processes the most relevant parts of each output, effectively lowering your overhead while increasing the quality of the final content.

Conclusion: Embracing the Agentic Future

The goal of using multi-agent AI workflows is to reach a state of flow. When you stop switching tabs and start directing agents, your capacity to produce high-quality marketing assets grows dramatically. Whether you are generating a 30-second YouTube intro, building a fundraising deck, or automating creator outreach through Stormy AI, the strategy remains the same: use multiple models to verify, reflect, and remix.

Stop playing LLM Ping-Pong. Start building an agentic system that does the heavy lifting for you, so you can focus on the one thing AI still can't replicate: your unique vision and strategy. For more insights on how these tools are changing the landscape, listen to the latest trends on the My First Million podcast or experiment with these workflows yourself. The tools are ready; the question is whether your workflow is.