The artificial intelligence gold rush has hit a physical roadblock: hardware. As the industry moves from training large models to running them, a process known as inference, demand for GPU power has outgrown what traditional cloud providers planned for. While Big Tech companies scramble to build enormous data centers, a new movement is emerging at the intersection of crypto and AI. This shift isn't just about technical architecture; it's about decentralizing the power of AI and allowing anyone with a MacBook, a gaming PC, or even an iPhone to become part of a global computing grid. By leveraging decentralized AI compute, the next generation of founders is bypassing the cloud giants to build at a scale previously reserved for billionaires.

The Compute Bottleneck: Why Inference Efficiency is the New Frontier

In the early days of generative AI, the focus was entirely on training. Startups needed massive clusters of NVIDIA H100s to teach models how to see, speak, and create. However, as the industry matures, the economic reality is shifting toward inference efficiency. Once a model is trained, it must be run millions of times per day to serve users. For viral mobile applications, these server costs can be catastrophic. Ben, the founder of the viral AI app Wombo, famously noted that during their peak virality, they faced million-dollar monthly server bills from traditional cloud providers like Amazon Web Services (AWS).

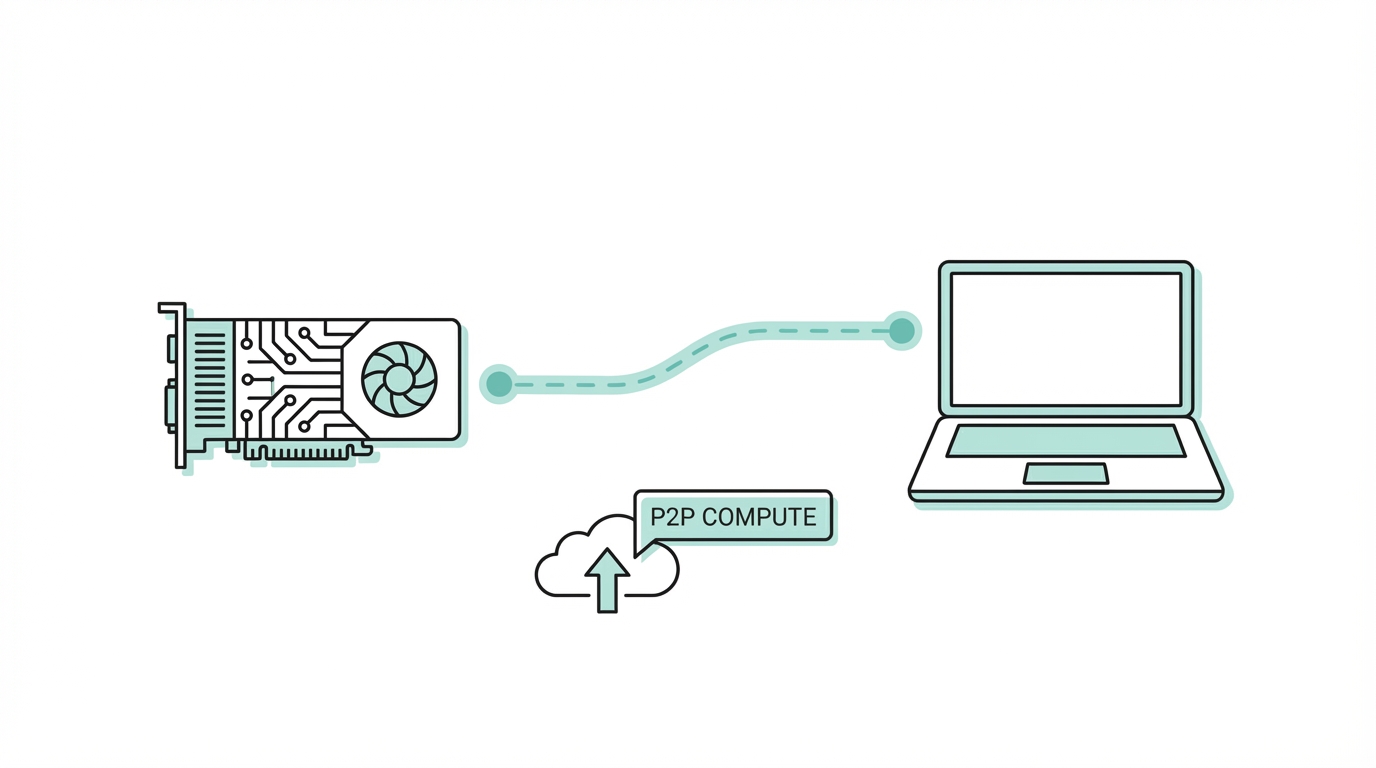

This "compute tax" is the primary reason why developers are looking for an alternative to centralized GPU clouds. The solution lies in edge computing for AI: moving the workload away from central servers and onto the devices that people already own. If a model can run on-device, or be served by a peer-to-peer network, the developer's marginal cost of serving a new user drops to near zero.

For founders, the goal is now to create "stupid simple" interfaces that abstract away the complexity of these models. Apps like Reface and Wombo proved that if you make AI accessible to the layperson, demand can be enormous. But to sustain that growth without raising tens of millions in venture capital just to pay for servers, the infrastructure itself must change. This is where decentralized AI compute becomes a necessity rather than a luxury.

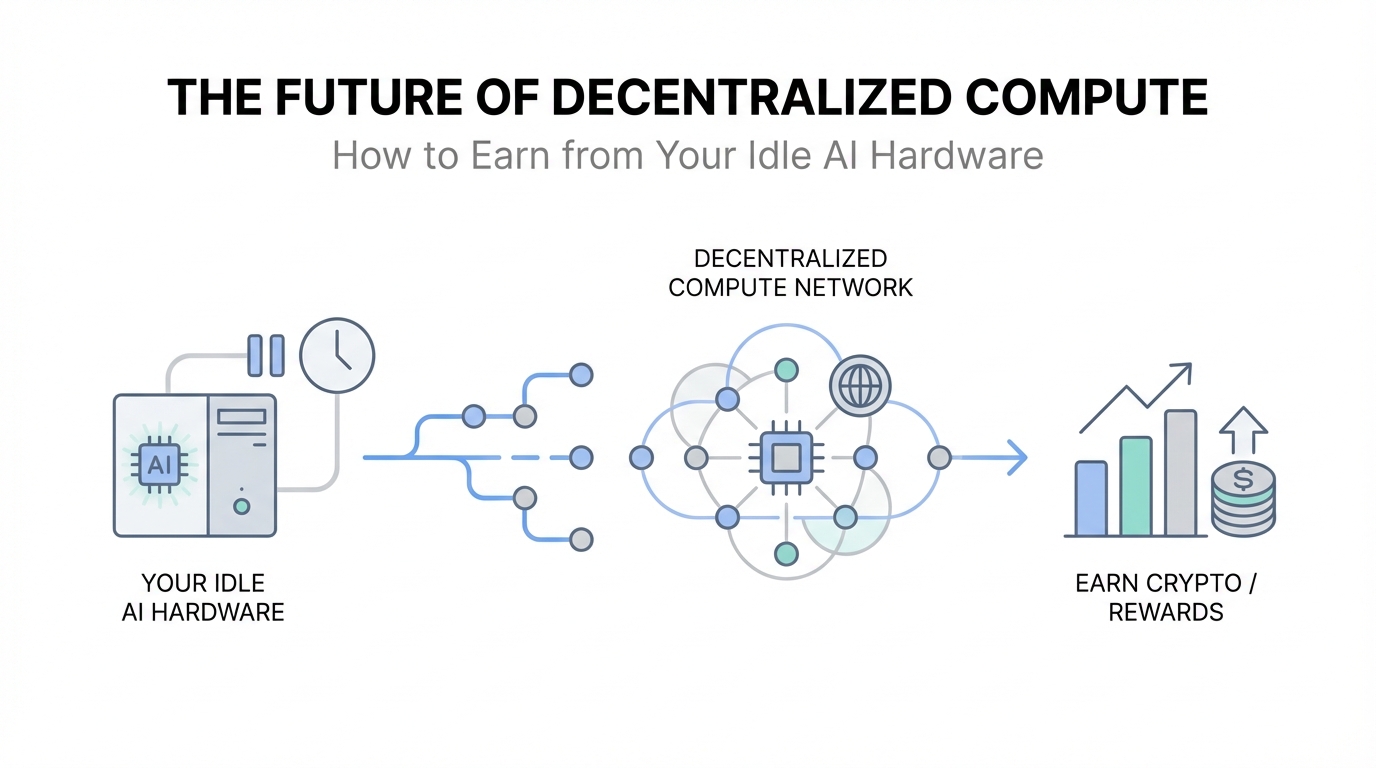

The 'Airbnb for GPUs' Model: Monetizing Your Idle Silicon

Imagine if your computer could earn money while you slept. This is the core promise of the 'Airbnb for GPUs' model. Modern consumer hardware—specifically the M-series chips in MacBooks and the high-end GPUs in gaming rigs—is often sitting idle for 20+ hours a day. Platforms like w.ai are building the software layer that allows these devices to contribute to a global pool of compute.

By installing a simple client, a user can turn their device into a "node" in a decentralized network. When a developer needs to run an AI task—like generating an image or processing a voice command—the network distributes that task to an available node. This peer-to-peer computing model offers several distinct advantages:

- Cost Reduction: By cutting out the middleman (Big Tech), developers can access compute at a fraction of the cost of Google Cloud or AWS.

- Resilience: A decentralized network has no single point of failure. It exists everywhere at once.

- Accessibility: It democratizes access to high-end hardware, allowing a developer in a developing nation to power their app using the idle power of a laptop in New York.
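To make the node model above concrete, here is a minimal sketch of how a network might hand a task to an available device. It is illustrative only: the `Node` and `Scheduler` classes, the first-idle-node policy, and the device names are assumptions made for this example, not a description of how w.ai or any other network actually schedules work.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """A contributor's device (hypothetical model for illustration)."""
    node_id: str
    busy: bool = False
    assigned: List[str] = field(default_factory=list)


class Scheduler:
    """Toy dispatcher: gives each task to the first idle node it finds."""

    def __init__(self, nodes: List[Node]) -> None:
        self.nodes = list(nodes)

    def dispatch(self, task: str) -> Optional[str]:
        """Assign the task to an idle node and return its id, or None if all are busy."""
        for node in self.nodes:
            if not node.busy:
                node.busy = True
                node.assigned.append(task)
                return node.node_id
        return None

    def finish(self, node_id: str) -> None:
        """Mark a node as idle again once it has returned its result."""
        for node in self.nodes:
            if node.node_id == node_id:
                node.busy = False


scheduler = Scheduler([Node("macbook"), Node("gaming-pc")])
print(scheduler.dispatch("generate-image"))
print(scheduler.dispatch("process-voice-command"))
```

A real network would also have to verify results, handle nodes that drop offline mid-task, and weigh latency and hardware capability when choosing a node; none of that is modeled here.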

The success of these networks depends on rewarding contributors fairly. Currently, many of these platforms use point systems and tokenization to incentivize early adopters. A user might earn points for every inference task their machine completes, which can later be converted into liquid crypto tokens. This intersection of crypto and AI provides the economic incentive layer that was missing from previous attempts at grid computing. It turns hardware from a depreciating asset into a yield-generating one.
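As a rough sketch of the incentive layer described above, the bookkeeping could look something like the following. The `PointsLedger` class and both rates (`POINTS_PER_TASK`, `POINTS_PER_TOKEN`) are invented for illustration; real platforms set their own rules, and conversion to tokens usually happens on-chain rather than in a Python dictionary.

```python
from collections import defaultdict

POINTS_PER_TASK = 10    # hypothetical reward per completed inference task
POINTS_PER_TOKEN = 100  # hypothetical conversion rate from points to tokens


class PointsLedger:
    """Toy ledger that tracks points earned by each contributing node."""

    def __init__(self) -> None:
        self._points = defaultdict(int)

    def record_tasks(self, node_id: str, tasks: int = 1) -> None:
        """Credit a node for completed inference tasks."""
        self._points[node_id] += POINTS_PER_TASK * tasks

    def points(self, node_id: str) -> int:
        return self._points[node_id]

    def redeem(self, node_id: str) -> float:
        """Convert all of a node's points to tokens and reset its balance."""
        tokens = self._points[node_id] / POINTS_PER_TOKEN
        self._points[node_id] = 0
        return tokens


ledger = PointsLedger()
ledger.record_tasks("macbook", tasks=3)
print(ledger.points("macbook"), "points")
print(ledger.redeem("macbook"), "tokens")
```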

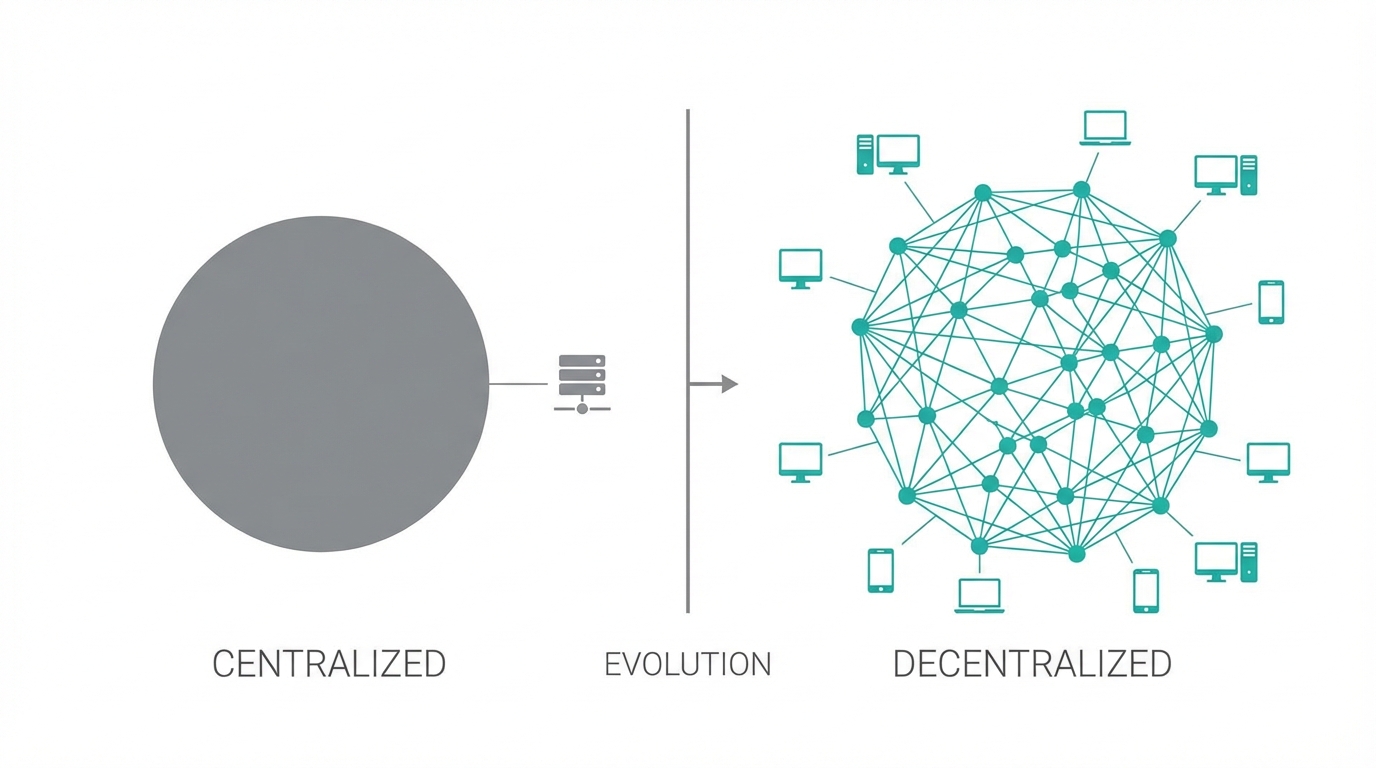

Decentralized AI vs. Big Tech: The Battle for Infrastructure

The future of AI technology is currently caught in a tug-of-war between centralization and decentralization. On one side, you have the "walled gardens" of Big Tech, which offer high reliability but at a high cost of entry and a high risk of censorship or platform dependency. On the other side, you have decentralized networks like Render, Bittensor, and w.ai.

Decentralized networks are not just cheaper; they are more aligned with the open-source AI movement. When you use a centralized provider, you are often locked into their proprietary models and APIs. In a decentralized ecosystem, developers are free to use any model—such as those found on Hugging Face—and deploy them across a global network without asking for permission.

This freedom is fueling what some call the "AI Mafia"—a wave of successful startups founded by early employees of AI pioneers. Because they understand how to optimize models for the edge, they can build multi-million dollar mobile apps in under seven months with tiny teams. They don't need a massive headcount because they aren't managing server farms; they are managing decentralized protocols.

The Rise of 'Popcorn Apps': Building for the Algorithmic Feed

One of the most effective ways to utilize decentralized compute is by powering what we call "Popcorn Apps." These are simple, highly entertaining mobile applications that provide immediate value or a "quick laugh." Think of apps that turn your friends into babies, or celebrities into funny characters. These apps thrive on viral content loops. A user creates a piece of content, shares it to an algorithmic feed on a Meta platform or TikTok, and that content drives new users back to the app.

To win in this space, you need two things: high-speed inference and effective influencer outreach. Since these apps live and die by their virality, getting your content into the hands of the right creators is essential. For developers looking to scale these viral loops, tools like Stormy AI can help source and manage UGC creators at scale, ensuring that your AI-generated content is being shared by the people most likely to trigger an algorithm spike.

The monetization strategy for these apps has also evolved. While many started as free tools, the industry has shifted toward weekly subscriptions ranging from $3 to $20. By providing a "bite-sized" entertainment experience, developers can achieve profitability quickly. The 98% of users who use the app for free drive the virality, while the 2% of power users pay for the compute, creating a sustainable ecosystem powered by decentralized AI compute.
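The 98/2 split is easier to reason about with numbers. The sketch below works through the arithmetic under assumed inputs: the 2% paid share and the $5 weekly price come from the ranges above, while the user count, generations per user, and cost per generation are made-up placeholders you would replace with your own figures.

```python
def weekly_margin(total_users: int,
                  weekly_price: float,
                  cost_per_generation: float,
                  generations_per_user: int,
                  paid_share: float = 0.02) -> float:
    """Weekly subscription revenue minus compute cost for ALL users, free and paid."""
    paying_users = round(total_users * paid_share)
    revenue = paying_users * weekly_price
    compute_cost = total_users * generations_per_user * cost_per_generation
    return revenue - compute_cost


def break_even_cost_per_generation(weekly_price: float,
                                   generations_per_user: int,
                                   paid_share: float = 0.02) -> float:
    """Highest cost per generation at which subscribers still cover everyone's compute."""
    return paid_share * weekly_price / generations_per_user


# Hypothetical app: 100,000 weekly users, $5/week, 20 generations per user.
print(weekly_margin(100_000, 5.0, 0.001, 20))
print(break_even_cost_per_generation(5.0, 20))
```

With these placeholder inputs, compute has to stay under roughly half a cent per generation for the paying 2% to carry the free 98%, which is why cheaper inference matters so much for this category of app.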

Step 1: Identify the Viral Model

Look for open-source models that are gaining traction in technical circles but are still difficult for the average person to use. This could be anything from a new image synthesis tool to a real-time voice cloner. If it requires a Python environment or a complex setup, there is an opportunity to productize it.

Step 2: Optimize for Inference

Before launching, you must ensure your generation times are fast. In the world of mobile apps, a three-second delay is an eternity. This often involves pruning models or using specialized hardware optimization techniques to make the AI run efficiently on edge computing nodes or consumer GPUs.
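To give a feel for what pruning means in practice, here is a minimal sketch of one common variant, magnitude pruning, which zeroes out the weights with the smallest absolute values. This is a swapped-in example rather than a method the apps above are known to use, and the function name and flat-list representation are assumptions for illustration; production frameworks operate on tensors and ship their own pruning utilities.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Return a copy of `weights` with the smallest-magnitude fraction set to zero.

    `sparsity` is the fraction of weights to remove, between 0.0 and 1.0.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0.0 and 1.0")

    n_prune = int(len(weights) * sparsity)
    if n_prune == 0:
        return list(weights)

    # Rank weight positions from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_prune])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


layer = [0.5, -0.1, 0.05, -2.0]
print(magnitude_prune(layer, sparsity=0.5))  # [0.5, 0.0, 0.0, -2.0]
```

Zeroing weights only speeds up generation if the runtime can exploit the resulting sparsity, and aggressive pruning can hurt output quality, so any real pipeline would measure both latency and quality after each change.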

Step 3: Engineer for Virality

Every piece of content generated by your app should be watermarked and formatted for social media sharing and for paid placements such as Apple Search Ads. Your goal is to make the user feel like they've created something unique that their friends must see. This is where AI-powered creator discovery becomes your secret weapon. Platforms like Stormy AI can help you identify the specific niches where your content is most likely to go viral.

Conclusion: Your Hardware is Your Future

The future of AI technology is not just in the hands of a few Silicon Valley corporations. It is distributed across the millions of GPUs sitting in living rooms and offices around the world. As decentralized AI compute matures, the barrier to entry for creating a world-class startup will continue to drop. We are entering an era where idle AI hardware is no longer just a cost of ownership—it is a ticket to participate in the most significant technological revolution of our lifetime.

Whether you are a developer looking for an alternative to centralized GPU clouds to power your next "Popcorn App," or a hardware owner looking to earn from your silicon, the decentralized path offers a level of freedom and scalability that the traditional cloud struggles to match. The infrastructure of the future is peer-to-peer, open-source, and powered by you.