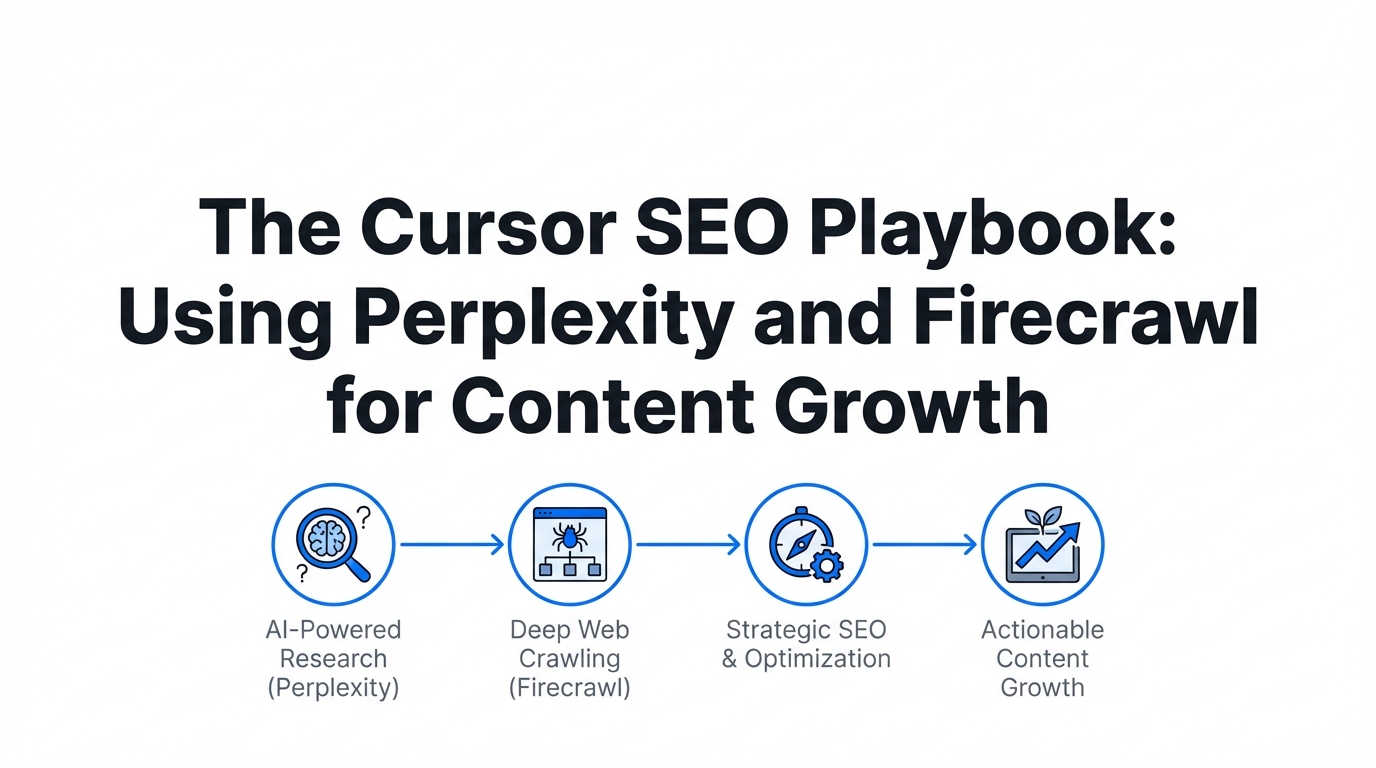

The era of the manual content calendar is ending. As search engines evolve and AI-generated content saturates the web, the competitive edge has shifted from those who can write the most to those who can build the smartest content engines. We are entering the era of the "idea guy," where strategic architects use AI development environments like Cursor to treat SEO not as a writing task but as a programmatic challenge. With Model Context Protocol (MCP) servers and agentic workflows, growth marketers can research, structure, and execute 100-page content clusters in the time it used to take to write a single blog post. This AI SEO strategy isn't just about speed; it's about precision: using real-time data to fill content gaps that traditional tools miss.

The New Stack: Why Cursor is the Ultimate SEO Interface

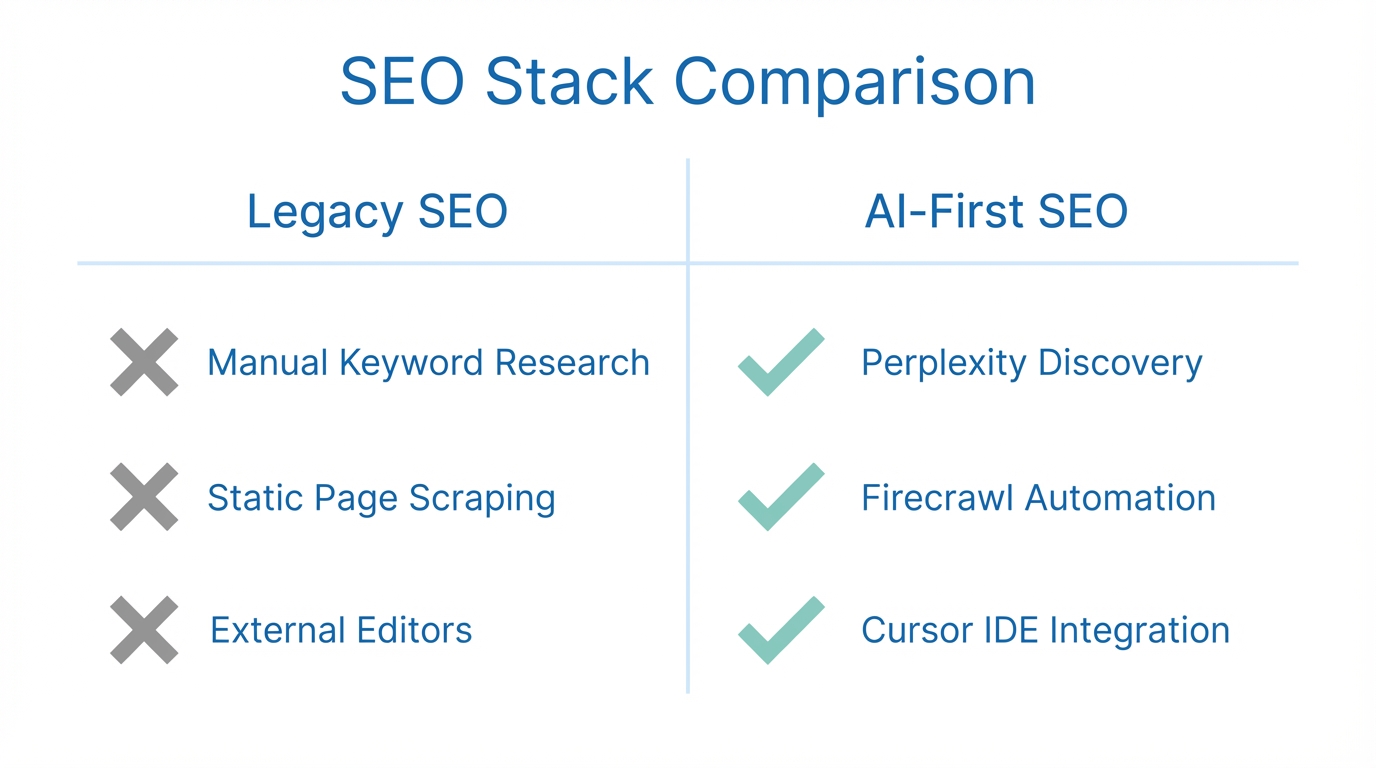

For years, SEOs lived in fragmented environments: one tab for Google Ads Keyword Planner, another for a CMS like Shopify, and a third for an LLM. Cursor changes this by providing a unified interface where data and execution live side by side. The breakthrough comes from the Model Context Protocol (MCP): MCP servers let Cursor interact directly with third-party apps and the live web. Instead of copying and pasting data between tools, you are now programming your marketing stack. Whether you are managing your project database in Notion or scraping competitors with specialized tools, the IDE (Integrated Development Environment) becomes your command center.
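As a rough illustration of what "connecting" these tools looks like, here is a minimal sketch that builds a project-level MCP configuration of the kind Cursor reads from `.cursor/mcp.json`. The server names, the angle-bracket package placeholders, and the environment variable names are assumptions for illustration only; check each MCP server's own documentation for the real values.

```python
import json


def build_mcp_config(servers: dict[str, dict]) -> dict:
    """Wrap per-server launch settings under the top-level "mcpServers" key."""
    return {"mcpServers": servers}


# Assumption: both servers are started with npx. The package names and the
# environment variable names are placeholders, not confirmed values.
servers = {
    "perplexity": {
        "command": "npx",
        "args": ["-y", "<perplexity-mcp-package>"],
        "env": {"PERPLEXITY_API_KEY": "<your-key>"},
    },
    "firecrawl": {
        "command": "npx",
        "args": ["-y", "<firecrawl-mcp-package>"],
        "env": {"FIRECRAWL_API_KEY": "<your-key>"},
    },
}

config = build_mcp_config(servers)

# Print the JSON so it can be pasted into .cursor/mcp.json by hand.
print(json.dumps(config, indent=2))
```

Keeping the configuration in the project folder means every agent session in that project starts with the same set of tools available.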

"LLMs are the new browser. Instead of navigating tabs, we are speaking to our data to perform actions in real time."

Keyword Discovery: Using Perplexity MCP for Deep Research

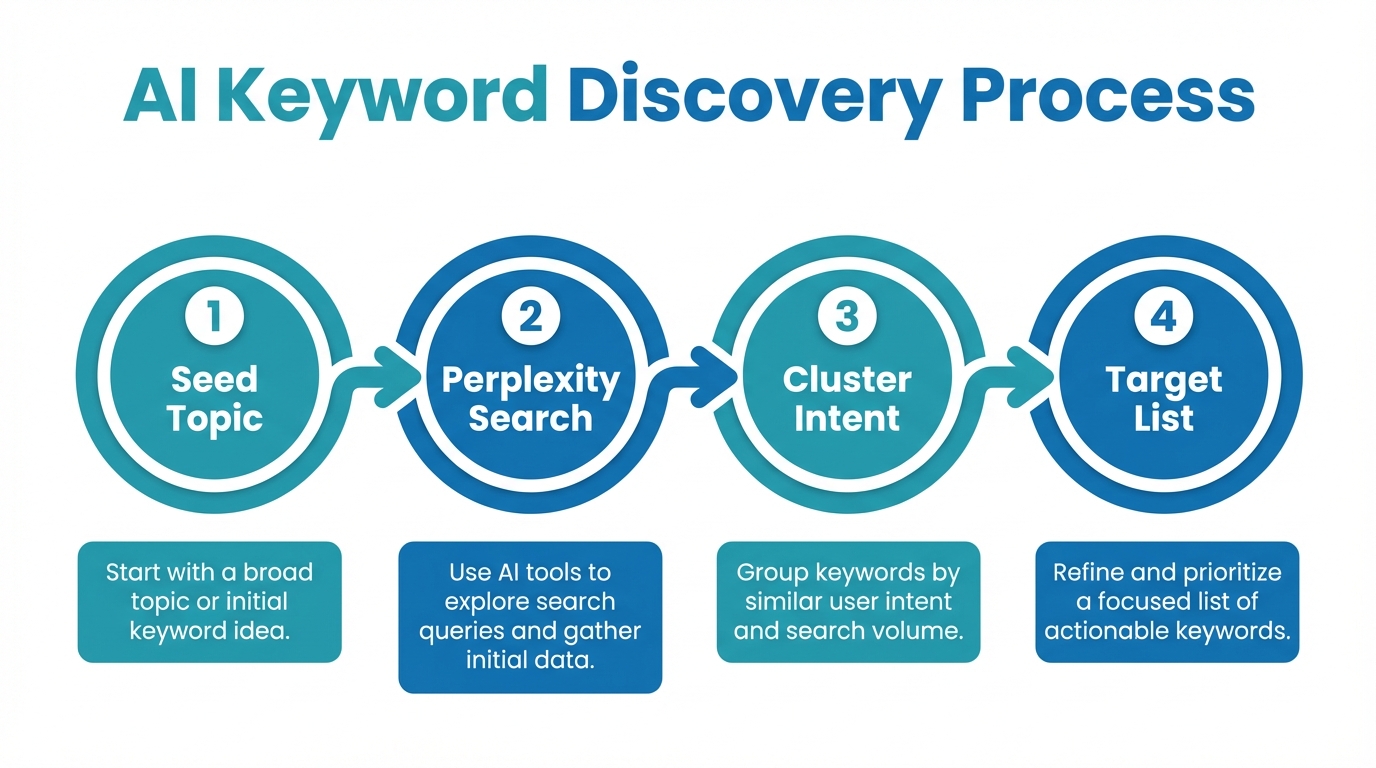

The first step in any successful keyword research workflow is identifying high-volume opportunities that your competitors haven't saturated. While traditional tools provide historical data, Perplexity offers a real-time pulse of the internet. By connecting the Perplexity MCP within Cursor, you can run deep-search queries that cross-reference current trends with your existing product data. This allows you to find "content gaps": topics that users are searching for but that no high-quality content yet addresses.

- Real-time Trend Analysis: Identify emerging keywords before they show up in monthly volume reports.

- Competitor Gap Analysis: Search for what competitors lack in their documentation or blog posts.

- Intent Mapping: Use Perplexity to classify keywords into informational, transactional, or navigational intent automatically.

By using a thinking model like o3 or Gemini 2.5, you can ask Cursor to analyze a specific niche and generate a list of 50 low-competition, high-intent keywords. This moves you from guessing what to write about to having a data-backed roadmap based on live web research. Once you have this list, you can store it in a keywords.json file directly in your Cursor project, making it instantly accessible to your writing agents. Platforms like Stormy AI, meanwhile, streamline creator sourcing and outreach to help distribute that new content once it's live.
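The article does not specify a schema for keywords.json, so the following is only one possible layout. It is a minimal sketch in which the field names (`phrase`, `intent`, `competition`) and the sample rows are invented for illustration; the intent labels are the three categories mentioned above.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path

VALID_INTENTS = {"informational", "transactional", "navigational"}


@dataclass
class Keyword:
    phrase: str
    intent: str       # one of VALID_INTENTS
    competition: str  # hypothetical label: "low", "medium", or "high"


def dump_keywords(keywords: list[Keyword]) -> str:
    """Serialize keywords to JSON, rejecting unknown intent labels."""
    for kw in keywords:
        if kw.intent not in VALID_INTENTS:
            raise ValueError(f"unknown intent for {kw.phrase!r}: {kw.intent}")
    return json.dumps([asdict(kw) for kw in keywords], indent=2)


def load_keywords(raw: str) -> list[Keyword]:
    """Parse the JSON text produced by dump_keywords."""
    return [Keyword(**item) for item in json.loads(raw)]


def save_keywords(path: Path, keywords: list[Keyword]) -> None:
    """Write the keyword list to disk, e.g. Path("keywords.json")."""
    path.write_text(dump_keywords(keywords), encoding="utf-8")


# Made-up sample rows, not real research output.
sample = [
    Keyword("cursor seo workflow", "informational", "low"),
    Keyword("buy seo audit tool", "transactional", "high"),
]
low_competition = [kw for kw in sample if kw.competition == "low"]
```

Calling `save_keywords(Path("keywords.json"), sample)` from the project root would place the file where an agent can read it alongside the rest of the project.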

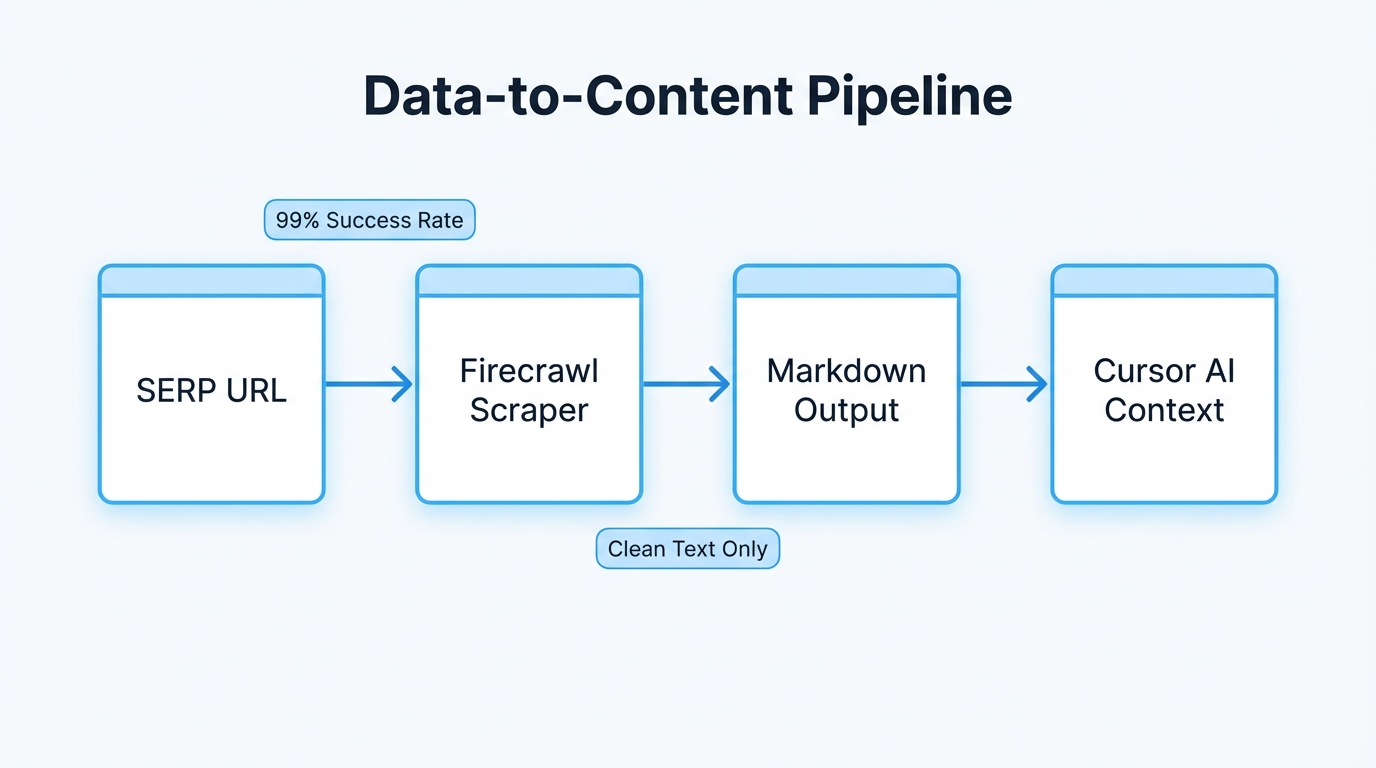

Analyzing the SERPs: Scraping with Firecrawl

Finding a keyword is only half the battle; you need to know exactly what the search engine wants to see. This is where Firecrawl comes into play. Firecrawl is a specialized tool designed to scrape web content and convert it into clean, LLM-ready markdown. In the Cursor for SEO workflow, you use Firecrawl to "crawl" the top 5 ranking results for your target keyword. This provides your AI agent with the exact structure, headers, and semantic keywords that Google is currently rewarding.
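To make "exact structure and headers" concrete, here is a small sketch that assumes the top results have already been scraped to markdown (for example by Firecrawl) and are available as plain strings. It does not call Firecrawl itself; `extract_outline` and `common_headings` are hypothetical helper names introduced for this example.

```python
import re
from collections import Counter

FENCE = "`" * 3  # marker that opens or closes a fenced code block


def extract_outline(markdown: str) -> list[tuple[int, str]]:
    """Return (level, text) for each ATX heading, skipping fenced code."""
    outline = []
    in_fence = False
    for line in markdown.splitlines():
        stripped = line.strip()
        if stripped.startswith(FENCE):
            in_fence = not in_fence
            continue
        if in_fence:
            continue
        match = re.match(r"(#{1,6})\s+(.*)", stripped)
        if match:
            outline.append((len(match.group(1)), match.group(2).strip()))
    return outline


def common_headings(pages: list[str], min_pages: int = 2) -> list[tuple[str, int]]:
    """Count how many pages use each heading (case-insensitive)."""
    counts = Counter()
    for page in pages:
        # Use a set so a heading repeated within one page counts once.
        counts.update({text.lower() for _, text in extract_outline(page)})
    shared = [(h, n) for h, n in counts.items() if n >= min_pages]
    return sorted(shared, key=lambda item: (-item[1], item[0]))
```

Headings that recur across several ranking pages are a reasonable first guess at the sections a new article should cover, though they are a signal to review rather than a template to copy.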

| Feature | Traditional SEO Method | Cursor Programmatic Method |
|---|---|---|
| Research Speed | Hours of manual browsing | Seconds via Firecrawl MCP |
| Content Structure | Guesswork based on intuition | Data-driven markdown analysis |
| Keyword Density | Manual checking | Programmatically optimized |
| Metadata | Written one by one | Bulk generation via agentic models |
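The keyword density row in the table can be checked with a few lines of code. The sketch below uses one common definition (words belonging to phrase matches divided by total words); the article does not say which definition or target value it has in mind, so treat both the formula and the function name as assumptions.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Share of words in `text` that belong to occurrences of `phrase`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Slide a window of n words over the text and count exact matches.
    hits = sum(
        1 for i in range(len(words) - n + 1) if words[i:i + n] == target
    )
    return hits * n / len(words)
```

Running this over each scraped competitor page and over your own draft gives comparable numbers, which is more useful than chasing any fixed percentage.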

When you scrape a top-ranking page, you aren't just collecting words; you are building context files. By saving the top SERP results as .txt or .md files, you give your LLM a baseline of what satisfying the search intent looks like. For teams looking to scale this even further, platforms like Stormy AI can help find the influencers who already rank for these terms on social platforms.
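One way to assemble such a context file is sketched below. It assumes each result is already available as a (URL, markdown) pair; the function name, the file layout, and the example.com URLs are illustrative and not taken from any tool's output format.

```python
from pathlib import Path


def build_context_file(keyword: str, pages: list[tuple[str, str]]) -> str:
    """Combine scraped results into one markdown document, in rank order."""
    parts = [f"# SERP context: {keyword}", ""]
    for rank, (url, markdown) in enumerate(pages, start=1):
        parts.append(f"## Result {rank}: {url}")
        parts.append("")
        parts.append(markdown.strip())
        parts.append("")
    return "\n".join(parts)


def save_context_file(path: Path, keyword: str, pages: list[tuple[str, str]]) -> None:
    """Write the combined context to disk, creating parent folders if needed."""
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(build_context_file(keyword, pages), encoding="utf-8")


# Hypothetical input: placeholder URLs and content.
example_pages = [
    ("https://example.com/a", "# Page A\nFirst result body.\n"),
    ("https://example.com/b", "# Page B\nSecond result body.\n"),
]
context = build_context_file("cursor seo", example_pages)
```

Saving one file per keyword, for instance with `save_context_file(Path("context/cursor-seo.md"), "cursor seo", example_pages)`, keeps the reference material next to the keyword list so a writing agent can be pointed at both.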