In the high-stakes environment of 2026, growth teams are no longer just running experiments; they are building autonomous acquisition engines. The global AI coding assistant market has surged to $8.5 billion, as reported by Bayelsa Watch and Gartner, reflecting a fundamental shift from simple autocompletion to agentic execution. For growth ops leaders, the choice between Claude Code and Cursor isn't just a matter of developer preference—it is a strategic decision that dictates the ROI of every internal tool, lead-gen scraper, and marketing automation workflow your team produces.

Performance Benchmarks for 2026: The Efficiency Gap

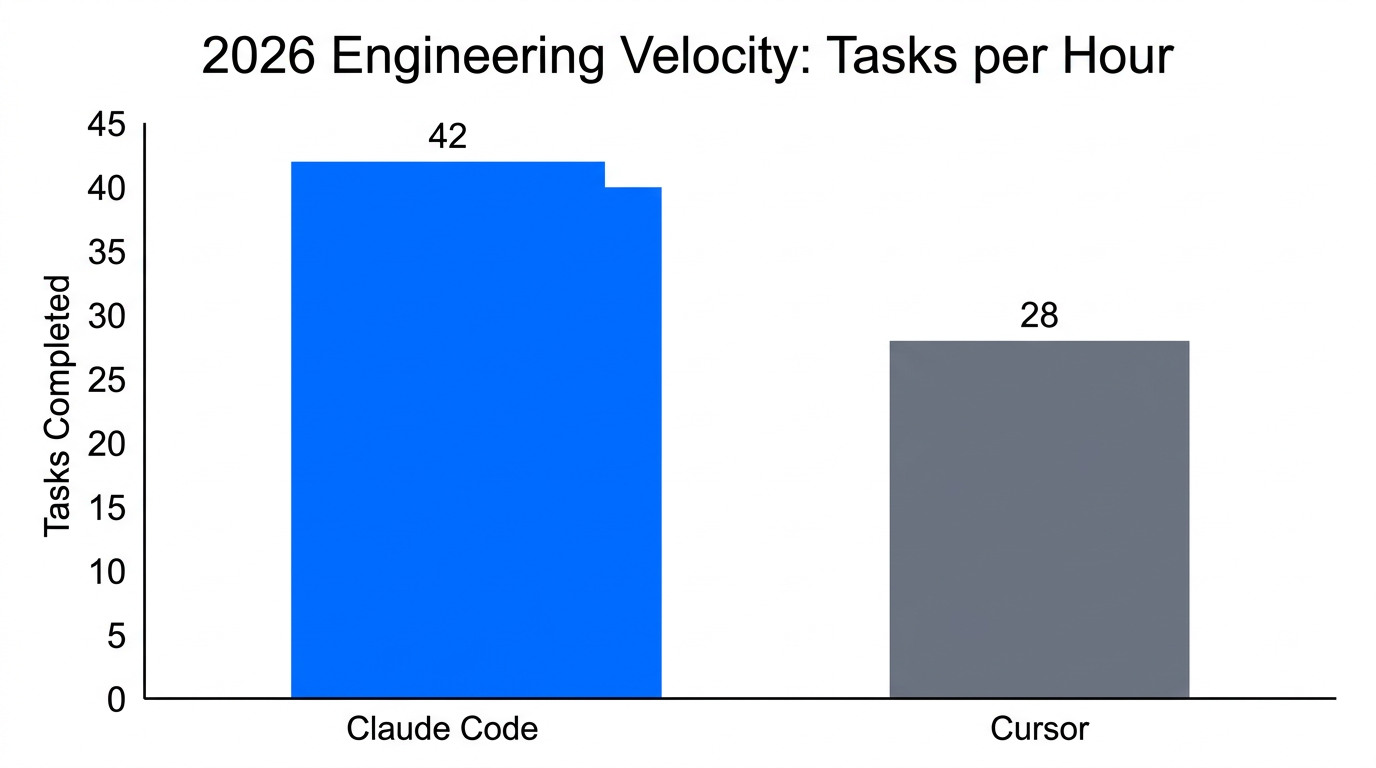

In 2026, the performance gap between top-tier agents has become measurable and significant. According to recent data from SitePoint, Claude Code achieved 78% correctness on complex feature implementations, ahead of Cursor’s 73%. The gap matters most in growth engineering, where integrating TikTok Ads Manager data with a custom internal CRM built on Next.js requires reasoning across many files at once.

Claude Code’s lead in "Full-Feature Implementations," with a 68% win rate versus 54% in head-to-head tests, is attributed to its terminal-native architecture. While GitHub Copilot remains a staple for boilerplate, senior growth engineers increasingly use Claude Code as an escalation path for hard problems such as architectural refactors or subtle bugs in attribution logic, as noted by Faros AI.

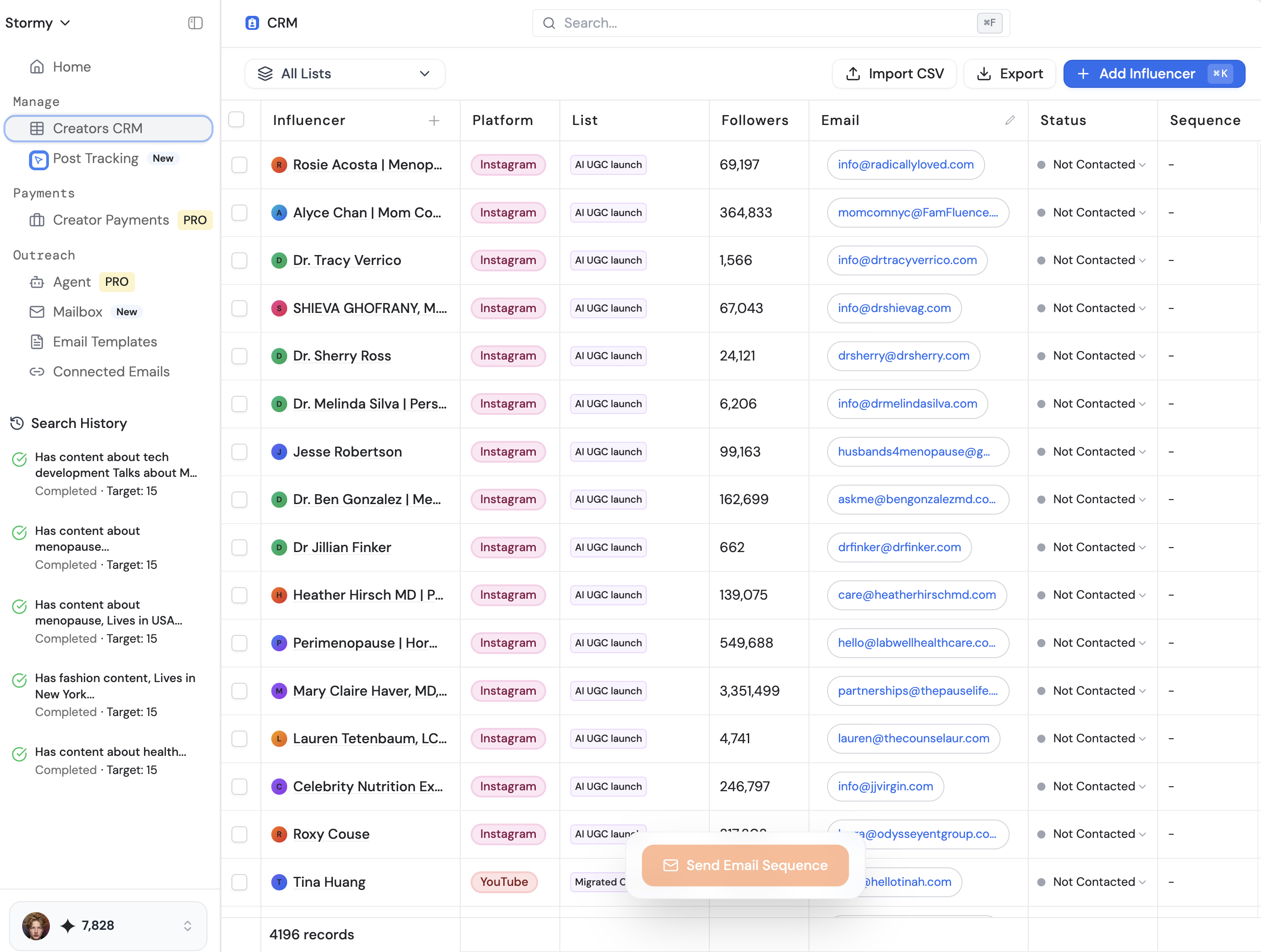

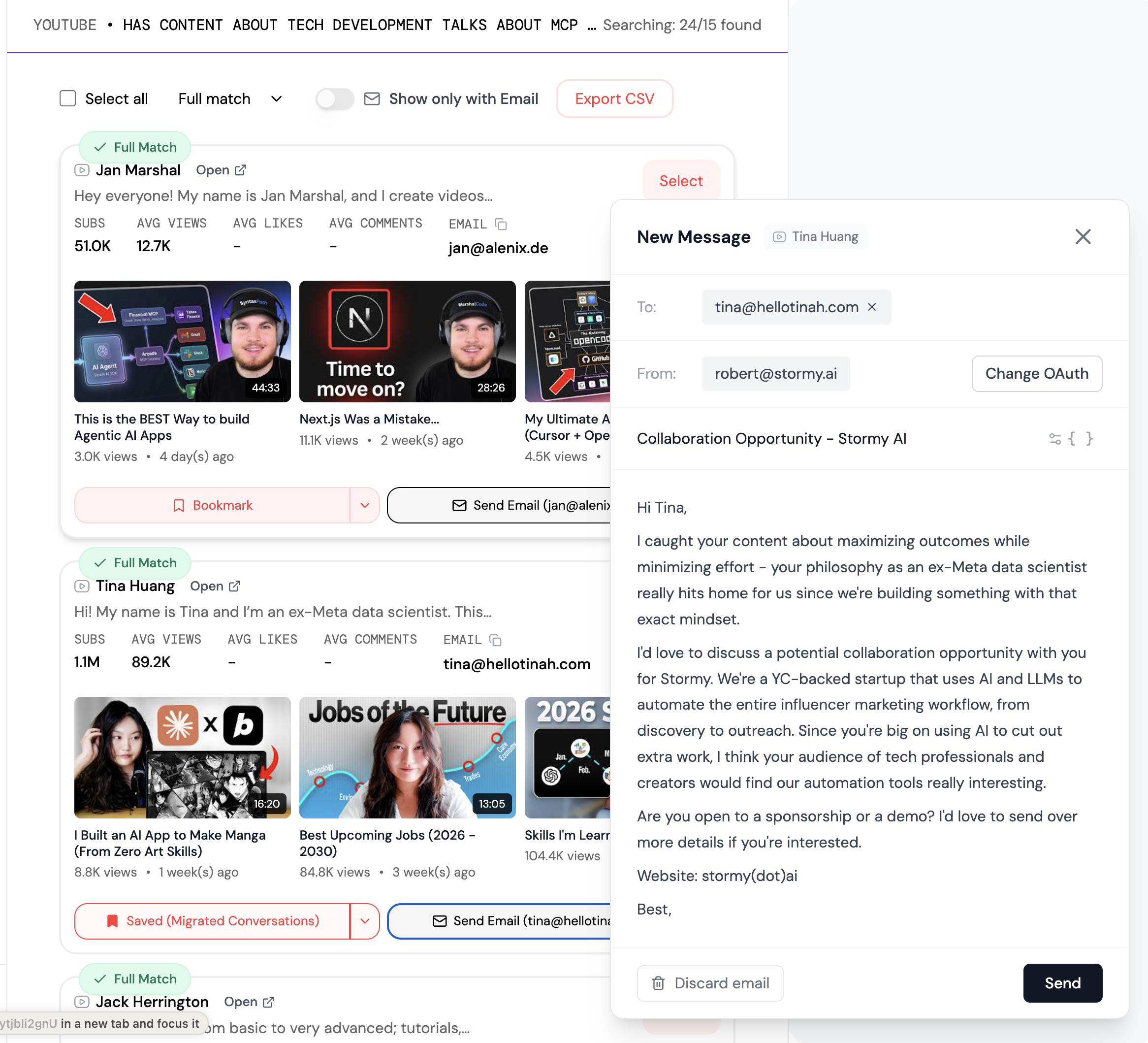

"Claude Code is the professional's choice for complex refactors, while Cursor remains the king of daily flow state iteration."

When your team is building software to scale customer acquisition, finding and sourcing the right influencers is as critical as the code itself. This is where Stormy AI bridges the gap, letting growth teams discover creators with 10K-100K followers through natural language prompts, at the same pace as their AI coding stacks.

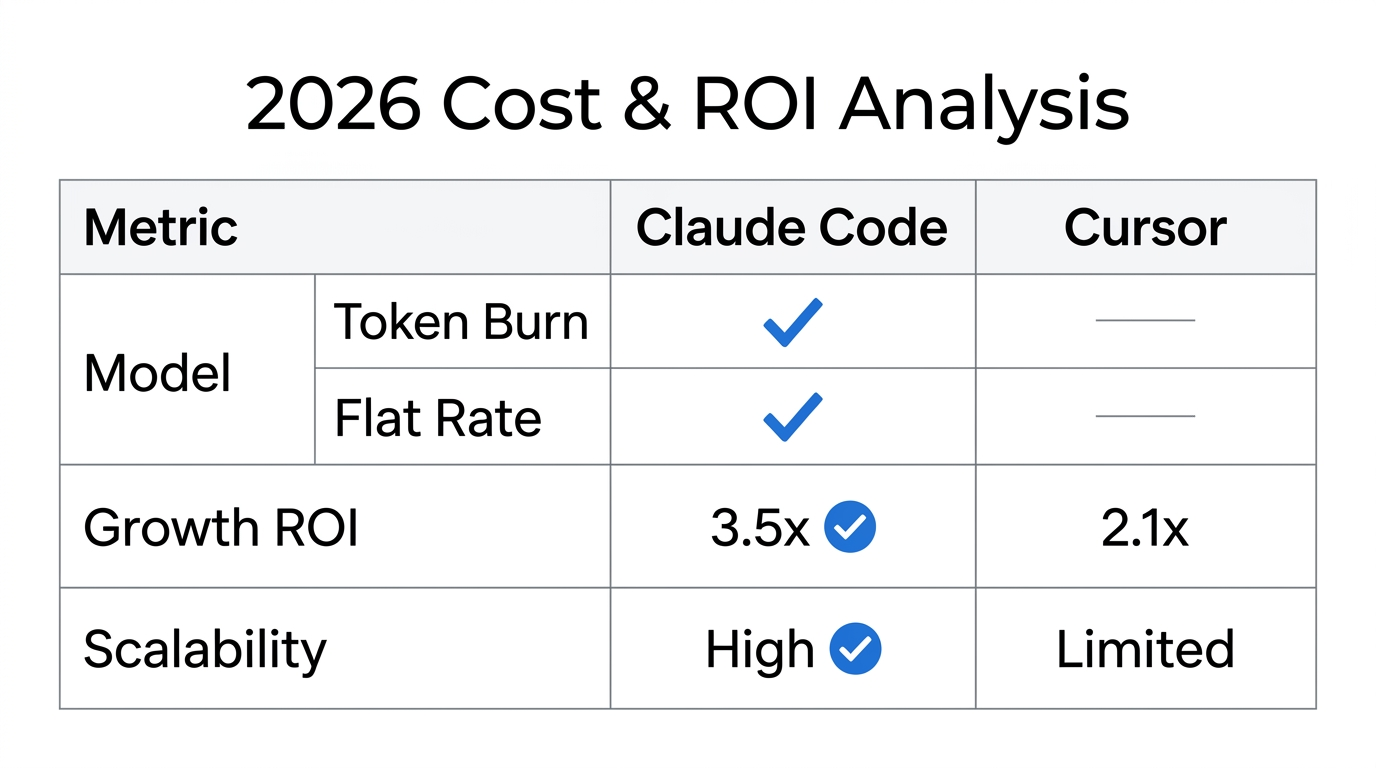

Financial Analysis: 'Token Burn' vs 'Flat Rate' Models

For a growth ops leader, a tool's financial predictability matters as much as its performance. The 2026 pricing landscape has split into two distinct models. Claude Code runs its most advanced models, such as Opus 4.5, on a pay-per-use basis, at approximately $75 per 1M output tokens according to O-mega.ai. Cursor, by contrast, keeps a traditional flat-rate SaaS subscription.

| Metric | Claude Code (The Delegator) | Cursor (The Accelerator) |
|---|---|---|
| Standard pricing | $20/mo + overages | $20/mo (Pro) |
| Cost (simple utility) | ~$0.13 | ~$0.10 |
| Cost (complex feature) | ~$0.87 | ~$1.14 |
| Correctness rating | 78% | 73% |

While Claude Code carries a higher "token burn" risk, often costing 5-10x more than flat-rate IDE subscriptions during heavy sessions according to Morph LLM, it can prove more cost-effective for complex features. In head-to-head tests on Next.js projects validated by AtCyrus, Claude Code used 5.5x fewer tokens than Cursor for identical tasks, finishing them faster and with 30% less code rework.
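To make the "token burn" versus flat-rate trade-off concrete, the sketch below converts output tokens into dollars at the $75 per 1M output-token rate quoted above and finds the monthly output volume at which usage pricing alone matches a $20 flat subscription. It is a back-of-the-envelope model under stated assumptions: input tokens, caching discounts, and each plan's actual overage rules are ignored, and the function names are hypothetical.

```python
"""Back-of-the-envelope comparison of usage-based vs flat-rate pricing.

Assumptions (illustrative only): the $75 per 1M output-token rate is the
figure quoted in the article; input tokens, caching discounts, and plan
overage rules are deliberately ignored.
"""

USD_PER_MILLION_OUTPUT = 75.0  # quoted rate; check current vendor pricing
FLAT_MONTHLY_USD = 20.0        # flat subscription price from the table


def output_token_cost(output_tokens: int,
                      usd_per_million: float = USD_PER_MILLION_OUTPUT) -> float:
    """Dollar cost of `output_tokens` at a per-million-token rate."""
    return output_tokens / 1_000_000 * usd_per_million


def breakeven_output_tokens(flat_usd: float = FLAT_MONTHLY_USD,
                            usd_per_million: float = USD_PER_MILLION_OUTPUT) -> int:
    """Monthly output tokens at which usage pricing equals the flat fee."""
    return round(flat_usd / usd_per_million * 1_000_000)


if __name__ == "__main__":
    # A hypothetical heavy session producing 100k output tokens.
    print(f"100k output tokens: ${output_token_cost(100_000):.2f}")
    print(f"Break-even vs $20 flat: {breakeven_output_tokens():,} output tokens/month")
```

Plugging in your own token counts and current prices is more informative than comparing headline rates, because, as the head-to-head tests suggest, total tokens per task can differ several-fold between tools.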

Addressing the 'Context Rot' Crisis

As sessions grow longer, both tools face a "Context Rot" crisis. Research published on Medium and by ETH Zurich has observed a 20-50% accuracy drop as context grows beyond 100k tokens. The New Stack describes how this "attention dilution" leads agents to ignore later instructions and produce "hallucinated boilerplate" instead of project-specific logic.

To combat this, elite growth teams are adopting the "Document & Clear" pattern: they never let a session exceed 60% of the context window. At that threshold, they run a `/catchup` command to summarize progress into a markdown file, then clear the session to reset the context. Teams are also standardizing on the Model Context Protocol (MCP) to connect LLMs to local files and APIs; according to Zuplo, more than 17,000 public MCP servers are now available.
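The 60% rule is easy to turn into an automatic check. The sketch below assumes your tooling can report tokens used so far and the model's context-window size (how you obtain those numbers is tool-specific and not shown). `SessionBudget` and `render_checkpoint` are hypothetical helpers, and the checkpoint file's Markdown layout is an illustrative choice, not what any `/catchup` command actually produces.

```python
from dataclasses import dataclass

CHECKPOINT_RATIO = 0.60  # "never exceed 60% of the context window"


@dataclass
class SessionBudget:
    """Tracks context usage for one agent session (hypothetical helper)."""
    window_tokens: int    # model's context-window size, assumed known
    used_tokens: int = 0  # tokens consumed so far, assumed reported by the tool

    def usage_ratio(self) -> float:
        return self.used_tokens / self.window_tokens

    def should_checkpoint(self, threshold: float = CHECKPOINT_RATIO) -> bool:
        """True once usage reaches the threshold: time to document and clear."""
        return self.usage_ratio() >= threshold


def render_checkpoint(done: list[str], next_steps: list[str]) -> str:
    """Render a Markdown progress summary to save before clearing the session."""
    lines = ["# Session checkpoint", "", "## Done"]
    lines += [f"- {item}" for item in done]
    lines += ["", "## Next"]
    lines += [f"- {item}" for item in next_steps]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    budget = SessionBudget(window_tokens=200_000, used_tokens=130_000)
    if budget.should_checkpoint():
        summary = render_checkpoint(
            done=["Added UTM parsing to the lead-capture form"],
            next_steps=["Write tests for attribution edge cases"],
        )
        # Save `summary` (e.g. to a Markdown file), then clear the session
        # and start the next one by pointing the agent at that file.
        print(summary)
```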

"The 'Bored 6-Year-Old' effect is real. If you don't reset your AI agent's context, it will eventually start ignoring your architectural rules in favor of generic patterns."

Just as developers must manage context rot, marketing teams must manage creator relationships. Stormy AI's Creator CRM tracks every interaction, negotiation, and payment in one place, preventing the kind of "context rot" that builds up in messy email threads.

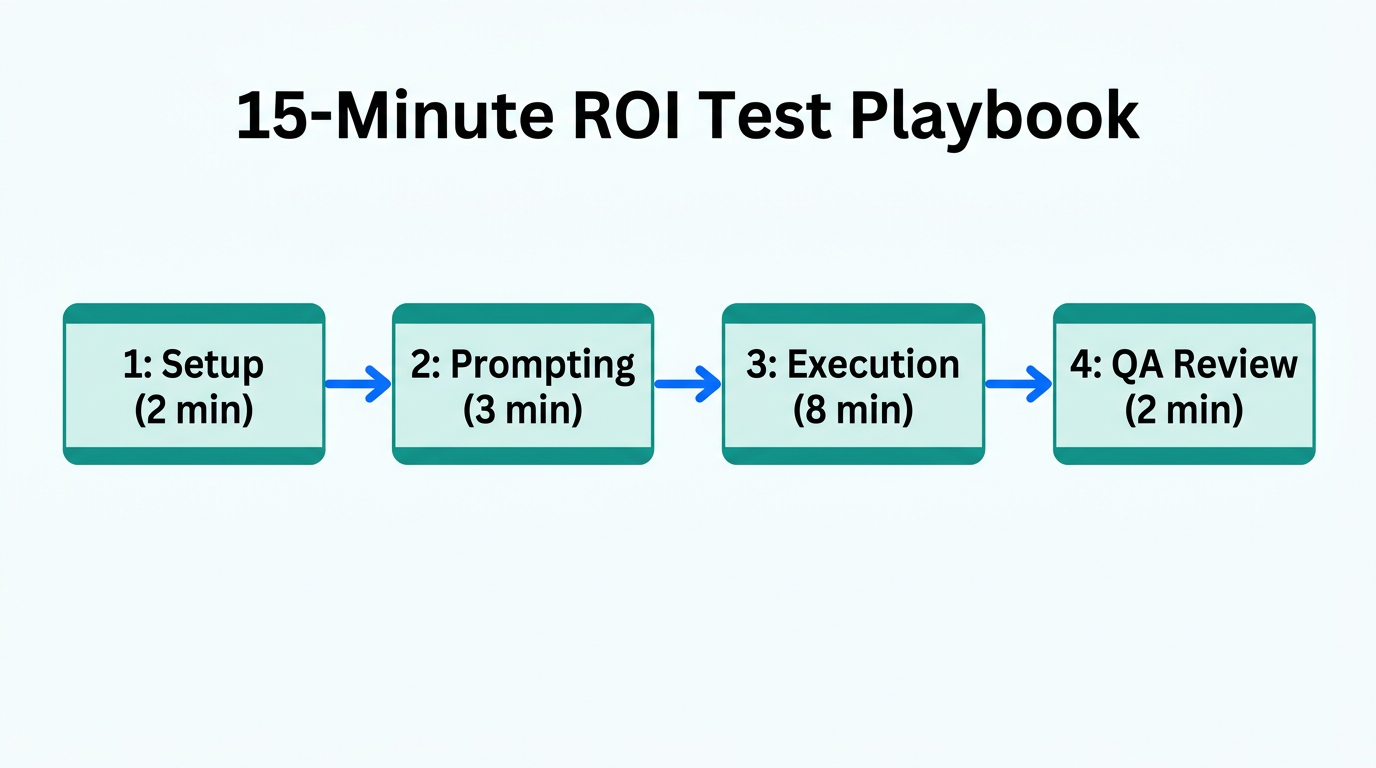

The 15-Minute Test Playbook for Growth Ops

To determine which tool fits your project, run the 15-Minute Test: a timeboxed ritual that checks whether an agent can solve a non-trivial bug without a human writing any code. Teams at Wix Engineering have used it to cut feature research effort by 75%.

- Phase 1: Context Loading (3 Mins): Create a `CLAUDE.md` file in your repository root. It must list the tech stack, naming conventions, and "do-not-touch" areas.

- Phase 2: Explore & Interview (4 Mins): Use Plan Mode. Ask the agent: "Analyze this feature. Interview me to find missing constraints." This prevents generic output, as recommended by Zen van Riel.

- Phase 3: The TDD Skill (5 Mins): Command the agent to write a failing test first. Do not allow it to write a fix until the test fails with a specific error.

- Phase 4: Gated Implementation (3 Mins): Switch back to Normal Mode. Let the agent fix the code and run the test, then audit the change against your architectural patterns, a best practice documented on GitHub.
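The phase gates can be expressed as simple checks. The sketch below is one possible encoding, not a tool used by any of the teams cited: it confirms the timeboxes add up to 15 minutes, verifies that a `CLAUDE.md` covers the three required topics (the heading names are assumptions), and implements the red-then-green rule from Phases 3 and 4 by running a test command through `subprocess`. The pytest invocation and test path are assumptions about your stack.

```python
import subprocess
import sys
from dataclasses import dataclass

# Phase timeboxes from the playbook, in minutes.
PHASES = [
    ("Context Loading", 3),
    ("Explore & Interview", 4),
    ("The TDD Skill", 5),
    ("Gated Implementation", 3),
]
assert sum(minutes for _, minutes in PHASES) == 15, "playbook must fit in 15 minutes"

# Hypothetical heading names for the three topics CLAUDE.md must cover.
REQUIRED_SECTIONS = ("## Tech stack", "## Naming conventions", "## Do not touch")


def missing_sections(claude_md: str) -> list[str]:
    """Phase 1 check: return required sections that CLAUDE.md lacks."""
    return [s for s in REQUIRED_SECTIONS if s not in claude_md]


@dataclass
class RunResult:
    passed: bool  # True when the test command exited with status 0
    output: str   # combined stdout and stderr


def run_test(cmd: list[str]) -> RunResult:
    """Run a test command and capture its exit status and output."""
    proc = subprocess.run(cmd, capture_output=True, text=True)
    return RunResult(passed=proc.returncode == 0, output=proc.stdout + proc.stderr)


def pytest_cmd(target: str) -> list[str]:
    """Build a pytest invocation with the current interpreter (assumes pytest is installed)."""
    return [sys.executable, "-m", "pytest", "-x", target]


def red_ok(red: RunResult, expected_error: str) -> bool:
    """Phase 3 gate: the new test must fail, and fail for the expected reason."""
    return not red.passed and expected_error in red.output


def green_ok(red: RunResult, green: RunResult, expected_error: str) -> bool:
    """Phase 4 gate: accept a fix only after a valid red run and a passing green run."""
    return red_ok(red, expected_error) and green.passed


# Usage sketch (the test path is hypothetical):
#   cmd = pytest_cmd("tests/test_attribution.py")
#   red = run_test(cmd)      # before the agent is allowed to write a fix
#   ...agent implements the fix...
#   green = run_test(cmd)
#   assert green_ok(red, green, "AssertionError")
```

Requiring the red run to contain the expected error string guards against a test that fails for an unrelated reason, such as an import error, which would otherwise let any "fix" appear to work.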

The 'Delegator' vs 'Accelerator' Philosophy

Choosing between Claude Code and Cursor depends on your team's workflow. Claude Code is The Delegator: it is execution-first and terminal-native. It is best for complex refactors, bug hunting, and tasks where you want the AI to run tests and builds autonomously. In 2026, it holds a 46% "Most Loved" rating among developers who prioritize autonomy, according to NxCode.

Cursor is The Accelerator: it is an editor-first, AI-native IDE. It is the gold standard for daily flow state, rapid iteration, and developers who prefer a visual interface. While it struggles more with context rot in massive multi-file refactors, its "Composer" mode has been developed specifically to handle broader changes, as highlighted by Cursor CEO Michael Truell via Point Dynamics.

For growth teams building their own tools, outreach is the next logical step after the software is built. Stormy AI acts as your AI outreach agent, handling hyper-personalized emails and follow-ups while you sleep, much like Claude Code handles your terminal tasks.

The 2026 Verdict: Which Tool Wins?

In 2026, the winner isn't a single tool but a stack. For growth team efficiency, the optimal setup uses Cursor for 90% of daily feature development and switches to Claude Code for the 10% of tasks that require deep architectural reasoning or complex bug fixing. This hybrid approach keeps most work on Cursor's flat-rate pricing while reserving Claude Code's higher correctness for critical infrastructure.
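A hybrid stack needs a routing rule. A minimal sketch, assuming each task is tagged by hand with two hypothetical flags that mirror the criteria above (deep architectural reasoning, complex bug fixing); it also measures how much of a backlog escalates to Claude Code, so you can check whether your mix is near the suggested 90/10 split.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    architectural: bool = False  # needs deep architectural reasoning (hypothetical flag)
    complex_bug: bool = False    # hard, cross-module bug (hypothetical flag)


def route(task: Task) -> str:
    """Escalate hard tasks to Claude Code; keep everything else in Cursor."""
    return "Claude Code" if task.architectural or task.complex_bug else "Cursor"


def escalation_share(tasks: list[Task]) -> float:
    """Fraction of tasks routed to Claude Code (0.0 for an empty backlog)."""
    if not tasks:
        return 0.0
    return sum(route(t) == "Claude Code" for t in tasks) / len(tasks)


if __name__ == "__main__":
    backlog = [Task(f"landing-page tweak {i}") for i in range(9)]
    backlog.append(Task("refactor attribution pipeline", architectural=True))
    for t in backlog:
        print(f"{route(t):12} {t.name}")
    print(f"Escalated: {escalation_share(backlog):.0%}")  # 10% for this backlog
```

Tagging by hand is the weak point of this rule; in practice the flags encode a team's judgment about which tasks are "hard," so the share is best treated as a rough sanity check rather than a target.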

As you build these tools, remember that the software is only as good as the audience it reaches. By combining a world-class AI coding stack with Stormy AI for influencer discovery and outreach, growth teams can achieve true end-to-end automation in their customer acquisition efforts. Whether you are building in Framer or a full-stack Next.js environment, the integration of agentic AI is no longer optional—it is the baseline for 2026.