In the hyper-competitive market of 2026, the traditional go-to-market (GTM) cycle has been replaced by a relentless sprint of agentic iteration. For product and growth teams, the bottleneck is no longer human typing speed but computational orchestration. As the global AI coding assistant market swells to $8.5 billion this year, the winners are those who treat AI capacity as a strategic resource rather than just a utility. Enter the Claude Code GTM strategy: a methodology that leverages high-intelligence agentic tools and tactical scheduling to out-ship competitors by a factor of ten.

The 2026 GTM Landscape: From Autocomplete to Agentic Planning

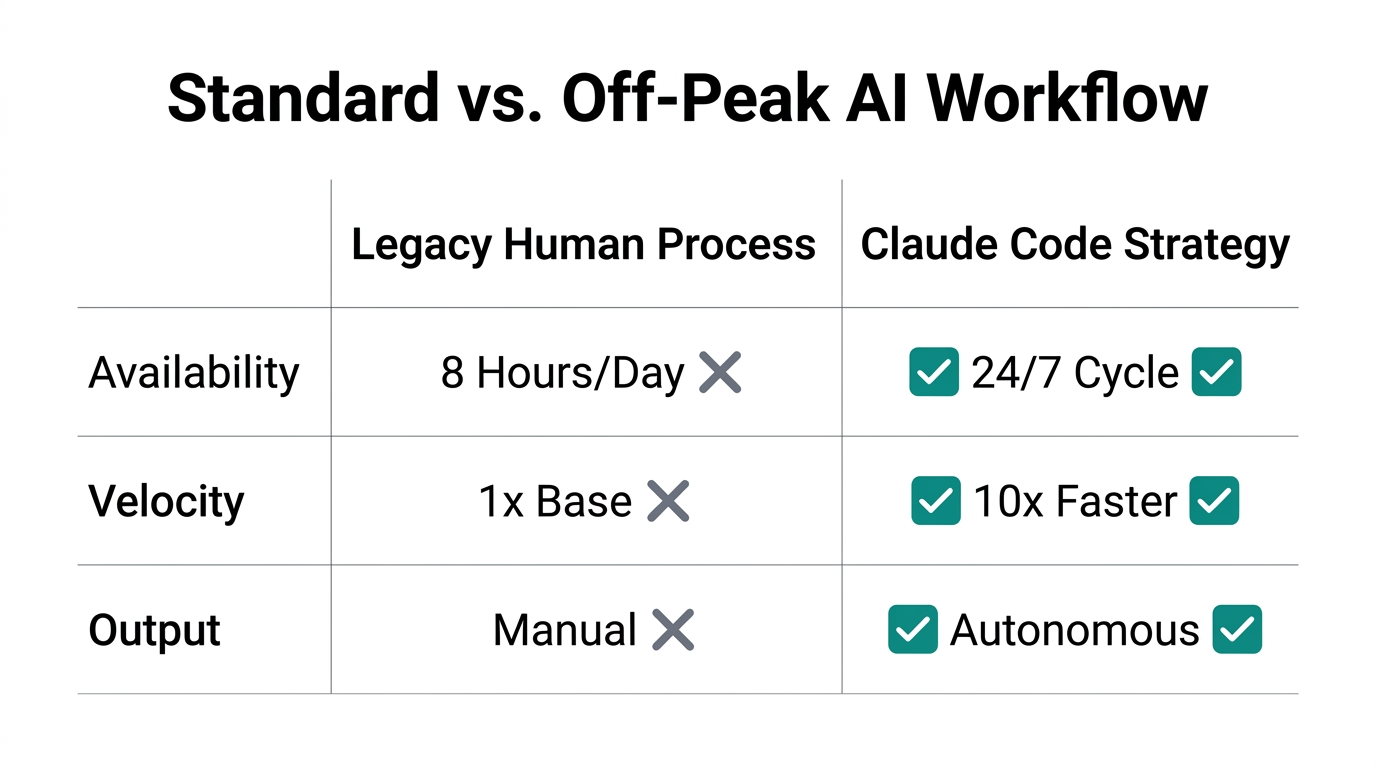

We have officially moved past the era of simple AI autocompletion. In 2026, 84% of developers are utilizing agentic tools that don't just suggest lines of code but execute multi-file refactors autonomously. According to the Stack Overflow 2026 Survey, daily usage among professionals has crossed the 51% threshold, signaling a fundamental shift in how software is built and brought to market.

Revenue figures reflect this shift. While GitHub Copilot maintains a lead with over $2 billion in ARR, specialized tools like Claude Code and Cursor are capturing the high-end "power user" segment. Engineering leaders are now earmarking up to $3,000 per developer annually specifically for AI tooling, recognizing that these platforms are the primary drivers of fast product iteration in 2026.

"The ROI for agentic coding has shifted from theoretical speed to verifiable capital savings, with some tasks seeing a 4,868x ROI compared to human-only development."

The 'Off-Peak' Advantage: Doubling Output via Capacity Scheduling

One of the most overlooked tactics in the Claude Code GTM strategy is the utilization of "off-peak" compute capacity. In a strategic move to manage global server loads, Anthropic recently doubled usage limits for all tiers during weekends and non-peak hours (weekdays outside 8 am–2 pm ET). Smart GTM teams are now scheduling their heaviest architectural refactors and tech-debt cleanups for Saturday mornings.

By timing these compute-heavy tasks for off-peak windows, teams get roughly twice the usage before hitting the standard rate limits that stifle mid-week progress. This allows for a "Weekend Refactor Sprint" where months of technical debt are cleared in 48 hours, leaving the workweek open for customer-facing features and marketing launches. This isn't just about saving money; it's about maximizing uninterrupted flow state for your AI agents.
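A scheduler only needs a small check to decide when a heavy job may start. The following Python sketch assumes the peak window described above (weekdays from 8 am up to 2 pm ET) and treats every other hour, including weekends, as off-peak. `is_off_peak` and `is_off_peak_now` are hypothetical helpers, and the hours are hard-coded assumptions that should be updated if Anthropic changes its window.

```python
from datetime import datetime
from zoneinfo import ZoneInfo  # Python 3.9+; needs the system tz database or the tzdata package

# Assumed peak window from the text: weekdays, 8 am up to (not including) 2 pm US Eastern.
PEAK_START_HOUR = 8
PEAK_END_HOUR = 14


def is_off_peak(dt_et: datetime) -> bool:
    """Return True if dt_et, already expressed in US Eastern time, falls outside the peak window."""
    if dt_et.weekday() >= 5:  # Saturday is 5, Sunday is 6
        return True
    return not (PEAK_START_HOUR <= dt_et.hour < PEAK_END_HOUR)


def is_off_peak_now() -> bool:
    """Convert the current moment to Eastern time (DST-aware) and apply the same check."""
    return is_off_peak(datetime.now(ZoneInfo("America/New_York")))
```

A cron job or CI trigger could call `is_off_peak_now()` and postpone a large refactor run whenever it returns False; neither helper is a Claude Code feature.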

SWE-bench Benchmarks: Why Claude 3.7 Changes Everything

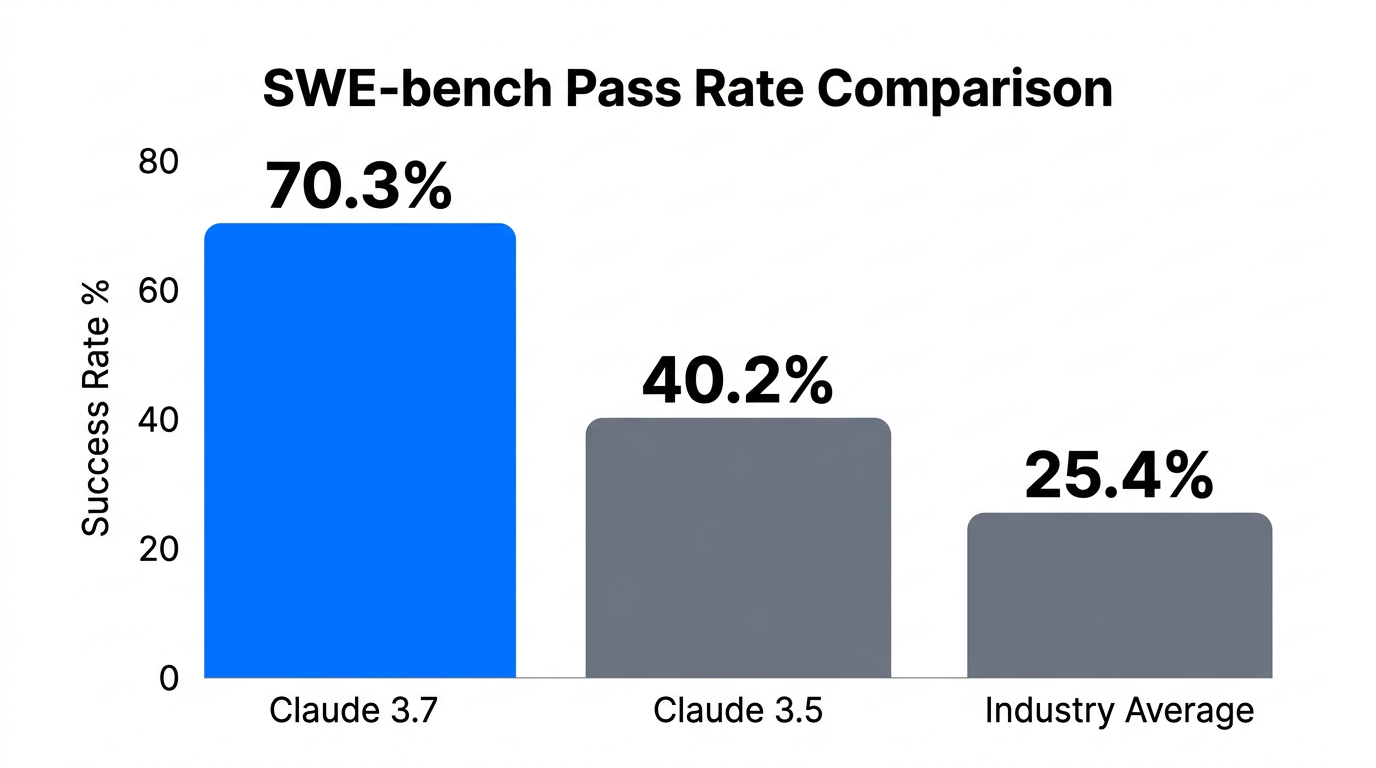

To understand why Claude Code is the current gold standard, and how Claude 3.7 compares with 3.5 for business use, look at the SWE-bench Verified scores. Claude 3.7 Sonnet, equipped with sophisticated scaffolding, achieved a 70.3% score, a massive leap from the 49% scored by Claude 3.5 Sonnet. The gain reflects more than accuracy: it shows the model can handle complex, real-world software engineering tasks without constant human hand-holding.

| Tool | SWE-bench Verified | Primary Benefit | Best For |
|---|---|---|---|
| Claude Code | 70.3% (3.7 Sonnet) | Terminal-native agents | Deep Refactors |
| Windsurf | ~62% | High-speed "Flow" | Solo Developers |
| Cline (BYOK) | 80.8% (with Opus 4.6) | Ultimate Customization | Power Users |
| GitHub Copilot | ~55% | Enterprise Stability | Legacy Teams |

For a GTM team, a 70.3% score suggests that roughly seven out of ten complex technical tickets could be solved by the agent on the first try. SWE-bench tasks are drawn from real GitHub issues in open-source Python projects, so a private backlog may score differently, but the direction is clear: the time between a product manager's "What if?" and a live feature in production shrinks drastically. While tools like Windsurf offer incredible speed for solo developers, Claude Code's terminal-native approach makes it the superior choice for deep, multi-file architectural changes.
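Benchmark scores translate into backlog planning through simple expected-value arithmetic. This sketch assumes a binomial model, where every ticket is an independent task with the same 70.3% first-try success rate; real tickets vary in difficulty, so the output is a rough planning figure rather than a forecast. `first_try_outlook` is a hypothetical helper.

```python
import math


def first_try_outlook(tickets: int, solve_rate: float = 0.703) -> tuple[float, float]:
    """Return (expected first-try solves, standard deviation) for a batch of tickets.

    Binomial model: each ticket is an independent trial with the same success
    probability, an assumption real backlogs will only loosely satisfy.
    """
    expected = tickets * solve_rate
    std_dev = math.sqrt(tickets * solve_rate * (1 - solve_rate))
    return expected, std_dev


expected, spread = first_try_outlook(20)
print(f"About {expected:.1f} of 20 tickets solved on the first try (give or take {spread:.1f})")
```

The standard deviation is the useful part for planning: even at a 70% rate, a 20-ticket sprint can land a couple of tickets either side of the average.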

The 'Thinking Trap': Managing Your Thinking Budget

With great power comes the risk of ballooning costs. Claude 3.7 introduced a hybrid "Thinking Mode" that allows the model to trade speed for reasoning depth. However, this has led to what some call the "Thinking Trap"—where the AI over-engineers a simple solution, consuming thousands of unnecessary tokens and potentially adding technical debt.

To prevent this, high-performance teams cap extended thinking with a thinking budget, set through the `budget_tokens` field of the `thinking` parameter in Anthropic's Messages API. Keeping the budget at the 1,024-token minimum for routine boilerplate and reserving 8,000+ tokens for complex logic keeps your Claude Code GTM strategy lean. It also guards against the kind of $20 "surprise" prompt on a routine UI update seen in recent Anthropic API benchmarks.
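Anthropic's Messages API enables extended thinking through a `thinking` object whose `budget_tokens` must be at least 1,024 and smaller than `max_tokens`. The sketch below builds a request body per task tier. The tier names, the 8,000-token complex-task budget, and `build_request` itself are illustrative assumptions based on the guidance in this section, not an official configuration.

```python
# Hypothetical tiers mirroring the guidance above; only the request fields are real API names.
MIN_THINKING_BUDGET = 1024  # smallest budget_tokens value the Messages API accepts

THINKING_BUDGETS = {
    "routine": MIN_THINKING_BUDGET,  # boilerplate, small UI updates
    "complex": 8000,                 # multi-file logic, architectural changes
}


def build_request(prompt: str, tier: str, max_tokens: int = 16000) -> dict:
    """Build a Messages API request body with extended thinking capped for the task tier."""
    budget = THINKING_BUDGETS[tier]
    if budget >= max_tokens:
        # The API requires budget_tokens to be smaller than max_tokens.
        raise ValueError(f"max_tokens ({max_tokens}) must exceed the thinking budget ({budget})")
    return {
        "model": "claude-3-7-sonnet-20250219",
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget},
        "messages": [{"role": "user", "content": prompt}],
    }
```

With the official `anthropic` Python SDK, the dictionary can be unpacked into a call such as `client.messages.create(**build_request(prompt, "routine"))`. Keeping the budgets in one table makes the cost ceiling for each kind of task easy to review.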

"Setting a hard limit on thinking tokens isn't just about cost—it's about forcing the AI to find the most elegant, simple solution rather than an over-engineered one."

Case Study: How Faros AI Compressed Weeks of Refactoring into an Afternoon

A prime example of fast product iteration in 2026 comes from Faros AI. Faced with a massive technical debt cleanup involving over 200 files and bloated Docker images, the team utilized Claude Code’s "Plan-Execute" loops. Instead of manually editing files, they directed the agent to analyze the architecture and execute the refactor autonomously.

The result? They reduced Docker image sizes by 50% and modernized their entire backend in a single afternoon—a task that would typically consume weeks of senior developer time. This agility allows the GTM team to pivot their technical stack at the speed of market trends, a crucial advantage in the 2026 landscape.

Tip: Use Claude Code's `/compact` command when your session reaches 70% of its context capacity. It summarizes the conversation history and reduces the "input tax" on every subsequent message, keeping a $400/week burn from spiraling.

The Optimization Stack: Scaling Down to $15/Week

For heavy users, raw API usage can push costs past $400 a week. To bring this down to a sustainable $15/week, savvy teams are adopting a specific "Optimization Stack":

- Tarmac-Cost: A predictive billing tool that intercepts prompts to provide cost estimates before they run.

- Cortex-TMS: A file-tiering system that prevents Claude from reading your entire codebase (like node_modules) on every prompt, reducing session costs by up to 94.5%.

- Hybrid Subscription Models: Moving from the $20 Pro plan to specialized enterprise tiers for power users, which caps the cost of what would otherwise be $1,600+ in API fees.
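Cortex-TMS's own configuration format is not described here, so this sketch only illustrates the general file-tiering idea: "cold" paths such as node_modules are never attached to a prompt, "hot" paths always are, and everything else is loaded on demand. The patterns, tier names, and helper functions are hypothetical.

```python
from fnmatch import fnmatch

# Hypothetical tier rules; fnmatch's "*" also matches "/", so "node_modules/*" covers nested files.
COLD_PATTERNS = ["node_modules/*", "*/node_modules/*", "dist/*", ".git/*", "*.lock"]
HOT_PATTERNS = ["src/*", "*.md"]


def tier_for(path: str) -> str:
    """Classify a repo-relative path: 'cold' (never sent), 'hot' (always sent) or 'warm' (on demand)."""
    if any(fnmatch(path, pattern) for pattern in COLD_PATTERNS):
        return "cold"
    if any(fnmatch(path, pattern) for pattern in HOT_PATTERNS):
        return "hot"
    return "warm"


def default_context(paths: list[str]) -> list[str]:
    """Keep only the hot files that would be attached to every prompt."""
    return [path for path in paths if tier_for(path) == "hot"]
```

Cold rules are checked first, so a dependency folder nested under `src/` still stays out of the prompt; every file kept out of the default context is a file whose tokens are not re-sent each time.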

By implementing these tools, you turn the "infinite cost potential" of raw API access into a predictable fixed expense, allowing for better budget forecasting during GTM planning.
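The `/compact` tip earlier in this section works because each message re-sends the accumulated conversation history, so the input consumed over a long session grows roughly quadratically with its length. This sketch simulates that "input tax" under stated assumptions: a 200,000-token context window (Claude 3.7 Sonnet's), a fixed 4,000 new tokens per turn, a 5,000-token summary after compaction, and Claude 3.7 Sonnet's $3 per million input-token list price with prompt caching ignored. The turn and summary sizes are illustrative guesses, not measured Claude Code behavior, and `history_input_tokens` is a hypothetical helper.

```python
from typing import Optional

PRICE_PER_MTOK = 3.00  # assumed Claude 3.7 Sonnet input price (USD per million tokens); caching ignored


def history_input_tokens(turns: int, tokens_per_turn: int, context_window: int = 200_000,
                         compact_at: Optional[float] = 0.70, summary_tokens: int = 5_000) -> int:
    """Sum the history tokens re-sent as input across a session (new-turn tokens left out for simplicity)."""
    history = 0
    total = 0
    for _ in range(turns):
        total += history            # the "input tax": all prior context is re-sent before the new turn
        history += tokens_per_turn  # this turn's prompt, tool output and reply join the history
        if compact_at is not None and history >= compact_at * context_window:
            history = summary_tokens  # assumed size of the summary left after /compact
    return total


for label, threshold in [("no compaction", None), ("compact at 70%", 0.70)]:
    tokens = history_input_tokens(turns=40, tokens_per_turn=4_000, compact_at=threshold)
    print(f"{label}: {tokens:,} input tokens, about ${tokens / 1_000_000 * PRICE_PER_MTOK:.2f}")
```

Under these assumptions, compaction trims the 40-turn session from 3,120,000 to 2,445,000 re-sent tokens, and the gap widens the longer the session runs after each compaction.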

Integrating AI Efficiency Across GTM

The efficiency gains seen in technical development are now bleeding into other areas of the GTM motion. Just as developers use Claude Code to find and fix bugs, marketing teams are using Stormy AI to source and manage creator relationships. The logic is identical: use high-intelligence AI agents to handle the manual discovery and outreach, so the human team can focus on creative strategy.

Whether you are automating a backend refactor or using an AI agent to handle creator outreach while you sleep, the goal is the same: compress the time to market. In 2026, the competitive edge belongs to the "AI-First" organization that treats every workflow as an opportunity for agentic optimization across platforms like TikTok and LinkedIn.

"If your GTM team isn't iterating on product and marketing daily using agents, you're essentially racing a Ferrari on a bicycle."

The Final Playbook for Fast Iteration

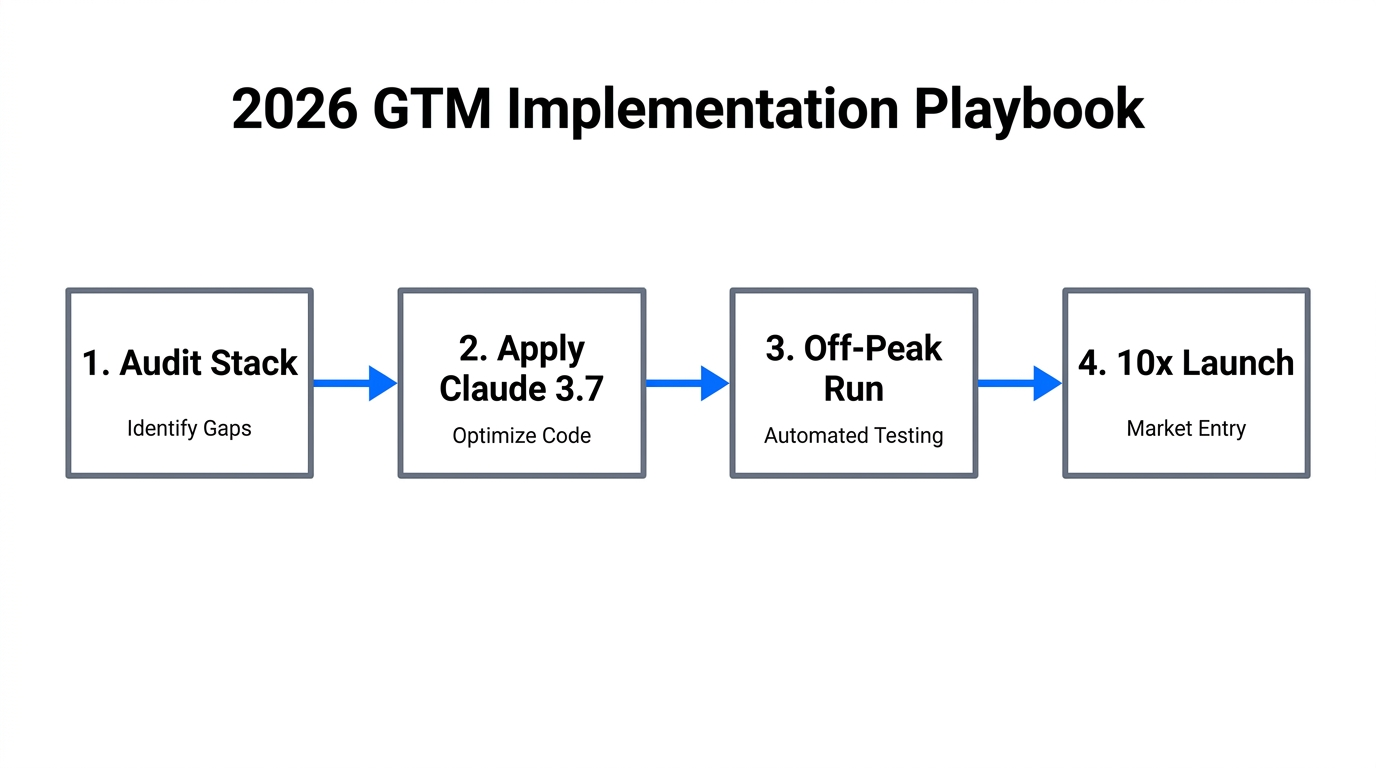

To implement the Claude Code GTM strategy successfully, follow this sequence:

- Audit your technical debt: Identify the 200+ file refactors that have been stalled for months.

- Subscribe to Claude Max: Move past the Pro rate limits to ensure your team has the "message runway" needed for complex tasks.

- Schedule a Weekend Sprint: Execute your largest architectural changes during Anthropic's off-peak windows to take advantage of doubled capacity.

- Set Thinking Budgets: Cap extended thinking with the API's `budget_tokens` setting to keep the AI focused on simplicity and cost-efficiency.

- Extend to Marketing: Pair your streamlined dev cycle with tools like Stormy AI to ensure your outreach and creator discovery move as fast as your code.

By leveraging off-peak AI efficiency and high-intelligence models like Claude 3.7, you aren't just shipping code faster—you're building a resilient GTM machine that can adapt to the 2026 market in real-time. The era of the three-month roadmap is dead; the era of the afternoon refactor is here.