By mid-2026, the era of the "generic prompt" has officially died. If you are still asking an LLM to "write a LinkedIn post for a SaaS founder," you aren't just behind the curve; you are practically invisible in a market flooded with AI-generated noise. The industry has pivoted from Conversational AI to Operational AI, where the most valuable skill in Go-To-Market (GTM) engineering is no longer prompting, but Context Engineering [source: Forbes].

With Anthropic’s Claude surging to a 32% enterprise market share this year, the focus has shifted toward building a persistent "GTM Context Stack." This isn't just about feeding the model a few PDF brand guidelines. It’s about creating a real-time data loop where Claude Code (the terminal-native agentic tool) has direct access to your Slack conversations, CRM logs, and customer call transcripts. When your internal data becomes the model's environment, the output moves from "robotic fluff" to surgical, revenue-driving feedback.

"Context Engineering is the act of turning your company's entire historical memory into a living database that Claude can query, reason over, and act upon in real-time."

Why Context Engineering is the Most In-Demand GTM Skill Set of 2026

In 2026, the primary differentiator for high-growth companies is the quality of their "Context Stacking." Anthropic’s annualized revenue run-rate reached $14 billion in February 2026, driven largely by specialized tools like Claude Code, which alone reached a $2.5 billion run-rate. Companies are no longer buying chatbots; they are building GTM Agents.

The shift to AI Revenue Ops means that growth teams are abandoning traditional UIs for API-first workflows. Instead of clicking through a dashboard, a 2026 Growth Engineer works with the stack through a terminal or a database. This allows for "Agentic Performance Marketing," where swarms of agents monitor Meta Ads or TikTok Ads Manager CPMs in real time and auto-pause creative when deep context shows it has drifted from the brand.

The Scaleport Case Study: Replacing Strategy Departments with a Context Stack

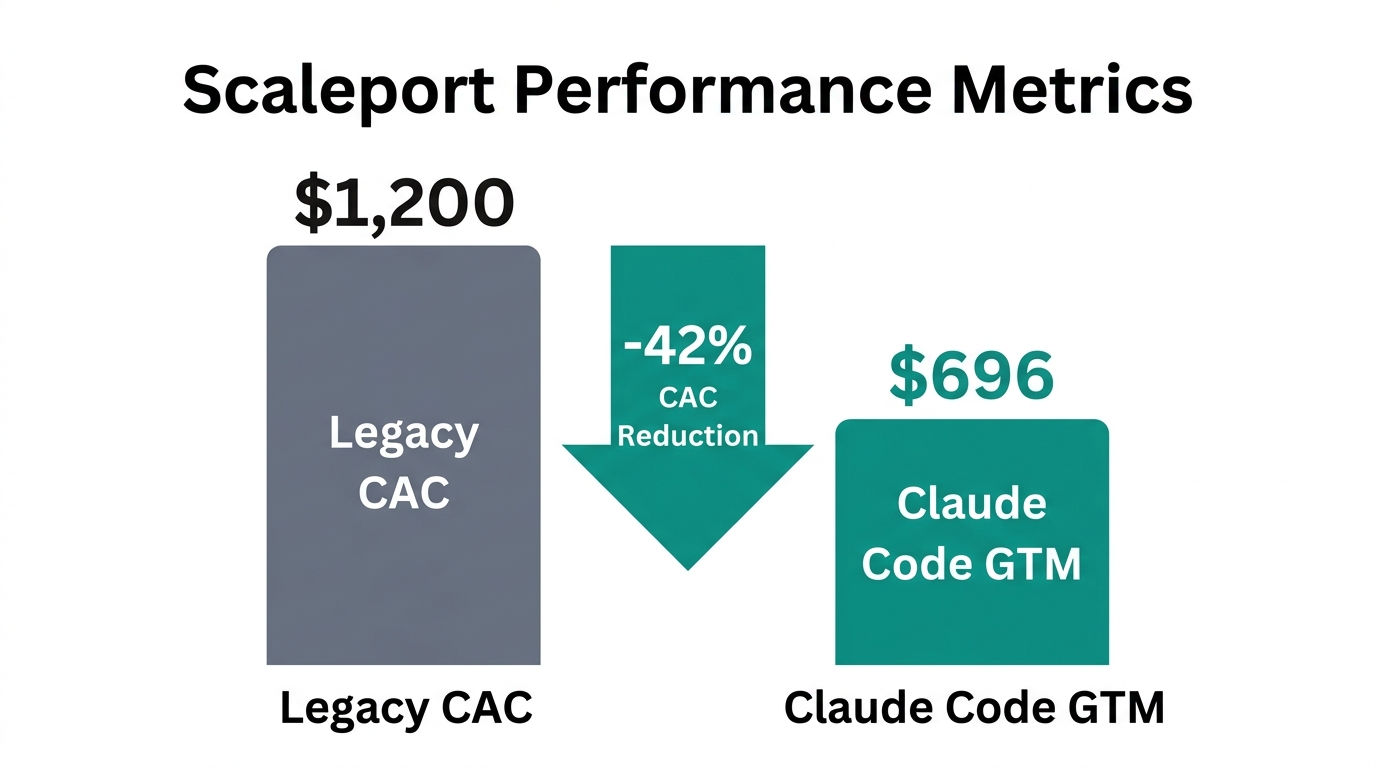

One of the most radical examples of this shift comes from Scaleport, an e-commerce agency that effectively replaced its strategy department with a Claude-native context stack. They recognized that human account managers often lose institutional memory—forgetting a specific client request from six months ago or missing a subtle shift in market sentiment within a Slack channel.

The Scaleport team built a system that used Make and n8n to scrape every Slack message, Asana task, and Zoom transcript into a PostgreSQL database hosted on Railway. By connecting this database to Claude via the Model Context Protocol (MCP), they created an agent named "Orion."

Orion wasn't just a bot; it was a participant. It managed meeting agendas, flagged when a GTM plan contradicted previous client goals, and even handled accountability by cross-referencing Slack promises against Asana deliverables. The result? A 94% client retention rate. The AI quite literally knew the business better than the account managers did because it possessed the perfect context of every interaction.

The 'Wade Foster' Warm-Up: A Blueprint for Strategic Onboarding

Before launching a single campaign, the most successful GTM engineers in 2026 use a technique known as the "Wade Foster" Warm-Up (named after the Zapier CEO's focus on strategic depth). This is a prompting and stacking blueprint that feeds the model up to five years of ICP (Ideal Customer Profile) data before asking for a single recommendation.

A 2026 Sonnet model has a 200,000-token context window, allowing you to upload nearly three years of meeting transcripts into a single Claude Project. The blueprint involves three stages:

- The Historical Dump: Feeding the agent every successful (and failed) pitch deck, win/loss report, and customer feedback survey.

- The Belief Friction Mapping: Asking Claude to identify exactly where customers are hesitant based on call transcripts—a concept known as "Belief Friction" detection.

- The Execution Protocol: Only after the model understands the *why* do you allow it to access Stormy AI for influencer sourcing or Instantly for cold outreach.
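Before the Historical Dump, it can help to check whether the material fits in the 200,000-token window quoted above. The sketch below is a rough budgeting aid, not an official tokenizer: the 4-characters-per-token ratio is a common heuristic for English text, and the `Document` class, the `reserve` parameter, and both function names are hypothetical.

```python
from dataclasses import dataclass

# Assumption: ~4 characters per token is a rough heuristic for English text,
# not an exact tokenizer count.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW_TOKENS = 200_000  # window size quoted in the article


@dataclass
class Document:
    name: str
    text: str


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def select_within_budget(
    docs: list[Document],
    budget: int = CONTEXT_WINDOW_TOKENS,
    reserve: int = 20_000,
) -> tuple[list[Document], list[Document]]:
    """Greedily keep documents, in the order given, until the budget is used.

    `reserve` holds back room for instructions and the model's reply.
    Returns (kept, skipped).
    """
    remaining = budget - reserve
    kept, skipped = [], []
    for doc in docs:
        cost = estimate_tokens(doc.text)
        if cost <= remaining:
            kept.append(doc)
            remaining -= cost
        else:
            skipped.append(doc)
    return kept, skipped
```

Ordering the input by priority (for example, win/loss reports first) decides what survives when the archive is larger than the window; anything in `skipped` is a candidate for summarizing or for storage in the database instead.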

"AI works best when you feed it call transcripts, not generic prompts. Primary sources in, quality content out. That’s where the magic happens." — Maja Voje, GTM Strategist

Technical Workflow: Building Your GTM Context Stack with Railway and n8n

To implement this, you need more than just a Claude Pro subscription. You need a persistent infrastructure. In 2026, growth teams are using Railway to host persistent Claude agents that function as "always-on" market researchers.

| Feature | Claude (Opus 4.6) | OpenAI (GPT-5.2) | Google (Gemini 3 Pro) |
|---|---|---|---|
| Best For | Strategic reasoning, brand voice | Data analysis, complex math | Ecosystem integration |
| Context Window (tokens) | 200K - 1M | 128K - 400K | 1M - 2M |
| Core Philosophy | Operational/Agentic | Performance/Logic | Distribution/Multimodal |
| GTM Sentiment | "Better Copywriter" | "Better Calculator" | "Best for Bulk SEO" |

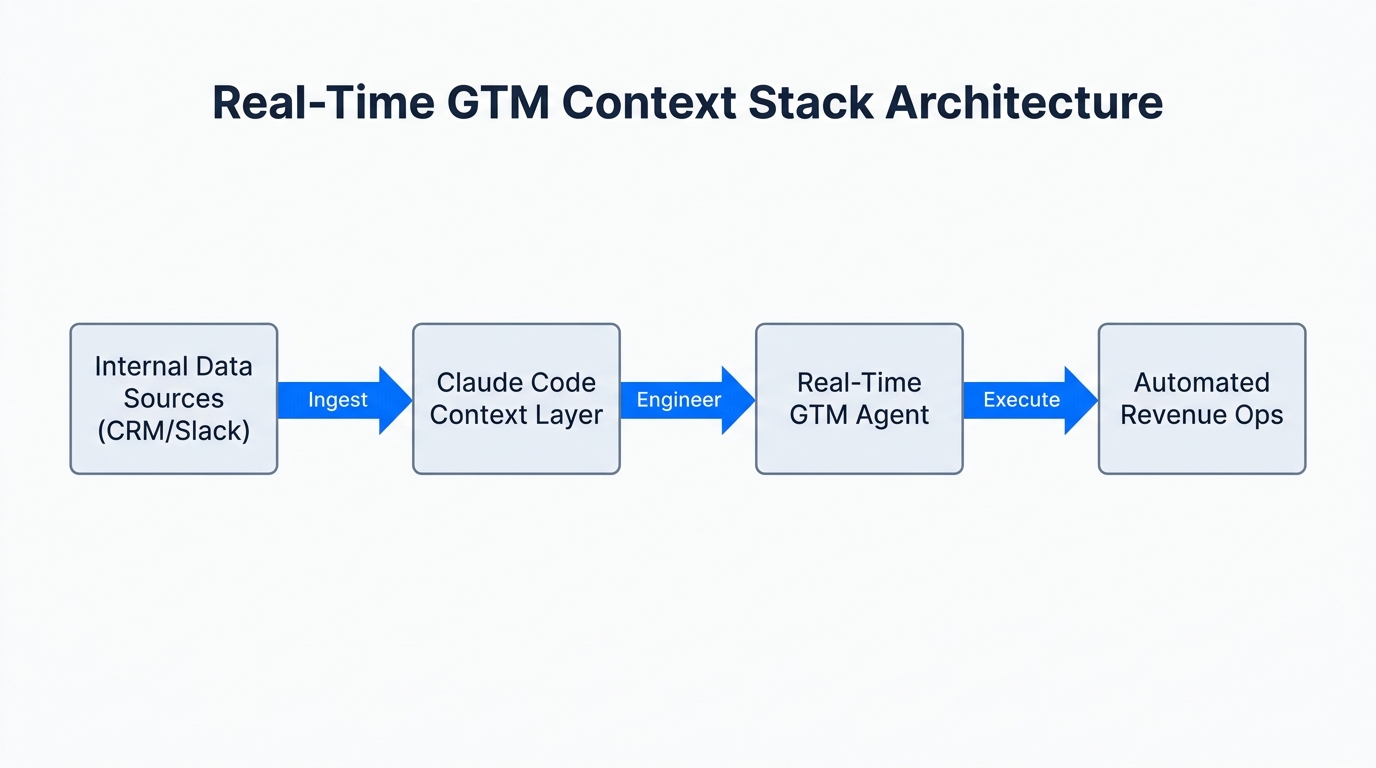

The workflow for 2026 GTM Engineering generally follows this path:

Step 1: Data Ingestion

Use n8n to create a webhook that listens for HubSpot or Salesforce updates. Every time a deal is moved or a note is added, push that text into a Postgres database on Railway. This creates a centralized "Context Warehouse."
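As a minimal sketch of the storage half of this step, the function below validates an incoming webhook payload and appends it to a single events table. Everything here is an assumption for illustration: the payload fields (`source`, `deal_id`, `event_type`, `body`), the `crm_events` table, and the use of Python's built-in `sqlite3` as a local stand-in for the Postgres instance on Railway.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical schema for the "Context Warehouse"; sqlite3 stands in for Postgres.
SCHEMA = """
CREATE TABLE IF NOT EXISTS crm_events (
    id INTEGER PRIMARY KEY,
    source TEXT NOT NULL,
    deal_id TEXT NOT NULL,
    event_type TEXT NOT NULL,
    body TEXT NOT NULL,
    received_at TEXT NOT NULL
)
"""

REQUIRED_FIELDS = ("source", "deal_id", "event_type", "body")


def store_event(conn: sqlite3.Connection, payload: dict) -> int:
    """Validate a webhook payload and append it as one row; return the row id."""
    missing = [key for key in REQUIRED_FIELDS if not payload.get(key)]
    if missing:
        raise ValueError(f"payload missing fields: {missing}")
    cursor = conn.execute(
        "INSERT INTO crm_events (source, deal_id, event_type, body, received_at) "
        "VALUES (?, ?, ?, ?, ?)",
        (
            payload["source"],
            payload["deal_id"],
            payload["event_type"],
            payload["body"],
            datetime.now(timezone.utc).isoformat(),  # UTC timestamp on arrival
        ),
    )
    conn.commit()
    return cursor.lastrowid


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    row_id = store_event(conn, {
        "source": "hubspot",
        "deal_id": "deal-123",
        "event_type": "note_added",
        "body": "Client asked to pause the Q3 launch.",
    })
    print("stored row", row_id)
```

Keeping the table append-only, with a timestamp on every row, preserves the history that later steps rely on; the parameterized `INSERT` also keeps free-text CRM notes from being interpreted as SQL.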

Step 2: MCP Server Integration

The Model Context Protocol (MCP) has become the industry standard for connecting models to external tools and data. By adding an MCP server to Claude Code, the agent can read and write to your CRM without intermediate tools like Zapier. This reduces latency to under four seconds for complex GTM tasks.
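Whatever MCP server you choose, a cautious first step is to give the agent read access before write access. The sketch below is not MCP code and does not use any MCP library; it only illustrates, under assumptions, the kind of guarded query function such a server might wrap. The `run_readonly_query` name and the `max_rows` cap are hypothetical, and `sqlite3` again stands in for Postgres.

```python
import sqlite3


def run_readonly_query(
    conn: sqlite3.Connection, sql: str, max_rows: int = 50
) -> list[dict]:
    """Run a single SELECT statement and return at most `max_rows` rows as dicts.

    This string check is a coarse guard for illustration only. In production,
    a read-only database role is the more reliable control.
    """
    statement = sql.strip().rstrip(";").strip()
    if not statement.lower().startswith("select") or ";" in statement:
        raise PermissionError("only single SELECT statements are allowed")
    cursor = conn.execute(statement)
    columns = [col[0] for col in cursor.description]
    return [dict(zip(columns, row)) for row in cursor.fetchmany(max_rows)]
```

Capping the row count keeps a broad query from flooding the context window, and returning dictionaries keyed by column name gives the model labeled fields rather than bare tuples.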

Step 3: Terminal-Native Action

Using Claude Code, you can run terminal commands like `/plugin marketplace add gtmagents/gtm-agents` to pull in open-source libraries of GTM Skills. These include 137 specific sales triggers and outbound automation scripts found on GitHub.

Troubleshooting 'Context Poisoning' and the Sea of Sameness

While Context Engineering is powerful, it is not without risks. The most common failure in 2026 is "Context Poisoning." This occurs when a team uploads conflicting GTM strategies—for example, a 2024 aggressive growth plan and a 2026 profitability-focused plan—into the same context window. Claude often gets confused, hallucinating mid-funnel tactics that are two years out of date.
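One way to reduce this risk, sketched here under assumptions, is to tag every strategy document with a topic and an effective date, then pass only the newest document per topic into the context window. The `StrategyDoc` fields and the `latest_per_topic` helper are hypothetical; real documents would need this metadata added at ingestion time.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class StrategyDoc:
    topic: str        # hypothetical tag, e.g. "gtm-strategy"
    effective: date   # date the plan took effect
    text: str


def latest_per_topic(docs: list[StrategyDoc]) -> list[StrategyDoc]:
    """Keep only the most recent document for each topic, sorted by topic."""
    latest: dict[str, StrategyDoc] = {}
    for doc in docs:
        current = latest.get(doc.topic)
        if current is None or doc.effective > current.effective:
            latest[doc.topic] = doc
    return sorted(latest.values(), key=lambda d: d.topic)


if __name__ == "__main__":
    docs = [
        StrategyDoc("gtm-strategy", date(2024, 1, 15), "Aggressive growth plan"),
        StrategyDoc("gtm-strategy", date(2026, 1, 10), "Profitability-focused plan"),
        StrategyDoc("brand-voice", date(2025, 6, 1), "Brand voice guide"),
    ]
    for doc in latest_per_topic(docs):
        print(doc.topic, doc.effective, doc.text)
```

Older plans do not have to be deleted; they can stay in the database as history, as long as they are labeled as superseded whenever they are shown to the model.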

Furthermore, there is the "Sea of Sameness." With 88% of enterprises now retaining Anthropic for their creative needs, according to SociallyIn, B2B ads are beginning to sound identical, a phenomenon critics call "The Anthropic Voice." To combat this, you must use "Primary Source Stacking." Instead of asking Claude to "be creative," feed it raw, unpolished transcripts of your founders talking or customer rants from Reddit. This keeps the output grounded in a unique human perspective.

5-Minute Agent Deployment Playbook

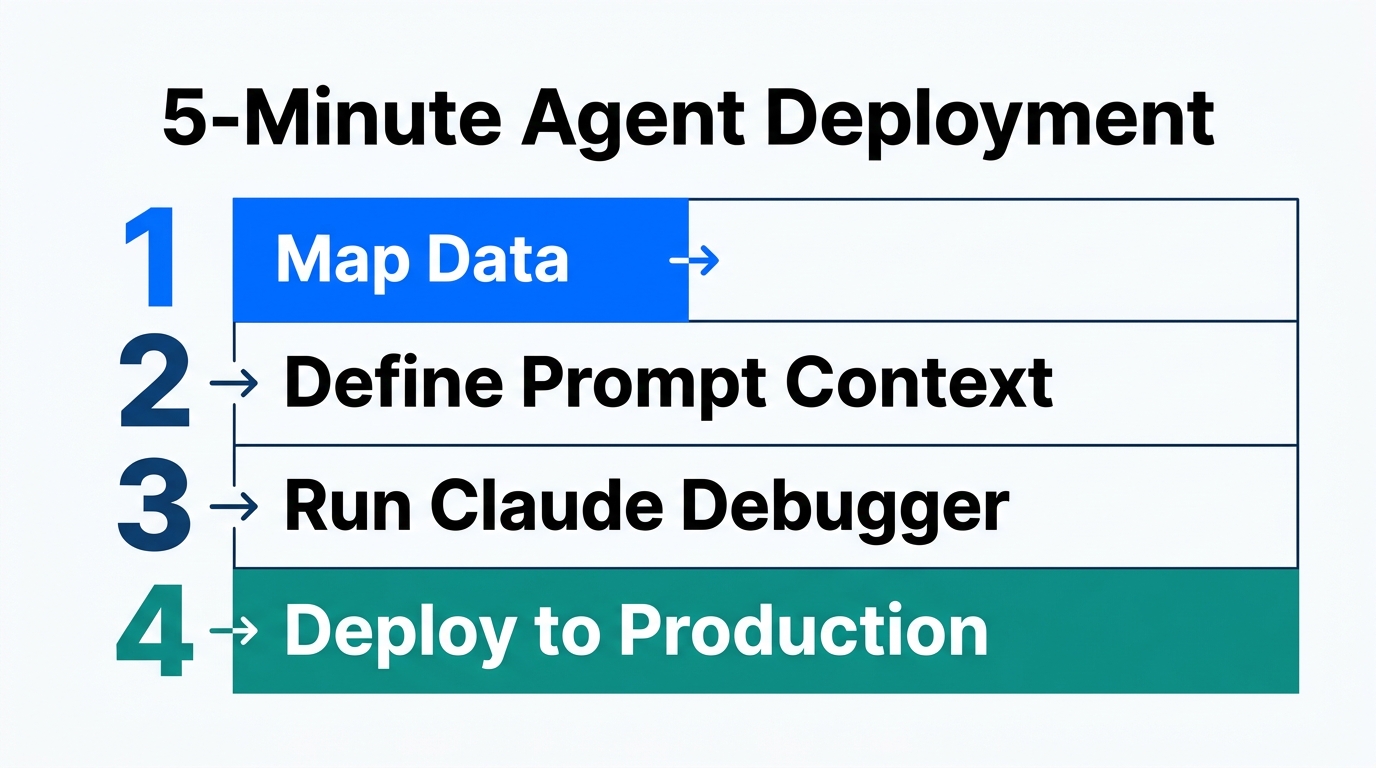

If you are ready to move beyond the browser and into GTM Engineering, follow this terminal-native setup:

- Install Claude Code: Run `npm install -g @anthropic-ai/claude-code`.

- Authenticate: Use `claude auth login` to connect your Max plan (the $100/mo tier is required for high-volume context windows).

- Bootstrap Your Brand: Run the `/bootstrap` command to onboard Claude to your specific brand voice, ICP data, and 2026 revenue goals.

- Connect Your Stack: Use `claude mcp add hubspot-server --api-key=YOUR_KEY` to bridge the gap between your CRM and your agent.

Once deployed, your agent can begin executing "The Competitor Switch"—a play where Claude monitors live feeds for mentions of competitors and prepares a counter-angle in a Slack memo before the prospect has even finished their coffee. Teams using this type of agent-driven outbound, personalized via Claude’s "Creative Hook" mapping, are seeing a 4.8% meeting-booked rate, compared to the 0.6% industry average for templated automation, according to campaign data from Stormy AI.

Conclusion: The Future of AI Revenue Ops

The transition from marketing to GTM Engineering is complete. In 2026, the winners aren't those with the biggest budgets, but those with the cleanest context. By utilizing tools like Claude Code and platforms like HockeyStack for pipeline prediction, brands are reducing labor costs by shifting from human-heavy BDR teams (costing ~$4.50 per lead) to agent swarms (costing ~$0.12 per qualified lead).
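To put the two cost figures above side by side, here is a quick worked calculation. It takes both numbers at face value and treats them as comparable, which is an assumption: the article quotes the human cost per lead and the agent cost per qualified lead, so the result is only a rough guide. The `cost_comparison` helper is hypothetical.

```python
def cost_comparison(human_cost: float, agent_cost: float) -> tuple[float, float]:
    """Return (cost ratio, fractional reduction) between two per-lead costs."""
    ratio = human_cost / agent_cost
    reduction = (human_cost - agent_cost) / human_cost
    return ratio, reduction


# Figures quoted in the article: ~$4.50 (BDR team) vs ~$0.12 (agent swarm).
ratio, reduction = cost_comparison(4.50, 0.12)
print(f"{ratio:.1f}x cheaper, {reduction:.1%} lower cost per lead")
# prints "37.5x cheaper, 97.3% lower cost per lead"
```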

Whether you are using Stormy AI to find the perfect niche creators for a contextual campaign or building custom MCP servers on Railway, the goal is the same: Operationalize your context. Don't just prompt Claude; build a stack that allows Claude to live inside your business. That is the only way to achieve surgical feedback and sustainable growth in the age of Operational AI.