The generative AI gold rush is in full swing, but behind every viral video generator and AI-powered tool is a complex, high-stakes engineering challenge. For technical founders, the shift from a traditional SaaS model to a generative AI infrastructure model isn't just a matter of swapping databases; it's a fundamental change in how compute is consumed and how margins are managed. Building an AI video generator takes more than a clever prompt. It requires a deep understanding of GPU orchestration, latency optimization, and the brutal reality of hardware costs. In this guide, we'll take a technical deep dive into the stack used by successful founders like Nico of Neural Frames, who scaled to $100,000 in monthly revenue by mastering the intersection of computer vision and scalable cloud architecture.

The Modern AI SaaS Tech Stack

The speed of development in the AI space is unprecedented. Founders are no longer spending months on boilerplate code; they are using AI development tools to ship MVPs in weeks. The core of a modern AI SaaS tech stack typically splits into three distinct layers: the rapid-response frontend, the orchestration backend, and the AI-native code editor used to build both.

For the frontend, Next.js has become a de facto standard. Its support for server-side rendering (SSR) and edge functions is valuable for AI apps that need to display high-resolution media quickly. The backend is dominated by Python. Whether you use FastAPI or Django, Python remains the gateway to the AI ecosystem, providing direct access to libraries like PyTorch and TensorFlow that power generative AI infrastructure.
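Because a video render can take minutes, an orchestration backend commonly accepts a request, returns a job ID right away, and lets the client poll for the result. Below is a minimal, framework-agnostic sketch of that pattern in standard-library Python. `JobQueue`, `Job`, and the `generate` callable are hypothetical names: in a real app a FastAPI or Django view would call `submit()` and `status()`, and `generate` would call your GPU worker or a managed API.

```python
import queue
import threading
import uuid
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Job:
    """One generation request and its lifecycle state."""
    job_id: str
    prompt: str
    status: str = "queued"  # queued -> running -> done | failed
    result: Optional[str] = None
    error: Optional[str] = None


class JobQueue:
    """In-memory job queue: submit() returns at once, a background thread does the slow work."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate  # hypothetical hook into a GPU worker or managed API
        self._jobs: Dict[str, Job] = {}
        self._lock = threading.Lock()
        self._pending: "queue.Queue[str]" = queue.Queue()
        threading.Thread(target=self._worker, daemon=True).start()

    def submit(self, prompt: str) -> str:
        """Register a job and return its ID without waiting for the render."""
        job = Job(job_id=uuid.uuid4().hex, prompt=prompt)
        with self._lock:
            self._jobs[job.job_id] = job
        self._pending.put(job.job_id)
        return job.job_id

    def status(self, job_id: str) -> Job:
        """Look up a job; raises KeyError for unknown IDs."""
        with self._lock:
            return self._jobs[job_id]

    def wait_until_idle(self) -> None:
        """Block until every submitted job has finished (handy in tests)."""
        self._pending.join()

    def _worker(self) -> None:
        # Single worker thread: processes jobs one at a time, in submission order.
        while True:
            job_id = self._pending.get()
            with self._lock:
                job = self._jobs[job_id]
                job.status = "running"
            try:
                result = self._generate(job.prompt)
                with self._lock:
                    job.result, job.status = result, "done"
            except Exception as exc:  # record the failure instead of killing the worker
                with self._lock:
                    job.error, job.status = str(exc), "failed"
            finally:
                self._pending.task_done()


# Usage: a POST handler would call submit(), a GET handler would call status().
jobs = JobQueue(generate=lambda prompt: f"render-of:{prompt}")
job_id = jobs.submit("neon city at night")
jobs.wait_until_idle()
print(jobs.status(job_id).status, jobs.status(job_id).result)  # done render-of:neon city at night
```

In-memory state disappears on restart and does not scale past one process, so a production setup would typically move the queue and job records into something durable such as Redis or a database.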

The real secret weapon for solo founders and lean teams is Cursor. By bringing an AI-native coding environment into the development cycle, founders report a "superhuman" boost in productivity. When you are building niche viral apps, the ability to refactor an entire backend or implement a new API integration in minutes rather than hours is what allows a tiny team to outcompete VC-backed giants. That same speed matters on the growth side, where Stormy's AI search across TikTok, YouTube, and Instagram can surface creators who take your tool viral before the competition even finishes its documentation.

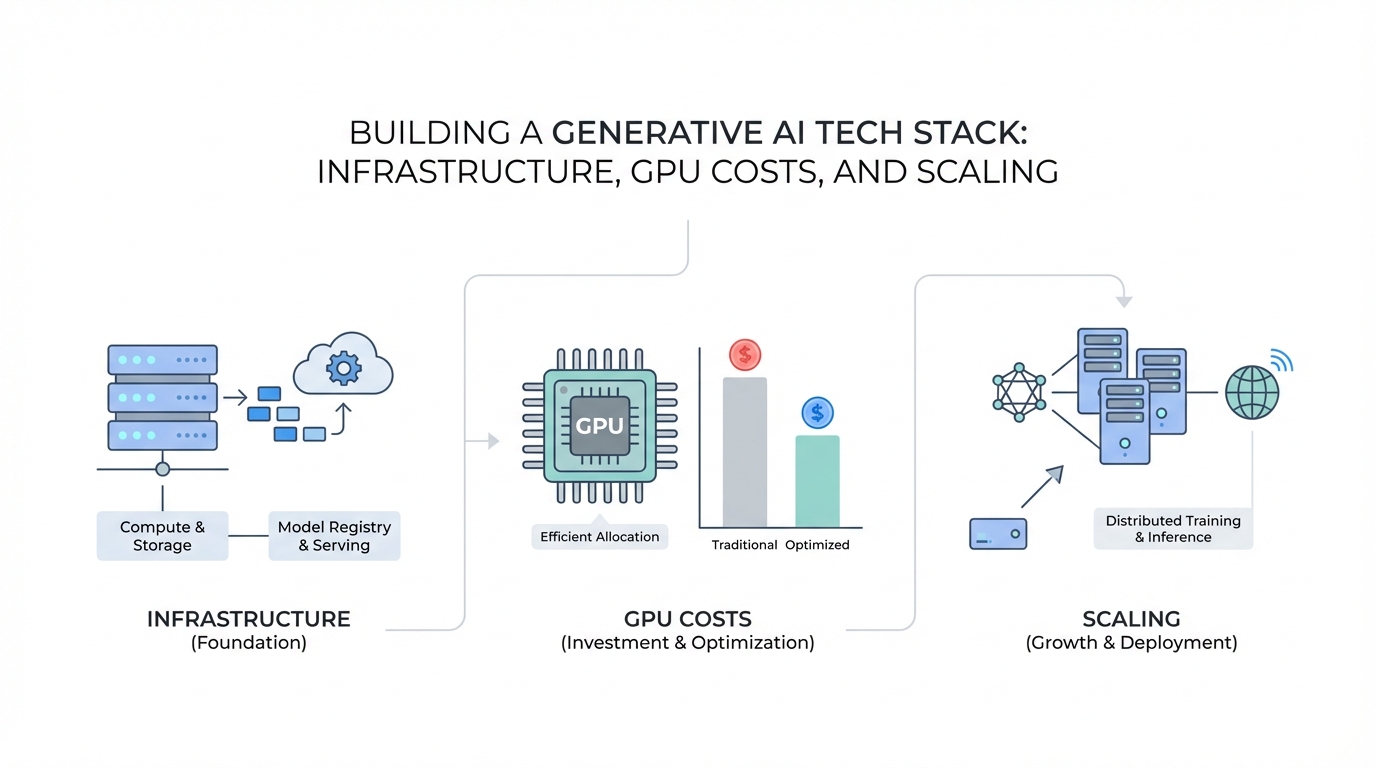

Infrastructure Management: RunPod vs. Managed APIs

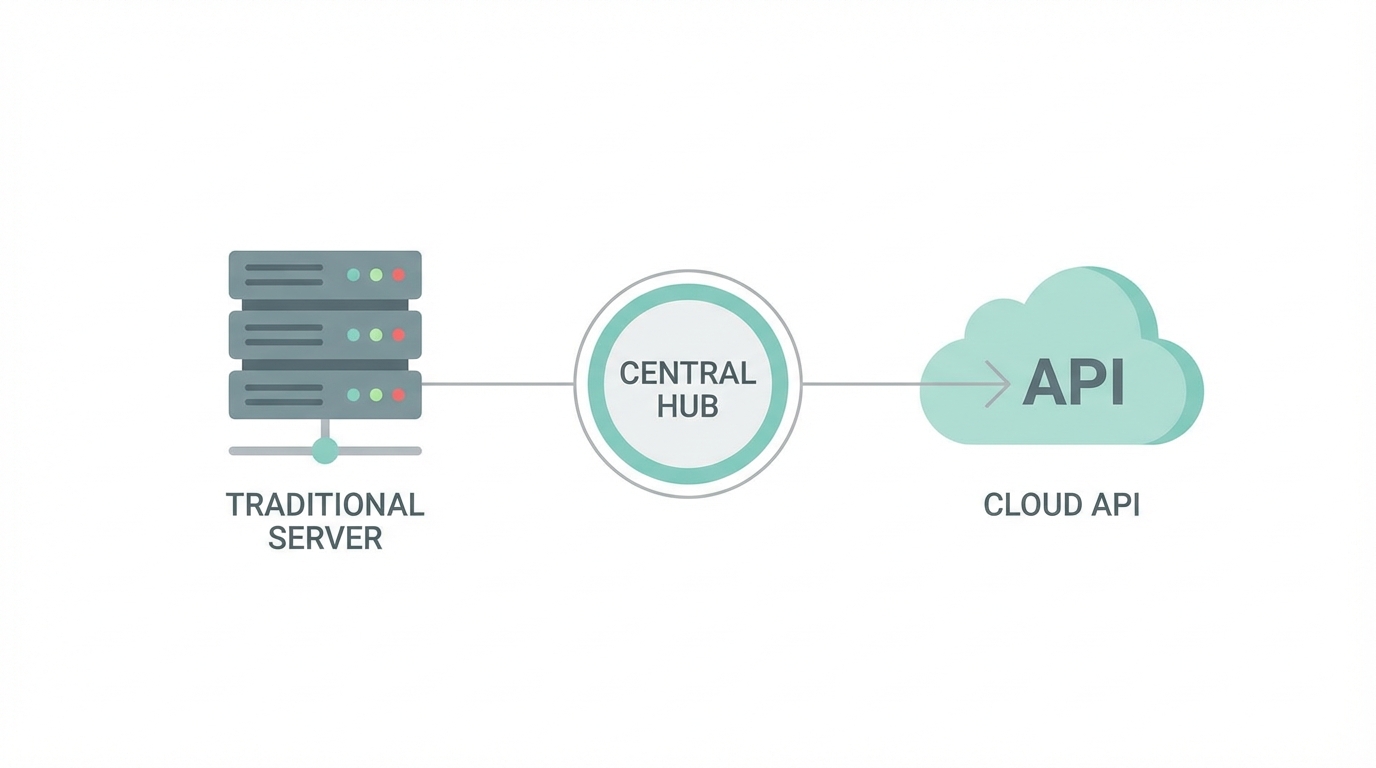

One of the most critical decisions in GPU hosting for startups is the "build vs. buy" question for compute. Do you host your own models on raw GPUs, or do you rely on managed APIs? The answer is usually a hybrid, held together by a GPU orchestration layer that decides where each job runs.

Founders building high-traffic platforms like Neural Frames often use RunPod for their raw compute needs. RunPod lets you rent high-end GPUs (like the NVIDIA A100 or H100) on a per-second basis, which suits custom-trained models or specialized workflows that need direct hardware access. The downside of raw GPU hosting is the management overhead: you are responsible for handling cold starts, scaling worker nodes, and monitoring the health of the hardware.
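Per-second billing turns cold starts into an explicit line item. The sketch below estimates the GPU cost of one clip, assuming cold-start seconds are billed like any other second and are shared evenly across the clips a warm worker renders before it scales down. `cost_per_clip` is a hypothetical helper, and the rates in the usage lines are made-up placeholders, not RunPod's prices.

```python
def cost_per_clip(
    hourly_rate_usd: float,
    inference_seconds: float,
    cold_start_seconds: float = 0.0,
    clips_per_cold_start: int = 1,
) -> float:
    """Estimated GPU cost of one clip under per-second billing.

    Assumes cold-start time is billed and is split evenly among the clips a
    worker renders before it is shut down again.
    """
    if clips_per_cold_start < 1:
        raise ValueError("clips_per_cold_start must be at least 1")
    per_second = hourly_rate_usd / 3600.0
    amortized_cold_start = cold_start_seconds / clips_per_cold_start
    return per_second * (inference_seconds + amortized_cold_start)


# Illustrative numbers only: a $3.60/hour GPU rendering 30-second jobs.
print(f"${cost_per_clip(3.60, 30):.3f}")                              # $0.030 (warm worker)
print(f"${cost_per_clip(3.60, 30, cold_start_seconds=60):.3f}")       # $0.090 (cold start per clip)
print(f"${cost_per_clip(3.60, 30, 60, clips_per_cold_start=6):.3f}")  # $0.040 (cold start shared)
```

In this made-up example a single cold start triples the cost of an isolated clip, which is why keeping workers warm, or routing work onto workers that already are, can matter more than the headline hourly rate.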

On the other hand, managed providers like Fal.ai offer text-to-video and image generation APIs that handle scaling for you. This is the fastest way to get an AI video generator off the ground: by offloading inference to a third party, you can focus on user experience and marketing. The trade-off is cost, since managed APIs typically carry a premium over raw compute. As your traffic grows, you can use Stormy AI for influencer vetting and fake follower detection to ensure your expensive GPU cycles aren't being wasted on bot-driven views from low-quality creator audiences.
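An orchestration layer is what lets the two options coexist. The sketch below shows one possible routing policy, assuming you can read your self-hosted pool's worker counts and queue depth: jobs that need custom weights always go to your own GPUs, idle or lightly queued capacity is used first, and overflow bursts to a managed API. `PoolState`, `choose_backend`, and the queue limit are hypothetical, and real policies usually weigh price and latency targets as well.

```python
from dataclasses import dataclass
from typing import Literal

Backend = Literal["self_hosted", "managed_api"]


@dataclass
class PoolState:
    """Snapshot of the self-hosted GPU pool (hypothetical fields)."""
    total_workers: int
    busy_workers: int
    queue_depth: int


def choose_backend(
    needs_custom_weights: bool,
    pool: PoolState,
    max_queue_per_worker: int = 2,
) -> Backend:
    """Pick where one generation request should run."""
    if needs_custom_weights:
        return "self_hosted"  # only our own GPUs have the custom model
    if pool.total_workers == 0:
        return "managed_api"  # nothing running: burst instead of waiting on a cold start
    if pool.busy_workers < pool.total_workers:
        return "self_hosted"  # idle capacity we already pay for is the cheapest option
    if pool.queue_depth < max_queue_per_worker * pool.total_workers:
        return "self_hosted"  # short queue: a brief wait beats paying the API premium
    return "managed_api"      # overflow: pay the premium rather than make users wait


print(choose_backend(False, PoolState(total_workers=4, busy_workers=2, queue_depth=0)))  # self_hosted
print(choose_backend(False, PoolState(total_workers=4, busy_workers=4, queue_depth=8)))  # managed_api
```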

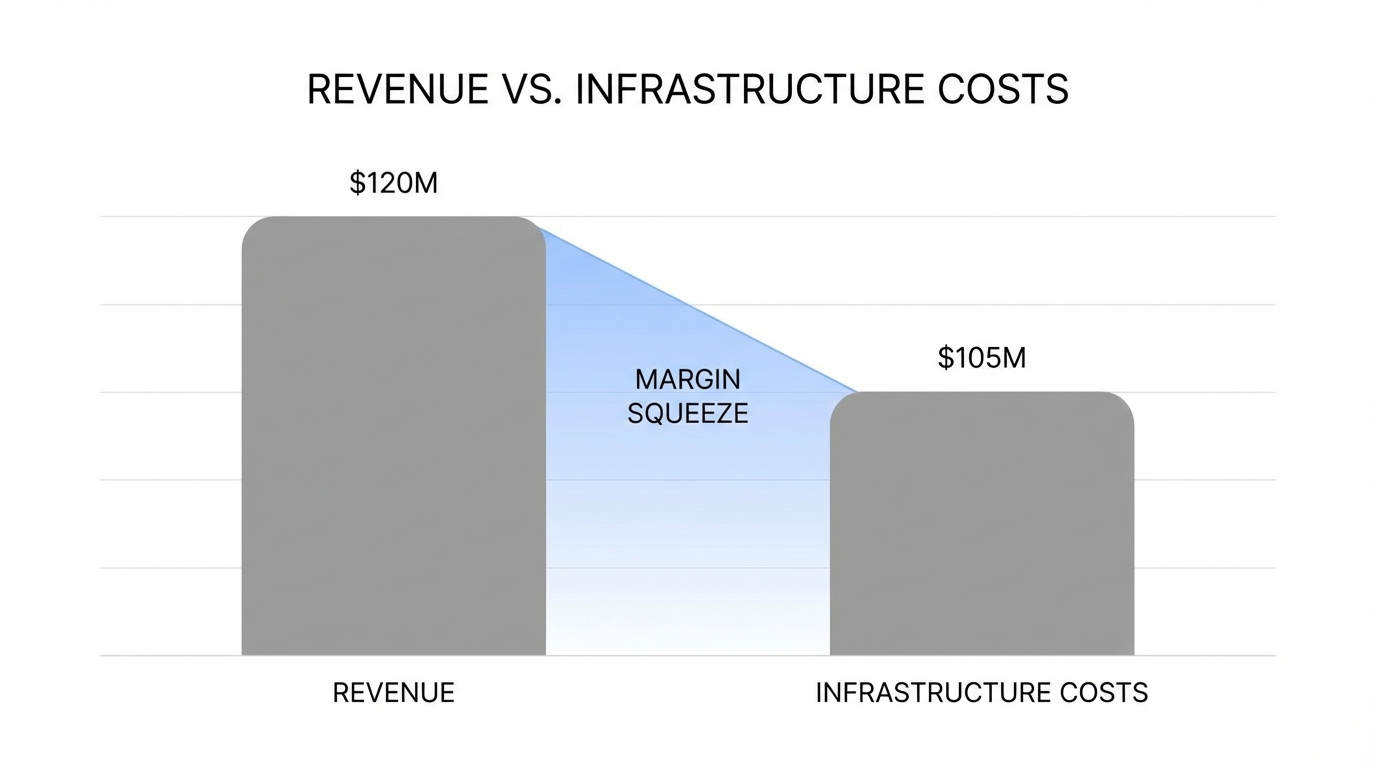

Cost Analysis: The 45% Margin Squeeze

Unlike traditional SaaS, where gross margins often hover around 80-90%, generative AI businesses operate in a high-cost environment. For a platform generating $100,000 in monthly revenue, it is not uncommon to see $45,000 spent on GPUs and APIs alone, which caps gross margin at roughly 55% before any other costs. This 45% margin squeeze is the single biggest hurdle for AI startups.
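The arithmetic behind the squeeze is simple but worth making explicit. This sketch treats the GPU and API bill as the only cost of revenue (a simplification) and compares the platform above with the compute budget an 80% SaaS-style margin would allow. Both helper functions are illustrative names, not part of any library.

```python
def gross_margin(revenue: float, cost_of_revenue: float) -> float:
    """Gross margin as a fraction of revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cost_of_revenue) / revenue


def max_compute_budget(revenue: float, target_margin: float) -> float:
    """Largest compute bill that still leaves target_margin, if compute were the only cost."""
    return revenue * (1.0 - target_margin)


monthly_revenue = 100_000
gpu_and_api_spend = 45_000

print(f"Gross margin: {gross_margin(monthly_revenue, gpu_and_api_spend):.0%}")  # Gross margin: 55%
print(f"Budget for 80%: ${max_compute_budget(monthly_revenue, 0.80):,.0f}")    # Budget for 80%: $20,000
```

At this scale, reaching a classic SaaS margin would mean cutting the compute bill by more than half, which is why the optimizations in this section focus on getting more output from every GPU-second.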

To survive this squeeze, your generative AI infrastructure must be ruthlessly optimized. This includes:

- Dynamic Batching: Grouping multiple user requests together to maximize GPU utilization.

- Model Distillation: Training smaller, faster models to mimic larger ones and routing less complex tasks to them to save on compute.

- Tiered Pricing: Ensuring your subscription tiers accurately reflect the underlying compute costs.
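Dynamic batching is commonly implemented as a short collection window in front of the model: take the first waiting request, then keep adding requests until the batch is full or a small deadline passes. A minimal standard-library sketch of that window follows; `collect_batch` is a hypothetical helper, and the batch size and wait time are tuning knobs rather than recommended values.

```python
import queue
import time
from typing import List, TypeVar

T = TypeVar("T")


def collect_batch(requests: "queue.Queue[T]", max_batch_size: int, max_wait_s: float) -> List[T]:
    """Gather up to max_batch_size requests, waiting at most max_wait_s after the first one."""
    batch: List[T] = [requests.get()]  # block until at least one request arrives
    deadline = time.monotonic() + max_wait_s
    while len(batch) < max_batch_size:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break  # window closed: run what we have
        try:
            batch.append(requests.get(timeout=remaining))
        except queue.Empty:
            break  # no more requests arrived within the window
    return batch


# Usage: five queued prompts served in batches of up to four.
pending: "queue.Queue[str]" = queue.Queue()
for prompt in ["cat", "dog", "fox", "owl", "elk"]:
    pending.put(prompt)
print(collect_batch(pending, max_batch_size=4, max_wait_s=0.05))  # ['cat', 'dog', 'fox', 'owl']
print(collect_batch(pending, max_batch_size=4, max_wait_s=0.05))  # ['elk']
```

A serving loop would call `collect_batch()` repeatedly and pass each batch to the model in a single forward pass. `max_wait_s` caps the extra latency any one request pays for batching, trading a few milliseconds per request for higher GPU utilization.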

Beyond compute, other operational costs add up. Tools like Ahrefs for SEO, Intercom for support, and EmailOctopus for retention are essential but add to the burn. To keep these costs manageable, founders must make user acquisition efficient. Stormy AI's personalized outreach lets you automate hyper-personalized communication with creators and set up AI agents to follow up automatically, cutting the manual time spent on marketing so you can reinvest those savings into your core technology.

The 'Superhuman' Workflow: Integrating AI Assistants

The shift from "coder" to "architect" is facilitated by what many call the superhuman workflow. This involves using AI not just as a tool, but as a teammate. Nico, the founder of Neural Frames, describes ChatGPT as his "therapist" and constant collaborator for technical troubleshooting and strategic planning.

In this workflow, the developer provides the high-level logic and constraints, while AI handles the syntax and implementation. This is particularly effective when working with complex AI SaaS tech stack components like video rendering pipelines or real-time analytics. For example, an AI assistant can write much of the instrumentation needed to set up PostHog for deep user behavior tracking, so you understand exactly which features are driving retention.
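Whether an assistant writes it or you do, PostHog instrumentation boils down to sending named events with properties. PostHog ships official SDKs, which are the usual route; the standard-library sketch below only illustrates the shape of an event posted to its public capture API. The endpoint URL and field layout are assumptions based on PostHog's capture API documentation and should be verified against the current docs for your region, and the `video_rendered` event and its properties are hypothetical.

```python
import json
import urllib.request
from datetime import datetime, timezone
from typing import Any, Dict, Optional

# Assumption: PostHog US Cloud capture endpoint; confirm the URL for your region or self-hosted instance.
POSTHOG_CAPTURE_URL = "https://us.i.posthog.com/i/v0/e/"


def build_capture_payload(
    api_key: str,
    event: str,
    distinct_id: str,
    properties: Optional[Dict[str, Any]] = None,
) -> Dict[str, Any]:
    """Assemble one event in the capture API's JSON shape (field layout assumed from PostHog docs)."""
    return {
        "api_key": api_key,
        "event": event,
        "distinct_id": distinct_id,
        "properties": dict(properties or {}),
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


def send_event(payload: Dict[str, Any], url: str = POSTHOG_CAPTURE_URL, timeout_s: float = 5.0) -> int:
    """POST the event as JSON and return the HTTP status code."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return response.status


# Hypothetical event: which model rendered a video and how long it took.
event = build_capture_payload(
    api_key="phc_YOUR_PROJECT_KEY",
    event="video_rendered",
    distinct_id="user-123",
    properties={"model": "text-to-video-v1", "render_seconds": 42.5},
)
# send_event(event)  # uncomment with a real project key
```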

This efficiency shouldn't stop at development. Once the product is built, the same AI-native mindset should be applied to growth. Instead of manually searching for influencers to promote your new app, you can use Stormy's AI search engine to find creators across TikTok Shop, LinkedIn, and newsletters who specifically match your niche, such as indie musicians or digital artists.

Monitoring and Reliability: The Custom Alert System

When you are running a high-traffic AI platform, server uptime isn't enough; you also need to monitor GPU health and inference latency. A sudden spike in traffic can easily overwhelm your worker nodes, leading to failed requests and churned users. Successful founders often build custom internal tools to bridge the gap between their generative AI infrastructure and their team communications.

A common solution is a custom Telegram bot that hooks into your GPU provider's API. This bot can send instant alerts if a server goes down, if GPU temperatures exceed safe limits, or if the inference queue exceeds a certain threshold. Combined with task tracking tools like Linear and documentation in Notion, this creates a resilient operational loop.
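The alerting logic behind such a bot can be quite small. The sketch below assumes you have already mapped your GPU provider's API response into a `WorkerMetrics` record (a hypothetical structure, since each provider exposes different fields), checks it against placeholder thresholds, and posts alerts through the Telegram Bot API's `sendMessage` method.

```python
import json
import urllib.parse
import urllib.request
from dataclasses import dataclass
from typing import List


@dataclass
class WorkerMetrics:
    """One GPU worker's health snapshot (hypothetical fields; map them from your provider's API)."""
    worker_id: str
    is_up: bool
    gpu_temp_c: float
    queue_depth: int


@dataclass
class Thresholds:
    max_gpu_temp_c: float = 85.0  # placeholder: use the limit documented for your GPU
    max_queue_depth: int = 50     # placeholder: tune to your latency budget


def find_alerts(workers: List[WorkerMetrics], limits: Thresholds) -> List[str]:
    """Return one human-readable alert per threshold violation."""
    alerts: List[str] = []
    for w in workers:
        if not w.is_up:
            alerts.append(f"{w.worker_id}: worker is DOWN")
            continue  # other metrics are meaningless for a dead worker
        if w.gpu_temp_c > limits.max_gpu_temp_c:
            alerts.append(f"{w.worker_id}: GPU at {w.gpu_temp_c:.0f} C (limit {limits.max_gpu_temp_c:.0f} C)")
        if w.queue_depth > limits.max_queue_depth:
            alerts.append(f"{w.worker_id}: queue depth {w.queue_depth} (limit {limits.max_queue_depth})")
    return alerts


def send_telegram(bot_token: str, chat_id: str, text: str, timeout_s: float = 10.0) -> bool:
    """Send a message via the Telegram Bot API sendMessage method; returns the API's "ok" flag."""
    url = f"https://api.telegram.org/bot{bot_token}/sendMessage"
    data = urllib.parse.urlencode({"chat_id": chat_id, "text": text}).encode("utf-8")
    with urllib.request.urlopen(url, data=data, timeout=timeout_s) as response:  # data makes this a POST
        return bool(json.load(response).get("ok", False))


snapshot = [
    WorkerMetrics("gpu-1", is_up=True, gpu_temp_c=71, queue_depth=4),
    WorkerMetrics("gpu-2", is_up=False, gpu_temp_c=0, queue_depth=0),
    WorkerMetrics("gpu-3", is_up=True, gpu_temp_c=91, queue_depth=120),
]
for line in find_alerts(snapshot, Thresholds()):
    print(line)
# gpu-2: worker is DOWN
# gpu-3: GPU at 91 C (limit 85 C)
# gpu-3: queue depth 120 (limit 50)
# To deliver: send_telegram("<bot-token>", "<chat-id>", "\n".join(find_alerts(snapshot, Thresholds())))
```

In practice you would poll the provider on a schedule, join the alerts into one message, and suppress repeats of the same alert for a few minutes so an ongoing incident does not flood the channel.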

To keep your app compliant as it scales into the enterprise space, platforms like Vanta can automate compliance work for frameworks like SOC 2 and HIPAA. This is crucial because enterprise customers will demand proof of security before integrating your AI tools into their workflows. Once your infrastructure is stable, you can use Stormy's post tracking and analytics to monitor views, likes, and engagement on individual videos in real time, providing feedback on the health of your viral growth loops.

Conclusion: Solving Problems Over Tech

The ultimate lesson from founders who have successfully built an AI SaaS tech stack is that technology is a means to an end, not the end itself. Users don't pay for the complexity of your GPU orchestration or the elegance of your Python backend; they pay for a problem solved. Whether it's helping a musician create a viral video or helping a brand automate their UGC, the focus must remain on the user experience.

By leveraging modern AI development tools, optimizing your GPU hosting, and using an all-in-one platform like Stormy AI for creator CRM and payment management, you can navigate the technical and financial challenges of the generative AI landscape. The opportunity to build niche, impactful applications has never been greater. Start by identifying a specific pain point, choosing a stack that allows for rapid iteration, and staying ruthlessly focused on solving that problem for your community.