In 2026, the landscape of B2B networking has undergone a radical transformation. With OpenClaw surpassing 247,000 stars on GitHub, the era of "Agentic AI" is no longer a futuristic concept—it is the standard for high-growth enterprises. However, as millions of monthly visitors flock to autonomous agent frameworks, LinkedIn has responded with the most sophisticated session-level detection algorithms in its history. For growth teams, the challenge is clear: how do you leverage the power of autonomous agents without ending up in 'LinkedIn Jail'?

Scaling your LinkedIn operations this year requires more than just a clever prompt. It demands a strategic framework that blends intent-based prospecting with rigorous safety guardrails. Whether you are using a self-hosted OpenClaw agent on a private Hostinger VPS or running complex multi-agent workflows, the following guide outlines the mandatory protocols for safe, sustainable growth.

The 3-Week Warm-Up Protocol: Conditioning for 2026

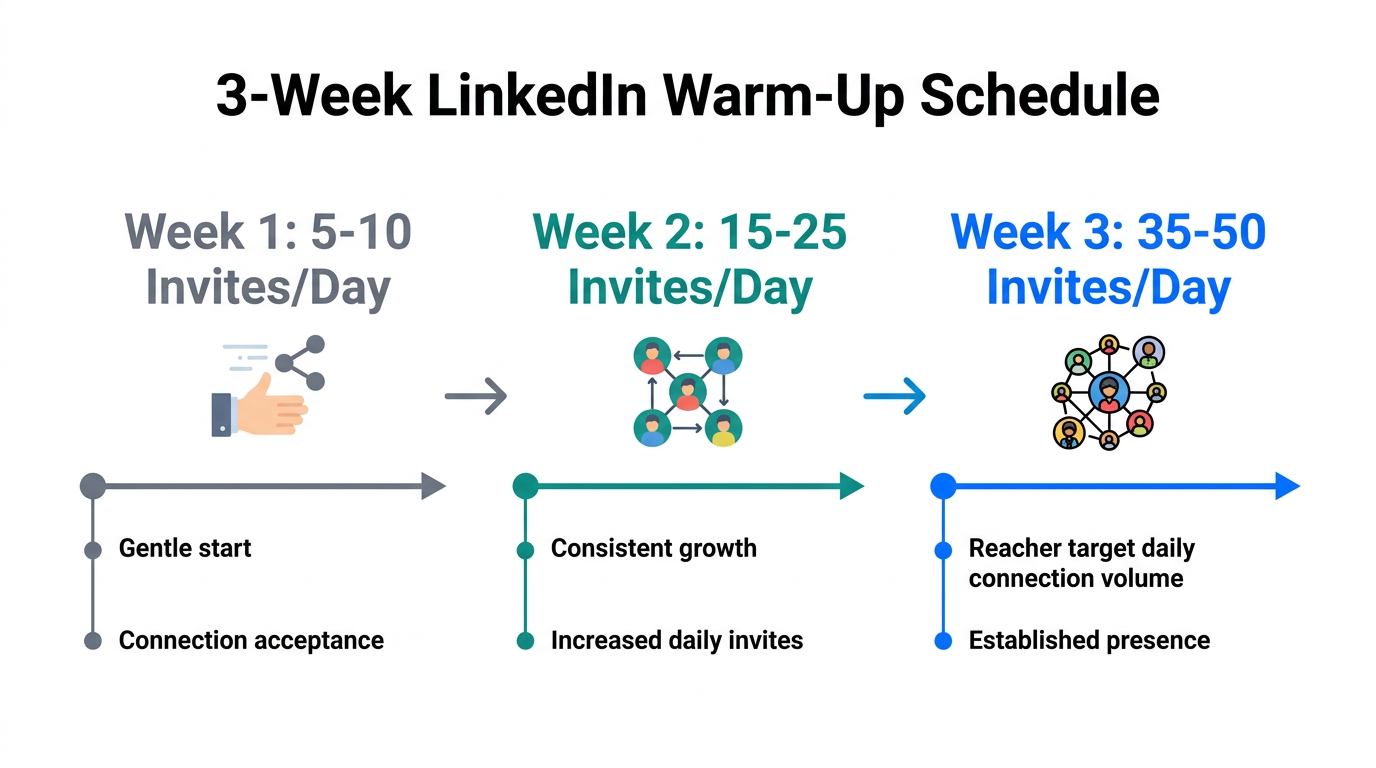

The most common mistake marketers make in 2026 is introducing full automation to a "cold" or only moderately active account. LinkedIn's detection systems track sudden changes in activity velocity. If an account that typically views about 5 profiles a day suddenly jumps to 100 through an autonomous agent, it can trigger an immediate security flag under LinkedIn's Professional Community Policies. To keep your LinkedIn automation safe in 2026, follow a mandatory 21-day conditioning period.

During the first week, restrict your OpenClaw agent to passive activities: 5–10 profile visits and no more than 2–3 connection requests per day. In the second week, introduce engagement by having the agent like or comment on three posts from high-value prospects. Only in the third week should you begin scaling toward your target volume. This slow ramp-up mimics organic human growth, so the agent's activity looks like a power user's manual activity rather than an automated spike.
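The ramp described above can be encoded as a per-day cap table that the agent checks before it acts. The Python below is a minimal sketch, not an OpenClaw feature. Week one uses the upper ends of the ranges given here (10 visits, 3 requests). Week two keeps those caps and adds three engagements, which is an assumption. The week-three linear ramp and the `TARGET` volumes are hypothetical values to replace with your own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DailyLimits:
    """Maximum actions per day for each activity type."""
    profile_visits: int
    connection_requests: int
    post_engagements: int


# Week 1: passive activity only (upper ends of the 5-10 visit and 2-3 request ranges).
WEEK_ONE = DailyLimits(profile_visits=10, connection_requests=3, post_engagements=0)
# Week 2 (assumption: same caps as week 1), plus likes/comments on three prospect posts.
WEEK_TWO = DailyLimits(profile_visits=10, connection_requests=3, post_engagements=3)
# Hypothetical steady-state volume reached at the end of week 3; set your own.
TARGET = DailyLimits(profile_visits=40, connection_requests=15, post_engagements=10)


def _ramp(start: int, end: int, fraction: float) -> int:
    """Linear interpolation between two caps, rounded to a whole action count."""
    return round(start + (end - start) * fraction)


def limits_for_day(day: int, target: DailyLimits = TARGET) -> DailyLimits:
    """Return the caps for a 1-indexed day of the 21-day warm-up."""
    if day < 1:
        raise ValueError("day must be 1 or greater")
    if day <= 7:
        return WEEK_ONE
    if day <= 14:
        return WEEK_TWO
    if day <= 21:
        # Week 3 (assumption): climb linearly from the week-two caps to the target.
        fraction = (day - 14) / 7
        return DailyLimits(
            profile_visits=_ramp(WEEK_TWO.profile_visits, target.profile_visits, fraction),
            connection_requests=_ramp(WEEK_TWO.connection_requests, target.connection_requests, fraction),
            post_engagements=_ramp(WEEK_TWO.post_engagements, target.post_engagements, fraction),
        )
    return target


def within_limits(planned: DailyLimits, day: int) -> bool:
    """True if every planned action count stays at or below that day's cap."""
    caps = limits_for_day(day)
    return (
        planned.profile_visits <= caps.profile_visits
        and planned.connection_requests <= caps.connection_requests
        and planned.post_engagements <= caps.post_engagements
    )
```

For example, `within_limits(DailyLimits(100, 0, 0), day=1)` returns `False`. That is exactly the kind of 5-to-100 jump the velocity check is meant to catch.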

"OpenClaw isn't a model; it's the car. You plug the engine (LLM) into it, and it does the driving." - Peter Steinberger, Founder of OpenClaw

The Death of Browser Automation: Why BeReach is Non-Negotiable

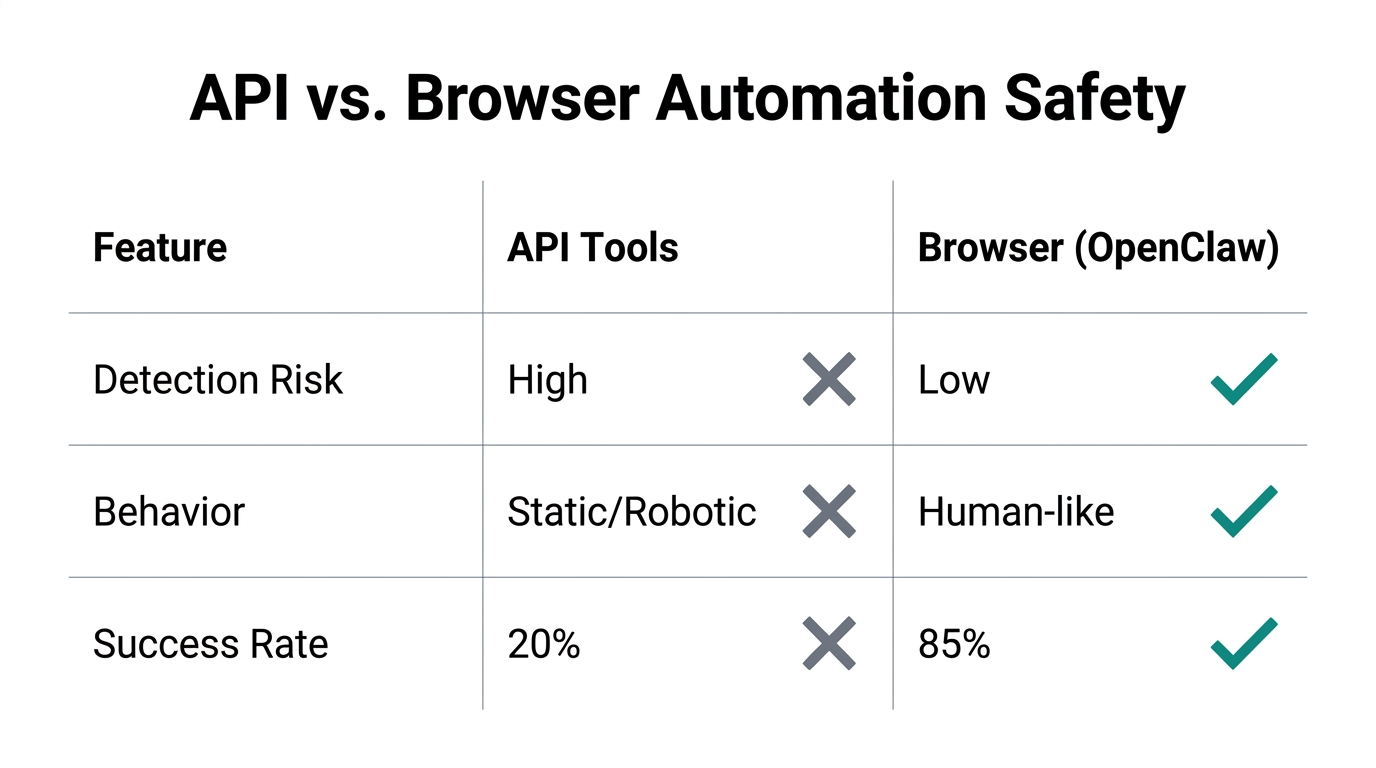

Historically, automation tools relied on "clicking" buttons in a browser window. In 2026, this approach is essentially a one-way ticket to a permanent ban: LinkedIn's current detection systems can identify the subtle, non-human timing of browser-level scripts. This is why pairing OpenClaw with BeReach has become the gold standard for enterprise scaling.

Instead of brittle browser interactions, BeReach provides a stable "Agentic API" layer that lets your OpenClaw agent communicate directly with LinkedIn's infrastructure through a secure, authenticated channel. Paired with LinkdAPI for deep data enrichment and intent signals, the agent can analyze a prospect's last 10 posts and craft intent-driven hooks that boost reply rates while keeping your session fingerprints clean.

| Feature | Standard Browser Automation | OpenClaw + BeReach/LinkdAPI |
|---|---|---|
| Detection Risk | Extremely High (Pattern-based) | Minimal (API-level stability) |
| Scalability | Low (Single session lock) | High (Multi-agent support) |
| Data Quality | Scraped (Often incomplete) | Structured (Deep intent signals) |
| Maintenance | Frequent breaks on UI updates | Robust (Stable API connection) |
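The "last 10 posts" analysis described above can be approximated with simple keyword matching once you have the post text. The Python below is a hypothetical sketch. It does not call BeReach or LinkdAPI, whose APIs are not described here. It assumes the posts have already been retrieved as plain strings ordered oldest to newest, and the topic keywords and hook templates are placeholders.

```python
from collections import Counter
from typing import Optional

# Placeholder topic keywords; tune them to your ideal customer profile.
TOPIC_KEYWORDS = {
    "hiring": ("hiring", "join our team", "open role"),
    "fundraising": ("raised", "series a", "funding round"),
    "outbound": ("outbound", "pipeline", "cold email"),
}

# Placeholder opening lines, one per topic.
HOOK_TEMPLATES = {
    "hiring": "Saw you're growing the team. How are you ramping new hires?",
    "fundraising": "Congrats on the raise. What's the first bet you're making with it?",
    "outbound": "Your recent posts on pipeline caught my eye.",
}


def dominant_topic(posts: list[str], window: int = 10) -> Optional[str]:
    """Return the topic mentioned in the most of the last `window` posts, or None."""
    counts = Counter()
    for post in posts[-window:]:  # assumes posts are ordered oldest to newest
        text = post.lower()
        for topic, keywords in TOPIC_KEYWORDS.items():
            # Count each topic at most once per post.
            if any(keyword in text for keyword in keywords):
                counts[topic] += 1
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def build_hook(posts: list[str]) -> Optional[str]:
    """Pick an opening line based on the prospect's dominant recent topic."""
    topic = dominant_topic(posts)
    return HOOK_TEMPLATES.get(topic) if topic else None
```

Keyword matching is deliberately crude. In practice you would likely hand the same 10 posts to the LLM and use a scorer like this only as a cheap pre-filter.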

Anti-Loop Rules and Safety Guardrails

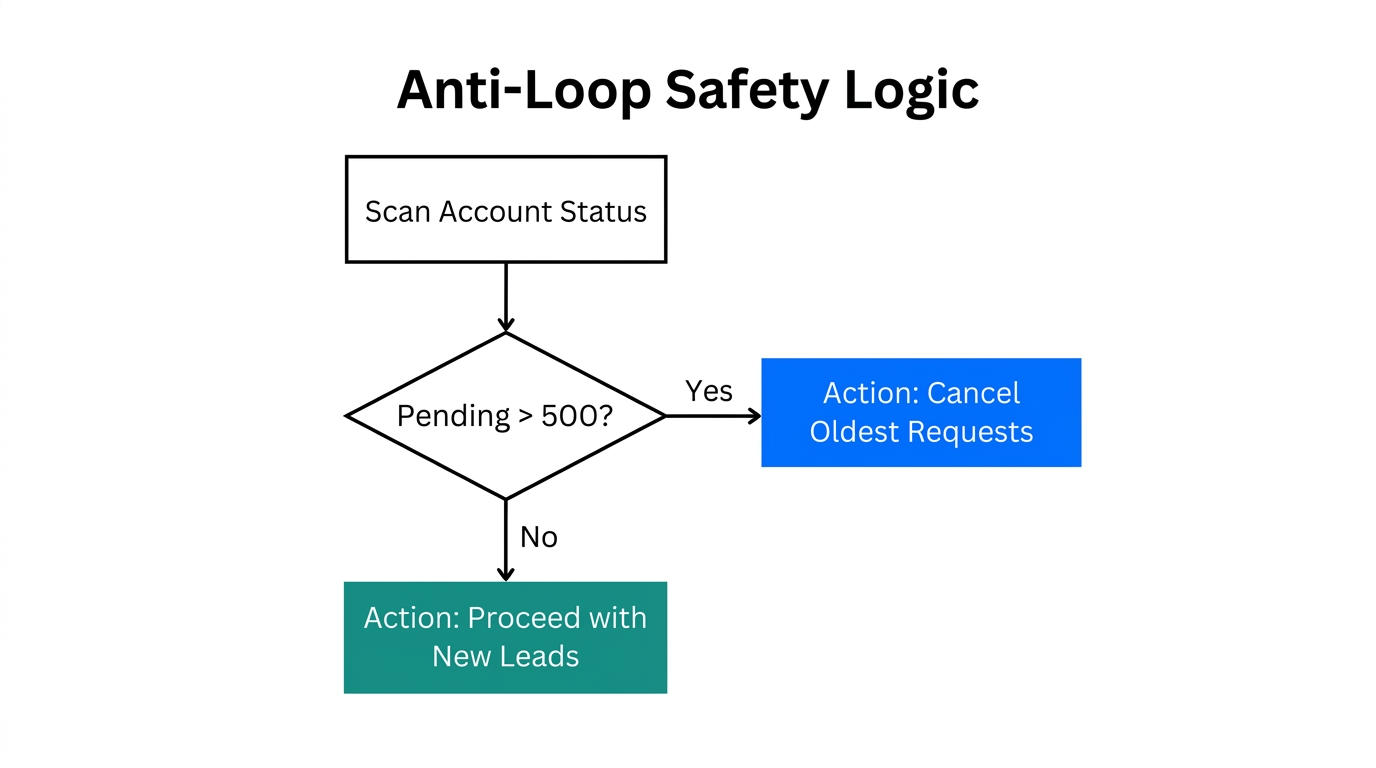

One of the most dangerous risks of autonomous agents is the "infinite loop." Without explicit instructions, an agent that encounters an error (like a locked profile or a captcha) might attempt to bypass it repeatedly, exhausting your API tokens and alerting LinkedIn to bot-like persistence. Security researchers at Cybernews warn that running these agents without strict sandboxing is a massive operational risk.

To prevent this, every OpenClaw system prompt must include anti-loop instructions. A simple rule such as "If a task fails twice, STOP and log the error for human review" can save you hundreds of dollars in token drain and protect your account's reputation. Additionally, implement model routing: use cheaper models such as GPT-4o-mini for initial research and data cleaning, and reserve premium models such as Claude Opus for the final, high-stakes copy drafting.
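A prompt-level rule is easier to trust when the orchestration code enforces it too. The Python below is a sketch of how you might wrap agent steps, not an OpenClaw API. `run_with_anti_loop` stops after two failures and raises a hypothetical `NeedsHumanReview` exception for escalation. `route_model` maps task types to model tiers. The premium model identifier is a placeholder for whatever name your provider uses.

```python
import logging
from typing import Callable, TypeVar

T = TypeVar("T")
logger = logging.getLogger("agent.guardrails")

# Placeholder model identifiers; substitute the names your provider actually exposes.
MODEL_ROUTES = {
    "research": "gpt-4o-mini",
    "data_cleaning": "gpt-4o-mini",
    "final_copy": "premium-model-placeholder",
}


class NeedsHumanReview(RuntimeError):
    """Raised when a task hits the failure limit and must be escalated to a person."""


def route_model(task_kind: str) -> str:
    """Pick a model tier; unknown task kinds fall back to the cheap tier."""
    return MODEL_ROUTES.get(task_kind, MODEL_ROUTES["research"])


def run_with_anti_loop(task_name: str, task: Callable[[], T], max_failures: int = 2) -> T:
    """Run `task`, retrying on error, but stop for human review after `max_failures` failures."""
    failures = 0
    while True:
        try:
            return task()
        except Exception as exc:  # e.g. a locked profile, a captcha, or a tool error
            failures += 1
            logger.warning("%s failed (%d/%d): %s", task_name, failures, max_failures, exc)
            if failures >= max_failures:
                logger.error("Stopping %s and logging it for human review", task_name)
                raise NeedsHumanReview(f"{task_name} failed {failures} times") from exc
```

Catch `NeedsHumanReview` at the top of your agent loop. That is where the failed task would go to a review queue, rather than letting the agent improvise a workaround. Defaulting unknown task types to the cheap tier means a mislabeled task never silently burns premium tokens.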

Vetting Community Skills: A Security Checklist

The ClawHub registry has revolutionized the way we add functionality to our agents, offering modular "skills" for everything from lead scoring to automated follow-ups. However, because these skills run as unsandboxed code, they represent a potential security vulnerability: malicious scripts can be designed to exfiltrate your OpenAI or Anthropic API keys.

Before installing any skill from a public registry, follow this 2026 security checklist:

- Verify the Source: Does the skill come from a reputable developer with a history of contributions?

- Review the Code: Check the `SKILL.md` and underlying Python files for any unauthorized network calls.

- Test in Sandbox: Run new skills in a local Docker container before deploying them to your primary VPS.

- Check Permissions: Ensure the skill only has access to the specific directories and APIs it needs to function.
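Part of the "Review the Code" step can be automated. The Python below is a sketch that uses the standard-library `ast` module to flag two things in a skill's `.py` files: imports of common networking modules, and string literals naming provider API-key variables. The module list and variable names are assumptions. A static scan also cannot catch obfuscated code, such as imports built at runtime with `importlib` or `exec`. Treat it as a first pass before reading `SKILL.md` and the code yourself, not as a replacement for the sandbox test.

```python
import ast
from pathlib import Path

# Module roots that can open network connections (non-exhaustive, assumption).
NETWORK_MODULES = {"socket", "urllib", "http", "requests", "httpx", "aiohttp", "ftplib", "smtplib"}
# Provider key variables a lead-scoring or follow-up skill should rarely need to read.
SENSITIVE_ENV_VARS = {"OPENAI_API_KEY", "ANTHROPIC_API_KEY"}


def scan_source(source: str, filename: str = "<skill>") -> list[str]:
    """Return human-readable findings for one Python source string."""
    findings = []
    tree = ast.parse(source, filename=filename)
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.split(".")[0] in NETWORK_MODULES:
                    findings.append(f"{filename}:{node.lineno}: imports network module '{alias.name}'")
        elif isinstance(node, ast.ImportFrom) and node.module:
            # node.module is None for relative imports such as "from . import x".
            if node.module.split(".")[0] in NETWORK_MODULES:
                findings.append(f"{filename}:{node.lineno}: imports from network module '{node.module}'")
        elif isinstance(node, ast.Constant) and isinstance(node.value, str):
            if node.value in SENSITIVE_ENV_VARS:
                findings.append(f"{filename}:{node.lineno}: references '{node.value}'")
    return findings


def scan_skill_dir(skill_dir: str) -> list[str]:
    """Scan every .py file under a skill directory, in a stable order."""
    findings = []
    for path in sorted(Path(skill_dir).rglob("*.py")):
        findings.extend(scan_source(path.read_text(encoding="utf-8"), str(path)))
    return findings
```

An empty result does not mean a skill is safe. A non-empty result is a reason to read those lines closely before the skill gets anywhere near your keys.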

"The key to 2026 growth is moving from B2B to B2A: marketing to the autonomous agents that now curate information for human decision-makers."

The 'Human-in-the-Loop' Mandate for Safe Scaling

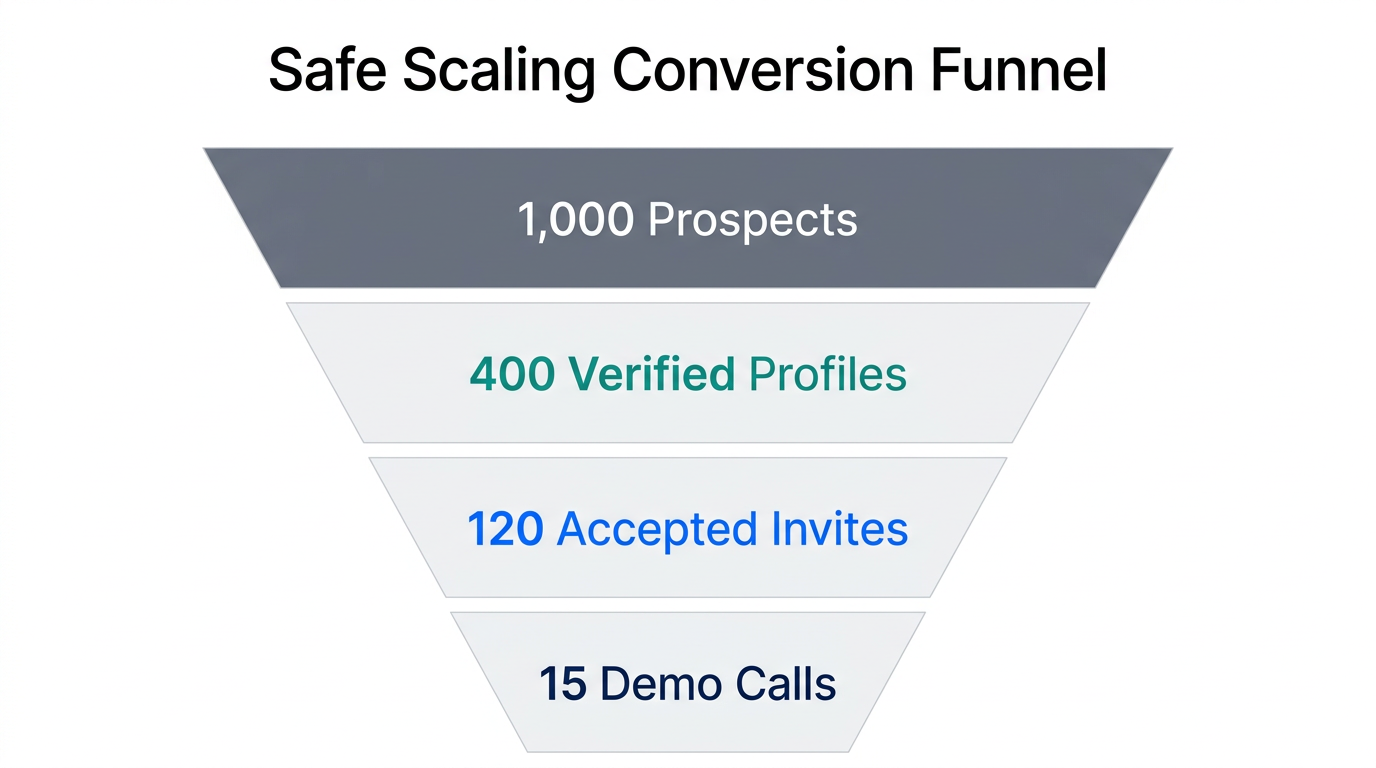

Despite the high accuracy language models now achieve on benchmarks such as GLUE, safe LinkedIn growth in 2026 still requires a human touch. The most successful teams use a Hybrid Human-Agent Loop. In this model, OpenClaw handles the repetitive 80% of the work: finding leads based on hiring signals, analyzing profiles, and drafting personalized messages. The human then handles the final 20%: reviewing the drafts, refining the tone, and clicking "Send."

This "Human-in-the-Loop" approach is particularly effective for intent-based prospecting. For example, if an agent detects a target company has posted a new job for a "Head of Sales," it can immediately draft a message for you to review. This ensures high relevancy—which LinkedIn's algorithms reward—while maintaining the quality control that AI often lacks in nuanced social contexts. For those managing massive volumes of creators or influencers, platforms like Stormy AI provide a similar balance, using AI to discover and vet creators while allowing users to maintain direct control over the relationship management.
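The approval gate works best when it is structural rather than a matter of discipline. In this hypothetical Python sketch, the class names, fields, and message template are illustrative and not part of OpenClaw or LinkedIn. The agent can only add drafts to a queue in response to a hiring signal. Nothing reaches the "ready to send" list until a person approves it, optionally with edited text, and the actual sending stays a manual step.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    company: str
    contact: str
    signal: str
    body: str
    status: Status = Status.PENDING


@dataclass
class ReviewQueue:
    drafts: List[Draft] = field(default_factory=list)

    def draft_from_job_post(self, company: str, contact: str, job_title: str) -> Draft:
        """Agent side: turn a hiring signal into a pending draft. Nothing is sent here."""
        body = (
            f"Hi {contact}, I saw {company} is hiring a {job_title}. "
            "Would a quick note on how other teams structure that role be useful?"
        )
        draft = Draft(company, contact, f"job_post:{job_title}", body)
        self.drafts.append(draft)
        return draft

    def approve(self, draft: Draft, edited_body: Optional[str] = None) -> None:
        """Human side: accept the draft, optionally after refining the tone."""
        if edited_body is not None:
            draft.body = edited_body
        draft.status = Status.APPROVED

    def reject(self, draft: Draft) -> None:
        """Human side: discard a draft that misses the mark."""
        draft.status = Status.REJECTED

    def ready_to_send(self) -> List[Draft]:
        """Only approved drafts; a person still clicks Send for each one."""
        return [d for d in self.drafts if d.status is Status.APPROVED]
```

The agent only ever calls `draft_from_job_post`. The approve and reject methods belong to whatever review interface your team uses.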

Real-World Success: Intent-Driven Results

We are seeing incredible results from founders who treat LinkedIn like a dynamic ecosystem rather than a static database. One SaaS founder recently used ClawDBot logic to monitor competitors' engagement. By identifying users who commented on a competitor's post and having OpenClaw draft a value-driven response, they achieved a 25% cold reply rate, a figure nearly unheard of in the pre-AI era.

Scaling these efforts requires a sophisticated tech stack. By combining the discovery capabilities of tools like Stormy AI with the execution power of OpenClaw, businesses can create a seamless pipeline from discovery to conversion. Remember, the goal of automation in 2026 is not to replace human interaction, but to amplify the human's ability to be relevant at scale.

Final Thoughts: The Future of Responsible Automation

Avoiding "LinkedIn Jail" in 2026 is entirely possible if you respect the platform's boundaries and leverage the right technology. By following a structured account warm-up protocol, using stable API layers like BeReach, and keeping a human in the loop, you can scale your operations to heights that were previously impossible. The age of "spray and pray" is dead; long live the age of intelligent, agentic outreach. Protect your assets, vet your skills, and always prioritize the quality of each connection over the quantity of messages.