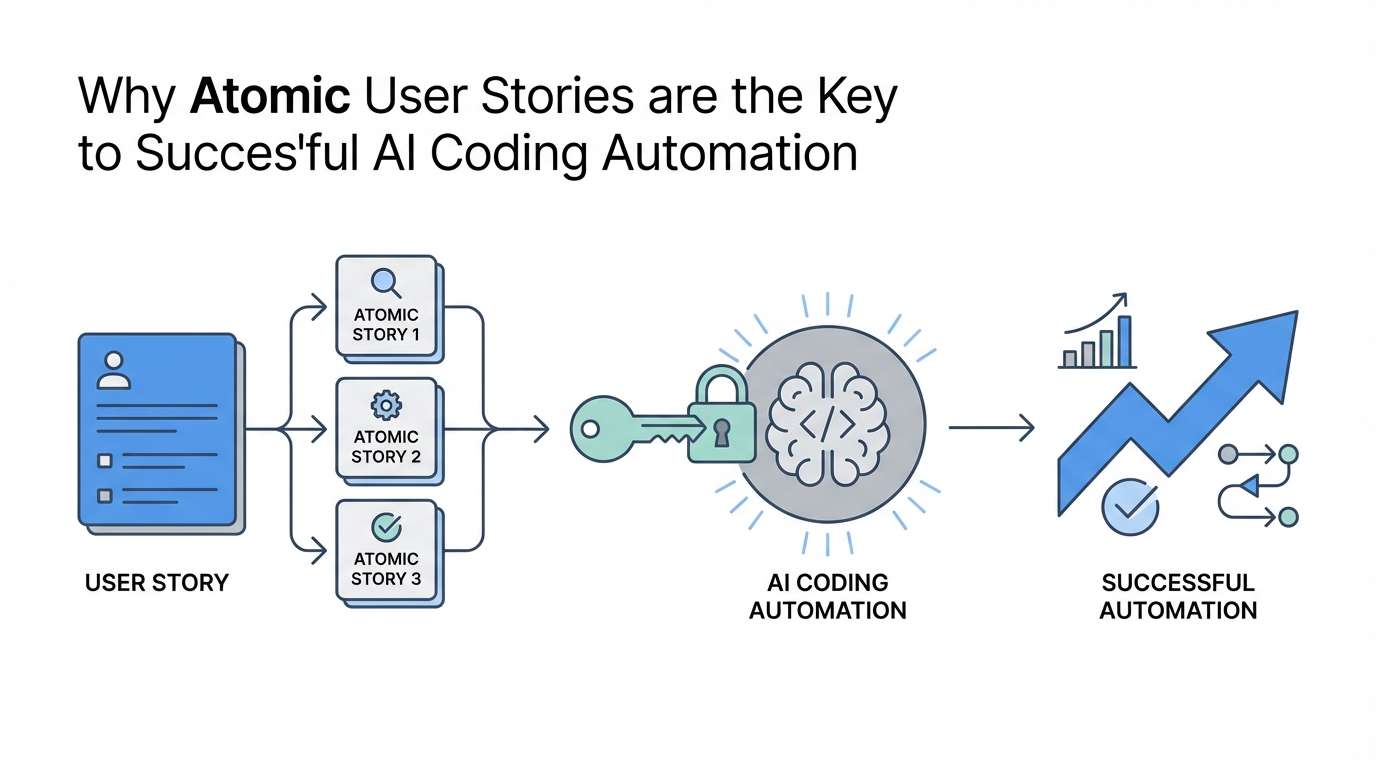

Imagine waking up to find a complex new feature fully implemented, tested, and committed to your codebase while you slept. This isn't a futuristic dream; it is what a well-structured AI agent workflow can deliver today. Most developers never get there because they treat the AI like a senior engineer who can intuit context from a 50-page document. To apply Claude Code best practices effectively, shift your strategy toward atomic user stories: break each feature into small, verifiable tasks. Doing so prevents the context drift and hallucinations that plague traditional prompt engineering.

The Context Window Trap: Why Large PRDs Fail

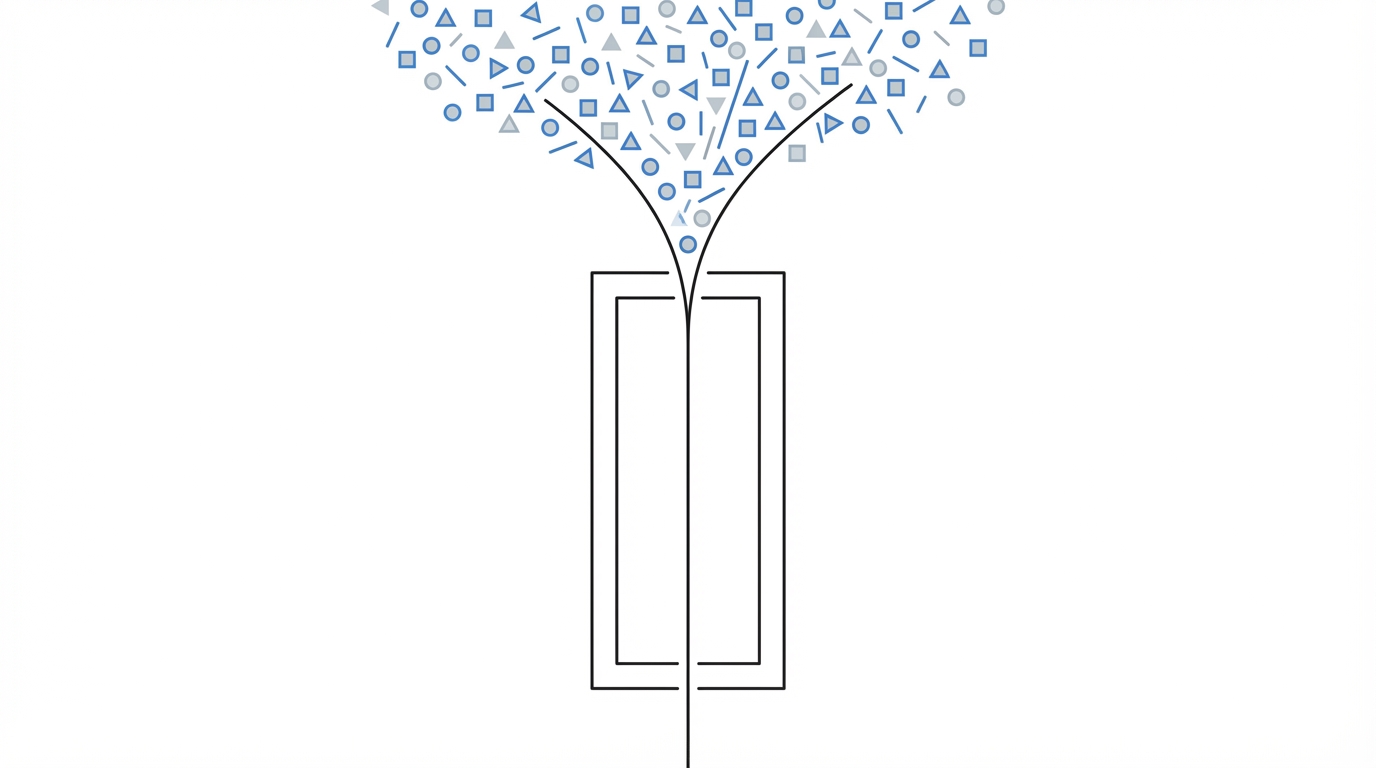

The primary reason AI agents fail at complex tasks is the context window. Even with models like Claude 3.5 Sonnet offering a 200,000-token context window, the density of information required for a multi-file feature often exceeds the agent's ability to keep its reasoning consistent. When an agent is forced to process too much at once, it begins to 'forget' edge cases or misinterpret how different modules interact. This is why the 'One-Iteration' rule matters: every task given to an AI agent must be small enough to be fully completed, tested, and verified within a single execution cycle.

Traditional Product Requirement Documents (PRDs) are written for humans, who can hold a high-level vision in their heads while working on details. AI agents, by contrast, do best with precise, narrowly scoped instructions. Using a tool like Ampt or a custom bash script to manage these cycles ensures that the agent never bites off more than it can chew. If a feature is too large for one prompt, subdivide it into atomic user stories that each fit within what the model can reliably handle in a single pass.

The Ralph Workflow: Automating the PRD to JSON Conversion

One of the most effective frameworks for this type of automation is known as Ralph. Named after Ralph Wiggum of The Simpsons, Ralph is a deliberately simple loop built on one principle: give an agent a list of tiny tasks, and it will implement, test, and commit them one by one. To start, don't write code by hand; begin by automating the PRD to JSON conversion. By running a markdown-based PRD through a Ralph PRD Converter, you turn vague human intent into machine-readable JSON instructions.

Step 1: Generate the PRD with Voice

Start by describing your feature using a tool like OpenAI Whisper or a standard voice-to-text input. Instead of typing for hours, speak your requirements for three to five minutes. Then use a PRD Generator skill that asks clarifying questions about database schemas, user permissions, and edge cases. This makes the initial document robust before any prompts reach the coding agent.
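
Before converting the draft, it can help to check mechanically that the interview actually covered the topics the PRD Generator is supposed to ask about. The sketch below is a plain keyword check, not a feature of any particular PRD tool; the `REQUIRED_TOPICS` patterns and the `missing_topics` helper are hypothetical and would need tuning to your own vocabulary.

```python
import re

# Hypothetical coverage rules: one regex per topic the clarifying questions
# should have covered (database schemas, user permissions, edge cases).
REQUIRED_TOPICS = {
    "database schema": r"\b(schema|table|column|migration)s?\b",
    "user permissions": r"\b(permission|role|admin|access)s?\b",
    "edge cases": r"\bedge cases?\b",
}


def missing_topics(prd_markdown: str) -> list[str]:
    """Return the required topics that the PRD text never mentions."""
    text = prd_markdown.lower()
    return [
        topic
        for topic, pattern in REQUIRED_TOPICS.items()
        if not re.search(pattern, text)
    ]


if __name__ == "__main__":
    draft = "# Creator search\nUsers type a query; results come from the creators table."
    print(missing_topics(draft))  # ['user permissions', 'edge cases']
```

A non-empty result is a cue to go back and answer those questions before moving on to the JSON conversion.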

Step 2: Convert to Structured JSON

Once you have a markdown PRD, convert it into a PRD.json file. This file acts as the 'brain' of the autonomous agent. Each object in the JSON should represent a single user story with a title, a small scope, and, most importantly, verifiable acceptance criteria. This structure lets the agent track its own progress, marking each story "passes: true" or "passes: false" as it works through the build.
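
A minimal sketch of that conversion, assuming the markdown PRD lists each story under a `### US-001: Title` heading followed by one bullet per acceptance criterion. The heading format and the JSON field names (`userStories`, `acceptanceCriteria`, `passes`) are illustrative assumptions, not the format of any specific Ralph PRD Converter.

```python
import json
import re

# Assumed markdown layout: "### US-001: Title" then "- criterion" bullets.
STORY_HEADING = re.compile(r"^###\s+(US-\d+):\s*(.+)$")
CRITERION = re.compile(r"^[-*]\s+(.+)$")


def prd_markdown_to_json(markdown: str) -> dict:
    """Turn story headings and their bullets into a PRD.json-style dict."""
    stories = []
    current = None
    for raw in markdown.splitlines():
        line = raw.strip()
        heading = STORY_HEADING.match(line)
        if heading:
            current = {
                "id": heading.group(1),
                "title": heading.group(2).strip(),
                "acceptanceCriteria": [],
                "passes": False,  # every story starts unverified
            }
            stories.append(current)
            continue
        bullet = CRITERION.match(line)
        if bullet and current is not None:
            current["acceptanceCriteria"].append(bullet.group(1).strip())
    return {"userStories": stories}


if __name__ == "__main__":
    prd = """### US-001: Add search input
- Input renders in the page header
- Typecheck passes

### US-002: Show results dropdown
- Dropdown lists at least 5 results for a matching query
"""
    print(json.dumps(prd_markdown_to_json(prd), indent=2))
```

Writing the result to PRD.json with `json.dump` gives the loop a single file it can both read and update.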

Verifiable Acceptance Criteria: The Only Way to Automate QA

In a standard engineering workflow, a human QA tester verifies that a feature works. In an automated AI agent workflow, the agent must be its own tester, and that requires verifiable acceptance criteria. Instead of saying "the search bar should work," define criteria like "the search results dropdown displays at least 5 results when the query matches the database." Criteria written this way can be turned into automated tests that the agent runs against its own code.
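
Here is how the search criterion above might look once it becomes a test. The `search` function and the `CREATORS` list are hypothetical stand-ins for a real data layer; the point is that the criterion maps to an assertion the agent can run and either pass or fail, with no judgment call involved.

```python
# Hypothetical in-memory stand-in for the real creators table.
CREATORS = [
    "alice fitness",
    "alina cooks",
    "ali travels",
    "alison art",
    "alicia tech",
    "bob games",
]


def search(query: str, limit: int = 10) -> list[str]:
    """Toy search: case-insensitive substring match, capped at `limit` results."""
    needle = query.lower()
    return [name for name in CREATORS if needle in name][:limit]


def test_dropdown_shows_at_least_five_results_for_matching_query() -> None:
    # Acceptance criterion: at least 5 results when the query matches the database.
    results = search("ali")
    assert len(results) >= 5, f"expected at least 5 results, got {len(results)}"


def test_no_results_for_unmatched_query() -> None:
    # Companion criterion: a query with no matches yields an empty list, not an error.
    assert search("zzz") == []


if __name__ == "__main__":
    test_dropdown_shows_at_least_five_results_for_matching_query()
    test_no_results_for_unmatched_query()
    print("acceptance criteria pass")
```

Under pytest the two `test_` functions are discovered automatically, so the agent only needs the exit code to decide whether the story passes.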

If the agent doesn't have a feedback mechanism, it cannot self-correct. For frontend tasks, this often means giving the agent access to a browser. A dev browser skill lets the agent actually render the UI and check whether a button exists or a CSS class is applied correctly. Without this, the agent is flying blind, and the risk of shipping broken code rises sharply. When building data-heavy interfaces, such as those used to discover creators on Stormy AI, these verifiable steps ensure that complex filters and API calls return the expected data types every single time.
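
For API-backed filters, part of the check can be a type contract on the response. This sketch assumes a hypothetical creator-search endpoint whose records carry `handle`, `followers`, and `engagement_rate`; it does not describe Stormy AI's actual API.

```python
from typing import Any

# Hypothetical response contract for a creator-search endpoint.
EXPECTED_FIELDS: dict[str, type] = {
    "handle": str,
    "followers": int,
    "engagement_rate": float,
}


def _matches(value: Any, expected: type) -> bool:
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(value, bool):
        return expected is bool
    # JSON numbers without a decimal point parse to int; accept them as floats.
    if expected is float:
        return isinstance(value, (int, float))
    return isinstance(value, expected)


def type_errors(records: list[dict[str, Any]]) -> list[str]:
    """List every missing or mistyped field across the response records."""
    errors = []
    for i, record in enumerate(records):
        for field, expected in EXPECTED_FIELDS.items():
            if field not in record:
                errors.append(f"record {i}: missing '{field}'")
            elif not _matches(record[field], expected):
                actual = type(record[field]).__name__
                errors.append(f"record {i}: '{field}' should be {expected.__name__}, got {actual}")
    return errors


if __name__ == "__main__":
    print(type_errors([{"handle": "@ali", "followers": 12000, "engagement_rate": 0.041}]))
    print(type_errors([{"handle": "@bob", "followers": "12k"}]))
```

An empty list means the response honors the contract; anything else is a concrete failure message the agent can act on.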

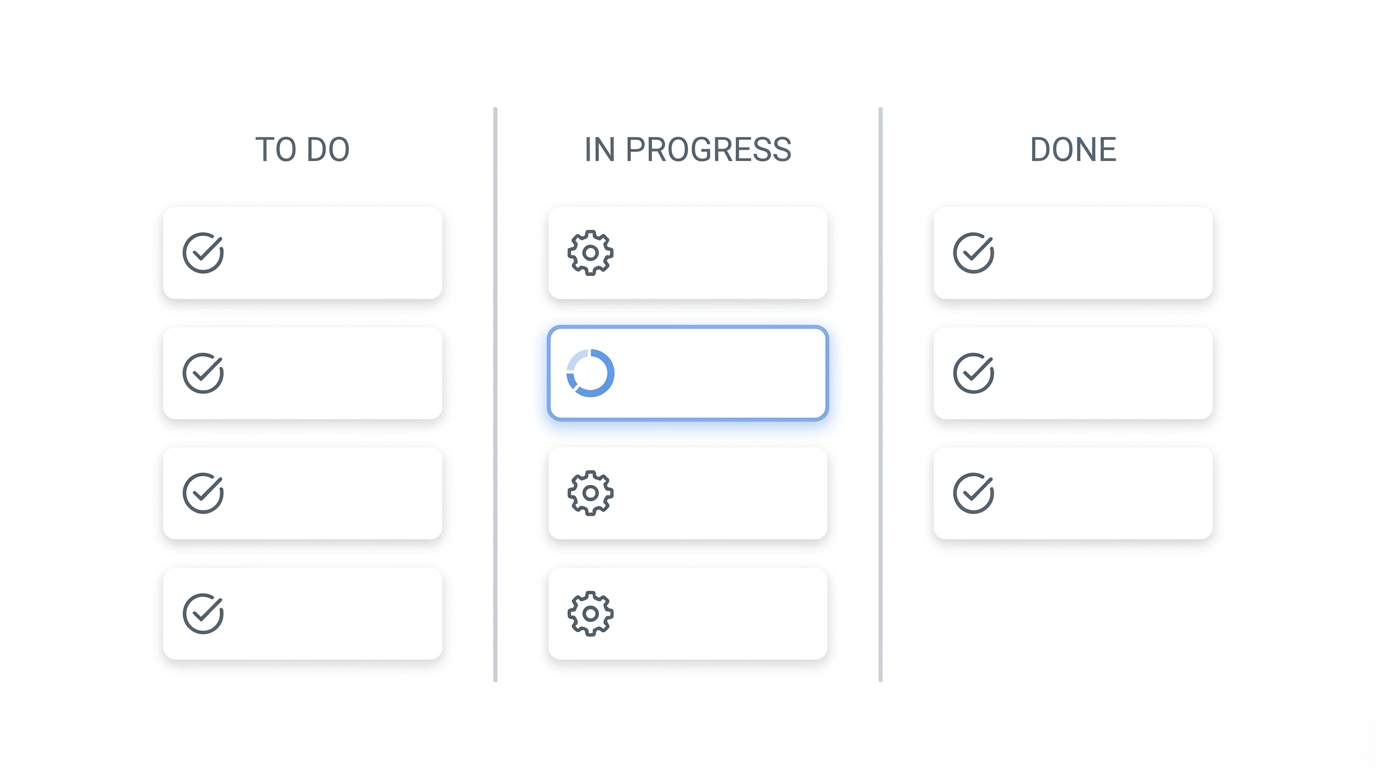

The Psychology of the Kanban Board for AI

Human developers have used Kanban boards and sticky notes for decades because they manage cognitive load. We can apply this same workflow to AI agents. When the Ralph script runs, it treats the JSON file like a digital Kanban board. It picks the first story, moves it to 'in progress,' executes the code, and moves it to 'done.' This modular approach prevents the agent from getting lost in the 'spaghetti' of a large codebase.
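
The board-walking loop can be sketched in a few lines. `implement` and `verify` are hypothetical callables: in a real script, `implement` would launch a fresh agent session for the story and `verify` would run that story's acceptance checks. In this sketch a failed story stays at the front of the queue and is retried until `max_iterations` runs out.

```python
from typing import Callable

Story = dict  # e.g. {"id": "US-001", "title": "...", "passes": False}


def run_board(
    prd: dict,
    implement: Callable[[Story], None],
    verify: Callable[[Story], bool],
    max_iterations: int = 10,
) -> list[str]:
    """Move one story per iteration from 'todo' through 'in progress' to 'done'."""
    log: list[str] = []
    for _ in range(max_iterations):
        todo = [s for s in prd["userStories"] if not s["passes"]]
        if not todo:
            log.append("all stories done")
            break
        story = todo[0]  # always the first unfinished card on the board
        log.append(f"in progress: {story['id']}")
        implement(story)
        story["passes"] = verify(story)
        log.append(f"{'done' if story['passes'] else 'failed'}: {story['id']}")
    return log


if __name__ == "__main__":
    prd = {"userStories": [{"id": "US-001", "passes": False}, {"id": "US-002", "passes": False}]}
    attempts: dict[str, int] = {}

    def implement(story: Story) -> None:
        attempts[story["id"]] = attempts.get(story["id"], 0) + 1

    def verify(story: Story) -> bool:
        # Simulate US-002 failing its checks on the first attempt only.
        return not (story["id"] == "US-002" and attempts["US-002"] == 1)

    for line in run_board(prd, implement, verify):
        print(line)
```

Capping iterations keeps a story that never passes from burning budget indefinitely.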

This loop is also very cost-effective. While a senior developer might cost $150 an hour, a full run of Claude Code through an automated script might cost only $3 to $30, depending on the complexity of the feature. That makes rapid prototyping cheap: the cost of a failed attempt is roughly the price of a latte. For startups building niche tools, such as influencer tracking platforms or UGC sourcing engines, this atomic-user-story strategy allows the kind of shipping velocity that was previously reserved for well-funded enterprises.
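
The arithmetic is easy to sanity-check. The sketch below assumes Claude 3.5 Sonnet's list prices of $3 per million input tokens and $15 per million output tokens and ignores prompt caching; the per-iteration token counts in the example are illustrative guesses, not measurements.

```python
def iteration_cost(
    input_tokens: int,
    output_tokens: int,
    usd_per_million_input: float = 3.0,
    usd_per_million_output: float = 15.0,
) -> float:
    """Estimated USD cost of one agent iteration (no caching discounts)."""
    return (
        input_tokens / 1_000_000 * usd_per_million_input
        + output_tokens / 1_000_000 * usd_per_million_output
    )


if __name__ == "__main__":
    # Illustrative feature: 20 stories, ~150k input and ~10k output tokens each.
    per_story = iteration_cost(150_000, 10_000)
    total = 20 * per_story
    print(f"per story: ${per_story:.2f}, feature: ${total:.2f}")
```

At these assumed numbers, a 20-story feature lands around $12, inside the $3 to $30 range quoted above; check current pricing before relying on the figures.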

Long-Term vs. Short-Term Memory: Agents.md and Progress Logs

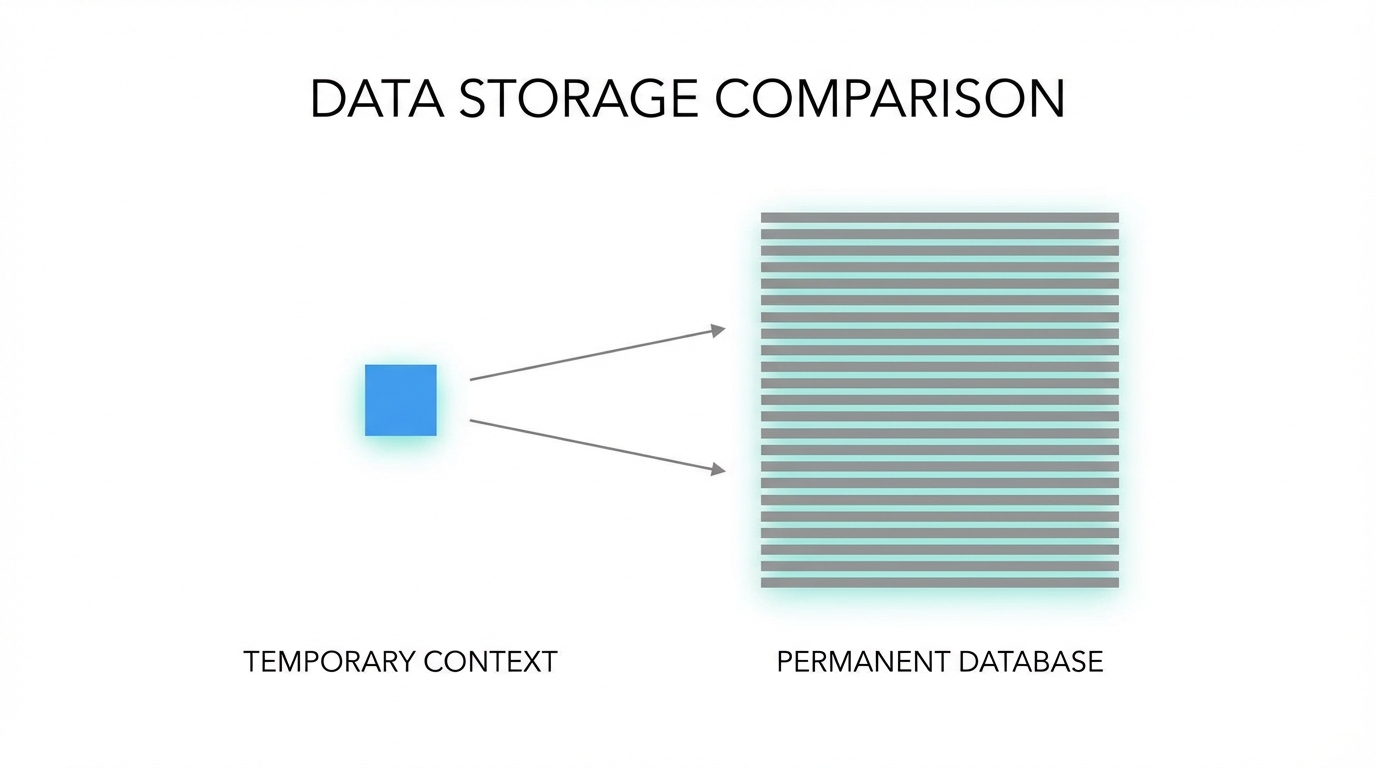

One of the biggest mistakes in prompt engineering for coding agents is treating every iteration as a blank slate. To build successfully, your agent needs two types of memory. First, a progress.txt file acts as short-term memory, logging what happened in the last three iterations so the agent knows which files it just touched. Second, agents.md files act as long-term memory. These are markdown files placed in specific folders that contain 'sticky notes' for the agent about that part of the codebase.
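
A minimal sketch of the short-term memory side, assuming each iteration appends one entry to progress.txt and the next prompt includes only the most recent entries. The entry layout, the `---` separator, and both helper names are assumptions for illustration.

```python
import tempfile
from datetime import datetime, timezone
from pathlib import Path

ENTRY_SEPARATOR = "\n---\n"  # assumed delimiter between log entries


def append_progress(path: Path, story_id: str, summary: str, files: list[str]) -> None:
    """Append one timestamped entry describing what the iteration did."""
    stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
    entry = f"[{stamp}] {story_id}: {summary}\nfiles: {', '.join(files)}"
    with path.open("a", encoding="utf-8") as f:
        f.write(entry + ENTRY_SEPARATOR)


def recent_progress(path: Path, n: int = 3) -> list[str]:
    """Return the last n entries (oldest first) to paste into the next prompt."""
    if not path.exists():
        return []
    text = path.read_text(encoding="utf-8")
    entries = [e for e in text.split(ENTRY_SEPARATOR) if e.strip()]
    return entries[-n:]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        log = Path(d) / "progress.txt"
        for i in range(1, 5):
            append_progress(log, f"US-00{i}", "story complete", [f"src/file{i}.py"])
        print("\n\n".join(recent_progress(log)))
```

Keeping only the last few entries in the prompt preserves recent context without letting the log itself crowd the context window.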

If the agent discovers a particular quirk in your database schema or a specific way your CSS framework handles padding, it should record that in the agents.md file. This prevents the agent from making the same mistake twice. With this compound engineering approach, your agents effectively get better at your specific project the longer they work on it, because every new iteration starts from the accumulated notes. It mimics the way a new hire eventually learns the 'unwritten rules' of a repository.
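
One way to wire this up, assuming notes live in files literally named agents.md inside the relevant folders: collect every agents.md between the repository root and the folder being edited, outermost first so broad notes come before local ones, and append new learnings as bullet points. Both helpers are hypothetical.

```python
import tempfile
from pathlib import Path

NOTES_NAME = "agents.md"


def notes_for(file_path: Path, repo_root: Path) -> list[Path]:
    """Return agents.md files from the repo root down to the file's folder."""
    folder = file_path.parent.resolve()
    root = repo_root.resolve()
    found = []
    while True:
        candidate = folder / NOTES_NAME
        if candidate.is_file():
            found.append(candidate)
        if folder == root or folder == folder.parent:
            break  # stop at the repo root (or the filesystem root)
        folder = folder.parent
    return list(reversed(found))  # outermost (most general) notes first


def record_learning(folder: Path, note: str) -> None:
    """Append a 'sticky note' to the folder's agents.md, creating it if needed."""
    with (folder / NOTES_NAME).open("a", encoding="utf-8") as f:
        f.write(f"- {note}\n")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        root = Path(d)
        (root / "src" / "db").mkdir(parents=True)
        record_learning(root, "run the linter before committing")
        record_learning(root / "src" / "db", "timestamps are stored as UTC")
        for notes in notes_for(root / "src" / "db" / "schema.py", root):
            print(notes.relative_to(root.resolve()), notes.read_text(encoding="utf-8"), sep="\n")
```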

Common Pitfalls in Multi-File Prompt Engineering

Even with a perfect PRD to JSON conversion, things can go wrong. The most common pitfall is the dependency chain: if Story B requires code from Story A, but Story A wasn't committed correctly, the agent will spiral as it builds on code that isn't there. Always make your Ralph script commit after every successful iteration. This allows an easy rollback if the agent takes a wrong turn. As a further safeguard, manually review the code every 5 to 10 iterations to make sure the architecture is still sound.
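
A sketch of the commit-and-rollback step using plain git commands through `subprocess`. The commit message format and helper names are assumptions; the important parts are that every passing story becomes its own commit and that a failed iteration can be discarded back to the last good one.

```python
import subprocess
import tempfile
from pathlib import Path


def git(repo: Path, *args: str) -> str:
    """Run a git command in `repo`, raising CalledProcessError on failure."""
    result = subprocess.run(
        ["git", *args], cwd=repo, check=True, capture_output=True, text=True
    )
    return result.stdout.strip()


def commit_story(repo: Path, story_id: str, title: str) -> str:
    """Stage everything and commit one story; return the new commit hash."""
    git(repo, "add", "-A")
    git(repo, "commit", "-m", f"feat: {story_id} - {title}")
    return git(repo, "rev-parse", "HEAD")


def discard_uncommitted(repo: Path) -> None:
    """Roll a failed iteration back to the last good commit."""
    git(repo, "reset", "--hard", "HEAD")  # undo changes to tracked files
    git(repo, "clean", "-fd")  # delete untracked files and directories


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        repo = Path(d)
        git(repo, "init", "-q")
        git(repo, "config", "user.email", "agent@example.com")
        git(repo, "config", "user.name", "Ralph Loop")
        (repo / "search.py").write_text("def search(q):\n    return []\n")
        print(commit_story(repo, "US-001", "Add search stub"))
```

Because each story is one commit, rolling back a bad story later is a single `git revert` rather than untangling mixed changes.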

Another pitfall is overscoping the atom. If an 'atomic' story takes more than 10-15 minutes for the AI to process, it is not atomic. Break it down further. For instance, if you are building a dashboard to monitor influencer campaign performance, don't make the story "Build the dashboard." Make the stories "Create the database schema for campaign metrics," followed by "Build the API endpoint for fetching metrics," and then "Render the line chart for views over time." This granularity is what makes AI agent workflow automation reliable.
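
A rough automated guard against overscoped atoms, using the number of acceptance criteria as a proxy for story size: flag any story with no criteria or with more criteria than one iteration can realistically satisfy. The `MAX_CRITERIA` threshold is a hypothetical starting point, not a rule from the Ralph workflow; the example reuses the dashboard split described above, with made-up criteria.

```python
from dataclasses import dataclass, field

MAX_CRITERIA = 4  # hypothetical; tune to what your agent finishes in one pass


@dataclass
class Story:
    id: str
    title: str
    acceptance_criteria: list[str] = field(default_factory=list)


def scope_warnings(story: Story) -> list[str]:
    """Flag stories that are unverifiable or probably too big for one iteration."""
    warnings = []
    count = len(story.acceptance_criteria)
    if count == 0:
        warnings.append(f"{story.id}: no verifiable acceptance criteria")
    elif count > MAX_CRITERIA:
        warnings.append(
            f"{story.id}: {count} acceptance criteria (max {MAX_CRITERIA}), consider splitting"
        )
    return warnings


too_big = Story("US-010", "Build the dashboard", [
    "campaign_metrics table exists with views and date columns",
    "migration runs cleanly on an empty database",
    "GET /api/metrics returns metrics for a campaign",
    "endpoint returns 404 for an unknown campaign",
    "line chart renders views over time",
    "chart shows an empty state when there is no data",
])

split = [
    Story("US-011", "Create the database schema for campaign metrics", too_big.acceptance_criteria[0:2]),
    Story("US-012", "Build the API endpoint for fetching metrics", too_big.acceptance_criteria[2:4]),
    Story("US-013", "Render the line chart for views over time", too_big.acceptance_criteria[4:6]),
]

if __name__ == "__main__":
    print(scope_warnings(too_big))
    print([w for s in split for w in scope_warnings(s)])
```

A criteria count is only a proxy; a story that routinely exceeds the 10 to 15 minute budget should be split even if it passes this check.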

Conclusion: Building the Future While You Sleep

The transition from manual coding to AI-powered automation is not about finding a smarter model; it's about building a better workflow. By using atomic user stories, automating your PRD to JSON conversion, and enforcing verifiable acceptance criteria, you turn an unpredictable chatbot into a dependable, tireless engineering team. Whether you are building the next big SaaS or a specialized tool like the influencer discovery engine at Stormy AI, the secret to scale is small, repeatable, and verifiable units of work. Start small, iterate often, and let the agents do the heavy lifting while you focus on the vision.