In 2026, the traditional search bar is no longer the primary gateway to commerce. For the modern mobile shopper, the camera has become the new keyboard. As visual AI matures, leading retailers are moving beyond simple keyword matching toward immersive, image-driven discovery. This shift isn't just about aesthetics; it responds to consumers who take in images far more quickly than text (the often-quoted figure of 60,000 times faster is widely repeated but has no traceable source). Brands like ASOS and IKEA have set the gold standard, showing that bridging the gap between inspiration and purchase through visual search can lift both engagement and conversion rates.

The visual search market has reached a fever pitch this year. With the global sector projected to hit $151.60 billion by 2032, according to Envive.ai, the technology has transitioned from an "innovative extra" to a core revenue engine. Today, Google Lens alone facilitates over 20 billion searches monthly, with a staggering 4 billion of those searches specifically tied to shopping intent.

The $151 Billion Shift: Visual Search in 2026

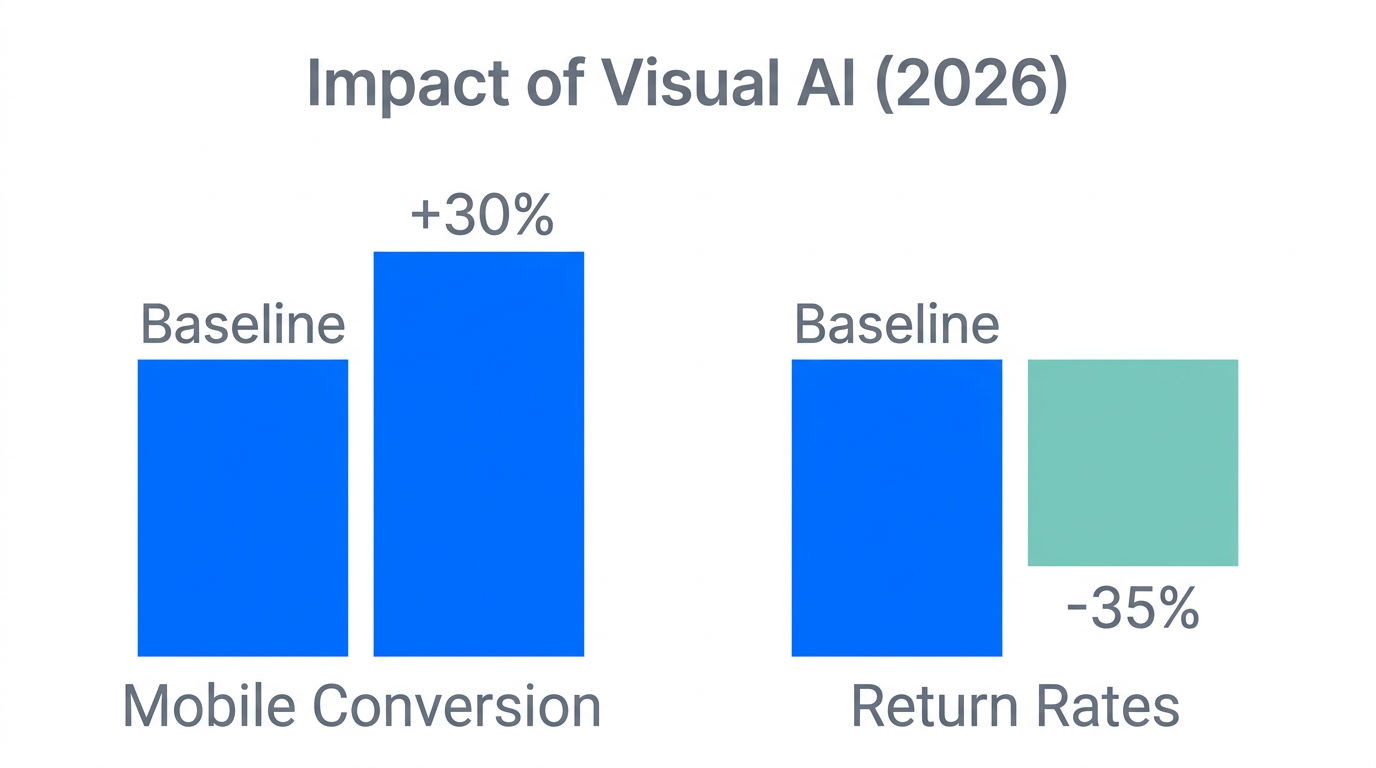

The acceleration of visual AI tools has fundamentally changed the 2026 e-commerce landscape. We are seeing a move toward multimodal search, where users don't just upload a photo; they refine it with text. Imagine a customer photographing a vintage chair and typing "available in velvet?" This kind of refinement is driving conversion-rate lifts of up to 30% for early adopters. Retailers are no longer just selling products; they are providing a visual concierge service that understands intent better than a text string ever could.
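To make the photo-plus-text idea concrete, here is a minimal Python sketch of the refinement step. It assumes a vision model has already scored each catalog item against the uploaded photo; the `Candidate` class, the `refine` function, the SKUs, and the scores are all hypothetical and only illustrate how a text refinement can narrow visually similar results.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    sku: str
    material: str
    # Similarity to the uploaded photo, 0.0 to 1.0.
    # Assumption: precomputed by some image-matching model.
    visual_score: float


def refine(candidates, text_query, min_score=0.5):
    """Keep visually similar items whose material appears in the text refinement."""
    query = text_query.lower()
    matches = [
        c for c in candidates
        if c.visual_score >= min_score and c.material.lower() in query
    ]
    # Best visual match first.
    return sorted(matches, key=lambda c: c.visual_score, reverse=True)


# Hypothetical results for a photo of a vintage chair.
catalog_hits = [
    Candidate("chair-001", "velvet", 0.91),
    Candidate("chair-002", "leather", 0.88),
    Candidate("chair-003", "velvet", 0.74),
    Candidate("chair-004", "velvet", 0.32),
]

results = refine(catalog_hits, "available in velvet?")
print([c.sku for c in results])  # prints ['chair-001', 'chair-003']
```

A production system would more likely parse the text with a language model than with substring matching, but the ordering of steps (visual shortlist first, text filter second) is the part this sketch is meant to show.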

| Metric | Traditional Text Search | AI Visual Search (2026) |
|---|---|---|
| Average Conversion Rate | 2.5% - 3.5% | Up to 30% Lift |
| Customer Engagement | Baseline | 25% Increase |
| Primary Input | Keywords / Text | Images / AR / Multimodal |
| User Intent Accuracy | Moderate (relies on correct terms) | High (matches visual attributes) |

"Visual search is the bridge between the physical inspiration of the world and the digital convenience of the store: it turns every smartphone into a doorway to your inventory."

ASOS Style Match: A Masterclass in Mobile-First Retention

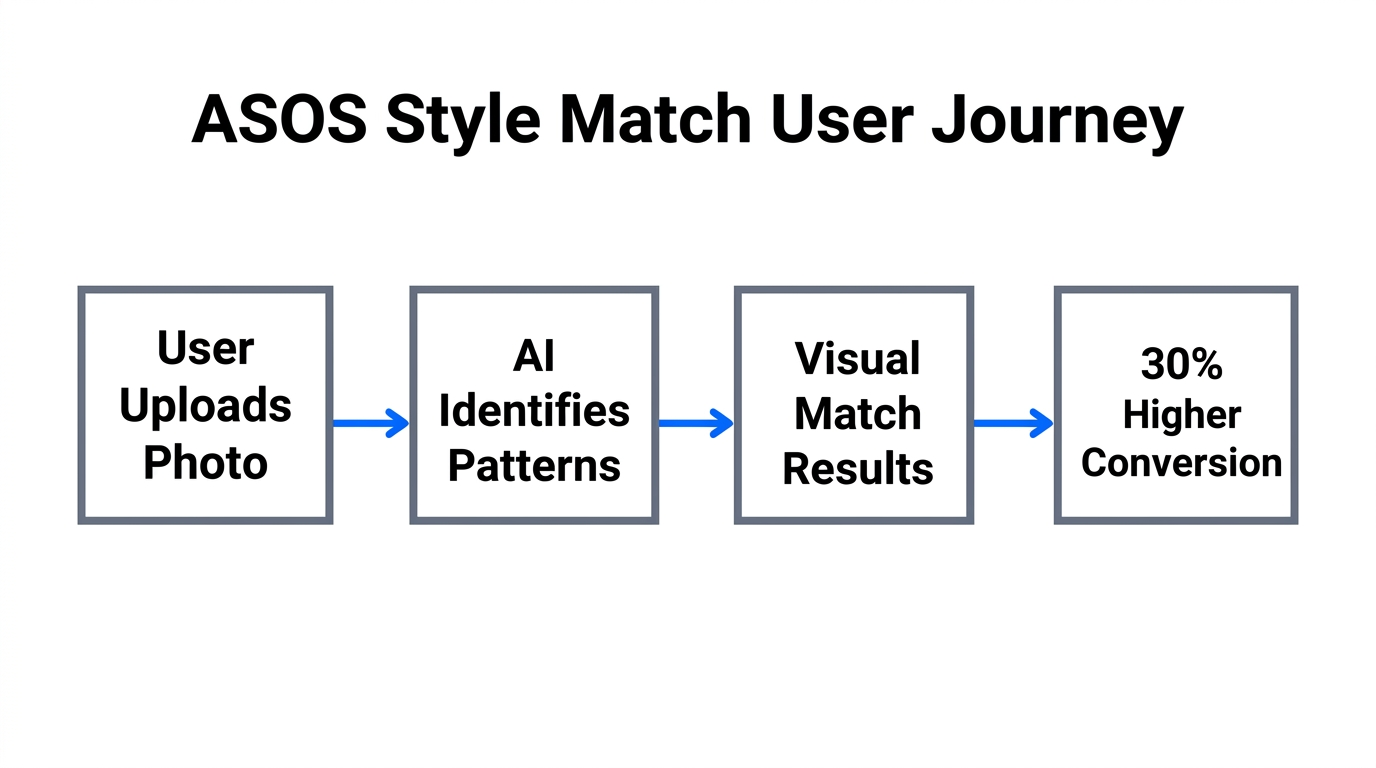

ASOS was one of the first major fashion retailers to recognize that its audience lives on platforms like Instagram and TikTok. Its Style Match tool lets users upload screenshots or real-world photos directly into the ASOS app to find visually similar items. This feature isn't just a gimmick; it addresses the "paradox of choice" in a catalog that features over 850 brands and 85,000 products.

According to Netscribes data, Style Match has significantly boosted mobile engagement. When a user sees a creator wearing a specific outfit on social media, finding that item takes a single upload. This instant gratification keeps Gen Z and Millennial shoppers within the ASOS ecosystem rather than bouncing to a competitor's site to hunt for keywords.

The IKEA Place Playbook: Solving 'Spatial Doubt'

While ASOS focuses on style, IKEA addresses one of the biggest hurdles in furniture e-commerce: spatial doubt. Will this sofa fit? Does the oak finish clash with the floor? The IKEA Place app uses AR-powered visual search to allow customers to place true-to-scale 3D models of furniture in their actual homes. In 2026, this has evolved into a sophisticated tool that doesn't just "show" the product but analyzes the room's lighting and dimensions to ensure a perfect match.

The impact on the bottom line is hard to ignore. According to research from Xictron, IKEA has seen a 35% reduction in return rates since the widespread adoption of AR visual tools. By allowing customers to "try before they buy" in a digital-physical hybrid space, IKEA has cut the costs associated with reverse logistics, a multibillion-dollar problem in the industry.

"Reducing e-commerce returns by 35% isn't just a logistical win; it's a sustainability and profitability revolution powered by AR visual search."

UX Best Practices: Why the Camera Icon Matters

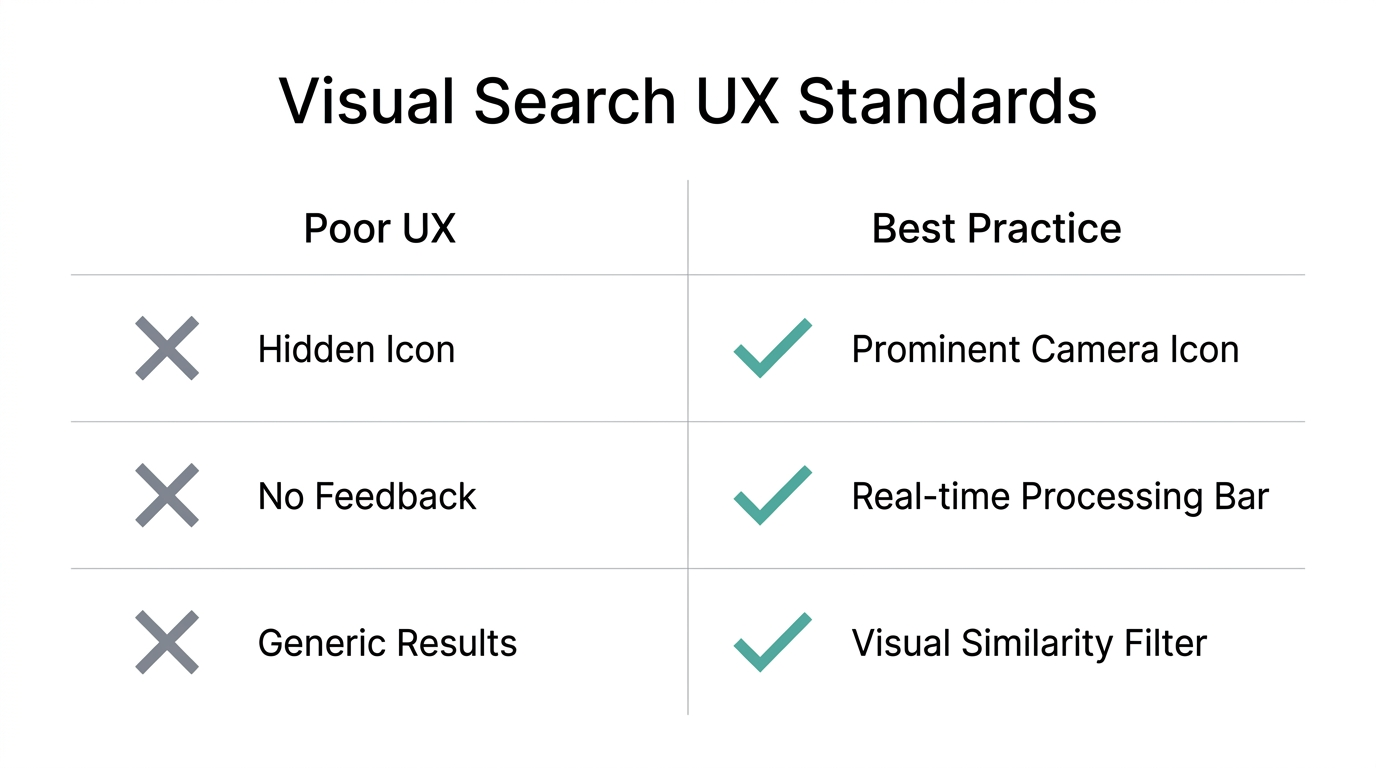

Implementation is as important as the technology itself. A common mistake for many retailers is hiding the visual search feature deep within menus. Data from Fast Simon suggests that if users cannot find the "camera" icon within 1.5 seconds of opening the search bar, adoption rates drop by over 50%. The icon should be placed directly inside the primary search field, styled in a way that clearly communicates its function.

Furthermore, poor mobile performance is the silent killer of visual search success. With 71% of visual searches occurring on mobile devices, a slow-loading experience will destroy conversion. Brands must prioritize mobile technical optimization so that heavy AI processing doesn't add latency.

Leveraging Visual AI for 'Complete the Look'

One of the most powerful applications of visual AI in 2026 is the "Complete the Look" engine. By analyzing the visual attributes of what a customer is currently viewing, AI can suggest complementary items that match in color, texture, and style. This hyper-personalization leads to an average 25% lift in session value.

Platforms like Layers now allow brands to create these recommendation loops in real time. Instead of showing "People also bought," the AI shows "Items that visually complement this." For instance, if a user is looking at a pair of hiking boots, the AI identifies the rugged aesthetic and suggests a matching waterproof backpack and technical socks, effectively upselling without being intrusive.
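One common way to build this kind of "visually complements" ranking is to compare style vectors with cosine similarity. The Python sketch below is an illustration under stated assumptions, not a description of how Layers or any other platform works: the three-number style vectors (rugged, formal, colorful) are invented toy values, and `complete_the_look` is a hypothetical helper. In practice the vectors would come from an image-embedding model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def complete_the_look(viewed, catalog, top_n=2):
    """Suggest items from other categories whose style is closest to the viewed item."""
    # Skip same-category items: the goal is complements, not substitutes.
    candidates = [item for item in catalog if item["category"] != viewed["category"]]
    ranked = sorted(
        candidates,
        key=lambda item: cosine_similarity(viewed["style"], item["style"]),
        reverse=True,
    )
    return [item["name"] for item in ranked[:top_n]]


# Toy style vectors: (rugged, formal, colorful). All values are made up.
boots = {"name": "hiking boots", "category": "footwear", "style": [0.9, 0.1, 0.2]}
catalog = [
    {"name": "waterproof backpack", "category": "bags", "style": [0.8, 0.1, 0.3]},
    {"name": "technical socks", "category": "accessories", "style": [0.7, 0.2, 0.2]},
    {"name": "silk tie", "category": "accessories", "style": [0.1, 0.9, 0.4]},
    {"name": "trail running shoes", "category": "footwear", "style": [0.9, 0.1, 0.3]},
]

print(complete_the_look(boots, catalog))
# prints ['waterproof backpack', 'technical socks']
```

Filtering out the viewed item's own category is the design choice that separates "complete the look" from plain "similar items": the trail running shoes are the closest visual match but would compete with the boots rather than complement them.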

A Playbook for Mid-Sized Brands

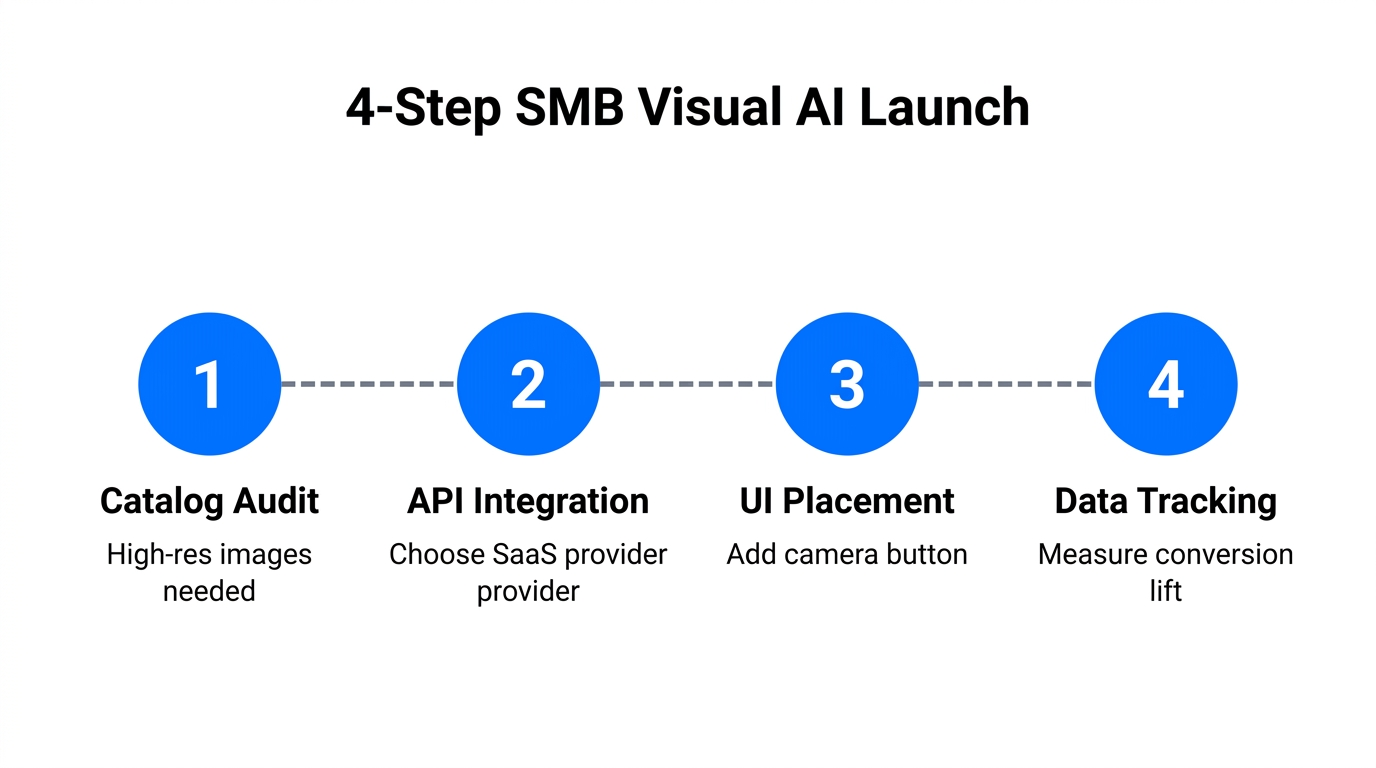

You don't need IKEA's budget to implement visual discovery. Small-to-mid-sized brands can leverage third-party AI platforms to compete. Tools like ViSenze and Syte offer plug-and-play visual search and automated product tagging that can be integrated into platforms like Shopify or Meta Ads Manager flows.

Step 1: Optimize for 'Machine Vision'

AI models require high-quality data to function. Ensure each product has 5–8 high-resolution images (at least 1080px) from multiple angles. This helps the AI build "confidence" in its matches. Replace generic filenames like photo1.jpg with descriptive names like emerald-green-velvet-armchair.jpg to assist in indexing, as recommended by AdsX.
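The renaming and image checks above are easy to automate. The following Python sketch uses only the standard library; `descriptive_filename` and `audit_images` are hypothetical helper names, and treating "at least 1080px" as a minimum image width is an assumption, since the guideline does not say which dimension it refers to.

```python
import re
import unicodedata


def descriptive_filename(product_title, extension="jpg"):
    """Turn a product title into a lowercase, hyphen-separated filename."""
    # Drop accents and other non-ASCII characters.
    ascii_title = (
        unicodedata.normalize("NFKD", product_title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Collapse every run of non-alphanumeric characters into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_title.lower()).strip("-")
    return f"{slug}.{extension}"


def audit_images(image_widths_px, min_count=5, max_count=8, min_px=1080):
    """Return a list of problems for one product's image set (empty if none)."""
    problems = []
    count = len(image_widths_px)
    if not min_count <= count <= max_count:
        problems.append(f"has {count} images, expected {min_count} to {max_count}")
    # Assumption: the 1080px guideline is checked against image width.
    too_small = [w for w in image_widths_px if w < min_px]
    if too_small:
        problems.append(f"{len(too_small)} image(s) narrower than {min_px}px")
    return problems


print(descriptive_filename("Emerald Green Velvet Armchair"))
# prints emerald-green-velvet-armchair.jpg

print(audit_images([1200, 1080, 800]))  # too few images, and one is too small
```

Running the audit across a product feed before upload gives a quick list of listings that need new photography.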

Step 2: Implement Structured Data

Use Schema Markup to tell search engines and AI agents exactly what they are looking at. Implementing Product and ImageObject JSON-LD schemas is essential for appearing in Google’s multisearch results. Tools like Yoast SEO can help manage this technical layer without requiring a developer.
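As a rough illustration of what that markup looks like, the Python sketch below builds a minimal `Product` object with a nested `ImageObject` and wraps it in a JSON-LD script tag. The `product_jsonld` helper, the product details, and the example.com URL are hypothetical, and the output has not been validated against Google's rich-result requirements, which typically expect additional properties such as offers or reviews.

```python
import json


def product_jsonld(name, description, image_url):
    """Build a minimal schema.org Product with a nested ImageObject."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "image": {
            "@type": "ImageObject",
            "contentUrl": image_url,
            "caption": name,
        },
    }


# Hypothetical product data for illustration only.
markup = product_jsonld(
    name="Emerald Green Velvet Armchair",
    description="Mid-century armchair upholstered in emerald green velvet.",
    image_url="https://example.com/images/emerald-green-velvet-armchair.jpg",
)

# JSON-LD is embedded in the page inside a script tag of this type.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(snippet)
```

Generating the markup from the product database, rather than writing it by hand, keeps names, captions, and image URLs consistent with the descriptive filenames from Step 1.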

Step 3: Partner with Visual-First Creators

To drive adoption of your visual search tools, you need content that demonstrates how to use them. This is where partnering with UGC creators becomes vital. An AI-powered platform like Stormy AI can help you discover creators who specialize in "Try-On Hauls" or "Room Makeovers." These creators can show their audience how to use your visual search tool to find looks, effectively bridging the gap between social content and your store's inventory.

"In 2026, the brands that win aren't just the ones with the best products, but the ones that make those products easiest to find with a single click of a camera."

Conclusion: The Future of Agentic Shopping

As we look toward the remainder of 2026, the rise of agentic shopping is the next frontier. AI agents will soon use visual search autonomously to compare products across the web for their human users. For brands, this means that visual discoverability is no longer optional—it is the baseline for survival. By following the lead of ASOS and IKEA, optimizing your technical SEO via 1SEO guidelines, and leveraging AI tools for discovery and outreach, you can transform your mobile experience into a high-conversion visual powerhouse.

Ready to find the creators who will showcase your brand's visual innovation? Start your search on Stormy AI today and lead the visual commerce revolution.