In 2026, the influencer marketing landscape has undergone a seismic shift. We are no longer just managing human relationships; we are overseeing complex ecosystems of autonomous software and virtual personas. With the global influencer marketing industry having surpassed the $32.55 billion mark last year according to SuperAGI, the stakes for CMOs have never been higher. As 69.1% of marketers now integrate AI into their daily operations on platforms like TikTok and Instagram, the line between efficiency and ethical liability has blurred. This guide serves as a risk-management playbook for navigating the legal and moral complexities of the AI influencer agent era.

1. The FTC 'Double Disclosure' Rule: Beyond the Simple #Ad

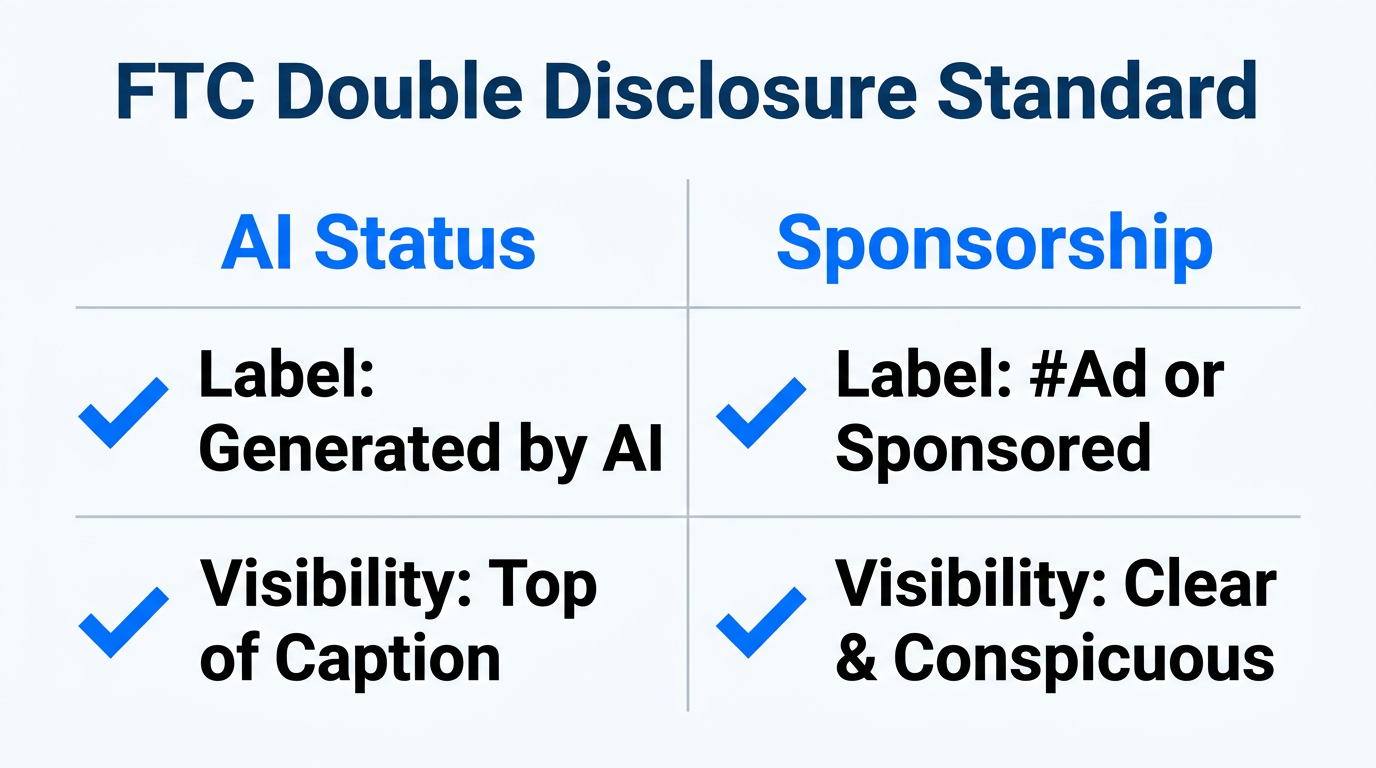

The most common compliance failure in 2026 is a lack of transparency about the nature of the creator itself. The industry was once satisfied with a simple #ad tag, but Adweek reports that the Federal Trade Commission (FTC) now enforces 'Double Disclosure': brands must label content as both sponsored and AI-generated. If your virtual influencer, like the digital models managed by The Clueless, posts a product recommendation, the disclosure must be unambiguous to the average consumer.

Failing to follow these FTC AI disclosure guidelines does more than invite legal trouble; it destroys brand equity. Research from Creators Synergy shows that AI influencers maintain a high 2.84% engagement rate, but audience trust evaporates the moment a follower feels deceived. Modern audiences in 2026 value transparency over perfection. When using agentic AI platforms to handle your outreach, make these compliance checks a non-negotiable step in every automated script and workflow.
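To make the "non-negotiable step" concrete, here is a minimal sketch of a pre-publish check that blocks a caption unless it carries both a sponsorship tag and an AI tag. The tag lists and function names are illustrative assumptions, not official FTC wording; the exact labels your brand needs should come from legal counsel.

```python
import re

# Hypothetical tag sets for illustration only; not official FTC language.
SPONSOR_TAGS = {"#ad", "#sponsored", "#paidpartnership"}
AI_TAGS = {"#aigenerated", "#virtualinfluencer"}


def missing_disclosures(caption: str) -> list:
    """Return the disclosure types that the caption does not carry."""
    # Collect every hashtag in the caption, lowercased for comparison.
    tags = {tag.lower() for tag in re.findall(r"#\w+", caption)}
    missing = []
    if not tags & SPONSOR_TAGS:
        missing.append("sponsorship")
    if not tags & AI_TAGS:
        missing.append("ai_generated")
    return missing


def ready_to_post(caption: str) -> bool:
    """A caption is publishable only when both disclosures are present."""
    return not missing_disclosures(caption)
```

An outreach or scheduling agent could call `ready_to_post` before every publish action and hold any caption that fails for human review. A hashtag check is only a floor: it cannot tell whether the disclosure is actually prominent or clear to the average consumer.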

"In 2026, transparency is the only currency that doesn't devalue. If the audience can't tell where the human ends and the algorithm begins, you've already lost their trust."2. Preventing 'Digital Plagiarism' and the 'Synthia Error'

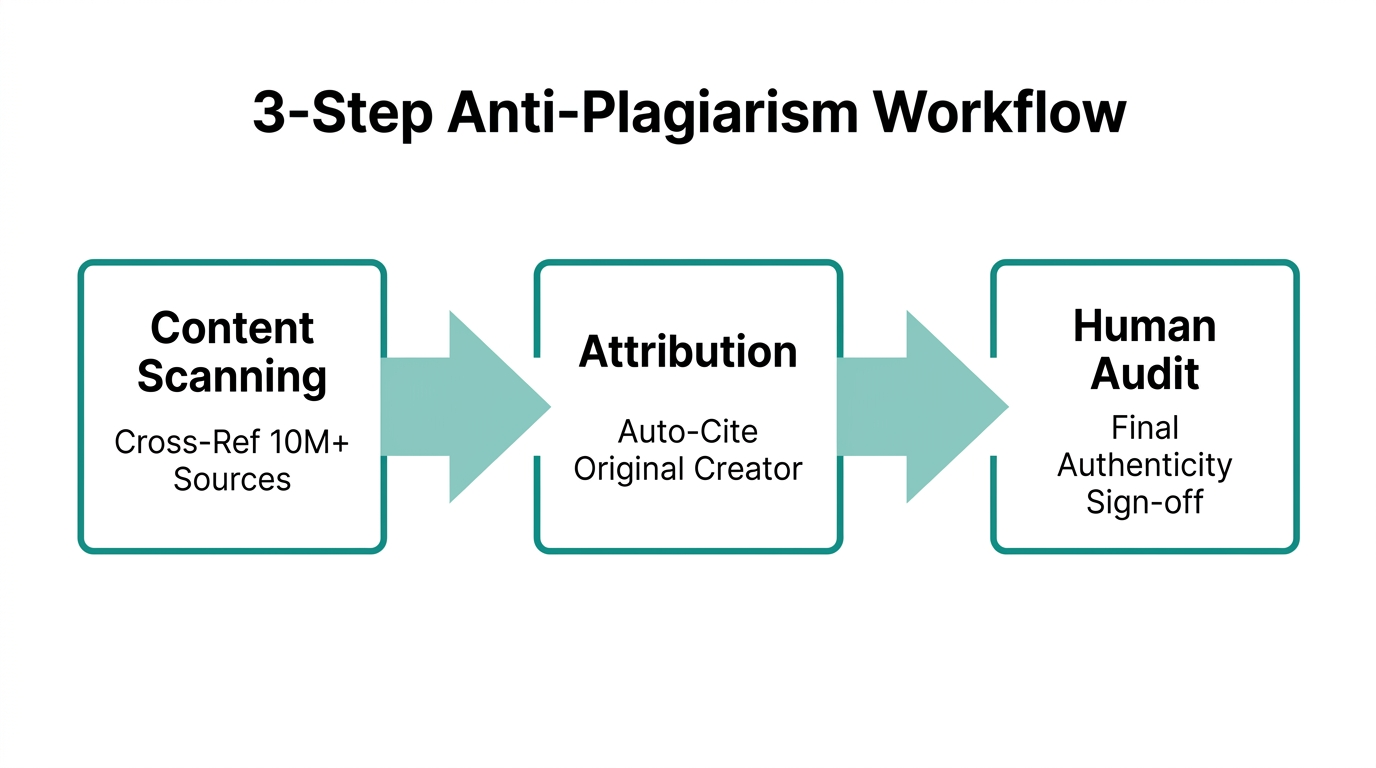

One of the most dangerous pitfalls in AI marketing risk management is the unauthorized use of human likenesses. The 'Synthia Error', named after a high-profile 2025 lawsuit, occurs when an AI influencer is trained on the physical traits, voice, or 'vibe' of real human creators without their explicit consent. This form of 'Digital Plagiarism' has led to massive class-action suits and the immediate de-platforming of brand accounts. According to 5W PR, these errors often stem from poorly vetted third-party AI agencies or 'black box' training models.

| Risk Factor | Legal Consequence | Mitigation Strategy |
|---|---|---|
| Unlicensed Training | Copyright Infringement | Use LoRA or DreamBooth with proprietary data. |
| Voice Cloning | Right of Publicity Violation | Secure written 'Digital Persona' licenses via DocuSign. |
| Vibe Mimicry | Trade Dress Disputes | Ensure the AI persona has a distinct, documented backstory. |

To stay compliant, CMOs must demand a clear 'data lineage' from their AI partners. If you are building a virtual mascot, as many brands are for Metaverse activations, the training data must be ethically sourced and legally cleared. Using a tool like Stormy AI can help you discover human creators who are open to licensing their likeness, ensuring a responsible AI influencer strategy from the start.

3. The Ethics of Automated Community Management

In 2026, AI agents aren't just finding creators; they are talking to them. Autonomous agents now handle the 'boring 80%' of administrative work, including outreach and rate negotiation. While this offers a 40–60% reduction in campaign costs per Ignite Social Media, over-automation in community management is a trap. When an AI agent responds to sensitive DMs or comments with 'uncanny valley' logic, it creates a PR nightmare.

The consensus among experts, such as Shahrzad Rafati of RHEI, is that AI should act as a 'tireless digital C-suite' for logistics, not a replacement for human empathy. As human influencer Dana Brooke noted, brands hire humans for their 'messy authenticity'—something that marketing experts agree is difficult to replicate with pixels. Automated community management should be reserved for transactional queries (e.g., 'What is your shipping policy?'), while any nuanced brand sentiment should be escalated to a human representative using tools like Zendesk.
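The split between transactional and nuanced messages can be sketched as a simple routing rule. The keyword lists below are hypothetical placeholders, and a production system would likely use a proper intent or sentiment classifier; the point is the ordering, where sensitive signals are checked first and anything unrecognized defaults to a human.

```python
from dataclasses import dataclass

# Hypothetical keyword lists for illustration; tune these to your own brand.
SENSITIVE_KEYWORDS = ("refund", "allergic", "scam", "lawsuit", "disappointed")
TRANSACTIONAL_KEYWORDS = ("shipping", "order status", "tracking", "store hours")


@dataclass
class Routing:
    handler: str  # "agent" or "human"
    reason: str


def route_message(text: str) -> Routing:
    """Decide whether an automated agent or a human should answer."""
    lowered = text.lower()
    # Sensitive signals win even if the message also looks transactional.
    for word in SENSITIVE_KEYWORDS:
        if word in lowered:
            return Routing("human", f"sensitive keyword: {word}")
    for word in TRANSACTIONAL_KEYWORDS:
        if word in lowered:
            return Routing("agent", f"transactional keyword: {word}")
    # Anything the rules do not recognize is escalated rather than guessed at.
    return Routing("human", "unclassified, defaulting to human review")
```

Defaulting to the human path is the design choice that matters here: a missed automation opportunity costs a few minutes, while a tone-deaf automated reply can cost the relationship.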

"AI agents amplify what’s already organized; they don’t rescue what’s broken. You cannot hand an agent your chaos and expect order." — David Mainiero, Chief AI Officer.4. Data Hygiene: Avoiding 'Hallucinated' Metrics

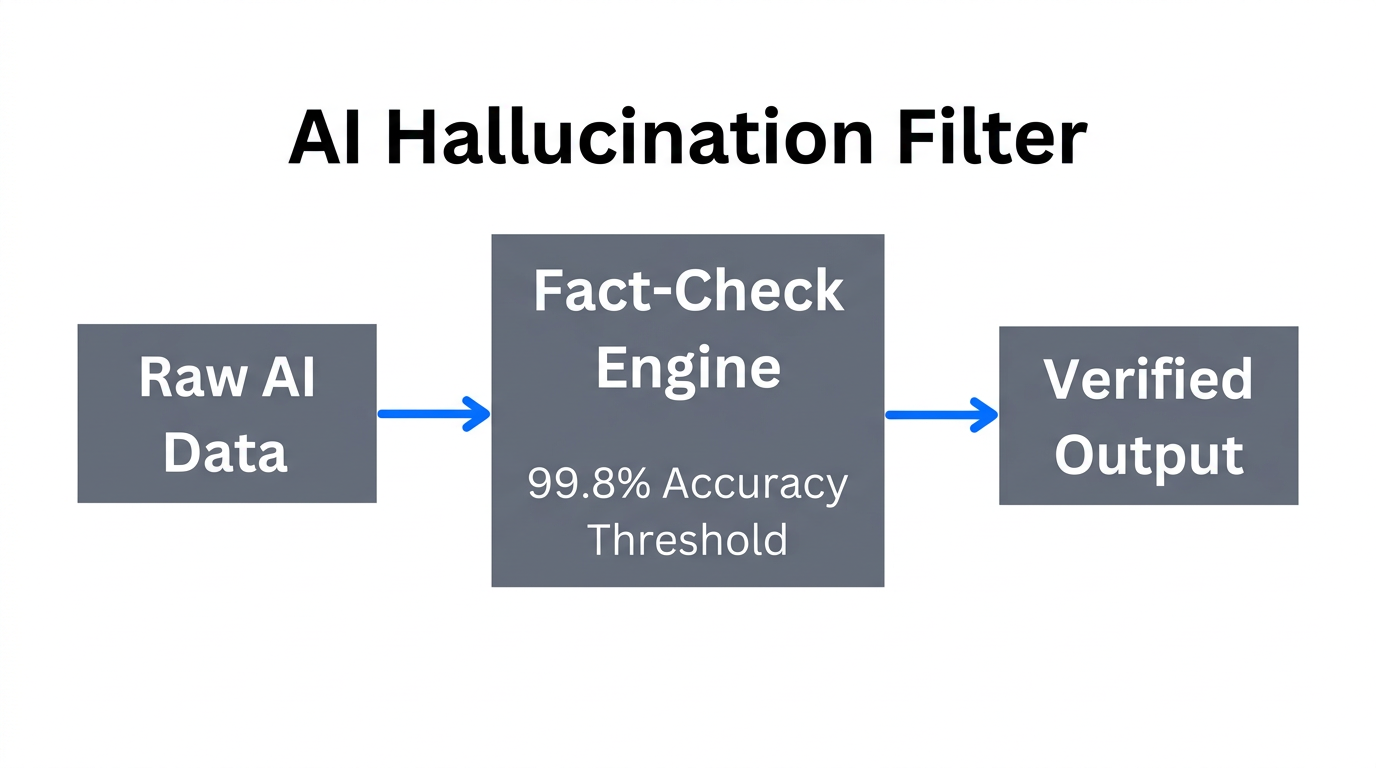

Perhaps the most insidious mistake in 2026 is feeding AI agents 'dirty data.' AI agents require structured inputs, such as historical engagement rates and past campaign ROI, to function effectively. When these agents are fed outdated spreadsheets or incomplete CRM data, they begin to 'hallucinate' performance metrics. This leads to brands wasting budgets on influencers who appear high-performing on paper but have zero actual reach or audience quality. According to AIPoweredDigital, this is the leading cause of failed AI-driven campaigns this year.

To prevent this, brands must implement 'Data Scaffolding': cleaning the data in CRM systems like Pipedrive or in internal databases before deploying any autonomous agents. Platforms like Stormy AI solve this by providing real-time, AI-verified creator data, ensuring that your outreach agents operate on facts rather than fabrications. Without data hygiene, your 2026 influencer marketing compliance strategy is built on sand.
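As a rough sketch of what such a cleaning pass might look like, the snippet below rejects creator records that are incomplete, out of range, or stale before any agent sees them. The `CreatorRecord` fields and the 90-day freshness window are assumptions made for illustration; they do not reflect the schema of Pipedrive or any other CRM.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

MAX_AGE = timedelta(days=90)  # assumed freshness window, adjust as needed


@dataclass
class CreatorRecord:
    handle: str
    followers: Optional[int]
    engagement_rate: Optional[float]  # percentage, e.g. 2.84
    last_updated: Optional[date]


def record_problems(rec: CreatorRecord, today: date) -> list:
    """List the reasons a record is unsafe to hand to an agent."""
    problems = []
    if rec.followers is None or rec.followers <= 0:
        problems.append("missing or non-positive follower count")
    if rec.engagement_rate is None or not 0 <= rec.engagement_rate <= 100:
        problems.append("missing or out-of-range engagement rate")
    if rec.last_updated is None or today - rec.last_updated > MAX_AGE:
        problems.append("stale or undated record")
    return problems


def split_clean(records: list, today: date) -> tuple:
    """Return (clean records, {handle: problems}) for the rejected rest."""
    clean, rejected = [], {}
    for rec in records:
        problems = record_problems(rec, today)
        if problems:
            rejected[rec.handle] = problems
        else:
            clean.append(rec)
    return clean, rejected
```

Only the `clean` list would be passed to discovery or outreach agents; the `rejected` mapping gives the marketing team a to-do list of records to refresh or drop.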

5. Building a Transparent Brand Identity in the Era of Pixels

Finally, the most successful brands in 2026 are those that embrace the 'Human-in-the-Loop' (HITL) model. This strategy uses AI agents for the heavy lifting—discovery, enrichment, and initial outreach—while keeping human marketers in charge of the 'Vibe Check' and final contract approval. Whether you are working with virtual icons like Lil Miquela or India's Kyra from FUTR Studios, the creative direction must remain human-centric.

We are seeing a rise in niche specialists: AI experts in fields like quantum computing or sustainable fashion. These personas provide deep credibility but require a transparent brand identity to thrive. If your brand follows a responsible AI influencer strategy, showcase that innovation proudly, for example with high-quality assets from Canva, rather than hiding it. Brands that try to pass off virtual models as real humans are almost always caught by the 2026 'pixel-detecting' community, leading to irreparable reputational damage.

"The future of influencer marketing is a hybrid. AI provides the scale, but humans provide the soul. Don't let your agents run your brand into a wall of automation."Conclusion: The Compliance Playbook for 2026

Navigating the world of AI influencer agents in 2026 requires a balance of technological bravery and ethical caution. By adhering to the FTC's double disclosure rules, respecting intellectual property, maintaining rigorous data hygiene, and keeping a human in the loop, CMOs can harness the power of AI without falling into the trap of 'uncanny valley' marketing.

For teams looking to automate their workflow responsibly, tools like Stormy AI offer the perfect middle ground: an AI-powered search engine and outreach agent that empowers human marketers rather than replacing them. In the end, the goal of AI influencer agent ethics is simple—use the machine to find the creators, but keep the human connection at the heart of the campaign. Compliance is not a hurdle; it is the foundation of long-term ROI.