The traditional SEO playbook is being rewritten by a process most marketers have not heard of yet. While we’ve spent two decades optimizing for single keywords and backlink clusters, platforms like ChatGPT and Perplexity use a retrieval technique known as AI fanning (often called query fan-out). This mechanism doesn't just look for a match; it expands a single user intent into a web of synthetic queries so that the response is as comprehensive as possible. For product founders and technical SEOs, understanding this LLM search mechanism is no longer optional: it is the difference between being the top-cited source in an AI response and not being cited at all.

The Anatomy of a Query: How AI Fanning Works

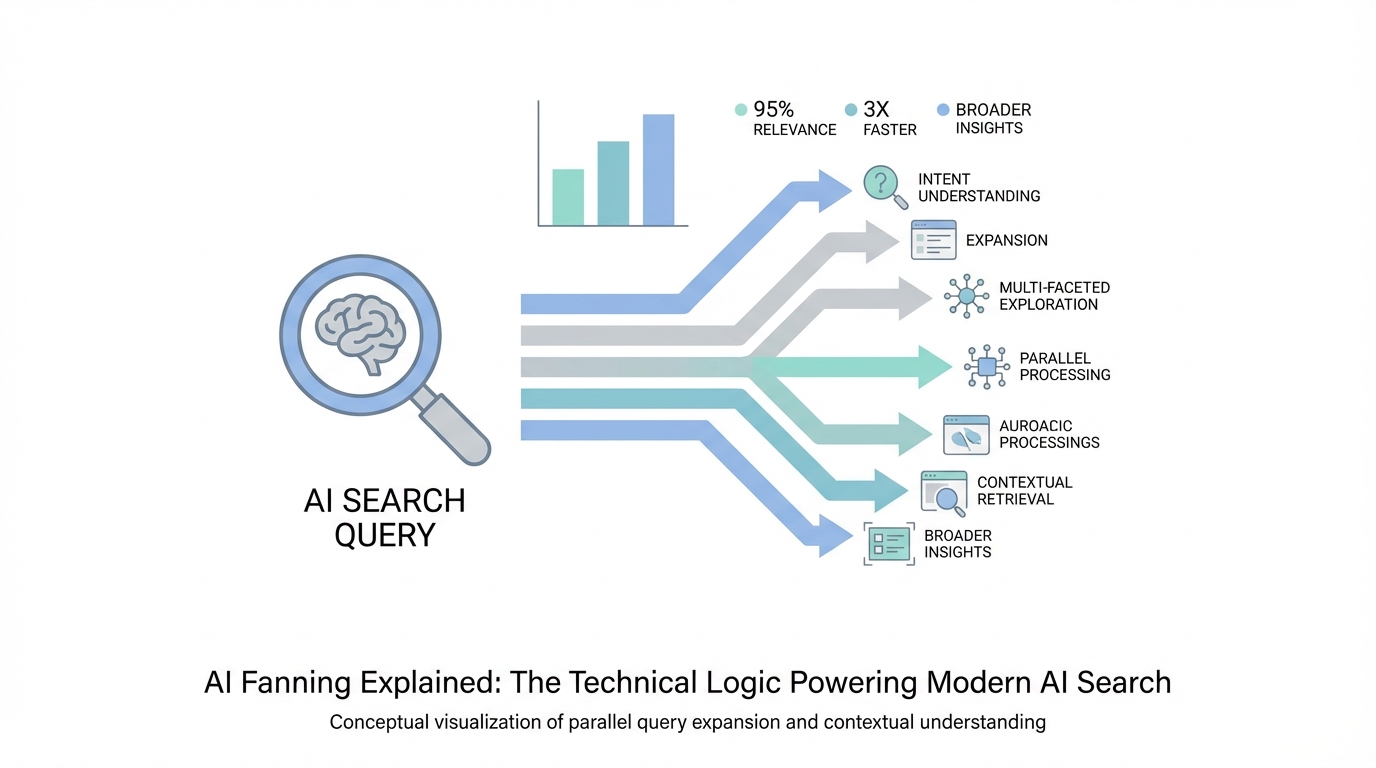

When a user types a prompt into an AI search engine, for example "best funnel building software for mobile devices", the system does not simply pass that string into a search index. Instead, it engages in what is often called AI fanning. The AI takes the initial prompt and programmatically expands it into approximately 100 derivative queries. These are hyper-descriptive, multi-angle questions that together map out the user's intent.

Think of it as a synthetic FAQ page generated on the fly. If the user asks about CRM software, the LLM search mechanism might generate variations like "most affordable CRM for small med spas," "CRM software with the best mobile app performance 2025," or "security features of top-tier CRMs." By fanning out the search, the AI tries to capture niche details that would otherwise stay buried deep in the web. This process relies heavily on the model's ability to predict relevant context before it even queries the live web.
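To make the expansion step concrete, here is a minimal sketch in Python. It is a toy illustration, not how any AI search product actually works: real systems presumably use the language model itself to write the derivative queries, whereas this example simply combines a seed query with hand-picked modifiers. The function name `fan_out` and the `audiences` and `angles` lists are assumptions made up for this example.

```python
from itertools import product


def fan_out(seed: str, audiences: list[str], angles: list[str], limit: int = 100) -> list[str]:
    """Expand one seed query into up to `limit` derivative queries.

    Toy stand-in for LLM-driven fanning: every angle is paired with
    every audience to produce a more specific variation of the seed.
    """
    queries = [f"{angle} {seed} for {audience}" for angle, audience in product(angles, audiences)]
    # Drop duplicates while keeping the original order, then cap the list.
    unique = list(dict.fromkeys(queries))
    return unique[:limit]


queries = fan_out(
    "CRM software",
    audiences=["small med spas", "mobile-first teams"],
    angles=["most affordable", "most secure"],
)
for query in queries:
    print(query)
```

Two angles and two audiences yield four derivative queries. Reaching the roughly 100 variations described above would only require longer modifier lists, which is why the `limit` parameter caps the output.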

The Indexing Paradox: Why LLMs Rely on Google Page 1-3

Despite the hype surrounding "Google Killers," modern AI search tools are surprisingly dependent on traditional search giants. Search engine indexing for AI is an incredibly capital-intensive endeavor. For a startup like Perplexity, building a ground-up index of the entire internet would cost billions and take years. Instead, they lean on the heavy lifting already done by established indices such as Google and Bing.

Currently, the primary source material for AI-generated answers is what ranks on Pages 1 through 3 of Google and Bing. The AI uses these established indices as a discovery layer. It identifies the top results for its 100+ fanned-out queries and then proceeds to the next technical phase: the deep scrape. For marketers, this means that "old-school" SEO—ranking in the top 30 results—is actually the prerequisite for appearing in the AI search results. If you aren't visible to Google, you are invisible to the LLM.

The 'Context Window' and the 1,000-Page Scrape

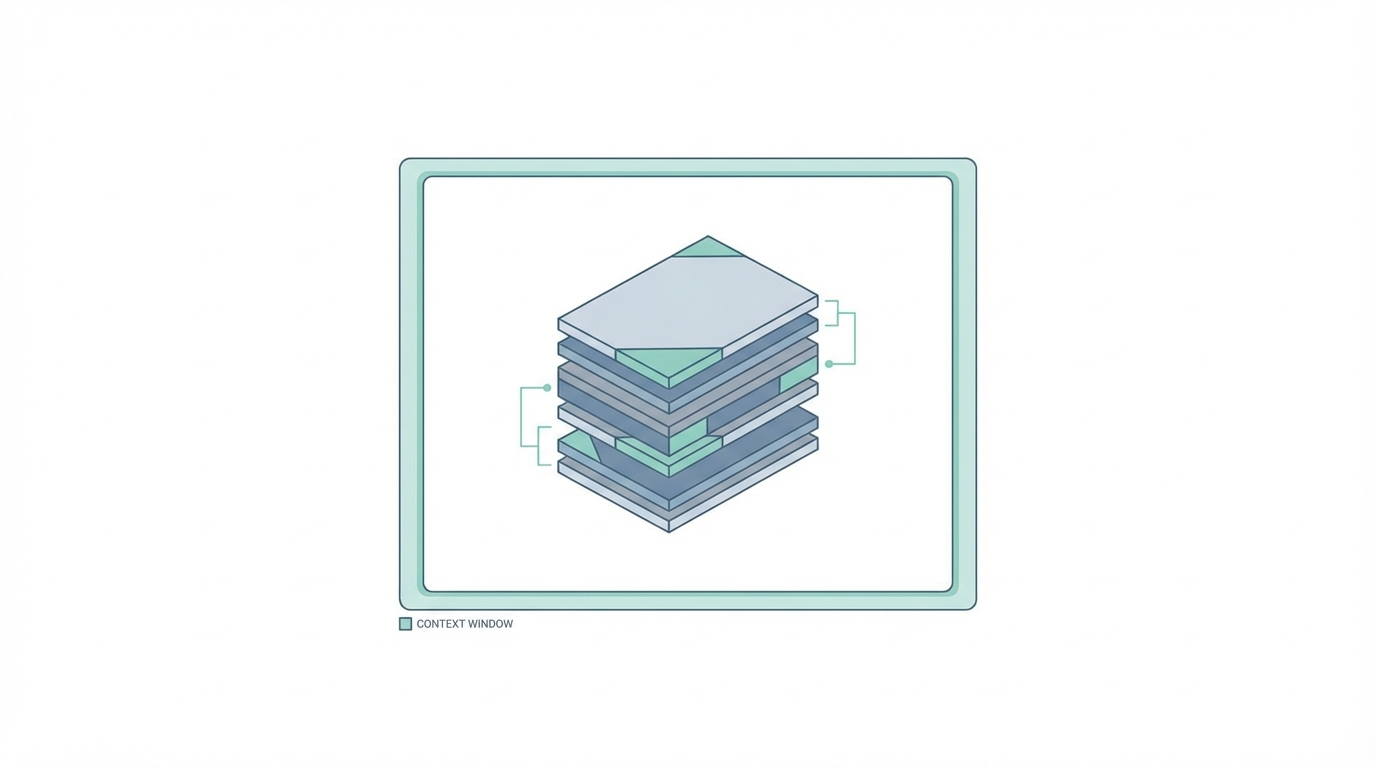

Once the AI has its list of URLs from the fanned-out queries, it begins a massive retrieval process. It doesn't just look at the metadata; it scrapes the full content of up to 1,000 individual pages. This massive volume of data is then funneled into the context window of the model. The context window is essentially the AI's short-term memory—the space where it holds all the information it has gathered to synthesize an answer.

This is why listicles and brand mentions are the most powerful currency in the world of Generative Engine Optimization (GEO). The AI loves content that is structured, authoritative, and frequently cited across those 1,000 scraped pages. If your brand appears on 40% of the pages the AI pulls into its context window, you are statistically more likely to be recommended as the primary solution. This is a numbers game where surface area equals authority.
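The "40% of the pages" idea can be measured with a simple share-of-voice calculation. The sketch below assumes you already have the text of the pages in question (how you collect them is up to you); the function name `share_of_voice`, the example URLs, and the brand names are all hypothetical. It counts a page once per brand no matter how often the brand appears, and it uses plain case-insensitive substring matching, which is a simplification.

```python
def share_of_voice(pages: dict[str, str], brands: list[str]) -> dict[str, float]:
    """Return, for each brand, the fraction of pages that mention it at least once."""
    total = len(pages)
    if total == 0:
        return {brand: 0.0 for brand in brands}
    shares = {}
    for brand in brands:
        # Case-insensitive substring match; a page counts once per brand.
        hits = sum(1 for text in pages.values() if brand.lower() in text.lower())
        shares[brand] = hits / total
    return shares


# Hypothetical page texts keyed by URL.
pages = {
    "https://example.com/best-crm": "Top picks: AcmeCRM, BetaCRM and GammaCRM.",
    "https://example.com/crm-review": "We tested BetaCRM for six months.",
    "https://example.com/crm-for-spas": "AcmeCRM is popular with med spas.",
    "https://example.com/crm-pricing": "BetaCRM pricing starts low.",
    "https://example.com/crm-security": "GammaCRM leads on security.",
}
shares = share_of_voice(pages, ["AcmeCRM", "BetaCRM", "GammaCRM"])
print(shares)
```

In this made-up sample, AcmeCRM appears on two of five pages, which is the 40% share mentioned above, while BetaCRM appears on three of five.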

The Power of Listicles in AI Retrieval

AI search engines have a technical bias toward "Top 10" or "Best of" lists because they are easy to parse and extract data from. When an LLM scrapes a thousand pages, it looks for consensus. If your product is consistently listed alongside other market leaders in high-ranking listicles, the AI treats that repetition as a signal of quality. Tracking your presence across these ranking pages, even in a tool as simple as Notion, is a basic requirement for modern growth teams.

AI Search Analytics: Detecting Synthetic Queries in Your Data

One of the most fascinating aspects of AI fanning is that it leaves a digital footprint in your analytics. If you look at Google Search Console, you may notice queries that no human would ever realistically type. They are often over 50 characters long, extremely descriptive, and hyper-specific. These "ghost queries" are AI-generated searches looking for source material.

A key indicator in your analytics is a high number of impressions for these long-tail queries but zero clicks. This happens because the AI is searching for the query, finding the page, and scraping the data directly through a bot rather than clicking a link in a browser. To check further, you can drop suspicious queries into an AI detection tool like AIornot.com. If the tool flags the query structure as machine-generated, that is a strong hint, though not proof, that an LLM is using your site as source material for its fanning process.
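The heuristic described above (long query, impressions, zero clicks) is easy to apply to exported query data. The following is a minimal sketch that assumes you have already loaded your Search Console rows into memory; the `QueryRow` type, the `find_ghost_queries` function, and the sample rows are hypothetical. The 50-character threshold comes from the rule of thumb above, and a match only marks a query as a candidate, since long zero-click queries can also come from people.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    query: str
    impressions: int
    clicks: int


def find_ghost_queries(rows: list[QueryRow], min_chars: int = 50, min_impressions: int = 1) -> list[QueryRow]:
    """Return rows that look like synthetic queries: long, seen, never clicked."""
    return [
        row
        for row in rows
        if len(row.query) > min_chars
        and row.impressions >= min_impressions
        and row.clicks == 0
    ]


# Made-up sample rows for illustration only.
rows = [
    QueryRow("crm software", impressions=900, clicks=0),
    QueryRow(
        "which crm software has the best mobile app performance for small med spas in 2025",
        impressions=14,
        clicks=0,
    ),
    QueryRow(
        "how do i export contacts from my crm into a spreadsheet without losing tags",
        impressions=22,
        clicks=3,
    ),
]
ghosts = find_ghost_queries(rows)
for row in ghosts:
    print(row.impressions, row.query)
```

Only the second row qualifies: the first is too short, and the third has clicks, which suggests a human visitor. Raising `min_impressions` is one way to filter out one-off noise.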

Winning the AI Search Game: How to Influence Results

To win in this new environment, you must shift from keyword targeting to surface area management. Since the AI builds its answers based on what it finds in the top 30 results of Google, your job is to occupy as much space as possible within those results. This is where modern influencer and UGC strategies become highly technical.

For instance, AI-native platforms like Stormy AI can help brands find and manage relationships with the very creators and niche publishers who dominate the listicles and review sites that LLMs scrape. By identifying which influencers already rank on Page 1 for your target queries and securing a brand mention or feature on their site, you are essentially pre-loading the AI's context window with your brand data. This isn't just about traffic; it's about controlling the source material the AI uses to form its opinions.

Steps to Optimize for AI Fanning:

- Identify Reference URLs: Use an AI SEO tracker to see which specific URLs are being cited by ChatGPT or Perplexity for your niche.

- Quantify Brand Mentions: Determine your current share of voice within the pages the AI is scraping.

- Execute Placement Campaigns: Reach out to the owners of those high-ranking URLs to get your brand added. This might involve affiliate deals or direct sponsorship through Shopify Collabs or similar platforms.

- Monitor via AI Search Analytics: Track the emergence of long-tail synthetic queries in your search console to measure the success of your outreach.

The Future of Indexing: Will AI Bypass Google?

The current reliance on search engine indexing for AI through Google and Bing is a temporary bridge. As companies like OpenAI continue to raise massive amounts of capital, the likelihood of them building their own independent web index increases. We are already seeing early signs of this with GPTBot, OpenAI's crawler, which gathers web content to train future models and could eventually feed a proprietary real-time index.

However, the cost of indexing the entire web is astronomical. For the foreseeable future, AI fanning will continue to use Google as its discovery engine. This creates a hybrid world where "New SEO" happens in days, while "Old SEO" still takes months. If you can get a brand mention on a high-authority site today, you could see yourself appearing in AI search results within 24 hours. The lag time of traditional search is being bypassed by the rapid synthesis of LLMs.
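If you want to see whether AI crawlers are already visiting your site, your server access logs are the place to look. The sketch below counts log lines whose user agent contains a known crawler token. It assumes you can read your access log as a list of text lines; the function name `count_ai_crawler_hits` and the sample log lines are made up for illustration, and the token list is a small, non-exhaustive selection. User agents can be spoofed, so treat the counts as indicative only.

```python
from collections import Counter

# User-agent tokens of a few AI crawlers (not a complete list).
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "PerplexityBot")


def count_ai_crawler_hits(log_lines: list[str]) -> Counter:
    """Count log lines per AI crawler token, matching at most one token per line."""
    counts: Counter = Counter()
    for line in log_lines:
        for token in AI_CRAWLER_TOKENS:
            if token in line:
                counts[token] += 1
                break
    return counts


# Made-up access log lines for illustration only.
sample_log = [
    '203.0.113.5 - - [10/Jan/2025:10:00:00 +0000] "GET /best-crm HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [10/Jan/2025:10:00:02 +0000] "GET /crm-pricing HTTP/1.1" 200 4096 "-" '
    '"Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [10/Jan/2025:10:01:00 +0000] "GET /best-crm HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '192.0.2.44 - - [10/Jan/2025:10:02:00 +0000] "GET / HTTP/1.1" 200 2048 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"',
]
counts = count_ai_crawler_hits(sample_log)
print(dict(counts))
```

Comparing these counts week over week, alongside the synthetic queries in your search console, gives a rough picture of how much of your traffic is machine retrieval rather than human browsing.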

Conclusion: Preparing for the Post-Search Era

The shift toward LLM search mechanisms represents a fundamental change in how information is retrieved. We are moving away from a "click-and-browse" model to a "synthesize-and-serve" model. For brands, this means the goal is no longer just to have the best website; it's to be the most frequently mentioned and highly vetted brand across the entire web's high-authority source material.

Success in the age of AI fanning requires a mix of traditional authority and modern technical agility. Whether you are using Meta Ads Manager to drive awareness or leveraging Stormy AI to discover creators who can secure those crucial Page 1 listicle placements, the objective remains the same: occupy the context window. The internet is being re-indexed by machines, and it’s time to make sure they like what they find.