The potential of conversational engineering was recently put to an unusual test: lowballing luxury watch dealers for a $35,000 Rolex Daytona. Using carefully engineered prompts, a custom-built voice agent engaged second-hand dealers, extracted pricing, and negotiated deals across the United States. The experiment, led by the team at Startup Empire, revealed a critical truth about the current state of automation: the difference between a robotic, hang-up-worthy interaction and a successful business transaction lies in the nuances of the prompt.

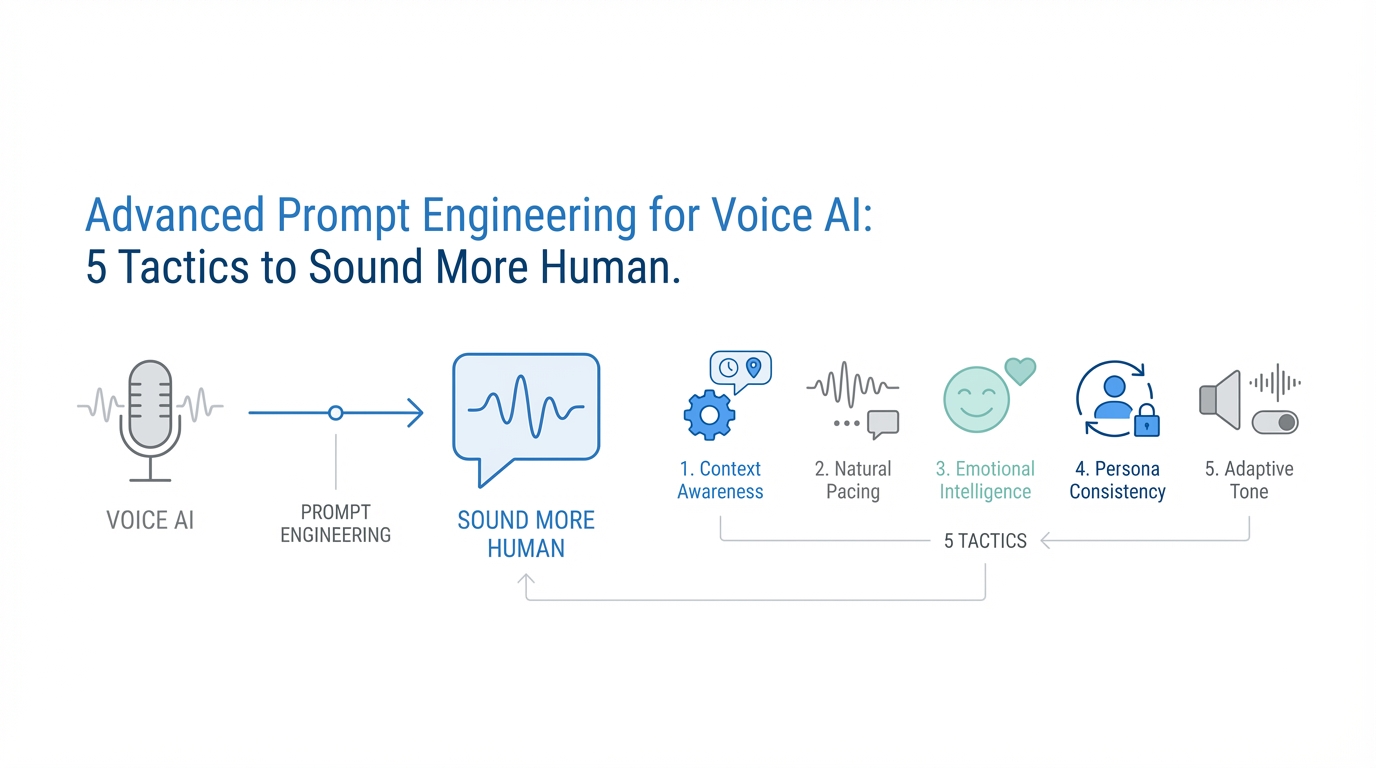

As voice AI platforms like Vapi become more accessible, the barrier to entry for automated phone systems has all but vanished. The barrier to effective communication, however, remains high. To build an AI that sounds human, developers must move beyond basic instructions and adopt tactics that mirror natural human psychology. Whether you are building an agent for sales, customer feedback, or market arbitrage, these five tactics will help turn your conversational AI from a rigid bot into a high-performing digital representative.

The 6th Grade Rule: Why Simplicity Wins the Call

One of the most useful findings in voice AI testing is what is often called the "6th Grade Rule." In high-stakes business writing, we are often taught to use sophisticated vocabulary to convey authority. In conversational AI, however, complexity is the enemy of engagement. Real human speech, especially over the phone, is surprisingly simple. When the team at Startup Empire adjusted their prompts to explicitly call for a sixth-grade English level, they observed significantly longer call durations and better prospect retention.

Humans don't use five-syllable words when they are checking the price of a watch or asking about real estate availability. They use short, punchy sentences and common idioms. By forcing your Large Language Model (LLM) to simplify its output, you reduce the cognitive load on the listener. This makes the interaction feel more familiar and less like a scripted robocall. For example, instead of asking, "Could you please clarify the status of the original manufacturer's documentation?" a human-sounding agent should simply ask, "Does it come with the original box and papers?"
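One way to keep an agent honest about this rule is to pair the prompt instruction with a rough readability check on its replies. The sketch below is illustrative only: the `STYLE_RULE` wording is a hypothetical prompt fragment, not the team's actual prompt, and `grade_level` is an approximate Flesch-Kincaid estimate built on a crude vowel-group syllable count.

```python
import re

# Hypothetical prompt fragment (an assumption, not the original team's wording).
STYLE_RULE = (
    "Speak at a sixth-grade English level. "
    "Use short sentences and everyday words."
)

def count_syllables(word: str) -> int:
    """Rough syllable estimate: count groups of consecutive vowels."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))

def grade_level(text: str) -> float:
    """Approximate Flesch-Kincaid grade level of a piece of text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59

formal = "Could you please clarify the status of the original manufacturer's documentation?"
plain = "Does it come with the original box and papers?"

# The plain phrasing should score several grade levels lower than the formal one.
print(round(grade_level(formal), 1), round(grade_level(plain), 1))
```

A check like this is only a heuristic, since the syllable counter miscounts some words, but it is enough to flag replies that drift far above the target level during testing.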

Managing Turn-Taking: The Art of the Concise Response

A common failure in conversational AI is "information dumping." When a standard LLM is asked a question, it often tries to provide a comprehensive, multi-paragraph answer. In a live phone call, this is a dead giveaway that the speaker is an AI. Natural human dialogue is a series of rapid back-and-forth exchanges, not long-form monologues.

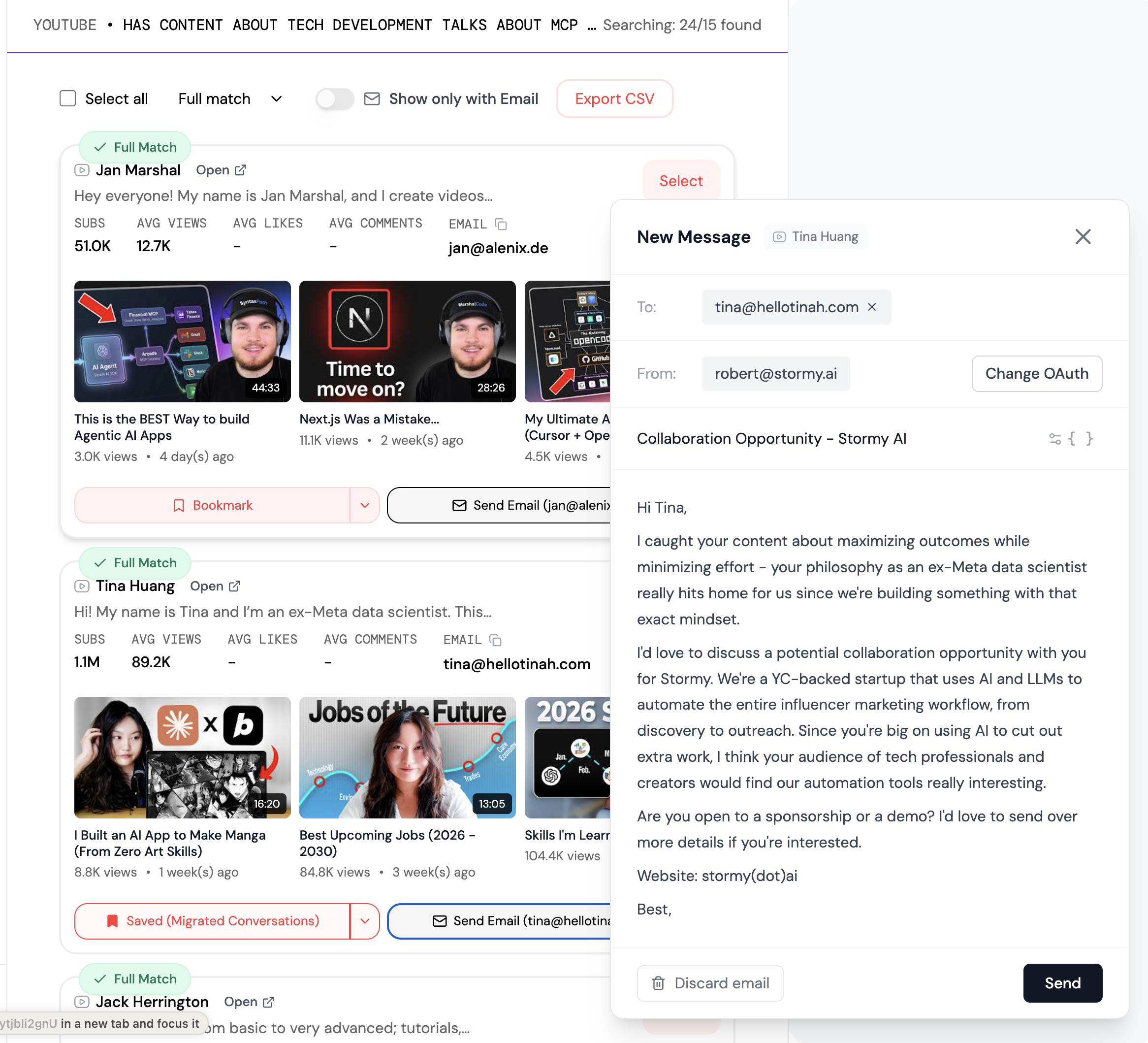

To solve this, your prompt must include strict constraints on response length. A highly effective instruction is to keep responses to one or two sentences at most. This forces the AI to focus on a single point or question per turn. If the agent needs to gather three pieces of information (such as condition, price, and location), it should never ask for all three at once; it should ask for one, wait for the answer, and then move to the next. This one-question-per-turn rhythm mimics the natural flow of human conversation and keeps the listener engaged. The same principle applies outside voice: for brands scaling their outreach efforts, platforms like Stormy AI streamline creator sourcing and outreach by using similar AI-driven personalization to keep conversations concise and effective.
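Prompt constraints are not always obeyed, so it can help to check each reply before it is spoken. The following is a minimal sketch of such a guard, assuming a naive punctuation-based sentence splitter; `LENGTH_RULE`, `split_sentences`, and `is_concise` are hypothetical names introduced here for illustration.

```python
import re

# Hypothetical prompt fragment expressing the length constraint.
LENGTH_RULE = "Keep every reply to 1 or 2 sentences. Ask only one question per turn."

MAX_SENTENCES = 2  # mirrors the "1 or 2 sentences max" instruction

def split_sentences(reply: str) -> list[str]:
    """Naive splitter: break after ., !, or ? when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", reply.strip())
    return [p for p in parts if p]

def is_concise(reply: str) -> bool:
    """True if the reply has at most two sentences and at most one question."""
    sentences = split_sentences(reply)
    questions = sum(1 for s in sentences if s.endswith("?"))
    return len(sentences) <= MAX_SENTENCES and questions <= 1

good = "Got it. Does it come with the original box and papers?"
bad = "What condition is it in? What's the price? Where are you located?"

print(is_concise(good), is_concise(bad))
```

A reply that fails the check could be regenerated or trimmed to its first question. The splitter will mis-handle abbreviations such as "U.S.", so treat it as a starting point rather than a robust parser.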

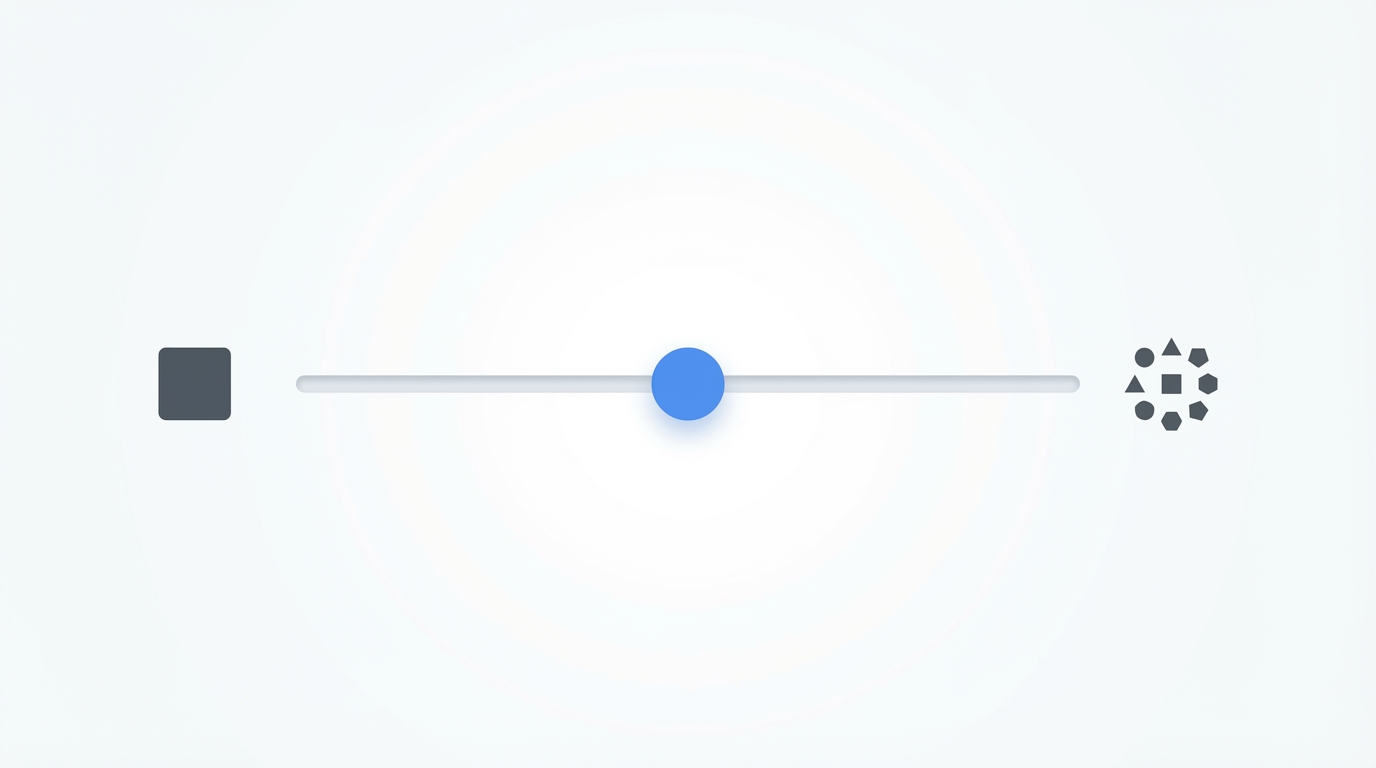

Optimizing Temperature Settings: Balancing Logic and Liveliness

An LLM's temperature setting controls how much randomness goes into its word choices. Finding the right balance is crucial for maintaining a realistic persona while ensuring the agent doesn't hallucinate or lose focus on the goal.
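To make the trade-off concrete, here is a small sketch of how temperature typically reshapes a model's next-token probabilities: the raw scores (logits) are divided by the temperature before a softmax is applied. The logits and temperature values below are made up for illustration and are not recommended settings.

```python
import math

def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities after scaling them by 1 / temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Illustrative scores for three candidate next words (assumed values).
logits = [2.0, 1.0, 0.5]

focused = softmax_with_temperature(logits, 0.2)  # low temperature
lively = softmax_with_temperature(logits, 1.5)   # high temperature

# Low temperature concentrates probability on the top choice;
# high temperature spreads it across the alternatives.
print([round(p, 3) for p in focused])
print([round(p, 3) for p in lively])
```

At a low temperature the agent almost always picks the most likely word, which keeps it on script but can sound flat; at a high temperature the alternatives get real probability mass, which adds variety at the cost of predictability.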